At NAB this year, The Pixel Farm created a buzz with an early showing of a brand new application, PFDepth. The product was demo’d, but then due to an overwhelming response, the company decided not to do any further publicity until it was ready. The team appeared on fxguide live (our NAB coverage) and allowed us to write a brief story, but so strong was the demand for the product that one sensed Pixel Farm simply knew they were onto a winner and shut down all distractions to get the product fully working.

If The Pixel Farm can find traction with PFDepth it will open up a major new revenue market for them building on their current pillars of image restoration and 3D camera and object tracking. Bucking the trend of putting out early buggy public betas, Pixel Farm opted to wait for a full release of version 1.0 – no alpha, no beta – although there has been a lot of private industry consultation. We spoke to Daryl Shail, Pixel Farm’s VFX Product Manager. “The difference between now and NAB is that NAB was a technology showing, and it has evolved quite a lot since then,” he says.

So how did they do? fxguide got an exclusive early look at the product which today is not only being shown again at IBC but is also officially shipping.

What is it?

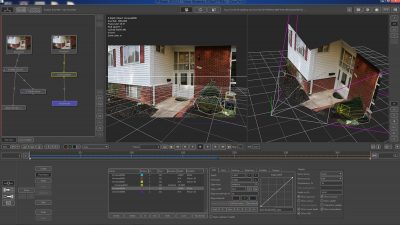

PFDepth is a tool for creating stereo images from mono footage by creating depth maps. However, as we will point out below, it also has a variety of uses in VFX, even for mono productions. But focusing on stereo conversion, PFDepth is designed to help with a variety of tasks required in possibly multiple different styles of stereo conversion pipelines. The product aims to be the tool that helps you but not the application that defines your approach. In stereo there are several points that can be quite different between two workflows and both are valid. For example, are the cameras parallel or converged? PFDepth aims to be a tool for both.

What makes it different from what is being done today? Broadly speaking, Ocula from The Foundry was born from the world of shooting stereo and needing tools to align, match and repair stereo captured footage. PFDepth is born of a world of stereo conversion. Clearly with the number of films being converted, pipelines do exist, but most of these are proprietary and, while they all use similar tools such as roto, most are not based on a standalone, integrated app such as PFDepth. Most non-proprietary pipelines are based on a combination of products, especially apps like Mocha and Nuke.

PFDepth is also different from these existing solutions because it works in real world co-ordinates. “Up until now,” says Shail, “it has really been down to the individual artist’s perception or notion of what depth is and assigning an arbitrary grey scale value to that. Sure the darker it is, the further back it is in z-space, and the brighter it is, the closer forward it is. But there is no actual relationship between where that depth plane lives in z-space versus where the camera actually is. So you can’t auto-animate things like convergence based on the focal plane at any given time, and that is where the real power of PFDepth is. We are deriving all our depth based on real world camera models. So if I say an object is 15 feet from the camera, it IS 15 feet from the camera.”

This is, of course, as opposed to someone guessing a value. A depth map from PFDepth therefore corresponds much more closely to the real world scene that was filmed and is being converted.
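To see why real-world distances matter for something like convergence, here is a minimal sketch of the textbook relationship between depth and horizontal disparity for a parallel camera pair. It is not PFDepth's implementation: the function name, the parameters and the choice of a parallel rig with a horizontal image shift are all assumptions made for illustration.

```python
def screen_disparity_px(depth, convergence, interaxial,
                        focal_mm, sensor_width_mm, image_width_px):
    """Horizontal disparity in pixels for a point at a known distance.

    Assumption: a parallel stereo pair whose images are shifted so that
    points at `convergence` land at zero disparity. `depth`, `convergence`
    and `interaxial` must share one unit (feet, metres, ...).
    Positive values sit behind the screen plane, negative values in front.
    """
    if depth <= 0 or convergence <= 0:
        raise ValueError("depth and convergence must be positive distances")
    # Focal length expressed in pixels instead of millimetres.
    focal_px = focal_mm / sensor_width_mm * image_width_px
    # Standard pinhole result: disparity scales with (1/C - 1/Z).
    return focal_px * interaxial * (1.0 / convergence - 1.0 / depth)


# An object at the convergence distance has no disparity; objects further
# away get positive disparity, closer objects negative.
at_screen = screen_disparity_px(15.0, 15.0, 0.2, 35.0, 36.0, 2048)
behind = screen_disparity_px(60.0, 15.0, 0.2, 35.0, 36.0, 2048)
in_front = screen_disparity_px(5.0, 15.0, 0.2, 35.0, 36.0, 2048)
```

With an arbitrary grey value there is no `depth` to plug into a formula like this, which is the gap Shail describes.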

What isn’t it?

PFDepth does not do stereo correction of material shot live on a stereo rig.

It does not have image modeling or allow you to bring in survey LIDAR. This can all be done in PFTrack, but it is not duplicated inside PFDepth. LIDAR in PFTrack is very new and while it is not on the PFDepth release schedule Shail discussed with fxguide that there was potential to explore this in the future. “It is conceivable it could, and I can’t think of a reason why I wouldn’t want it in PFDepth,” he notes, speculating about possible extensions. But it is unlikely that PFDepth would support RAW LIDAR directly. A more likely path would be to import a z-space plane derived from the LIDAR, perhaps from PFTrack either as a cloud or geometry.

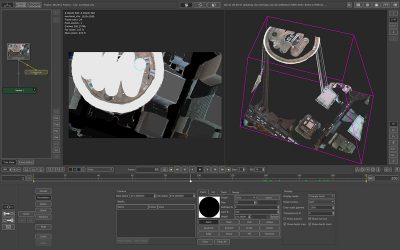

PFDepth does in fact import geometry. When geo comes in as part of an FBX file, depth data is automatically assigned based on the near/far plane and that object’s distance from the camera. This geo can either be static, like set geometry, or deforming, as in a geometry-based facial or object track.
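As a rough illustration of turning a known camera distance into a grey value between a near and a far plane, the sketch below uses a simple linear ramp (brighter is closer, darker is further, as Shail describes). The linear encoding and the function name are assumptions; the article does not say how PFDepth actually encodes depth.

```python
def depth_to_grey(distance, near, far):
    """Map a camera distance to a grey value in [0.0, 1.0].

    Assumption: a linear ramp where the near plane is white (1.0) and
    the far plane is black (0.0). Distances outside the planes are clamped.
    """
    if not near < far:
        raise ValueError("near plane must be closer than far plane")
    t = (far - distance) / (far - near)
    return min(1.0, max(0.0, t))


# An object halfway between a 5 ft near plane and a 25 ft far plane.
mid_grey = depth_to_grey(15.0, near=5.0, far=25.0)
```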

It also does not handle edits or long multi-cut sequences – it is not designed to conform a stereo project.

How does it work with PFTrack?

The geometry tracking, image modelling and z-depth tools found in PFTrack can also contribute to help refine the immense data sets created in PFDepth. One does not need PFTrack to use PFDepth, but it would help. However, there is no concept of a reverse workflow – there is no sense of PFDepth aiding or exporting to PFTrack.

It should be noted that PFClean also has a role to play. If a stereo conversion is being done of an older library title, then PFDepth will benefit from PFClean. “Throw PFClean into the mix, and you have a complete end-to-end solution for bringing archive films through restoration, remastering and stereoscopic conversion for redistribution,” comments Shail.

One of the greatest advantages of PFDepth stems from working in real-world space – you can assign depth values based on world space and offsetting with pre-defined units of measure, and using 3D camera positions (motion or static) to dynamically adjust the perceived dimensionality of the scene.

PFDepth supports FBX import, since while it works well with PFTrack, one doesn’t have to use PFTrack (which would not natively pass FBX) and PFDepth tries to support a wide variety of Pixel Farm and other vendor products. The data could come directly from Maya, for example.

Roto tools

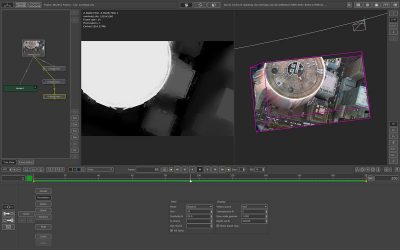

Probably what one could consider the core of the app is the rotoscope module (Z-Depth Object), which lets you define the depth planes and mix in geometry coming from PFTrack.

Shail explains that from The Pixel Farm’s point of view, “the roto tools are very cool indeed, but we consider them a necessary evil to be honest. You can’t avoid roto in conversion work, but the real coolness in PFDepth comes from the way we are working with geometry, depth maps, and depth modifiers (filters that can quickly deform a 2D image plane to add shape and surface texture).”

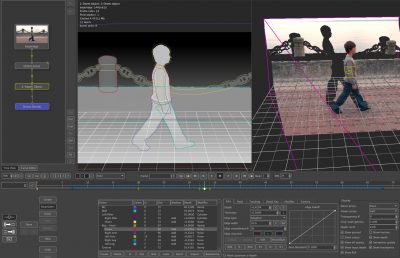

The tracking issues needed for PFDepth are not exactly the same as the requirements of tracking for camera tracking. In a room with an actor giving a performance a camera tracker tries to ignore the actor and track the static room, while a stereo conversion is very much focused on the actor – much like an object track. But more than that, it is not enough just to isolate the depth of the actor, one needs to have separate depth map levels for their nose, compared to the eye, compared to their ears etc. And thus with most stereo conversion pipelines a central issue is roto. To help with this problem PFDepth provides a hierarchical roto tool.

The different layers of the actors would be a linked file of rotos – linked to the z-depth of the ‘body’ of the actor and then the arms, or hands etc are offsets from that base z-space position. So the fingers, hands, arms etc are all derived from the ‘centre’ of the actor which would normally be established as the actor’s head.

Often there can be a bunch of items whose relationship to the parent is relatively static, so by working this way, one adjustment to the parent ‘centre’ node adjusts the depth on everything – with the appropriate offsets.
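The parent and offset idea can be sketched as a small tree in which each roto layer stores only its offset from its parent. The class and method names here are hypothetical and are not taken from PFDepth; the sketch only shows why a single change to the ‘centre’ node carries through to every child.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class DepthLayer:
    """One roto layer in a hypothetical depth hierarchy."""
    name: str
    # For the root this is the distance from camera; for children it is
    # the offset relative to the parent (negative means closer to camera).
    offset: float
    parent: Optional["DepthLayer"] = field(default=None, repr=False)
    children: List["DepthLayer"] = field(default_factory=list)

    def add_child(self, name: str, offset: float) -> "DepthLayer":
        child = DepthLayer(name, offset, parent=self)
        self.children.append(child)
        return child

    def world_depth(self) -> float:
        """Distance from camera, accumulated up the parent chain."""
        if self.parent is None:
            return self.offset
        return self.parent.world_depth() + self.offset


# The actor's head is the 'centre'; nose and ear are offsets from it.
head = DepthLayer("head", 15.0)
nose = head.add_child("nose", -0.3)
ear = head.add_child("ear", 0.2)

# Moving the centre node moves every child with its offset intact.
head.offset = 12.0
```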

The team at Pixel Farm have done a lot of work on the z-depth object tools (roto tools) which means that while one can do traditional splines, you can also do other things, such as paint a rough inner and outer mask – and then instead of perhaps just doing a gradation of softness in the depth map, PFDepth will do edge isolation and auto-separation. “It’s about as close to ‘auto-roto’ as you can get,” says Shail. “It allows you to draw a round boundary and allow PFDepth to discern what is part of that foreground object versus part of the background.” This is exceptionally useful on hard to roto areas like hair and fine lines.

The product also has a planar tracker built in. This can aid in managing deformations of a basic roto shape due to perspective or movement changes of the camera, allowing for keyframing rather than per-frame adjustment. The planar tracker can also be used directly in map generation (excluding roto applications).

So impressive were the roto tools that at NAB people asked the company about either exporting the rotos or using these tools just for general roto. Right now the company feels that other companies already offer specialist tools for this, so it is not supporting either use. “Is it possible that at some point in the future we’ll let you render those out? Yeah maybe,” says Shail, “but for now we have worked with the Imagineer guys and the Sfx guys and support their roto shapes and import their roto shapes. So we are not duplicating the workflow that could be happening somewhere else in a large facility – we’ll actually utilize that.”

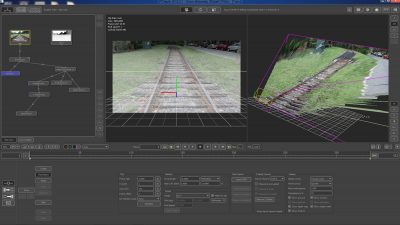

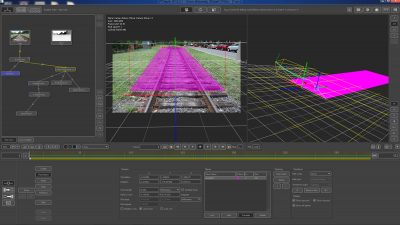

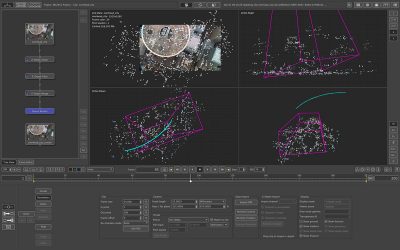

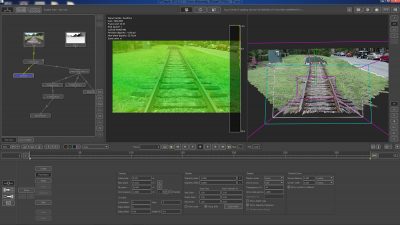

The train track footage, Victor Wolansky, visual effects supervisor.

Example: city skyline

Here is an example using the z-depth tracker and z-depth edit node.

An example of the workflow would be to track a shot in PFTrack. From this we know the movement of the camera relative to say the buildings the camera was flying over in a helicopter. This camera path can be imported into PFDepth. The next stage is to make a z-depth map and establish the baseline for the shot.

The next stage is to isolate the objects. Here one can use the ‘roto’ tools.

The stroke here identifies co-planar points and defines the distance to that co-planar roof top.

(Say this building is 200 feet from camera, etc)

Then we have a sensible depth map for a frame.

This uses the fill tab – the fill tab allows the user to use photogrammetry and known distances (from above) to fill in the depth map but only on a single frame.

The z-depth edit node lets you create a depth map in several different ways.

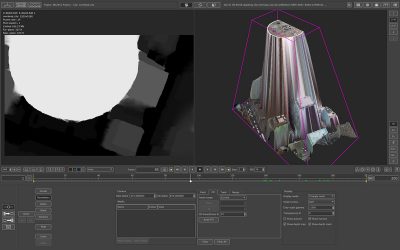

Now we can make a classic grey scale depth map.

This depth map can then be tracked into the rest of the image sequence, all the time improving the result, and building on the original depth map.

The image on the left is a solved stereo solution, where the initial z-depth combined with the camera track have built together with the z-depth tracker and z-depth filter to produce a final shot.

Additional features

There is also geometry camera and geometry object tracking technology from PFTrack, but the tracking is purpose-based to depth solutions, as frankly depth tracking is not as demanding as what is needed in camera or object tracking from PFTrack for say 3D CGI integration. So while PFDepth has a lot of PFTrack’s geo cam/object tracking it does not export camera tracks separately (and thus replace PFTrack or PFMatchit).

There is also a new project management setup, and the product has lookup tables for color management. As this product comes from the same code base as PFClean, it gains the LUT experience from the PFClean product, which is a mature release and used daily in production.

PFDepth can take a shot through to final render of left and right eyes – what the convergence is, the interaxial, plus it covers the in-painting of the missing information using a variety of approaches including temporal in-filling: comparative edge dilation on a per depth plane basis, edge softening, threshold percentages, etc. The point of PFDepth is that you can finish a shot in PFDepth and not need to go to say NUKE to finish the shot.

The tools extend to eye strain and edge violation. Based on the viewing environment, the software will flag when the stereo you have created has violated your own guidelines of ‘acceptable’ / comfortable stereo. This is done with a simple ‘traffic light’ color coded system.
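A ‘traffic light’ check of this kind can be sketched as a comparison of on-screen parallax against user-set limits. The function name, the use of parallax as a percentage of image width and the default thresholds below are all assumptions for illustration; the article does not describe the metric or the limits PFDepth uses.

```python
def comfort_flag(disparity_px, image_width_px, warn_pct=1.0, limit_pct=2.0):
    """Classify a disparity against hypothetical comfort guidelines.

    Parallax is measured as a percentage of image width. Both thresholds
    are placeholders that a user would set for their viewing environment.
    """
    if image_width_px <= 0:
        raise ValueError("image width must be positive")
    pct = abs(disparity_px) / image_width_px * 100.0
    if pct <= warn_pct:
        return "green"
    if pct <= limit_pct:
        return "amber"
    return "red"


# 10, 30 and 60 pixels of disparity on a 2000 pixel wide frame.
flags = [comfort_flag(d, 2000) for d in (10, 30, 60)]
```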

One final point is that while PFDepth is clearly aimed at stereo conversion, it is not limited to just this. For example, a valid and reliable depth map could be an invaluable tool for color grading and there has been interest from several grading companies.

It is also a very useful map for a general VFX pipeline, CG character placement and relighting. The exported OpenEXR could drive a Nuke comp and, similarly, PFDepth could import a CG generated depth map and use it in stereo conversion.

PFDepth supports exporting the depth maps it creates to an effects pipeline, and could easily see adoption in facilities that do no stereo conversion work at all.

The product, which is 64-bit running natively on OSX, Windows and Linux, is on sale as of Friday, September 7th for £2000 / $3300 / €2500. PFDepth 2012.4 is the official first version 1.0 release – to bring it into line with the standard quarterly naming convention of The Pixel Farm products.

The Pixel Farm will be demonstrating PFDepth and its other products this weekend at IBC 2012 (September 7th-12th) at RAI Amsterdam, stand 6.C18.

The company has also lowered prices on its tracking products. The latest release of PFTrack 2012, which supports full resolution raw LIDAR scans, is now available for £1000 / $1600 / €1200. PFMatchit, which will replace PFHoe as the entry point to The Pixel Farm’s VFX matchmoving product line-up, is now available for £300 / $480 / €360.

I’m not sure why they don’t want people using this product for roto? It’s bread and butter work that every facility has to deal with, anything that makes it faster is going to attract attention and at least some purchases.

It’s not about what something is designed to do, it’s about what it can do. The user decides, not the manufacturer.

This looks like a great product and I could think of a lot of other uses for it, hopefully it will not become a walled garden in what it can export.
