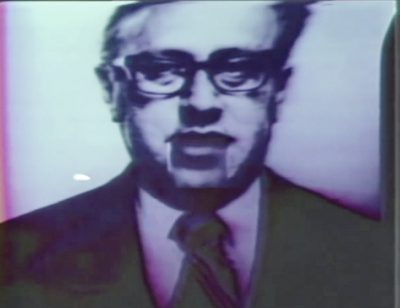

The history of facial animation, or more exactly of tracking faces to feed an animation pipeline, may well have started in the late 1970s, when Tom DeWit used a marker on a chin to track a ‘Monty Python’ style jaw movement onto a cut-up photo of Henry Kissinger. While the jaw only went up and down, the performer’s own mouth drove the avatar using DeWit’s Pantomation, and one could argue, as Nvidia’s Lance Williams did in discussing the history of face tracking, that this was the beginning of facial animation tracking. Williams himself has contributed greatly to facial tracking and realistic animation, having been central to the 2000 Disney Human Face Project as well as many more recent projects such as Nvidia’s version of USC-ICT Digital IRA.

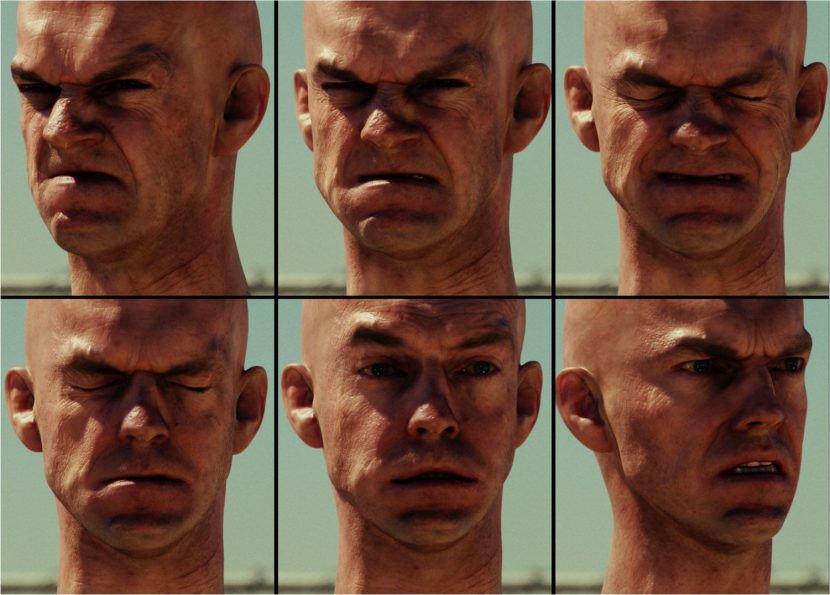

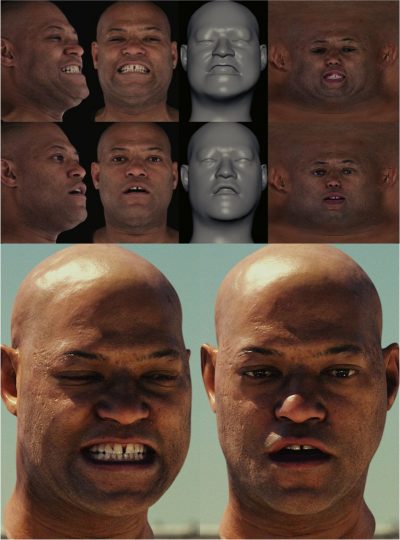

This week the Academy honors two other groups: the team behind the Universal Capture system (UCAP) and the team behind the more recent MOVA system. The Universal Capture system broke new ground in the creation of realistic human facial animation. MOVA produces an animated, high-resolution, textured mesh driven by an actor’s performance. This technology is fundamental to the facial pipeline at many visual effects companies, and it allows artists to create character animation of extremely high quality. These two teams have contributed enormously to the advancement of a craft that is still very much the holy grail of computer graphics – a believable human face pipeline. UCAP is best known for its work on the Matrix sequels. MOVA is very much still in development and has been used on films such as The Curious Case of Benjamin Button, the Harry Potter films (the multiple Harry sequence), The Avengers (for the Hulk), Gravity and, more recently, Guardians of the Galaxy.

Earlier Work

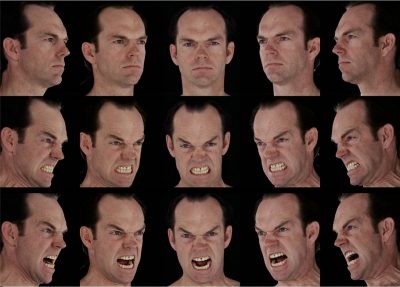

The original Disney Human Face Project was loosely connected to these. Although it preceded them, it was not made public until after the UCAP system was implemented, but like UCAP it used optical flow. While the Human Face Project was never converted into a film and thus was not eligible for the Sci-tech honors, it represented the state of the art at the turn of the millennium. Williams credits much of the project’s technical success to the very talented Hiroki Itokazu (facial shapes modeling and rigging), who developed his technique, known as Hirokimation, not at Disney but earlier at Warner Bros. Itokazu is a true facial modeling expert who is now back at Disney with credits such as Big Hero 6, Feast and Frozen. The Disney project aimed to make a younger version of VFX professional (and then Disney VP) Price Petheral. In the test film Price meets himself. The project was run by Oscar winner Hoyt Yeatman (The Abyss, Mighty Joe Young), himself a Sci-tech winner for solving the red fringing on traveling mattes. The Human Face Project was Disney’s best effort to throw all the best technology they had at the time at the problem of a face pipeline. It used cyberscanning, face casts, optical flow registration, early light stage data, separate diffuse and specular passes and, thanks to Itokazu, one of the best rigged blend shape solutions done up to that point.

It is interesting to compare this year’s winners to the state of the art at the time. Disney had no sub-surface scattering (SSS), so while the animation was remarkable, the images were not convincing enough for the project to roll into a feature film. SSS is vital to human skin, yet it was only just appearing at the start of the new millennium. The optical flow used in the project was also advanced, but it was only used for alignment of passes. The pipeline ended up being (in simplified terms):

- model – mask and cyberscans

- film expressions – blend shapes

- match to performance using an optimizer (using the source and target’s dot product not Oflow)

- retarget

- render
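
The "match to performance" step in the list above can be illustrated with a small solver. This is only a sketch under assumptions: the article says the optimizer used the source and target's dot product, and one plausible reading is a least-squares fit of blend shape weights, whose normal equations are built entirely from dot products. The function names, the four-value "face" and the two shapes are hypothetical and are not taken from the Disney pipeline.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def solve_linear(matrix, rhs):
    """Solve matrix * x = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(rhs)
    rows = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        rows[col], rows[pivot] = rows[pivot], rows[col]
        for r in range(n):
            if r != col:
                factor = rows[r][col] / rows[col][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[col])]
    return [rows[i][n] / rows[i][i] for i in range(n)]

def fit_blend_weights(neutral, shapes, observed):
    """Least-squares weights so that neutral + sum(w_i * delta_i) matches observed."""
    deltas = [[s - n for s, n in zip(shape, neutral)] for shape in shapes]
    target = [o - n for o, n in zip(observed, neutral)]
    # Normal equations: every entry is a dot product between shape offsets.
    gram = [[dot(di, dj) for dj in deltas] for di in deltas]
    projections = [dot(di, target) for di in deltas]
    return solve_linear(gram, projections)

# Hypothetical data: a four-value "face" and two blend shapes.
neutral = [0.0, 0.0, 0.0, 0.0]
jaw_open = [0.0, -1.0, 0.0, -1.0]
smile = [1.0, 0.0, -1.0, 0.5]
observed = [0.25 * j + 0.5 * s for j, s in zip(jaw_open, smile)]

weights = fit_blend_weights(neutral, [jaw_open, smile], observed)
```

Here `weights` recovers the mix of roughly 0.25 jaw open and 0.5 smile that was used to build `observed`. Those weights are what a retargeting step would then apply to a different character's shapes.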

Interestingly, stereo was in its early days too. While the performance of Price Petheral was filmed with two cameras, they were not the same make of camera, and the second camera was neither sync’d nor matched, so it was not used. Dan Ruderman at Disney would later write code to convert and align them, but the project remained primarily mono.

UCAP

By contrast, the UCAP system very much addressed SSS and really pushed optical flow technology. It was optical flow that led to the development of UCAP: the team of Dan Piponi, Kim Libreri and George Borshukov had been working on other projects and had developed O-flow technology for such Oscar winning films as What Dreams May Come. The team had applied O-flow data to a model and were surprised at how well it worked.

After the original Matrix used O-flow, and the work of Bill Collis, so well to smooth the bullet time, the team was very comfortable with the technology. But it was John Gaeta who effectively came up with the term Universal Capture, or UCAP, as a means to acquire both form and texture over time, and to apply that to humans and performances as well as environments. Although understandably concerned about occlusion issues, the team did think that a facial camera capture rig would be possible. Thus UCAP was born as the first step towards the much vaster and more complex universal scene capturing tool Gaeta wanted.

JP Lewis, now at Weta, joined the team after working on the Human Face Project, but without bringing over any actual technology. The Disney work was confidential, and Piponi had already, completely separately, developed the advanced UCAP O-flow system. While Lewis did contribute to O-flow, it was his work with Borshukov in inventing texture space diffusion that really had enormous significance. The notion of doing skin diffusion functions in what is effectively UV or flat space was so significant that it is still used today in most game engines in one form or another.
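
As a rough illustration of the idea of diffusing skin lighting in UV space, the sketch below blurs a tiny "unwrapped" lighting texture with a separate Gaussian width per colour channel. It is a simplified sketch under assumptions, not the production UCAP shader: the texture size, the sigma values and the function names are all hypothetical, and the only physical fact leaned on is that red light scatters further through skin than blue.

```python
import math

def gaussian_kernel(sigma, radius):
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur_rows(image, kernel):
    """Blur each row of a 2D list, clamping samples at the texture edge."""
    radius = len(kernel) // 2
    width = len(image[0])
    blurred = []
    for row in image:
        new_row = []
        for x in range(width):
            acc = 0.0
            for k, weight in enumerate(kernel):
                sample_x = min(max(x + k - radius, 0), width - 1)
                acc += weight * row[sample_x]
            new_row.append(acc)
        blurred.append(new_row)
    return blurred

def transpose(image):
    return [list(column) for column in zip(*image)]

def diffuse_in_uv(irradiance, sigma):
    """Separable Gaussian blur of a lighting texture laid out in UV space."""
    kernel = gaussian_kernel(sigma, radius=max(1, int(3 * sigma)))
    horizontal = blur_rows(irradiance, kernel)
    return transpose(blur_rows(transpose(horizontal), kernel))

# Hypothetical 9x9 lighting texture with a hard shadow edge: lit where x >= 5.
width = height = 9
irradiance = [[1.0 if x >= 5 else 0.0 for x in range(width)] for _ in range(height)]

red = diffuse_in_uv(irradiance, sigma=2.0)   # wide blur: red bleeds far into shadow
blue = diffuse_in_uv(irradiance, sigma=0.5)  # narrow blur: blue stays close to the edge
```

At the first shadowed texel next to the edge (`x = 4`), the red channel ends up noticeably brighter than the blue channel, which gives the soft reddish falloff associated with skin. Because the blur runs over a flat texture rather than the 3D surface, it maps naturally onto image-processing passes.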

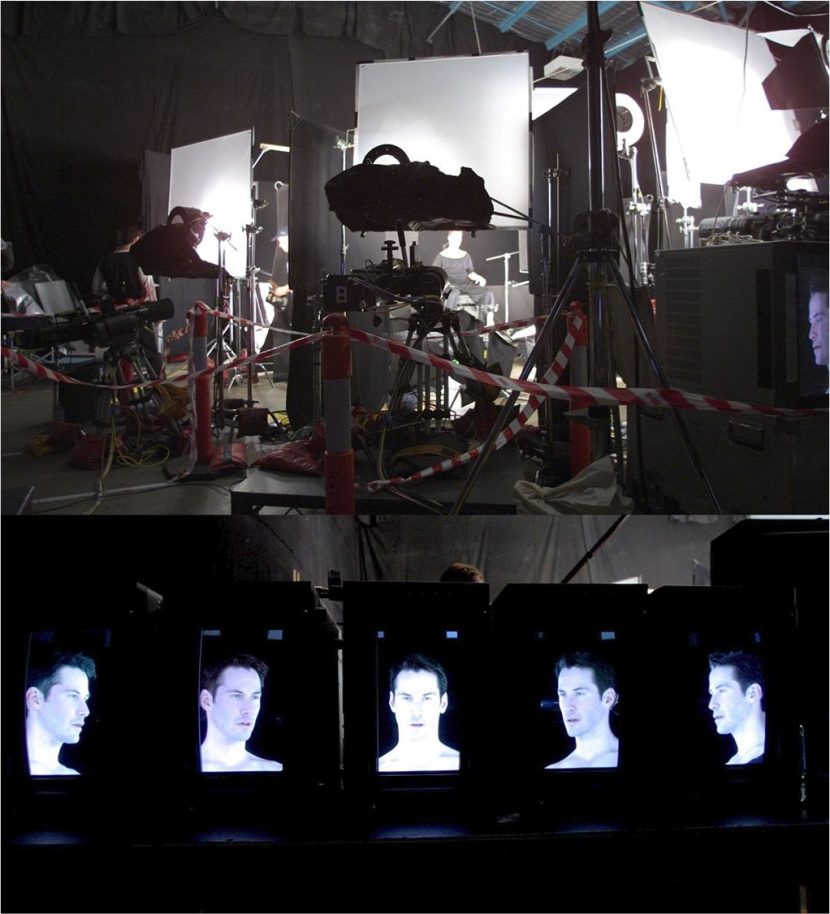

UCAP’s solution was very different from the 3D model approach of Disney. Their innovation was to use the performance cameras as more than reference: by turning the HD cameras on their sides, they would film, convert and seamlessly stitch a high res diffuse face pass of the actor. With advanced optical flow they would modify the base face model and then effectively project the stitched face back on top. This produced remarkable results, especially when improved with texture space diffusion SSS.

But to sell the face it still needed to be added to the scene, so the projected flat lit face was also rendered with the spec correctly produced by matching the location lighting. In effect, Mr Anderson and Agent Smith were the actors’ stitched diffuse performance with location spec + SSS and some additional texture maps to provide the high detail skin texture. The results were stunning. The problem really came in the distant shots, which today most agree are not as strong as the close up face shots. Ironically this is not to do with the face pipeline, but rather with mipmap texture issues identified even around that time by Oliver James. The coarse mipmap levels and the averaging of normals are quite different from what is needed, and this is only being addressed today with innovations such as LEAN mapping and other spec solutions.
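
A small numeric example shows why averaged normals behave so differently from the detail they replace. This is an illustrative sketch only: the four normals, the Blinn-Phong style highlight term and the shininess value are assumptions chosen for the demonstration, not values from the Matrix pipeline.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def average(vectors):
    count = len(vectors)
    return tuple(sum(v[i] for v in vectors) / count for i in range(3))

def specular(normal, half_vector, shininess=50):
    """Blinn-Phong style highlight term."""
    n_dot_h = max(0.0, sum(a * b for a, b in zip(normal, half_vector)))
    return n_dot_h ** shininess

# Four fine-level normals tilted in different directions (bumpy skin).
fine_normals = [normalize(v) for v in
                [(0.6, 0.0, 0.8), (-0.6, 0.0, 0.8), (0.0, 0.6, 0.8), (0.0, -0.6, 0.8)]]
half_vector = (0.0, 0.0, 1.0)

# What a coarse mip level effectively holds: the plain average of the normals,
# which a shader would typically renormalise before lighting.
averaged = average(fine_normals)
averaged_length = math.sqrt(sum(c * c for c in averaged))
coarse_normal = normalize(averaged)

spec_from_coarse_normal = specular(coarse_normal, half_vector)
spec_averaged_over_texels = sum(specular(n, half_vector) for n in fine_normals) / 4
```

The averaged normal points straight up with a length of about 0.8, so once it is renormalised the surface looks perfectly flat and returns a full-strength highlight. The four original texels each return almost no highlight for the same view. The distant face therefore shades like a smoother, shinier material than the close up one.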

The UCAP system then in simplified terms was:

- film with 5 HD cameras the ‘performance’

- stitch and O-flow the material

- modify and ‘warp’ the model based on the O-flow

- project the stitched texture back on

- add SSS

- add high frequency texture

- light with target scene lighting for the correct SPEC and shadows (and comp in)
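
The article does not say which optical flow formulation UCAP used, so the following is only a generic illustration of the "O-flow, then warp" idea: a one-dimensional, Lucas-Kanade style estimate of how far a brightness pattern has moved between two frames. The signal, the shift value and the function name are hypothetical.

```python
import math

def estimate_shift(before, after):
    """Lucas-Kanade style estimate of one shared sub-sample shift.

    Assumes brightness constancy, after[x] ~= before[x - d], and a small d,
    so that after - before ~= -d * gradient(before).
    """
    numerator = 0.0
    denominator = 0.0
    for x in range(1, len(before) - 1):
        gradient = (before[x + 1] - before[x - 1]) / 2.0  # spatial change
        change = after[x] - before[x]                      # change between frames
        numerator += gradient * change
        denominator += gradient * gradient
    return -numerator / denominator

# Hypothetical scanline of skin brightness that moves 0.3 samples between frames.
true_shift = 0.3
before = [math.sin(2 * math.pi * x / 32) for x in range(64)]
after = [math.sin(2 * math.pi * (x - true_shift) / 32) for x in range(64)]

estimated = estimate_shift(before, after)
```

`estimated` comes out close to the true shift of 0.3 samples. In a face pipeline, the same kind of per-region motion estimate, computed in 2D across several cameras, is what would be used to move model vertices so that the stitched texture can be projected back on.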

The UCAP team are still very close, although the team broke up. Dan now works at Google on projects such as the incredible Loon Project. George is working at an advanced startup solving rapid prototyping and preview in the clothing and sports industry (he is CTO at the successful Embodee). Kim has just moved from ILM and Lucasfilm to the games industry (Epic). All three will be attending the Sci-techs this weekend to collect their well deserved award, but this is by no means their first Sci-tech. Dan, for example, now has three! (After being head of R&D at Manex and then at ILM, he was the first of the three to expand beyond visual effects.)

MOVA

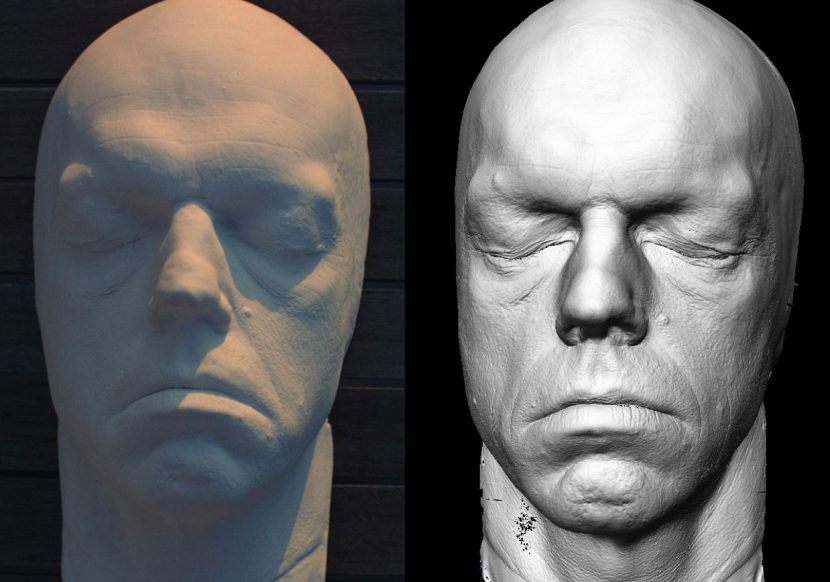

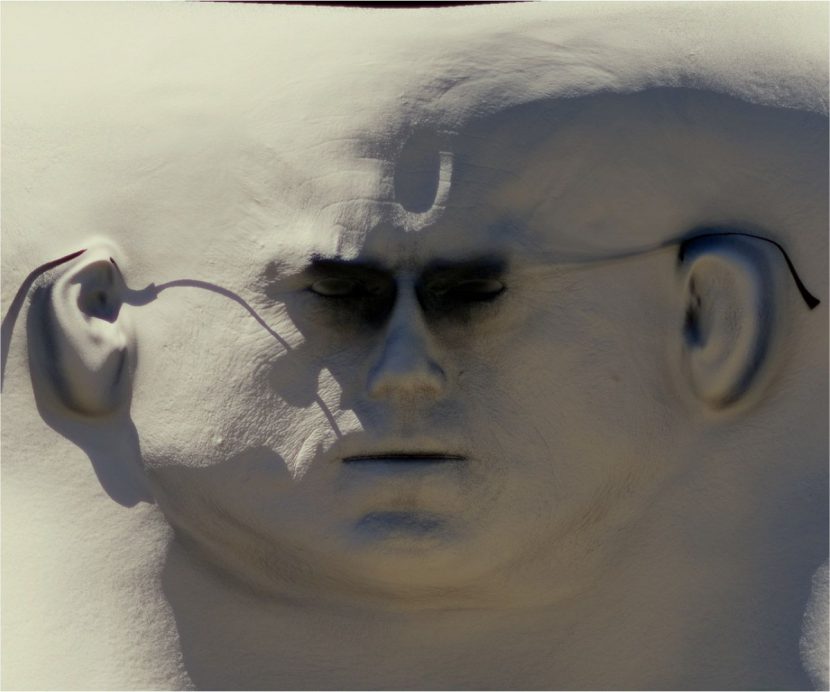

Tim Cotter, Roger van der Laan, Ken Pearce and Greg LaSalle are being honored for the innovative design and development of the MOVA Facial Performance Capture system. This started around 2003 to 2005 with motion capture using standard Vicon approaches as part of Rearden Studios. This section developed, was then spun out inside OnLIVE until 2012, and is now connected to DD. The system may have started with just body capture, but it moved to markers on faces. The team worked hard to perfect their facial pipeline and thought “there should be a better way”. They started applying dots to the actors’ faces and decided that a ‘hockey mask’ mold would speed up the placement of consistent tracking markers. In this approach a mask is made with holes so that dots are always made at the same places on the face. As the team were very production focused, they decided to be even more innovative and use a small real airbrush to ‘spray’ on the dots. They were also experimenting with phosphorescent paint and black lights. What they discovered, in a classic happy accident, was that the overshoot ‘spray’ patterns actually tracked really well. The mistakes were actually excellent noise – non-repeating patterns.

Unlike the earlier systems, the MOVA system uses Fourier transforms (FFTs) of the performance camera footage, not O-flow, for alignment and then reconstruction. Also unlike the other systems, MOVA can use an array of, say, 29 cameras rather than 5 to get every angle on the performance. The cameras are calibrated and aligned, yet the setup time for any actor using the rig is very fast. There is, of course, the application of the airbrushed paint, but the time in the chair is relatively quick, and the rig with all its cameras allows for some flexibility while giving a performance: it is not a requirement to be locked into a head rig or stiff chair.
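
To see why a non-repeating pattern suits Fourier-based alignment, the sketch below uses phase correlation, a standard Fourier-domain way of finding the offset between two signals. This is an assumption made for illustration: the article only says that MOVA uses Fourier transforms for alignment, and its actual algorithm is not described here. The example is one-dimensional, uses a naive DFT from the standard library in place of a real FFT, and all names are hypothetical.

```python
import cmath
import random

def dft(values, inverse=False):
    """Naive O(n^2) discrete Fourier transform; an FFT gives the same result faster."""
    n = len(values)
    sign = 1 if inverse else -1
    result = []
    for k in range(n):
        total = sum(v * cmath.exp(sign * 2j * cmath.pi * k * t / n)
                    for t, v in enumerate(values))
        result.append(total / n if inverse else total)
    return result

def phase_correlation(reference, moved):
    """Return a correlation score for every candidate circular shift."""
    cross = []
    for f, g in zip(dft(reference), dft(moved)):
        product = g * f.conjugate()
        magnitude = abs(product)
        # Keep only the phase; drop frequencies that carry no energy.
        cross.append(product / magnitude if magnitude > 1e-9 else 0j)
    return [c.real for c in dft(cross, inverse=True)]

def candidate_shifts(correlation):
    """Shifts whose score is within 1% of the best score."""
    best = max(correlation)
    return [i for i, c in enumerate(correlation) if c > 0.99 * best]

def roll(values, amount):
    """Circularly shift a list to the right by `amount` samples."""
    return values[-amount:] + values[:-amount]

random.seed(7)
n, shift = 64, 9
noise = [random.random() for _ in range(n)]              # airbrush-like random speckle
dots = [1.0 if i % 4 == 0 else 0.0 for i in range(n)]    # evenly spaced dots

noise_matches = candidate_shifts(phase_correlation(noise, roll(noise, shift)))
dots_matches = candidate_shifts(phase_correlation(dots, roll(dots, shift)))
```

With the random speckle there is a single clear answer, the true shift of 9 samples. With evenly spaced dots, every shift that lines the dots up again scores equally well, so the true shift is just one of sixteen indistinguishable candidates. That ambiguity is what a random, non-repeating pattern avoids.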

The other advantage of the airbrushed noise approach, and of getting rid of the hockey mask dot alignment approach, is that the system now uses some 7000 dots to capture the performance. This is a remarkable level of fine detail on a reconstructed face.

Importantly, there is also a stage that temporally aligns the frames to produce a consistent and valid spatial and temporal set of data. While MOVA does provide ‘stitched textures’, they are intended as nothing more than reference. The data from MOVA is without holes, and thus this eye reference – temporarily projected back on to the digital face – is very helpful, given that the footage is filmed with the airbrushed (phosphorescent) paint and without target lighting. It is not intended to be used in the final production. There have been some advances in this direction, and special cases such as Gravity, but most of MOVA’s clients are retargeting the data anyway. In the case of, say, DD, they may do a USC ICT Lightstage scan and data set and combine this with the MOVA performance as part of a face pipeline. The MOVA team work to build great data sets that feed into the pipeline of whoever wants to work with them, and that VFX house decides on the rest of its own unique pipeline. MOVA stops at delivery of the temporally coherent data.

Controversy and Comment

In a surprise move this week, the MOVA award has drawn a complaint. Earlier this week Steve Perlman, the president and CEO of Rearden Mova, sent a letter of appeal to the Science and Technology Council, arguing that the “core members of its R&D team responsible for essential inventions or major contributions,” including himself, are not being recognized. He is requesting a review.

While agreeing with the selection of three of the four names, Perlman contends that LaSalle, who is now working with the MOVA system at Digital Domain, was “not even on the R&D team … [and] made no essential inventions or major contributions to its development,” according to an article in The Hollywood Reporter.

While fxguide cannot comment on the specifics of this case, what The Hollywood Reporter did not explicitly discuss is just how rigorously the Sci-Tech committee investigates applications for merit. There are two very important aspects to any such claim. The first is that this dispute is in no way unique in the history of awards. Time and time again there are complaints about who is nominated for any award, at almost any award show. Award shows nearly always have to limit the number of names, and so from the VES awards to the Academy Awards there are always those who feel hard done by, and those who frankly would rightly have been included had more names been allowed. The second and more specific point is the timing. Reading the THR article, it might seem that this is exactly when one would expect such an objection to be lodged, but in reality the Academy has a very open process dating back months. Fxguide reported on August 16th that the Academy was officially considering MOVA for a Sci-tech:

“High-resolution motion capture techniques for deforming objects

Prompted by MOVA (MOVA) and GEOMETRY TRACKER (ILM)”

This is how the Academy’s Sci-tech process operates. It is not a private voting system like the VFX Oscar; it is a public discussion of what is being considered, with the express declaration that “after thorough investigations are conducted in each of the technology categories, the committee will meet in early December to vote on recommendations to the Academy’s Board of Governors, which will make the final awards decisions”.

This means that anyone having any claim on the MOVA award, or thinking that their technology was more deserving, has a chance to be heard. Moreover, this is not a passive committee that sits back and waits to see if anyone will raise their hands. This is a dedicated group of some of the best minds in our industry who investigate claims. They are not paid and they are not associated with some group or agenda – they are senior professionals who work extremely hard to trace back the development of key technologies that have impacted our business. There have been months to submit input on who should win and who should not. People have had time to be heard. It is worth noting that UCAP was not on the original consideration list published in August and, for that matter, ILM’s Geo Tracker was not given any award this year (but was up for consideration). The only reason is clearly that the Sci-tech committee did their job and felt UCAP needed recognition and, for now, Geo Tracker did not. Thus at tonight’s Sci-tech awards there will be people awarded whose companies made submissions, but there will also be people who were surprised to learn that, because some other technology was being investigated, their own work was to be awarded and recognized.

Is the process perfect? No, nothing is. But in talking to unrelated winners of this year’s Sci-Tech awards, fxguide was told that they felt this was the most professional and thorough awards process they had ever been a part of. One awardee commented, “I know now why they say I want to thank the Academy…”, explaining that the Sci-tech committee and the Academy carry out a valuable and time consuming investigation process second to none in our industry.

It is insulting and offensive to the award winners, who have earnt the right to be awarded, and to the selection committee to imply otherwise. It is sad that people who feel wrongly done by cannot see for themselves that the umpire has given their ruling and these are the 2015 winners.

It is perhaps most telling that this is also happening at a time when there is a licensing dispute. Again, to quote from the THR article: “Compounding the situation is a bitter disagreement over licensing of the technology, as Perlman makes a related claim that LaSalle and Digital Domain used MOVA technology for Marvel’s mega hit film Guardians of the Galaxy, but ‘I never granted Digital Domain a license.’”

The Sci-tech committee do an outstanding job. A simple thank you seems appropriate from the community, along with a celebration of the winners, who have all, yes all, earned the right to be honored.

There are still more pipelines and innovations happening in this space, and we will be covering these, including special reports on Faceware and, separately, the work at USC. But we want to join the Academy in applauding the great work by both the UCAP and MOVA teams.