Few VR projects span decades. CGI dates so quickly that the ‘cutting edge’ blunts in a very short time. So it is remarkable that the VR ‘DAVID’ experience, which lets a viewer walk around Michelangelo’s 17-foot (5-meter) statue of David using a virtual scaffold, is built on data that comes from the 1990s!

In 1998, Dr Marc Levoy led a team from Stanford University to scan Michelangelo’s David in Florence. Over the years, this dataset has been used by many technical papers, but as it totals more than a billion polygons, it has never before been viewable in real time.

Epic Games’ Lead Technical Animator Chris Evans is somewhat of a Michelangelo fan. “I am a bit of a Michelangelophile. I actually have a plaster cast of David’s face at home, taken from the statue in 1870 when it was moved. I have studied Michelangelo for many years, and I find his life and works really intriguing. At one point I was told that I have more books on Michelangelo than the Staedel Art Library in Frankfurt!”

Over a six-month period, with the help of Epic Games character artist Adam Skutt, Evans baked the dataset onto an in-engine representation, down to the quarter millimeter.

The Scan

Dr Marc Levoy decided to undertake a high-resolution 3D scanning project with his grad students as part of an academic sabbatical. As luck would have it, Stanford University had an overseas study centre in Florence, and the David statue had an optically appropriate diffuse, white colour suitable for scanning with the technology of the day.

The scan of Michelangelo’s David began on February 15, 1998 and took 4 weeks. Most of the art historians in Florence were keen to have such a professional research project, even though access to the extremely valuable statue is highly restricted.

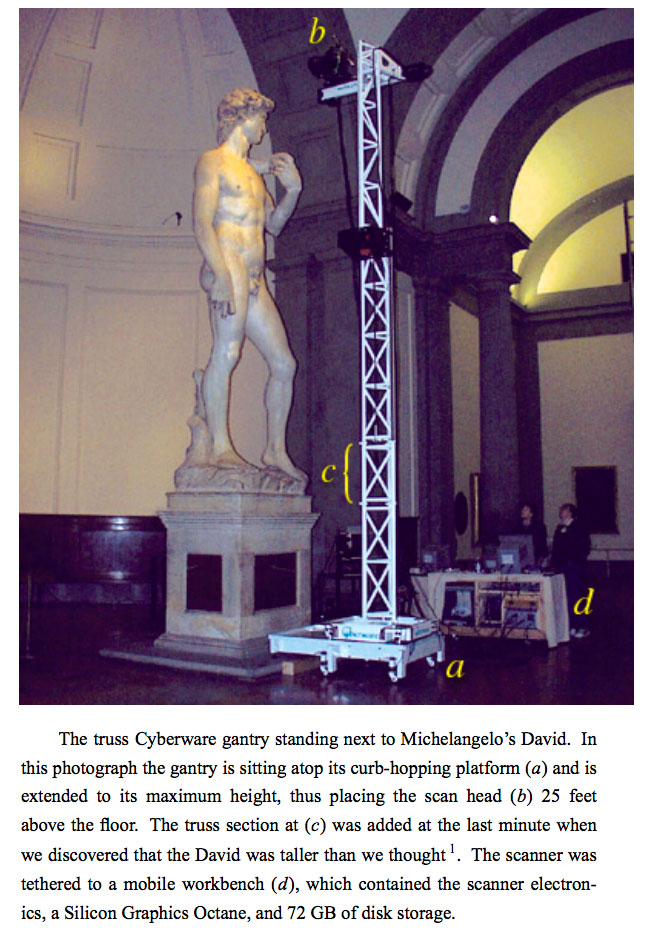

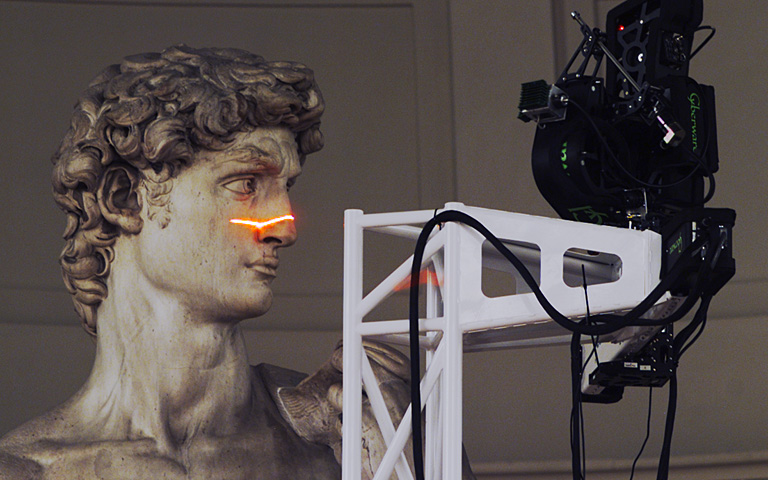

The team used a Cyberware swept-line 3D scanner, mounted on a special rig. The scanner was very slow by today’s standards, but its 1/4mm accuracy is still considered very high quality today.

The workstations were a mixture of SGIs and PCs. In the photograph above the gantry is at maximum height. The 2′ truss section roughly level with David’s foot (C) was added at the last minute after the team discovered, much to their horror, that the statue was taller than they thought. “We designed our gantry according to the height given in Charles De Tolnay’s 5-volume study of Michelangelo. This height was echoed in every other book we checked, so we assumed it was correct. It was wrong. The David is not 434 cm without his pedestal; he is 517 cm, an error of nearly 3 feet!” explained Levoy.

The team, from right to left: Kari Pulli (running the scanner), Marc Levoy (with his hand ready on the emergency stop button), Lucas Pereira, and Domi Pitturo. The Cyberware gantry could be reconfigured to scan objects of any height from 2 feet to 24 feet. These reconfigurations, which involved removing one or more pieces of the tower, took several hours.

The scanner head was reconfigurable. It could be mounted atop or below the horizontal arm, and it could be turned to face in any direction. To facilitate scanning horizontal crevices like David’s lips, the scanner head was also rolled 90 degrees, changing the laser line from horizontal (shown below) to vertical.

“I modeled the interior of the Tribune at the Galleria dell’Accademia in Florence, where the David is housed, and created the level allowing people to ride a scaffold up and down while walking around the statue”.

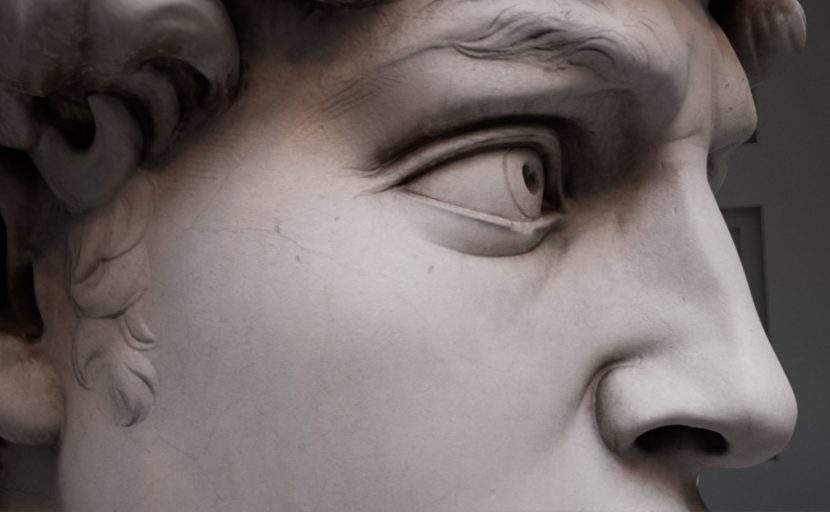

Although most of the statue is smooth, chisel marks can be seen in several places in VR, such as here to the right of the sideburns. Also clearly visible in VR is a break in the arm of the David statue that few people will ever have seen. During an anti-Medici rebellion on April 26, 1527, rioters occupied Florence’s Palazzo Vecchio while soldiers battered the doors. The occupiers threw furniture off the parapets to repel soldiers on the ground. A bench tumbled down and struck the left arm of Michelangelo’s David, breaking it off in three pieces. Viewers can learn about details such as this in VR thanks to an “inspection mode” that Chris Evans incorporated, which plays various audio files when selected.

The VR version

Chris Evans studied Michelangelo at college. “My landlord at that time taught a two-semester course on the Sistine Chapel ceiling (painted by Michelangelo between 1508 and 1512), and he got me really interested in Michelangelo, and I have studied Michelangelo for years. So when the Stanford work was first presented at SIGGRAPH… I was super interested” he explained.

In 2003, researchers at Stanford released something called ScanView, which allowed users to move around a proxy 3D version of the statue, pick any angle, and have a high-resolution render done offline and emailed to them. “I used a ton of my dorm room’s bandwidth using that!” Evans jokes, “But it was a single view jpeg, not a 3D model or anything”.

The full ‘billion point set’ was last actively worked on in 2009, but not at Stanford University. So it was somewhat of a surprise when Chris Evans contacted Marc Levoy about repurposing the dataset for VR. Not only had it been 8 years, but the enquiry came from an ‘animator at a games company’ and not a researcher. Levoy has licensed the data several hundred times, for various reasons, but “this one particularly intrigued me as it was going to be VR, and we had not done anything before in VR”, commented Levoy.

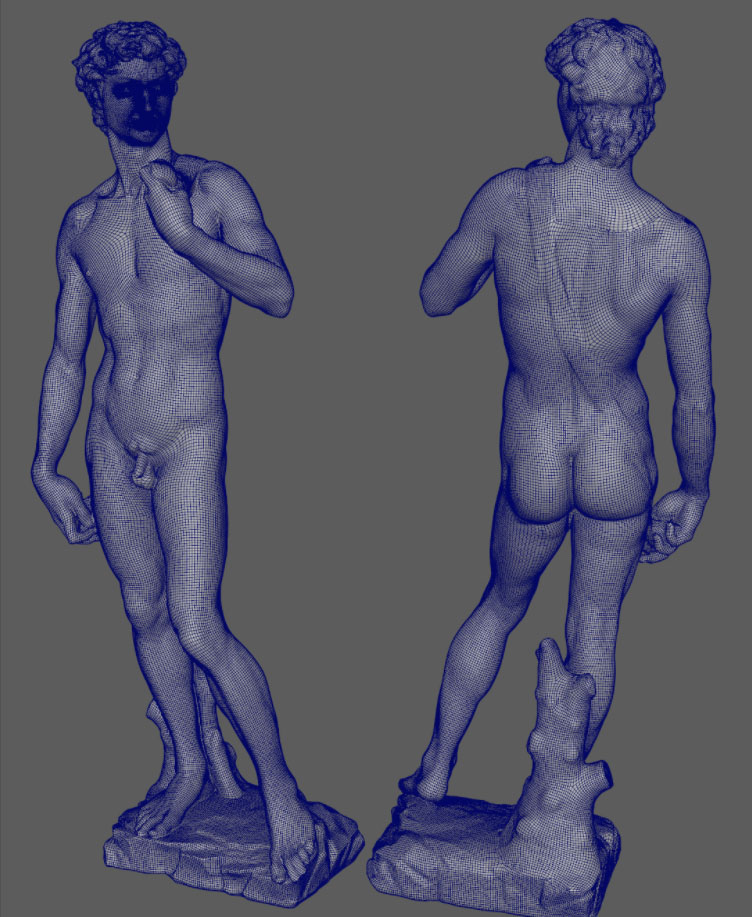

For SIGGRAPH 2017, Evans decided to produce an interactive version of the vast dataset. The original data was 2 billion polygons. Each part of the statue was covered by at least two scans. Difficult sections were covered 5 or more times, for example when trying to reach the bottom of cracks. In addition, there were 7,000 color images (1520 x 1144 pixels), for a total of 32 gigabytes of data, which in 1998 was almost incomprehensibly vast. In the 90s, no computer could hold the uncompressed dataset in memory. In 2017 Evans was able to move around the reconstructed statue in real time in VR at 90fps.

Doing a clean bake of the data at the 1/4mm resolution of the face was complex. Chris Evans wanted all of the details, especially around the face, to accurately reflect the vast amount of data. Looking up the 2009 dataset took a couple of hours just to get about 70 to 100 million polygons of the face out of the dataset.

This original set of polygons then needed to be decimated, that is, reduced to a lower number of triangles, for real-time use. The topology needs to be manifold to do this (a topologically closed surface in 3D, with no neighbouring polygons whose normals face opposite ways), but the key is preserving the boundary edges.
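As a rough illustration of the conditions described above, the following Python sketch classifies the edges of a small triangle mesh. It is not taken from the DAVID pipeline or from any particular tool; the function names and the tetrahedron test mesh are assumptions made for this example. An edge used by exactly one triangle is a boundary edge, an edge used by more than two is non-manifold, and a directed edge that appears twice means two neighbouring triangles wind in opposite directions (their normals disagree).

```python
from collections import Counter

def classify_edges(triangles):
    """Return (boundary_edges, non_manifold_edges) for a triangle list.

    Each triangle is a tuple of three vertex indices. An undirected edge
    shared by exactly two triangles is an ordinary interior edge.
    """
    counts = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            counts[(min(u, v), max(u, v))] += 1
    boundary = sorted(e for e, n in counts.items() if n == 1)
    non_manifold = sorted(e for e, n in counts.items() if n > 2)
    return boundary, non_manifold

def consistently_oriented(triangles):
    """True if no directed edge occurs twice.

    On a consistently wound mesh, two triangles sharing an edge traverse
    it in opposite directions, so each directed edge is seen at most once.
    """
    seen = set()
    for a, b, c in triangles:
        for edge in ((a, b), (b, c), (c, a)):
            if edge in seen:
                return False
            seen.add(edge)
    return True

# Hypothetical test data: a closed tetrahedron with consistent winding.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (0, 2, 3)]
# The same shape with one face removed, leaving a triangular hole.
open_tetra = tetra[:3]
# The closed shape with its second face wound the wrong way round.
flipped = [(0, 1, 2), (0, 1, 3), (1, 3, 2), (0, 2, 3)]

print(classify_edges(tetra))
print(classify_edges(open_tetra))
print(consistently_oriented(tetra), consistently_oriented(flipped))
```

A decimator that preserves boundary edges would leave the edges reported in the first list untouched while collapsing interior ones; on a scan-sized mesh the same counting idea applies, although a real tool would use far more memory-efficient structures.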

The only program that could decimate that data was Blender, and “it decimated somewhere between 70 to 100 million polygons down to about a million, without any visual quality loss – as I have shown with side by side comparisons”, says Evans. (See the image above, which shows the before and after, left and right, without visible quality differences.) Evans then needed a very clean facial topology for the high-resolution mesh, and he needed to modify the in-game real-time topology around the eyelids and similar features of the scan so that he would have a clean and well-preserved bake. Epic Games has a very good, clean in-house facial topology which is used for all of its face projects, such as Senua and MEETMIKE.

The same underlying basemesh facial topology that was used on fxguide’s Mike Seymour MEETMIKE with the correct edge loops was used for the DAVID. In Wrap3, Evans could do a non-rigid alignment of Epic’s standard high quality facial topology onto the DAVID to bake it across very cleanly.

Wrap3 by Russian3dscanner.com makes it possible to take an existing basemesh and non-rigidly fit it to new scans. It also provides a set of very useful scan processing tools such as decimation (although not used on DAVID), mesh filtering, texture projection and many more. It has a node-graph architecture that allows one to convert a series of 3D scans of, say, actors into production-ready characters sharing the same topology and texture coordinates. In Wrap3 Evans could “pick the edge of the nose, and the ear lobes etc and it non-rigidly aligned the topology onto any face so we could align the same topology we used for our rigs onto DAVID and then that allowed us to do a really clean bake across onto the Statue” he explained.

This video shows Wrap3’s wrapping and other features.

Wrap3’s wrapping uses a coarse-to-fine approach. For example, the high-quality Epic basemesh was deformed by a set of control nodes. The process is divided into several steps. At each step a set of control nodes is generated on the basemesh, with a density that increases with each new step. The algorithm tries to find positions for the control nodes so that the basemesh fits the target geometry as closely as possible. When a solution is found, it proceeds to the next step: it samples the basemesh with a larger number of control nodes and repeats the fitting. The larger the number of control nodes in the current step, the more precise the result.
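The coarse-to-fine idea can be shown with a deliberately simplified toy in Python. This is not Wrap3's algorithm or API: it works on a 1D height profile rather than a 3D mesh, the "solve" at each control node is just the residual to the target, and all names (`fit_level`, `coarse_to_fine`, the sine-wave target) are assumptions for illustration. What it does share with the description above is the structure: a sparse set of control nodes is fitted first, then the node density is doubled and the fit repeated.

```python
import math

def fit_level(current, target, step):
    """One fitting step with a control node every `step` vertices.

    The correction at each node is the residual to the target; vertices
    between two nodes receive a linear blend of the two corrections.
    Assumes (len(current) - 1) is a multiple of `step`.
    """
    updated = list(current)
    for start in range(0, len(current) - 1, step):
        end = start + step
        d0 = target[start] - current[start]  # correction at left node
        d1 = target[end] - current[end]      # correction at right node
        for i in range(start, end + 1):
            t = (i - start) / step
            updated[i] = current[i] + (1 - t) * d0 + t * d1
    return updated

def coarse_to_fine(base, target, steps):
    """Run fit_level for each node spacing, recording the max error."""
    current = list(base)
    errors = []
    for step in steps:
        current = fit_level(current, target, step)
        errors.append(max(abs(c - t) for c, t in zip(current, target)))
    return current, errors

# Hypothetical data: a flat "basemesh" fitted to a sine-shaped "scan".
N = 65
xs = [i / (N - 1) for i in range(N)]
target = [math.sin(2 * math.pi * x) for x in xs]
base = [0.0] * N

# Node spacing halves each step, so node count goes 3, 5, 9, 17, 33.
fitted, errors = coarse_to_fine(base, target, steps=[32, 16, 8, 4, 2])
for step, err in zip([32, 16, 8, 4, 2], errors):
    print(f"spacing {step:2d}: max error {err:.4f}")
```

Running it shows the maximum error shrinking at every step, which mirrors the observation that more control nodes give a more precise result. A production tool would also need regularisation to keep the deformation smooth and a correspondence search on the 3D surface, neither of which this sketch attempts.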

The lighting

Peter Sumanaseni, senior technical artist at Epic, lit the scene using lots of photo reference and information about the skylight given to the team by Victor Coonin, the world expert on the David. “Pete did an awesome job lighting it”, Evans explains, clearly very proud of the team effort to so faithfully realise the data into an accurate virtual DAVID.

The image above shows the result of a 12-hour Lightmass bake in Unreal. Lightmass global illumination creates lightmaps with complex light interactions such as area shadowing and diffuse interreflection. It is most often used to precompute portions of the lighting contribution of lights with stationary and static mobility, such as in this scene. While the Lightmass built from the skylight was given a long time to compute, it plays back in real time with stunning diffuse bounce area lighting. Sumanaseni simulated the actual skylight above the real DAVID, and Epic’s Adam Skutt added in a small amount of ambient occlusion just to darken around the eyes. “To make it a little dirtier, somewhere between where it was when it was scanned, which was very dirty at the time, and today when the DAVID is very clean”.

VIVE head gear

Chris Evans decided to set the DAVID up in a VIVE capture volume and VR setup. Given the scale of the actual David statue and the size of a normal VIVE VR capture volume, the VR experience requires special extension cables to allow the 17-foot-square virtual scaffold to provide a 1:1 space in VR that is still in the volume. A clever system raises and lowers the scaffold, allowing people to feel as if they are being lifted up to head height with the DAVID.

Michelangelo’s statue was originally supposed to sit on a cathedral roof line, which Evans explains is why some of its features (like the hand) are exaggerated in size, to compensate for the statue being viewed from below. This is also why the VR experience is so interesting. Almost everyone experiences the statue from the ground. Only a very few, Levoy amongst them, have really stood close to the statue high up.

In 1998, many hours on the scaffolding gave the team plenty of time to contemplate Michelangelo’s masterpiece. On the last night, March 27th, before the team disassembled the gantry and packed up their gear to leave the Galleria dell’Accademia, Marc Levoy asked the team to leave him alone. “I climbed up the actual scaffolding, similar to the one in the VR, on the last night, around 4 am, and I told my students to not bother me for the next few hours. I just sat there, cross legged communing with the head of the David Statue, thinking about Michelangelo and his art… while the sun slowly rose over the top of the dome, and the light started to change across the head… it was a very emotional experience. So I am very familiar with sitting up there and looking at the statue at scale.” Now that the project is fully realised, Levoy feels that the result is “frigging awesome… it was a great experience, it was the most real experience of the David since I was actually there on scaffolding in the gallery”.
