We take a look at Sony Pictures Imageworks’ visual effects for The Amazing Spider-Man, including their approach to the sub-surface scattering of lizard skin, Spidey’s animation, the digital New York and working with the RED EPIC stereo footage. And we talk to Pixomondo about their work for the bridge sequence and other shots.

The Lizard

In a recent article on fxguide we discussed the advanced work Weta did in the film Prometheus. In particular, the new sub-surface scattering (SSS) advances they made, to better balance quality with speed. In that fxguide story we talked about their push to more accurate SSS models – this is a quote from the article:

During Avatar, Weta’s SSS approach was based on a Bidirectional Scattering Surface Reflectance Distribution Function (BSSRDF), that used a dipole (two) diffusion approximation allowing for efficient simulation of highly scattering materials such as skin, milk, or wax. A BSSRDF captures the behavior of light entering a material such as alien or human skin and scattering multiple times before exiting. Monte Carlo path tracing techniques can be employed with high accuracy to simulate the scattering of light inside a translucent object, but at the cost of long render times…Weta moved to a translucency quantized-diffusion (QD) model…which puts a lot more detail on the surface than dipole does.

Sony Pictures Imageworks also wanted to up the level of their SSS approaches for The Amazing Spider-Man, particularly for The Lizard, but unlike Weta they effectively skipped a step in moving to a more advanced solution. Weta moved beyond dipole to a quantized-diffusion (QD) model, which was primarily authored by Eugene d’Eon.

Independently of Weta, Sony solved this problem by jumping entirely to a full Monte Carlo path tracing technique. This is a remarkable commitment to image quality as almost the entire industry has stopped short of a full Monte Carlo solution for large scale production. It should be noted that, while it is not the only difference, SPI used the Arnold renderer for Spider-Man, while Weta relied on RenderMan.

Despite the different renderers, Sony faced the same extremely long and challenging render times that Weta had to consider when researching its solution. We spoke to SPI CG supervisor Theo Bialek and asked just what they were thinking.

“We actually used a brute force Monte Carlo solution for the sub-surface scattering for the bulk of the Lizard, and yes, it is very computationally expensive,” admits Bialek. “Previously at Imageworks we primarily used a permutation of the common dipolar solution that relied on a point cloud of presampled data, which has several inherent inefficiencies when it comes to multi-threading and accuracy. Though costly, our single scatter raytraced method provided a nice forward scatter effect from rim lights – allowing for the realistic transmission of light through the thinner parts of the skin, such as the red in back lit ears, accurate traced occlusion from inner objects like the dentin underneath the enamel on his teeth, and yet still had enough soft diffusion for the backscattering. It was ideal for the look we were trying to achieve given the detail and highly displaced nature of the Lizard’s scales.”
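To give a feel for why a brute force approach is so expensive, here is a toy sketch in Python. It is not Imageworks' code: it simply random-walks photons through a flat slab of scattering material and counts the fraction that exit the far side. The slab geometry, the isotropic scattering and the names `slab_transmission`, `sigma_t` and `albedo` are all assumptions made for illustration.

```python
import math
import random


def slab_transmission(thickness, sigma_t, albedo, n_paths=20000, seed=1):
    """Estimate the fraction of light that crosses a slab by random walk.

    thickness: slab depth; sigma_t: extinction coefficient (1 / mean free path);
    albedo: probability that a collision scatters rather than absorbs.
    """
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_paths):
        depth = 0.0   # current depth inside the slab
        mu = 1.0      # cosine to the inward normal; light enters head-on
        while True:
            # Sample a free-flight distance from the exponential distribution.
            dist = -math.log(1.0 - rng.random()) / sigma_t
            depth += mu * dist
            if depth >= thickness:
                transmitted += 1      # left through the far side
                break
            if depth < 0.0:
                break                 # left back through the entry side
            if rng.random() >= albedo:
                break                 # absorbed inside the material
            # Isotropic scattering: the new direction cosine is uniform in [-1, 1].
            mu = 2.0 * rng.random() - 1.0
    return transmitted / n_paths


thin = slab_transmission(thickness=0.5, sigma_t=1.0, albedo=0.9)
thick = slab_transmission(thickness=2.0, sigma_t=1.0, albedo=0.9)
print("thin slab:", thin, "thick slab:", thick)
```

Even this toy needs tens of thousands of paths to settle on one number for one point. The thin slab lets far more light through than the thick one, which is the back lit ear effect Bialek describes.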

An increased level of accuracy was required with the sub-surface since its contribution to the overall lighting of the Lizard’s skin was proportionally high compared to the diffuse and specular shading components. In addition, given the character’s proximity to camera, many of Imageworks’ shots required accurate scale to scale occlusion and shadowing to be observed in the calculations of the sub-surface.

And if this was not bad enough, Sony eventually implemented one of the most complex set-ups for a ray tracing solution, namely having the indirect light also contribute to the sub-surface illumination. Because the sub-surface was an integral part of his illumination, the light bounced off surfaces was clearly missing from the sub-surface when the Lizard stood on top of a brightly lit floor or near a wall. The initial workaround, though not ideal, was to place extra low intensity bounce lights to compensate, but this was a time consuming process and would lead to inconsistency between artists.

Watch an Imageworks breakdown of the classroom fight between The Lizard and Spider-Man.

“The rendering of the Lizard started out fast enough at around 4 hours on a single core, with our basic raytraced SSS and a single layered shader,” notes Bialek. “Through the natural evolution of the lookdev phase we began to add in complexity and variation, eventually topping out with an 8 layered shader – as to be expected the render times swelled to 30 hours and beyond. Adding in the indirect lighting to the sub-surface calculation our renders took another big hit and reached well beyond 50 hours on medium or closer framed shots. The extra realism was a huge improvement, though we knew the increased render time wasn’t viable for a large scale production. So we started optimizing, removing layers in our shader by baking some of the look into textures and making compromises in other areas where the extra complexity wasn’t adding substantially to the overall look. In the end we reduced the Lizard’s skin shader to four layers but our render times, though markedly improved, remained too high. So we relented and turned off the extra indirect SSS contribution. Hovering around 20 hours a frame the render times were still unrealistic given scope and number of shots. It was at this point that we leaned on our facility shading and Arnold team and asked, ‘What can you do?’”

The Spider-Man team turned to Sony’s in-house Arnold team (which runs in parallel to Solid Angle’s Arnold team). In a short period of time SPI’s R&D team came back with a set of optimizations in the render that pruned out rays which had little to no discernible contribution. In addition, a host of small optimizations were added that slowly chipped away at the overall computation time with little to no loss in image integrity. “After a few weeks our R&D team not only cut our rendering time in half to just over 10 hours a frame,” says Bialek, “but they were also able to implement a version of the indirect SSS contribution that allowed us to turn the feature back on with negligible effects to the render time. The realism of the Lizard is definitely something we would not have been able to achieve without the development team’s help.”
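Bialek does not say how the low-contribution rays were pruned. One standard technique in path tracers is Russian roulette, sketched below in Python purely as a stand-in: rays whose weight falls below a threshold are randomly terminated, and the survivors are boosted so the average result is unchanged. The function names, the threshold and the survival probability are assumptions for illustration, not SPI's implementation.

```python
import random


def roulette(weight, threshold, survive_prob, rng):
    """Return the weight to continue with, or 0.0 if the ray is terminated."""
    if weight >= threshold:
        return weight                      # important rays are always kept
    if rng.random() < survive_prob:
        return weight / survive_prob       # survivors are boosted to compensate
    return 0.0                             # pruned: no further tracing needed


def average_weight(weight, threshold, survive_prob, n, seed=7):
    """Average the carried weight over n trials to check that energy is preserved."""
    rng = random.Random(seed)
    total = sum(roulette(weight, threshold, survive_prob, rng) for _ in range(n))
    return total / n


# A dim ray (weight 0.05) is traced only about a quarter of the time,
# yet the average contribution stays close to 0.05.
print(average_weight(weight=0.05, threshold=0.1, survive_prob=0.25, n=100000))
```

The trade is fewer rays for slightly more noise, which fits the article's description of savings "with little to no loss in image integrity" only if the threshold is chosen conservatively.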

The biggest bugbear with ray tracing solutions is battling noise, and despite the Multiple Importance Sampling method Sony uses inside of Arnold, it was a challenge on the show when confronting the long render times. “Noise is always an issue,” recounts Bialek. “One of the advantages of having our version of Arnold which is natively physical, is not having the sampling solutions tied to the individual shaders.” In previous implementations of Sony’s Arnold, the shaders determined their own sampling methods independently of one another. “In our current version,” says Bialek, “the render sort of polls all the shaders at once and then works out the best solution for tackling the noise on a global basis. The renderer may make a decision to solve one area in a slightly less efficient manner but only if it allows other areas to benefit for a net gain.”

“In this case, Arnold sort of polls all the shaders and then it can work out what it thinks the best solution is and optimize it,” says Bialek. “It was an amazing job – the guys turned on the bounce to the SSS, and we said, ‘No you can’t do that!’, but then we looked at it and it just looked way more realistic so we all agreed – we just need this – so we went back and after a few weeks they worked out ways to throw away some rays that were not contributing, and they did it. It was definitely something we would not have been able to achieve without the development team.”

The few parts of the Lizard that were not SSS with full Monte Carlo ray tracing were his eyes and inside his mouth. Says Bialek: “The interior of the mouth – you never see the back of so that was a prime candidate for our point cloud based sub-surface scattering.” On the character’s face the team added very fine short hairs and flaking skin. “The visual effects supervisor said it was too clean so we went with actual polygons of peeling skin. Those also used the point based scattering as they were those highly transparent larger flakes. For additional granular breakup on profile fine irregular hairs were added, analogous to the peach fuzz we see on human faces. Lastly, we also included little bits of short thick opaque hairs stuck within the grooves of the scales to further dirty him up.”

See how Imageworks created the sewer shots featuring The Lizard and Spidey.

Modeling

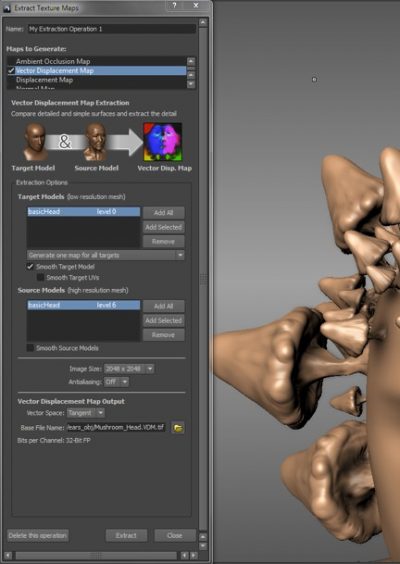

Displacement vectors are different from traditional displacement maps in that they can bend over and produce more complex surfaces. A vector displacement can overhang other surface points in the model. This is not possible with traditional grayscale or ‘height map’ displacement maps.

Because the displacement is stored as a vector, surface points can move in any direction and overlap, providing a more highly detailed skin surface.

Vector displacements allow for undercuts, folds, and bulges, and of course scales. Once extracted, maps can be also used as brush stamps or stencils in Mudbox to sculpt complex detail onto meshes easily. Artists can build up a library of commonly-used forms and reuse them.
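The difference between the two kinds of displacement can be sketched in a few lines of Python. This is an illustration only: the helper names and the channel order of the vector (tangent, bitangent, normal) are assumptions, and Mudbox's own conventions may differ.

```python
def add(a, b):
    """Component-wise sum of two 3D tuples."""
    return tuple(x + y for x, y in zip(a, b))


def scale(v, s):
    """Multiply a 3D tuple by a scalar."""
    return tuple(x * s for x in v)


def displace_height(point, normal, height):
    """Traditional height map: the point can only move along its normal."""
    return add(point, scale(normal, height))


def displace_vector(point, tangent, bitangent, normal, vec):
    """Vector displacement: vec holds offsets along tangent, bitangent and normal."""
    offset = add(add(scale(tangent, vec[0]), scale(bitangent, vec[1])),
                 scale(normal, vec[2]))
    return add(point, offset)


# A flat patch lying in the XZ plane, with its normal pointing up (+Y).
T, B, N = (1.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 1.0, 0.0)
p = (0.0, 0.0, 0.0)

lifted = displace_height(p, N, 0.5)
overhang = displace_vector(p, T, B, N, (0.8, 0.0, 0.5))
print(lifted, overhang)
```

The height-mapped point stays directly above where it started, while the vector-displaced point also slides sideways, so it can end up hanging over its neighbours. That sideways freedom is what makes undercuts and overlapping scales possible.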

On set, production had a full body model and a more detailed bust of the Lizard that was used primarily for on-set lighting reference. “Our initial version of the Lizard was a near replica of the maquettes as supplied from production,” explains Bialek. “As such, we had virtually all of the details intact from the original, including the unintentional tooling marks from the sculptor. First step was to remove those non-organic details but retain the design of the scale layout given the purposeful patterns worked out between the sculptor and the director. As the character started to take shape in the 3D realm and we began rudimentary motion tests, it was clear that the maquette was going to be only the starting point of what the final design would become.”

As to be expected with any hero character, an extensive iterative phase began where Imageworks explored refining the layout to suit deformations and other subtle variations to imbue characteristics of the actor into the Lizard. “As a general rule of thumb we bring a character to 85% of what we consider finished so that we can reserve a portion of our resources to modify the character once we start to see it in actual shot production,” adds Bialek. “We knew however that our Lizard would be featured in extensive detail so we put in quite a bit more detail than we have on other hero characters in the past. Even so, once we started seeing him in actual shots we would go back into Mudbox and upgrade our vector displacements as the camera became increasingly closer and closer. It was a labor of love as practically every scale in his vector displacement map was painted and repainted.”

“The main advantage of using the vector displacements was being able to create a more interesting profile to each scale,” says Bialek. “Instead of just displacing the geometry straight away from the normal our lead modeler was able to sculpt the scales to each have their own angle and curvature. This type of variation was particularly noticeable in the spec response and as the scales rounded at their edges.”

Mudbox 2012 allows 32-bit depth (OpenEXR or TIFF) files to be interpreted as 3D vectors. SPI used the OpenEXR format for the Lizard. As you can see in this image at left from Autodesk, concave shapes are interpreted into a vector / floating point color map in much the same way compositors use Pmaps (Positional maps) but for the surface only.

The Lizard was UV mapped. But for the vector maps, notes Bialek, “you can’t really use to drive any of your other color maps because they are vector based, but Mudbox does put out a traditional displacement which is really a bump map before the pixels are pushed around and that we can use inside Photoshop and derive color maps. Sometimes we would use projection maps but at the end it is all mapped out to a z-map.”

The Lizard from The Amazing Spider-Man is the most complex character ever built at Imageworks, with a model of over 128 million polygons including its base and all the hand-crafted displacement shapes.

One of the most complex aspects of the scales was how they would bend or animate. At a surface level, would the scales individually bend or would they slide over each other? And exactly how would the animators be able to achieve enough emotion, given most reptiles are not emoting the way the character clearly needs to in Spider-Man (being part man)?

“We thought about using shearing maps, so that when the skin would fold we would treat the displacement differently, but we opted for a simpler approach,” says Bialek. “What we observed when we looked at a lot of reference material was that in highly flexible areas of the skin the scales get much smaller. We looked at a lot of iguanas and other lizards. When you get an area that is flexible and moving around a lot, the scales are really small, and so you don’t notice the stretch and compression on them as the area is so fine, but for the areas with large scales we were very aware of where the deformations would occur and we would paint them out or put in new folds. But we would put larger scales in more rigid areas and smaller ones where it needed to be flexible.”

There were a couple of transformations in the film, such as transforming back to being human, and losing a lizard arm – these required skin disintegration. Most of the transformations, such as around his face, required referencing the practical effects from on set. There is one scene where the lizard skin moved to human skin and this was the only primary shot which required digital human skin, “but it was a night shot and not as hard as you might think,” says Bialek.

Lizard lighting

On set HDRIs were taken using a Spheron. From these, a sky dome was made, an unclipped HDRI. From this HDRI, the biggest contributing lights were promoted and extracted and mapped to area lights. As area lights they can provide parallax to the character, in a way that they can’t when remaining mapped out at the sky dome level.
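As a rough sketch of the promotion step, the Python below scans a tiny lat-long sky dome, pulls out any texel brighter than a threshold as a separate light, and removes that energy from the dome so it is not counted twice. The lat-long direction convention, the threshold approach and the function names are assumptions for illustration; a production tool would also weight texels by solid angle and group neighbouring hot pixels into one area light.

```python
import math


def latlong_to_direction(col, row, width, height):
    """Map a lat-long pixel centre to a unit direction (Y up, assumed convention)."""
    phi = (col + 0.5) / width * 2.0 * math.pi    # azimuth around the Y axis
    theta = (row + 0.5) / height * math.pi       # polar angle measured from +Y
    return (math.sin(theta) * math.cos(phi),
            math.cos(theta),
            math.sin(theta) * math.sin(phi))


def promote_brightest(dome, threshold):
    """Extract texels above threshold as lights; return (lights, remaining dome)."""
    height, width = len(dome), len(dome[0])
    remaining = [row[:] for row in dome]          # copy so the input is untouched
    lights = []
    for r in range(height):
        for c in range(width):
            if dome[r][c] > threshold:
                lights.append({
                    "direction": latlong_to_direction(c, r, width, height),
                    "intensity": dome[r][c],
                })
                remaining[r][c] = 0.0             # avoid double-counting the light
    return lights, remaining


# A 4x2 toy dome with one very hot texel standing in for a film light.
dome = [[1.0, 1.0, 500.0, 1.0],
        [0.5, 0.5, 0.5, 0.5]]
lights, rest = promote_brightest(dome, threshold=100.0)
print(lights, rest)
```

At this stage the light only has a direction. Giving it a position in the scene, which is what produces the parallax described above, needs extra distance information.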

Next, and critically, the ground plane is extracted, and projected onto geometry. If the ground plane was not extracted and projected, while it would contribute to the primary lighting – just as the sky dome does – it would not get shaded, but the characters themselves would. As such, the ground bounce lighting would always be fully ‘on’ and not broken up with sometimes large character contact lighting shadows.

This was an approach developed on The Smurfs (see fxguide’s coverage) where the team also developed the technique of using the Spheron’s photogrammetric technology (based on the two sweep passes the Spheron makes) to place the promoted light at the right place in 3D space. Note what is important here is that the approach goes beyond set reconstruction, to removing lights from the HDRI skydome and re-introducing them at the right place in the 3D environment, which provides much greater accuracy when characters move away from the single point the HDRI was recorded at.

From this starting point, which is highly accurate, the team needs to start lighting the character the way the lighting TD or visual effects supervisor wants the character lit. It is very hard to light a non-existent character on set, so naturally additional lighting is always needed. Even the maquette was only a bust and inanimate.

“I would say 80% of the shots that had a physical set we would use the HDRI contribution with just a little bit of help,” says Bialek, “like pushing the rim light, and for the rest we would relight them ourselves.”

With the vast variety of lizards and skins in the real world, there were many ways to design the skin and still have it looking realistic and ‘familiar’ to an audience. “In the end we tended to reference Komodo dragons and also iguanas,” explains Bialek. In addition to the core properties of the skin, the character fought and got dirty and damaged from those interactions, so there were layers of detailing and additional overlaid textures. “We started off with three layers of specular – you want to get the broad response – but then the tighter response where it is wet or where it is worn, or where it has had a lot of contact on the ground – maybe the scales are smoother – but the biggest thing that differentiates his skin compared to human skin is the introduction of iridescence into the specular.”

Initially the team made the lizard skin look like the maquette, but then looked at iridescence reference that included beetles and, most importantly, the Brazilian rainbow boa constrictor, found from Costa Rica through central South America. “An interesting aspect of their iridescence is that it’s off angle,” comments Bialek, “so when you look at the animal you have a specular highlight reflection from the light source but then the iridescence is not on the spec highlight – it is off that – oblique to the camera. So we mimicked that, and yet we found it distracting.” In the end the team opted for a slightly more traditional approach by tinting the specular highlight at the edge – “it is in there enough on one of the three spec passes.”

Watch a scene from the film featuring The Lizard and Gwen Stacy (Emma Stone).

In a traditional RenderMan pipeline it would not be uncommon, when attempting iridescence and shading like that of a rainbow boa, to produce just a special Fresnel pass. While most of the SPI staff have a strong background in RenderMan, in switching to open shaders and an Arnold pipeline, “we went as far as we have ever gone in implementing a physically plausible shading approach,” notes Bialek. “And so look dev does not adjust those responses so much anymore, they now pick a ‘roughness’ value and that drives everything in the shader, the refraction, the Fresnel, the contribution of that versus the spec – that type of thing is now automatic and based off the models. You definitely give up a lot of flexibility, as you can’t adjust those things independently but it is also freeing as you don’t need to worry about that. You pick a roughness and trust the renderer that it is doing the right thing. You just need to pick that one thing – that one seed that describes it accurately.” All the dirt and all the wetness comes from different settings.

It is common practice in production to have a renderer produce several simultaneous outputs. In addition to the ‘beauty’ render, there may be, say, just the diffuse and/or specular reflections, or just the contribution of a subset of lights. Such outputs are often recombined in compositing. In many renderers, it is common to define many such outputs, one for each of these ‘arbitrary output variables’ or AOVs.

OSL-based renderers such as Sony’s implementation of Arnold discourage the practice of cluttering the outputs, and instead allow a renderer-side specification of which light paths contribute to which renderer outputs. New outputs may be specified using light path expressions, without any modification to the shaders at all. This is possible because OSL shaders are not computing raw colors, but rather are computing ‘radiance closures’, and the closure primitives (such as diffuse, phong, etc.) know what kind of light paths they are computing.
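The idea of routing light paths to outputs on the renderer side can be sketched with ordinary regular expressions in Python. The path notation here (C for camera, D for a diffuse bounce, S for a specular bounce, L for a light) and the output names are a simplified assumption for illustration, and are not OSL's actual light path expression grammar.

```python
import re

# Each renderer output is described by a pattern over the path's events,
# read from the camera (C) to the light (L). Toy notation, not OSL syntax.
AOV_PATTERNS = {
    "direct_diffuse": re.compile(r"CDL"),
    "indirect_diffuse": re.compile(r"CD[DS]+L"),
    "specular": re.compile(r"CS[DS]*L"),
}


def classify(path):
    """Return the names of every output a given light path contributes to."""
    return [name for name, pattern in AOV_PATTERNS.items()
            if pattern.fullmatch(path)]


for path in ("CDL", "CDDL", "CSL", "CL"):
    print(path, classify(path))
```

Adding a new output means adding one more pattern to the table. Nothing in the shaders changes, which is the point the paragraph above makes about closures knowing what kind of path they belong to.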

OSL is open source but currently only used by SPI.

As part of moving to the Open Shading Language (OSL), the team was able to “write out light AOVs, and of course you get component AOVs,” says Bialek. “You get spec, reflection, indirect diffuse, the diffuse, the sub surface – you get all those components as separate AOVs that you can access in the composite but now you also get – with little or no overhead – each light’s component as well. So the lighters have access to the components of the lighting as they build it up and then each light contribution. You have to be careful to not ruin the relationship you have and you have to choose how you want to go in comp.”

As a lighter on Spider-Man at SPI, one would:

- use the Spheron data and set an HDRI sky dome,

- choose which lights to extract and promote into the scene,

- choose which lights to output as light AOVs,

- pass those to the compositor – say key, fill and sky contribution – who could then adjust the fill to key ratio.
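Because light adds linearly, per-light AOVs can be summed back into the beauty image and rebalanced after the render. The Python sketch below shows that recombination on a two-pixel, single-channel "image"; the AOV names, pixel values and the `recombine` helper are made up for illustration.

```python
def recombine(light_aovs, gains=None):
    """Sum per-light AOVs into one image, scaling each light by an optional gain."""
    gains = gains or {}
    names = list(light_aovs)
    pixel_count = len(light_aovs[names[0]])
    out = [0.0] * pixel_count
    for name in names:
        gain = gains.get(name, 1.0)          # lights without a gain are left as rendered
        for i, value in enumerate(light_aovs[name]):
            out[i] += gain * value
    return out


# Hypothetical per-light renders of the same two pixels.
aovs = {
    "key": [0.8, 0.4],
    "fill": [0.2, 0.1],
    "sky": [0.05, 0.05],
}

beauty = recombine(aovs)                     # matches the renderer's beauty pass
softer = recombine(aovs, {"fill": 2.0})      # doubles the fill without re-rendering
print(beauty, softer)
```

This is also where Bialek's warning applies: nothing stops a compositor from pushing the gains far away from the relationship the lighter set up.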

Compositing The Lizard

Compositing was done in Nuke stereoscopically as the film was shot in stereo. The team developed a way to work with Positional maps (Pmaps), to roto on one part of the rendered creature and then effectively the Pmaps would help maintain that roto on the same place on the Lizard as the frame count moved forward. So if a reflection needed to be reduced in one spot, the Pmap would allow the artist in Nuke to draw a region on the frame and adjust the reflection; the combination of this roto and Nuke’s knowledge of where it sits in 3D space from the Pmap allowed that correction to move forward with the character as the animation and composite happened. “Most shots required one or two little changes,” says Bialek. “It always helps to make some little changes.”
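A minimal Python sketch of the Pmap idea follows. It assumes the position pass stores, for every pixel, a 3D position in a space that travels with the character (for example its rest pose), so the same spot on the skin keeps the same stored value from frame to frame. That assumption, the tiny one-row "frames" and the `pmap_mask` helper are illustrative, not the studio's Nuke setup.

```python
import math


def pmap_mask(position_pass, centre, radius):
    """Return a mask that is 1.0 where the stored position lies within radius of centre."""
    return [[1.0 if math.dist(p, centre) <= radius else 0.0 for p in row]
            for row in position_pass]


# Two frames of a one-row image. The surface point (1, 0, 0) is seen in the
# right-hand pixel on frame A and in the left-hand pixel on frame B.
frame_a = [[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]]
frame_b = [[(1.0, 0.0, 0.0), (0.0, 0.0, 0.0)]]

spot = (1.0, 0.0, 0.0)   # the place on the character the artist picked
mask_a = pmap_mask(frame_a, spot, radius=0.25)
mask_b = pmap_mask(frame_b, spot, radius=0.25)
print(mask_a, mask_b)
```

The mask lands on a different pixel in each frame without any hand animation, so a correction such as a reduced reflection can be multiplied through it and will follow the character.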

An amazing Spider-Man

Imageworks had principal duties on the previous Spider-Man films and were of course proficient with the look and feel of the main character, this time played by Andrew Garfield. But director Marc Webb wanted a much more naturalistic feel to the action scenes for this incarnation. “Marc’s touchstone was basically District 9,” says Imageworks visual effects supervisor Jerome Chen. “There was also definitely an emphasis on integrating live action stunt work with the CG Spider-Man. I’m definitely proud of that work, taking a stunt performer and integrating cut-to-cut with a CG Spider-Man.”

The Spider-Man rig itself contained almost 100 deformers and 600 hand modeled face and body ‘poses’. Animators had specific shapes they could manipulate for muscle flexes and wrinkles. Andrew Garfield’s body was scanned and studied and his movements also replicated without the use of extensive motion capture. “Andrew embraced the suit and could honor all the comic book poses by basically animating himself,” says animation supervisor Randall William Cook. “He was very conscious of the spidery movements he was making.”

For Cook, the naturalistic motion, informed by many of the practical stunts, was important to keep in the shots, despite the technology at Imageworks’ disposal. “In the stop motion days it was hard to get something with the fluidity of human and animal form,” he says. “Computers have the opposite problem. They move in much more mathematical perfection than flesh and blood and bone can move. You’ve got to go in there and rough it up a little bit. The thing about having Spider-Man in a mask is that it becomes incumbent upon us to make the performance simple and readable and direct and true.”

Watch behind the scenes of the Riverside Drive shoot and then the final scene.

Ultimately many shots became a mix of performances by Garfield, sequences filmed against bluescreen, live action stunts with pulley systems by Andy Armstrong’s stunt team (including for a sequence on the elevated portion of Riverside Drive), and Imageworks’ incredible digital New York environments and CG Spider-Man. Garfield also grappled with The Lizard in many scenes. For one such encounter at the school, an actor in a black muscle suit and tracking markers enabled close physical interaction before Imageworks replaced the stand-in with a digital Lizard.

One of Spidey’s key weapons in the film is his home-made web-slingers. Webb wanted these, too, to have their own distinctive language and movement with a very spidery and lattice feel. “We’re now rendering with physically based rendering systems, so we wanted the webs to respond to natural lighting captured on set or that had been built into the environments,” explains digital effects supervisor David Smith. “Our animators would animate just a line to indicate speed or action and get the general pacing and then hand off to the effects team.”

The effects artists added textural qualities within the web geometry, including a barbing system to give it more dimension. “We could play a lot of different light artefacts off that and give little imperfections like chromatic aberrations,” says Smith. “We had to render most of our webs at 4K for fine detail so that our Arnold raytracer could solve to it, without a lot of noise. And there was also a lot of negative space – parts of the web disappear into the darkness and you just see a little bit of detail to see it into the night, which was more realistic.”

– Take a look at Spider-Man’s webs in this video from our media partners at The Daily.

Swinging through New York

Although for this new Spider-Man outing many of Spidey’s web-slinging shots were realized with live action stunts, Imageworks was still called on to do numerous set extensions, CG characters and often completely digital New York environments through which the masked hero swings. Still, the starting point remained the actual city. “We spent a lot of time studying the city shot on the RED because we wanted to make sure our CG city matched,” acknowledges Jerome Chen. “It wasn’t just an issue of blacks or illumination – I really wanted to look at how windows would flare out and how the camera recorded red blinking lights, say.”

An SPI team spent days in New York acquiring Lidar scans and reference stills in order to provide city views, which were mostly for nighttime scenes. “In doing the night city,” says Chen, “we discovered that a lot of the realism came from what we would put into the windows of the buildings. This was an existing pipeline from the other Spider-Man movies that we modified extensively. Also, certain buildings have a color temperature in the way the entire lights illuminate the tints of the glass. On certain floors we noticed patterns – you’d see a whole floor lit up when people are cleaning it, or individual offices and bull pens. So all those different configurations of lights give each building a character and adds to the believability.”

Sixth Avenue in New York was one particular area the SPI team photographed and scanned from street level and on adjacent rooftops. For rooms and areas inside buildings, the studio used a special shader, as CG supervisor John Haley explains: “We create a flat plane but we shoot a (Spheron) HDRI image of room interiors. It’s a traditional spherical image that basically shows all sides of the room at 26 levels of exposure. So depending on the light exposure and the angle of view you can see any portion of the re-projected room. On rare occasions where we get so close to the room interiors – only about two shots – we had to swap out with physical geometry and re-project portions of those rooms onto geometry.”

An extension of earlier room interior effects R&D allowed views from one corner of a glass building, say, right through to the other side with the appropriate transparency. “They were able to update the techniques used so we could pass rays through the one room interior,” explains Haley, “give it the geometry and attribute, letting it know it was a corner office essentially, letting it know you could pass through the other side and see the building on the other side of the street.”

Watch an Imageworks making of showing how they animated Spidey and digital New York.

Armed with the Lidar scans, reference stills and interior Spheron HDRs, Imageworks created a library of individual buildings that could be used to plug and play into whole city blocks before the scene was rendered in Arnold. The enormous amount of geometry was often divided up into overlapping sections, with a system that dealt with the light contamination from section to section. “It would still take at least 24 hours per frame to get all the geometry pushed through, but dividing it up block by block meant if there was a problem two blocks down you were able to re-run that particular pass without affecting the entire environment,” says Haley.

In addition, varying levels of detail were added for sidewalks, cars, pedestrians and atmosphere, depending on the area of the city. “Some city blocks have a lot of detail, other city blocks are more modern and clean and smooth,” says Chen. “We found that this particular New York (when we were there) seemed to be under construction. When we were shooting there was scaffolding everywhere – that became a component of what we would make to do our buildings.”

A stereo workflow

Shot in stereo (parallel) on RED EPICs using a 3ality Technica rig by DOP John Schwartzman, The Amazing Spider-Man certainly embraced 3D. Like any stereo production, the filmmakers performed some initial triage on the footage using various tools including a Mistika system to fix up any alignment and color issues. “We called it a bucket pass,” explains Chen. “We look at the initial footage and there are five different buckets we place the shot into:

1. Didn’t have to touch it – perfect stereo (very rare)

2. Minor stereo corrections for geometry and alignment

3. More ocular work – some artefacts

4. One eye really screwed up – would need to send to say Lowry Digital / Reliance MediaWorks for image processing

5. Throw the eye out and have to convert it.”

Imageworks also worked closely with the color scientists at RED in terms of resolution and color from the EPICs. “Our working pipeline was around 2K resolution with a ten percent pad and 16 bit DPX colorspace,” says Chen. “We would take the R3D files, use Nuke to scale it down using a Simon filter, and then go right to 16 bit DPX in a log format. In compositing we would open to a linear format, and go back out to log.”
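Chen does not say which log curve the DPX files used, so the Python sketch below uses the classic Cineon-style conversion purely as a stand-in to show the log to linear to log round trip. The constants are the usual 10-bit Cineon reference values, and everything here is an assumption rather than the show's actual transform, which worked with 16 bit files.

```python
import math

# Classic Cineon reference points on a 10-bit scale (assumed stand-in curve).
WHITE, BLACK = 685.0, 95.0
DENSITY_PER_CODE, GAMMA = 0.002, 0.6

# Linear value of reference black before normalisation, used as an offset.
_OFFSET = 10.0 ** ((BLACK - WHITE) * DENSITY_PER_CODE / GAMMA)


def log_to_lin(code):
    """Convert a log code value to scene-linear, with white = 1.0 and black = 0.0."""
    raw = 10.0 ** ((code - WHITE) * DENSITY_PER_CODE / GAMMA)
    return (raw - _OFFSET) / (1.0 - _OFFSET)


def lin_to_log(lin):
    """Inverse of log_to_lin: convert scene-linear back to a log code value."""
    raw = lin * (1.0 - _OFFSET) + _OFFSET
    return WHITE + math.log10(raw) * GAMMA / DENSITY_PER_CODE


# Open the plate to linear, do the comp maths there, then write back out to log.
plate_code = 445.0
linear = log_to_lin(plate_code)
graded = linear * 2.0                 # a one stop exposure increase, done in linear
print(linear, lin_to_log(graded))
```

Working in linear is what makes operations like exposure changes and adding CG light behave physically; doing the same multiplication directly on the log code values would not.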

Read our Shooting diary of Spider-Man article here.

Around 100 different lenses used during filming were mapped with a camera grid, and Imageworks also calculated rolling shutters for individual camera bodies. “The RED EPIC sensor lets us shoot a larger area of picture more than is intended to be screened and use the extra real estate to do our alignment and convergence work and to generally do any post moves,” says 3D visual effects supervisor Rob Engle. “It was kind of like shooting with a Vista Vision camera. The framing lines that the DP saw were 10% smaller than what RED captured.”

In terms of the film’s depth budget, Engle notes, “We played with the depth. We allowed the quiet moments not to be overtaken by the stereo, but when you’re in Spider-Man’s shoes you really feel like you’re swinging through the canyons of New York. We definitely varied the depth treatment through the film. So we needed floating windows and virtually created a deeper world to bring it out into the audience.”

“The director wanted to capture the real essence of what it’s like to be Spider-Man, both from stuntmen performing stunts and with the 3D,” adds Engle. “I was at one shoot in downtown LA and we were following the stuntman on this car. He starts running through the street, he’s being chased by cop cars, he chases this pickup truck, holds on to the lift gate on the back and starts sliding and does a backflip into the bed of the truck all while it’s in the motion – our challenge in creating the virtual Spider-Man was to make it feel as real and visceral as the real actors.”

Pixomondo crafts effects for Williamsburg Bridge sequence

We talk to Pixomondo visual effects supervisor Boris Schmidt about his studio’s work on the bridge sequence in which Spidey first encounters The Lizard, some of the lizard skin transformations, and Dr Connors’ arm replacements.

fxg: Could you describe Pixomondo’s work for the Lizard chase on the bridge?

Schmidt: In the bridge sequence Pixomondo worked on, Spider-Man faces his enemy, The Lizard, for the first time. The Lizard is on a rampage, throwing cars over the side of the bridge and Spider-Man is trying to save all the cars. For this sequence, we had to completely recreate the Williamsburg Bridge, the whole environment and the cars all in CG.

Watch part of the bridge sequence here.

fxg: How did they film this sequence in terms of live action and what kind of 3D and set extension work was required?

Schmidt: They built a small portion of the bridge to have on set, and then we had to digitally extend the bridge. Ultimately, we decided to use only the foreground elements of the plates, like the actors and some of the cars, and not use the filmed parts of the bridge. We basically replaced the whole bridge because it was easier for us to do the lighting, matchmove and stereo work. We had about a dozen practical cars on set, essentially one lane of the bridge so we had to add additional lanes and the traffic going the other direction. The production built girders and pylons up until about 15 feet up in the air and everything else was CG extension. Everything on each coast was also CG and matte paintings. There were very few plates where we got everything in live action plates.

fxg: How did Pixomondo approach the lizard transformation work it had to do (both for Connors and the police)? What kind of human skin and lizard skin R&D was required?

Schmidt: We made sure we got a lot of digital scans of the SWAT actors for the face recreation in 3D. Also, during the shoot, they placed markers on their faces. The little dots were easy to paint out later on but helped us in tracking their face movement. We had to do the complete CG face recreation for each actor. In the concept phase, we went back and forth on details like how many scales the face was going to have and such. We really modeled the whole lizard face until the director, Marc Webb, was satisfied with the look and then we began defining transition points. When the blue ash hits the face, this is where the healing starts so the underlying human face is revealed in that area. There was a lot of timing and concept work involved.

For the skin, we got early shots from SPI where they did a similar task for reference. Based on this first look, we created our CG model, then there was some back and forth on details. We would give them an example and they gave us notes until we determined the final look. We were trying to control individual scales so we could more easily morph the characters.

fxg: The arm replacements are great invisible effects. Can you describe a typical shooting set-up and how the final shots were done?

Schmidt: Most of the arm replacement shots were stereoscopic so we had two plates, one for the left eye and one for the right eye. The actor, Rhys Ifans, wore a green sleeve for arm coverage so we needed to paint it out and replace everything that was behind the green arm. These particular shots weren’t complicated but they were tedious since we needed to make sure everything worked in 3D. We first tracked and matchmoved the arm movement, then we developed a CG stump for the missing arm. There were also a lot of different reflections we needed to paint out. In the lab, there is a lot of glass, metal and mirror-like objects so we needed to make sure the original green sleeve didn’t show in any of the reflections. For Dr. Connors’ lab coat, we needed to do a cloth simulation. When an actor has an intact arm, he moves differently than if it was just a stump. We had to animate the arm to make it feel more like a stump and less like a full limb.

All images and clips copyright © 2012 Columbia Pictures.
