Color management and color workflows have evolved over many years. In this article we trace the path from Cineon to the scene-referred linear OpenEXR format. Cineon is a backbone of the industry, but it is now over 18 years old, and perhaps it is time we moved to a more robust, flexible and accurate system.

We need to know where we are in color science terms so that visual effects pipelines can perfectly match CGI to the live action. If you can’t replicate the colorspace of the film’s images, how can you generate CGI images and composite them seamlessly into those images? How can CGI shots interact with live action shots if you can’t generate the same imagery ‘look’ in the computer, because you don’t know how or where the live action footage sits in colorspace?

Listen to Mike talk to Joshua Pines from Technicolor in his capacity as a member of the Academy. Joshua Pines is Vice President of Imaging Research and Development at Technicolor; here he speaks not on behalf of Technicolor but as a member of the Academy’s Science and Technology Council.

Ever since film became only a capture and display medium, with all post-production meaning digital manipulation, the industry has been in a constant battle to make sure that the director’s vision is correctly translated to the final screen. The aim was simple: keep data and processing time under control, stop the images drifting in color or degrading in quality, and simultaneously push the technology and the state of the art of filmmaking to make ever more arresting imagery that connects emotionally with an audience. From accurate skin tones on the side of your leading lady’s face, to the black levels of a CG robot, to the interaction of effects and non-effects shots, the challenge of color management has required some of the best minds in the industry.

Color management is a huge topic, but one aspect of particular interest is the transition from 8 bit files to scene-referred linear floating point files. This evolution follows both the rise in computing power described by Moore’s law and the simultaneous drop in the price of storage. 10 bit files once seemed huge: storing your files as 32 bit (RGB x 10 bits + 2 spare bits) rather than 24 bit (RGB x 8 bits) was an expensive 33% increase in file size, and an even bigger hit in computational time when moving from video to film processing. Yet today 64 bit files seem reasonable, moving through the pipeline with multiple additional passes, mattes and maps, and no one thinks of an OpenEXR 16 bit half-float file as exotic.
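The storage arithmetic is easy to check. The sketch below assumes a 2048 x 1556 (2K full aperture) frame, counts only uncompressed pixel data (no headers, no compression) and uses hypothetical helper names. It shows that going from 24 to 32 bits per pixel is a one-third increase, and that a half-float RGBA frame is twice the size of a 10 bit one.

```python
# Bits per pixel for the containers discussed above (uncompressed pixel data only).
BITS_PER_PIXEL = {
    "8-bit RGB (24 bit)": 3 * 8,
    "10-bit RGB packed in 32 bits": 3 * 10 + 2,
    "16-bit half-float RGBA (64 bit)": 4 * 16,
}

def frame_megabytes(bits_per_pixel, width=2048, height=1556):
    """Uncompressed size of one frame in megabytes (10**6 bytes).

    The default 2048 x 1556 resolution is an assumption (2K full aperture).
    """
    return width * height * bits_per_pixel / 8 / 1e6

def percent_increase(new_bits, old_bits):
    """Percentage growth when moving from old_bits to new_bits per pixel."""
    return (new_bits / old_bits - 1) * 100

for name, bpp in BITS_PER_PIXEL.items():
    print(f"{name:32s} {frame_megabytes(bpp):6.1f} MB per frame")

print(f"24 -> 32 bits per pixel: +{percent_increase(32, 24):.0f}%")
```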

But a DPX or OpenEXR file is really just a container. It is the way we interpret the world (the color spaces, gamma and associated mathematical approaches) that has really evolved. When Digital Domain held a private screening for a group of high-level directors to see if they could tell the difference between shots done on an 8 bit (yes, 8 bit) film pipeline and on a full 10 bit log (12 bit) pipeline, only one director spotted the difference when the same shots were projected. If that is the case, why has the industry pushed so hard to move to ever larger and more complex file formats?

The answer lies in what we now require these files to do. It was true that the ‘correct 8 bits’ hand-selected from a film scan could be intercut with film without a really noticeable difference, but back then we did not require as much processing to be done on the frames. Effects were heavily anchored in practical effects and miniatures. Color grading was done at the lab using printer lights, and look-alike stunt doubles hid their own faces to allow Arnold Schwarzenegger to ride a horse through the lobby of the Bonaventure Hotel in True Lies. We did not require a digitally generated Brad Pitt to age backwards while sitting on the body of another actor in perfect, Oscar-winning harmony, as we do in The Curious Case of Benjamin Button.

Video and the ‘right 8 bits’

In the early 90s, when Discreet’s Flame was first released as the then state of the art in digital film compositing, many film pipelines used LUTs to convert 10 bit Cineon film scans into 8 bit ‘linear’ files. These LUTs were destructive, literally picking the ‘right 8 bits’ from a much larger film scan, sometimes converting from Cineon Printer Density (CPD) colorspace to a video colorspace to allow feature film effects to be done.
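To see why such a LUT is destructive, here is a minimal sketch using a hypothetical straight-line 10 bit to 8 bit table. Real film-to-video LUTs were curves, but any table that maps 1024 input codes onto 256 outputs has to merge codes, and no inverse table can pull them apart again.

```python
# Hypothetical 10-bit -> 8-bit LUT: a plain linear rescale of the 0-1023 range.
# Real film-to-video LUTs were curves, but the many-to-one problem is the same.
lut = [round(code * 255 / 1023) for code in range(1024)]

distinct_outputs = len(set(lut))
print(distinct_outputs)  # only 256 distinct values survive

# Build the best possible inverse: map each 8-bit value back to the first
# 10-bit code that produced it. Every other code in that group is lost.
inverse = {}
for code, out in enumerate(lut):
    inverse.setdefault(out, code)

round_trip_errors = sum(1 for code in range(1024) if inverse[lut[code]] != code)
print(round_trip_errors)  # codes that cannot be recovered after the round trip
```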

The world back then was split much more between film and video. Video assumed a gamma correction, but many nicknamed it ‘linear’, since linear seemed to be the opposite of film, which used a log encoding scheme to squeeze often 12 bit non-real-time scans into the marginally smaller, Kodak-invented Cineon file format. The confusion from this mixing up of terms and concepts (file format, bit depth, gamma or not, film vs video, and true linear vs gamma-corrected footage) has continued to this day.

In reality, much high quality video originated on film and was transferred in real time to video on telecines such as the flying spot (CRT) Rank Cintel URSA and, later, the line array BTS/Bosch/Philips Spirit DataCine (SDC 2000). But the history of telecines dates back to John Logie Baird and the birth of broadcasting.

Unlike film scanners such as the Arri-scan, telecines were primarily concerned with producing a conversion from film to video. Film scanners were developed to maintain film’s density and complexity, with an eye to eventually reversing the process and recording back to film on, say, an Arri-laser recorder.

Cineon and DPX files: the days of 10 bit log

Cineon started life as a computer system that just happened to have a file format. It was designed by Kodak as an end to end digital intermediate system for film production, comprising a film scanner, tape drives, workstations running digital compositing software, and a film recorder. The system was first released in 1993 and was abandoned by 1997.

As part of this system Kodak’s fine engineering team (and at the time there was none finer) declared that the full film image could be captured digitally and stored in a 10 bit log file format called Cineon. Importantly, this was an intermediate format – it was designed as a film bridge. It was not designed for CGI nor any digital final delivery. It was meant to represent the “printing density”, that is, the density that is seen by print film. It is vital to understand that Cineon files were assumed to operate as part of a film chain keeping whatever values were originally scanned from your negative to be later reproduced on a film recorder. It was designed to retain the original neg’s characteristics such as color component crosstalk and gamma.

Film, unlike a digital sensor, requires light to pass through other layers of the emulsion to reach the layer recording its particular color. In a typical color negative the blue-sensitive layer sits on top, so for red light to be recorded by the red-sensitive layer it has to pass through a bunch of other layers, including the blue- and green-sensitive layers. This leads to a few key film characteristics, such as crosstalk: more mixing of the RGB values than, say, a CMOS sensor with individual blue, green and red photosites would produce. Of course, CMOS data then gets interpreted (matrixed), creating its own issues.

Conversion from film to video normally involves the concept of a “black point” and a “white point”. In video, white is all channels fully on: in 8 bits that would be 255, 255, 255. In film, white is more like a matte piece of white paper, but the film also records higher, whiter values, such as the ping off a shiny car. If the whitest value were used as white in the conversion to video, film’s greater latitude would mean all the ‘normal’ exposure values were pushed down to make room for these very hot whites, and the result would be an unreasonably dark image. This also reflects the fact that light is physically linear, but we as people perceive it in a non-linear fashion. For example, on a ramp that is linear in light intensity from black to white, the tone most non-industry professionals would pick as mid grey is only about 18% of the way from black to white.
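One standard way to put a number on this perceptual non-linearity is CIE 1976 lightness (L*), which runs from 0 for black to 100 for reference white. It is used here only as an illustration, not as a model the article itself relies on: an 18% grey comes out at an L* of about 49.5, roughly halfway, while 50% of the light already reads as about 76% lightness.

```python
def cie_lightness(y):
    """CIE 1976 L* for relative luminance y (0 = black, 1 = reference white)."""
    if y > (6 / 29) ** 3:
        # Cube-root segment used for all but the very darkest values.
        return 116 * y ** (1 / 3) - 16
    # Linear segment near black.
    return (29 / 3) ** 3 * y

print(round(cie_lightness(0.18), 1))  # 18% grey: perceptually about halfway
print(round(cie_lightness(0.50), 1))  # half the light looks much lighter than mid grey
```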

The common conversion points for a film to video file are therefore 95 (black) and 685 (white) on the 0-1023 scale. Pixel values above 685 are “brighter than white”, such as the pings on chrome or bright highlights. Pixel values below 95 similarly represent “blacker than black” values exposed on the negative; in practice these can descend as low as 20 or 30.
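These two points are enough to turn code values back into linear light. The sketch below uses the Kodak-style Cineon conversion that many compositing applications apply by default, assuming 0.002 printing density per code value and a negative gamma of 0.6; treat those constants as conventional defaults rather than properties of any particular stock, and the function names as hypothetical. Code 95 maps to 0.0, code 685 to 1.0, codes above 685 map to values well above 1.0, and codes below 95 go slightly negative.

```python
REF_WHITE = 685           # code value mapped to 1.0 (diffuse white)
REF_BLACK = 95            # code value mapped to 0.0
DENSITY_PER_CODE = 0.002  # assumed printing density per 10-bit code value
NEG_GAMMA = 0.6           # assumed gamma of the negative

def _gain(code):
    """Relative exposure for a code value, with REF_WHITE at 1.0."""
    return 10 ** ((code - REF_WHITE) * DENSITY_PER_CODE / NEG_GAMMA)

def cineon_to_linear(code):
    """Map a 10-bit Cineon code value to linear light, with black = 0 and white = 1."""
    black_offset = _gain(REF_BLACK)
    return (_gain(code) - black_offset) / (1 - black_offset)

for code in (20, 95, 445, 685, 1023):
    print(code, round(cineon_to_linear(code), 4))
```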

Cineon was hugely successful and immensely significant in the development of film effects and film production in general, as shown by its widespread use still today. As a file format, however, Cineon is somewhat limited, and in the 90s the DPX file format was developed from it. DPX can be thought of as a container within which Cineon-style (.cin) data is often found. DPX, or Digital Picture Exchange, is now the common file format for post-production interchange and visual effects work and is an ANSI/SMPTE standard (268M-2003).

The original DPX file format has been improved over time, and its latest version (2.0) is published by SMPTE as ANSI/SMPTE 268M-2003. Because the data inside is most commonly in the original Cineon style, DPX too represents the density of each color channel of a scanned negative in an uncompressed “logarithmic” image, preserving the gamma of the original camera negative as captured by the film scanner. A DPX file is what is most commonly used to grade a feature, for example, and the newer DPX format also allows a reasonable amount of metadata to travel in the same file: image resolution, colorspace details (channel depth, colorimetric specification, etc.), number of planes/subimages, creation date/time, creator’s name, project name, copyright information and so on. One problem, however, is that header information is sometimes inaccurate, or not used consistently by everyone. As with all metadata, the problem can be one of confidence.
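Because header fields cannot always be trusted, it can be worth sanity-checking them. This sketch reads only the first four fields of the DPX generic file header as laid out in SMPTE 268M (magic number, offset to image data, version string, total file size) and flags obvious inconsistencies. The function names are hypothetical, and a production reader would parse far more of the header.

```python
import struct

DPX_MAGIC_BIG = b"SDPX"     # magic as stored in a big-endian file
DPX_MAGIC_LITTLE = b"XPDS"  # the same magic number written little-endian

def read_dpx_generic_header(data: bytes) -> dict:
    """Parse the first fields of a DPX generic file header.

    Layout: magic (4 bytes), offset to image data (uint32),
    version string (8 bytes), total file size (uint32).
    """
    magic = data[0:4]
    if magic == DPX_MAGIC_BIG:
        order = ">"
    elif magic == DPX_MAGIC_LITTLE:
        order = "<"
    else:
        raise ValueError(f"not a DPX file (magic {magic!r})")
    (image_offset,) = struct.unpack(order + "I", data[4:8])
    version = data[8:16].split(b"\0", 1)[0].decode("ascii", "replace")
    (file_size,) = struct.unpack(order + "I", data[16:20])
    return {"byte_order": order, "image_offset": image_offset,
            "version": version, "file_size": file_size}

def header_warnings(header: dict, actual_size: int) -> list:
    """Simple consistency checks, since header fields are not always reliable."""
    warnings = []
    if header["file_size"] != actual_size:
        warnings.append("file size field does not match the actual size")
    if header["image_offset"] >= actual_size:
        warnings.append("image data offset points past the end of the file")
    return warnings

# Usage with a synthetic, otherwise empty 4096-byte big-endian header.
fake = (b"SDPX" + struct.pack(">I", 2048) + b"V2.0\0\0\0\0"
        + struct.pack(">I", 4096)).ljust(4096, b"\0")
hdr = read_dpx_generic_header(fake)
print(hdr["version"], header_warnings(hdr, actual_size=len(fake)))
```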

OpenEXR: Floating point – the holy grail

While Cineon and DPX are successfully used daily in production, a new format appeared at the start of the new millennium, OpenEXR. OpenEXR was created by Industrial Light and Magic (ILM) in 1999 and released to the public in 2003.

The history and team who primarily contributed is well documented as part of the OpenEXR community documentation. The ILM original OpenEXR file format was designed and implemented by Florian Kainz, Wojciech Jarosz, and Rod Bogart. The PIZ compression scheme is based on an algorithm by Christian Rouet. Josh Pines helped extend the PIZ algorithm for 16-bit and found optimizations for the float-to-half conversions. Drew Hess packaged and adapted ILM’s internal source code for public release and maintains the OpenEXR software distribution. The PXR24 compression method is based on an algorithm written by Loren Carpenter at Pixar Animation Studios.

ILM developed the OpenEXR format in response to the demand for higher color accuracy and control in effects. When the project began in 2000, ILM evaluated existing file formats, but rejected them for various reasons (according to openexr.com):

- 8 and 10 bit formats lack the dynamic range necessary to store HDR imagery.

- 16 bit integer-based formats typically represent color values from 0 (“black”) to 1 (“white”), but don’t account for over-range values as discussed above. “Preserving over-range values in the source image allows an artist to change the apparent exposure of the image with minimal loss of data, for example”.

- Conversely, “32 bit floating-point TIFF is often overkill for visual effects work”. While a 32 bit floating point would be more than sufficient precision and dynamic range for VFX images, it comes at the cost of twice as much storage compared to half-float, but for very marginal additional accuracy.

Now at version 1.7, OpenEXR is supported by most companies in the industry. But OpenEXR does not need to be half-float; it just most commonly is. In addition to 16 bit half-float, OpenEXR supports 32 bit float and 32 bit unsigned integer channels, for example.

OpenEXR is an open format, not tied to any one manufacturer or company and it is remarkable in several ways.

First and most importantly, the file format is floating point, most commonly half-float. Half-float is a 16 bit format: 1 sign bit (positive or negative), 5 exponent bits and 10 mantissa bits. For linear images, this format provides 1024 (2^10) values per color component per f-stop, and 30 f-stops (2^5 − 2), with an additional 10 f-stops with reduced precision at the low end.
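These numbers fall straight out of the bit layout, and Python’s struct module can decode half-floats directly (format code "e"). This sketch counts the representable values in one stop and prints the range limits: the largest finite half is 65504, normalized values reach down to 2^-14, and subnormals reach a further 10 stops down to 2^-24. The helper name is hypothetical.

```python
import struct

def half_from_bits(bits: int) -> float:
    """Decode a 16-bit pattern as an IEEE 754 half-precision float."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]

# Count the distinct half values in one f-stop, [1.0, 2.0).
values_in_stop = sum(1 for bits in range(0x10000) if 1.0 <= half_from_bits(bits) < 2.0)
print(values_in_stop)  # 1024 = 2**10, one per mantissa pattern

# Normalized exponents run from 1 to 30 (0 is subnormal, 31 is inf/NaN),
# giving 2**5 - 2 = 30 full-precision f-stops.
largest = half_from_bits(0x7BFF)             # largest finite half
smallest_normal = half_from_bits(0x0400)     # 2**-14
smallest_subnormal = half_from_bits(0x0001)  # 2**-24
print(largest, smallest_normal, smallest_subnormal)
```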

Second, being floating point, the format has one exceptionally useful mathematical property: it is inherently logarithmic in nature. This has nothing to do with encoding and everything to do with how numbers are expressed in floating point: the exponent effectively counts stops, and every stop gets the same number of steps.
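A quick way to see the ‘inherently logarithmic’ property: the gap to the next representable half-float doubles with every stop, so the step size relative to the value stays constant (2^-10 at each power of two). This minimal sketch uses hypothetical helper names and assumes positive inputs that are already exact half-float values.

```python
import struct

def half_bits(value: float) -> int:
    """Bit pattern of value after rounding it to half precision."""
    return struct.unpack("<H", struct.pack("<e", value))[0]

def next_half_up(value: float) -> float:
    """Next representable half-float above a positive, finite half-float value."""
    return struct.unpack("<e", struct.pack("<H", half_bits(value) + 1))[0]

for v in (0.25, 0.5, 1.0, 2.0, 4.0, 16.0):
    step = next_half_up(v) - v
    print(f"value {v:>6} step {step:.6g} relative step {step / v:.6g}")
```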

Finally, and very importantly, OpenEXR images can have an arbitrary number of channels, each with a different data type. OpenEXR is also extensible: developers can easily add new compression methods, and files can carry extra layers or mattes, each annotated with an arbitrary number of attributes, for example color balance information from a camera. Another example came in 2010 when, with the support of Weta in particular, support for stereoscopic workflows was added.
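OpenEXR’s naming convention groups channels into layers with dots, so a channel called diffuse.R belongs to the diffuse layer. The sketch below groups a hypothetical channel list that way in plain Python; it does not use the OpenEXR library, and both the function and the channel names are invented for illustration.

```python
from collections import defaultdict

def group_channels(channel_names):
    """Group OpenEXR-style channel names into layers.

    By convention "diffuse.R" belongs to layer "diffuse"; a name with no dot
    (such as "R") belongs to the default, unnamed layer "".
    """
    layers = defaultdict(list)
    for name in channel_names:
        layer, _, channel = name.rpartition(".")
        layers[layer].append(channel)
    return dict(layers)

# Hypothetical channel list for a CG render with extra passes and a matte.
channels = ["R", "G", "B", "A", "diffuse.R", "diffuse.G", "diffuse.B",
            "specular.R", "specular.G", "specular.B", "matte.crowd.Y"]
print(group_channels(channels))
```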

Thus OpenEXR is the state of the art, and industry experts and long-time senior color scientists such as Charles Poynton see it remaining adequate and central to the industry for years to come. He says “it is likely to last us at least 10 or 20 years.”

Scene linear workflow

Now that much of what is shot is no longer shot on film, it is worth examining the steps of an image moving from sensor to screen.

Scene linear, or scene referred, generically describes images that capture and respect a linear relationship between the pixel values and the original physical light intensity of the scene.

We don’t have a spectral way to record images; we only have a tristimulus, or red, green, blue, system. Most modern sensors are RGB CMOS chips: they have red, green and blue filtered light sensor points (photosites) on the chip. While these photosites read red, green or blue, each one reads only one of them. So in reality one could think of the CMOS chip as producing a single ‘image’ of the world, since for every pixel there is only one value, not a collective RGB. It is in effect a weird form of ‘black and white’ image, and of course unviewable. What makes the image useful is that we interpret each of the sensor points, knowing whether it filters for red, green or blue, and then interpolate the values to produce three RGB ‘images’. But even then this first stage, known as debayering, is not exactly decoding into a colorspace.
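Real debayering interpolates a full RGB value for every photosite, using anything from bilinear averaging to far more elaborate edge-aware methods. The simplest possible version, sketched here under the assumption of an RGGB pattern and with a hypothetical function name, just collapses each 2x2 block into one RGB pixel at half resolution. It is shown to make the ‘one value per photosite’ idea concrete, not as what any camera’s software actually does.

```python
def superpixel_debayer(mosaic):
    """Turn an RGGB Bayer mosaic (list of rows) into half-resolution RGB.

    Each 2x2 block of photosites
        R G
        G B
    becomes one RGB pixel: the red site, the mean of the two green sites,
    and the blue site. No interpolation, so resolution is halved.
    """
    height, width = len(mosaic), len(mosaic[0])
    if height % 2 or width % 2:
        raise ValueError("mosaic dimensions must be even")
    rgb = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            r = mosaic[y][x]
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2
            b = mosaic[y + 1][x + 1]
            row.append((r, g, b))
        rgb.append(row)
    return rgb

# A 2x4 patch: one value per photosite, a 'black and white' image of sorts.
mosaic = [[0.40, 0.30, 0.42, 0.32],
          [0.28, 0.10, 0.30, 0.12]]
print(superpixel_debayer(mosaic))
```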

Some call this first stage, moving from the raw Bayer image to a triplet of RGB values through debayering, moving the image into a ‘camera space’. But strictly speaking we don’t have a colorspace yet. The smartest choice at this stage, when picking an RGB triplet, is to use a set of primaries as close as possible to the native primaries of the sensor: you are not clipping or reducing the gamut, just moving to a set of primaries that retains as much information as possible. This camera space is not usable yet, but it is key, as it reflects the sensor.

The next stage is to matrix the image, mixing the channels together by multiplying them through a 3x3 matrix, to get into a color space. Which color space is chosen is critical.
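Matrixing is a 3x3 matrix multiply applied to every pixel. The coefficients below are hypothetical; real ones come from characterizing a specific sensor. They are chosen so that each row sums to 1.0, which keeps a neutral grey neutral, while saturated inputs get their channels mixed.

```python
# Hypothetical camera-RGB -> working-space matrix. Each row sums to 1.0 so that
# a neutral (equal R, G, B) input stays neutral after the matrix.
CAMERA_TO_WORKING = [
    [ 1.60, -0.45, -0.15],
    [-0.20,  1.35, -0.15],
    [ 0.05, -0.40,  1.35],
]

def apply_matrix(matrix, rgb):
    """Multiply a 3x3 matrix by an (r, g, b) column vector."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in matrix)

print(apply_matrix(CAMERA_TO_WORKING, (0.18, 0.18, 0.18)))  # grey stays (almost exactly) grey
print(apply_matrix(CAMERA_TO_WORKING, (0.30, 0.20, 0.10)))  # channels are mixed
```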

Film is not perfect for a scene linear workflow, as the negative placed a ‘fingerprint’ on the imagery that was carried into the Cineon file, regardless of the log encoding aspects of a Cineon file. If you come from a digital camera background you might ask, “what colorspace does film get scanned into?” But that is a deceptively complex question to answer. It is influenced by the toe and the shoulder of the film response curves; by the fact that film is transmissive, layers on layers, so there is crosstalk between the colors; by the film processing (think what cross processing or bleach bypass does to the image!); and of course by the actual stock: Kodak vs Fuji. And don’t forget that Cineon was designed as a film digital intermediate, to capture ‘film-ness’ in a digital file.

Digital capture can be a better candidate for a scene linear workflow, that is, a workflow that says “let’s work on this file with it referencing the source (real life) imagery and not the output.” In its purest form scene linear is a linear relationship between light intensity in the scene and a set of RGB values, with no bias toward, or consideration of, encoding for some output device. It is not designed to be an image that is viewed directly. It is defined as a baseline linear representation.
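One practical consequence of a truly linear relationship is that an exposure change becomes a simple multiplication: one stop up doubles every value, one stop down halves it, and values far above 1.0 are perfectly legal. A minimal sketch with a hypothetical function name:

```python
def adjust_exposure(linear_rgb, stops):
    """Change the exposure of scene-linear values; each stop doubles or halves the light."""
    gain = 2.0 ** stops
    return tuple(c * gain for c in linear_rgb)

grey_card = (0.18, 0.18, 0.18)  # 18% grey, scene linear
print(adjust_exposure(grey_card, +1))        # twice the light
print(adjust_exposure(grey_card, -2))        # a quarter of the light
print(adjust_exposure((4.0, 3.5, 2.0), -3))  # a highlight well above 1.0 comes back into range
```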

By contrast, moving into a Rec 709 colorspace is completely NOT scene linear; it is the opposite. We move the imagery into the workspace of HD monitors and make it refer to its destination. A scene linear workflow tries not to do that at all: it wants to reference only what was in front of the lens.
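For contrast, this is the Rec 709 (ITU-R BT.709) transfer function that encodes linear light for video. It is only defined for inputs between 0 and 1, so the sketch clips first: scene-linear mid grey (0.18) lands at about 0.41 of the signal range, and a highlight at 4.0 becomes indistinguishable from 1.0. The helper names are hypothetical.

```python
def rec709_oetf(linear):
    """ITU-R BT.709 OETF: linear light (0-1) -> non-linear video signal (0-1)."""
    if linear < 0.018:
        return 4.5 * linear  # linear segment near black
    return 1.099 * linear ** 0.45 - 0.099

def to_video(linear):
    """Clip to the 0-1 range BT.709 is defined over, then encode."""
    return rec709_oetf(min(max(linear, 0.0), 1.0))

for value in (0.0, 0.018, 0.18, 1.0, 4.0):
    print(value, round(to_video(value), 3))
```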

So which colorspace would a scene linear workflow go into? Great question.

Here we need to think about the camera companies, as they are central to this issue in practice.

Each camera company wants to sell more of its cameras. But the post-production industry would like the imagery to be in one common, interchangeable format. Think about that: in post we’d like not to care whose file is whose and just have one pipeline, while the camera manufacturers, to a point, want the opposite. They want their cameras to be different so you buy theirs and not just some other, cheaper version.

So what do the camera manufacturers do?

Most camera pipelines are flexible so it is not normally the case you are locked in to just one option, but their preference is actually fairly transparent, and their preference reveals the very DNA of each company.

The RED Digital Cinema Camera Company would recommend you go to its REDcolor2 colorspace. This is RED’s own solution, and you could say it reflects their ‘individuality’: their desire to be different and trailblazing, a ‘do it differently and better’ approach. It is such a RED solution, and they will argue very effectively that they know their cameras best and this is the best solution for them; and it’s true, REDcolor2 works really well for RED cameras.

Arri would recommend a Cineon-style approach, using Log C on the Alexa, which basically includes the toe and shoulder aspects of film but not the unique crosstalk we see in film. It also reflects their German film camera history. They love film, they know as much about it as anyone in the industry and they are very precise at dealing with it. This is such an Arri solution: innovative and effective, but respectfully built on the best of the past.

Sony takes yet another tack, and it comes from video: Sony really knows more about video than any other company. And Sony is extremely Japanese in seeking a standard, an agreement on which to place its mighty blockbuster footprint. Sony does not mind if the solution is a standard, as it was with CCIR 601 or Rec 709, because Sony knows (thinks) that it can ‘out-engineer’ anyone to produce the most professionally engineered, reliable solution. In the short term Sony favors its S-Log format, which is log but does not mimic film. In the medium to longer term, Sony was perhaps the first camera company to adopt the new Academy ACES standard. It is such a Sony solution: have a professional, high-end standard, which should be a level playing field, and then aim to implement that new standard better than anyone else.

As for the rest, one could go on with the generalizations: Panasonic follows Sony, Canon still can’t get past stills and Vision Research sort of follows RED (as this isn’t their primary market and they just want to get the best out of their cameras). Of course all of this is what one might call a gross generalization but there is more than a grain of truth in it also.

The difficulty with all of these different approaches is clear: it is hard to perfect such a multi-format pipeline. Most manufacturers make it remarkably easy to build a “pipeline primarily composed entirely of their own stuff,” jokes Charles Poynton. “They are setting it up so you can work within that (proprietary end to end) system and they are setting it up to some degree, so you can take whatever data metric they have chosen as the default of their camera and plug that into a monitor and get a good looking picture.” The challenge, then, is to have a workflow that accommodates the very real production fact that most productions and most companies have to deal with a huge range of cameras, formats and thus workflows, not just one.

ACES: Academy Color Encoding Specification

What is the ACES workflow and IIF colorspace that Sony has already adopted, even though it is not yet ratified or fully released?

A while ago the Academy of Motion Picture Arts and Sciences “decided to once again focus on the sciences part of their name,” muses Charles Poynton. The Academy is proposing a standard, primarily for feature film production and post, that would produce a new middle ground.

ACES, or Academy Color Encoding Specification, is under the umbrella of the Science and Technology Council of the Academy. It is actually part of the Image Interchange Framework, or IIF. ACES owes a lot to a technology donation from Industrial Light & Magic. The idea is that ACES files would be scene linear workflow imagery. But for ACES to work, it needs to sit in an even bigger framework that involves profiling cameras and rendering or outputting.

Instead of saying we have a standard that is a film digital intermediate (i.e. Cineon), the Academy is saying in effect, “Could we produce a new pipeline that is a known entity? Could we move or convert all the different digitally sourced files into this known new colorspace, give that new colorspace such high-end professional specs that we could do everything we want in it without compromise, and then get it out again to whatever you want?”

Could we make a pipeline that is manufacturer independent and yet not be a committee solution that was awkward, slow and thus pleased no one while trying to be everything to everyone?

The current thinking is as follows.

Each camera will be different; each camera has to be different. But it should be possible to publish a common high-end target and then get each of the camera makers to allow you to render or convert your images into that one well understood common space. Furthermore, this space would be scene linear: we would understand mathematically how we get there from the scene, so there is no secret sauce and no magic numbers. This new place is somewhere CGI can also render to very, very accurately. Each camera company will need to understand its own cameras to make the conversion, and clearly even in this common place some source material will be higher or lower resolution, noisier or quieter, with more or less dynamic range, but once we are in this common place all files are scene linear. The color blue is not defined by how it might be projected, and it will not be clipped or restrained by a small gamut. In fact, the ACES / IIF colorspace gamut is enormous. And this ‘ACES thing’ will sit in a slightly restricted version of an OpenEXR file, so we don’t all have to rebuild every piece of software we have.

Once we have graded, comped, matched or image processed our film in ACES, we then need to get it out of this utopian wonderland of flexibility and actually target it for a cinema, an HD monitor or whatever. So the Academy has also drafted a spec for what is called a renderer, which converts the files back to destination-referred imagery, for example standard Rec 709. It is important to note that this final conversion is again standardized. Each company does not get to render its own slightly, creatively different version. One conversion will match another, even when done on different boxes.

Stages of the ACES workflow

Values are directly proportional to the scene: there is a linear correspondence between the photons in front of the camera and the values in the middle of an IIF / ACES workflow. So part of the secret of the workflow is understanding that we need to strip off any ‘special sauce’ that may have been applied to the original sensor data to make the image look cool, or better in the eyes of a camera manufacturer.

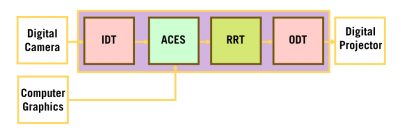

The IIF PROCESS

START: IDT (Input Device Transform)

Sensors are essentially linear, but camera footage often carries a curve such as S-Log or Log C, so the first step is the IDT, whose job is to undo that curve.
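The curve-undoing half of an IDT can be sketched for Log C. The constants below are, to the best of our knowledge, ARRI’s published Log C (v3) parameters for EI 800; other EI settings use different values, so check them against ARRI’s documentation before relying on them. A complete IDT would also include a matrix from the camera’s primaries into ACES, which is not shown, and the function names are hypothetical.

```python
import math

# Assumed ARRI Log C (v3) parameters for EI 800.
CUT, A, B, C, D, E, F = 0.010591, 5.555556, 0.052272, 0.247190, 0.385537, 5.367655, 0.092809

def logc_encode(x):
    """Scene-linear value -> Log C signal (0-1)."""
    return C * math.log10(A * x + B) + D if x > CUT else E * x + F

def logc_decode(t):
    """Log C signal (0-1) -> scene-linear value: the 'undo the curve' step of an IDT."""
    if t > E * CUT + F:
        return (10 ** ((t - D) / C) - B) / A
    return (t - F) / E

print(round(logc_encode(0.18), 3))   # mid grey sits around 0.391 of the signal range
print(round(logc_decode(0.391), 3))  # and decodes back to about 0.18
```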

MIDDLE: ACES

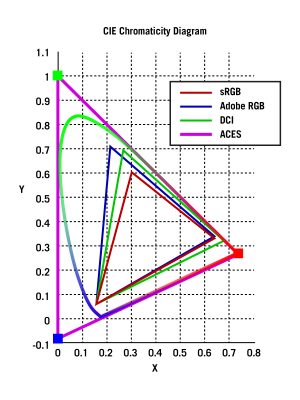

This is the middle of the flow diagram. Here we think of the gamut as being so huge (compared to, say, Rec 709 or Adobe RGB) that it is virtually unlimited. It may sound odd, but the ACES color space is vast and thus great for doing mathematical operations in. You will find it damn near impossible to hit the walls and clip or limit color when grading or image processing in the ACES colorspace; its gamut is just that big.
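To put a rough number on ‘huge’, this sketch compares the areas of the ACES (AP0) and Rec 709 primary triangles on the CIE 1931 xy chromaticity diagram, using the AP0 chromaticities from SMPTE ST 2065-1. xy area is not perceptually uniform, so treat the ratio (about 3.5x) as indicative only. AP0’s green and blue primaries sit outside the locus of real colors, which is how the triangle manages to enclose the whole visible spectrum. The helper names are hypothetical.

```python
# CIE 1931 xy chromaticities of the red, green and blue primaries.
ACES_AP0 = [(0.7347, 0.2653), (0.0000, 1.0000), (0.0001, -0.0770)]
REC709 = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]

def triangle_area(points):
    """Shoelace formula for the area of a triangle in the xy plane."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2

def contains(triangle, point):
    """True if point lies inside (or on an edge of) the triangle."""
    def side(a, b, p):
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])
    a, b, c = triangle
    signs = [side(a, b, point), side(b, c, point), side(c, a, point)]
    return all(s >= 0 for s in signs) or all(s <= 0 for s in signs)

ratio = triangle_area(ACES_AP0) / triangle_area(REC709)
print(f"AP0 covers {ratio:.1f}x the xy area of Rec 709")
print(all(contains(ACES_AP0, p) for p in REC709))  # Rec 709 sits entirely inside AP0
```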

The ACES colorspace is a very wide color space. “It is not just wide it is ridiculously, extremely, wide – it is a color space that encompasses the entire visible spectrum and more,” explained Joshua Pines (speaking as a member of the Academy).

END: RRT Reference Rendering Transform / ODCT Output Device Color Transform

The final stage is an inverse transform that not only renders the image out of ACES but also targets something that will give us a wonderful ‘film-like’ image in the theater viewing environment. While the ACES images in the middle have conceptually unlimited gamut and dynamic range, the final output is a specific, limited encoding for a particular output device. It literally accommodates the fact that the viewing environment is much darker than the original scene was. For example, the light levels outside in the sun are simply much brighter than the same scene filmed and then displayed in your living room. We think of them as looking the same, but of course your living room, even with the TV on, has much less light than the location had while filming, and accounting for this is vital for accurate viewing.

Will it work?

This is the million dollar question, and the jury is still out. After all the draft still has to move from the Academy to SMPTE to become a standard. The Academy is not a standards body.

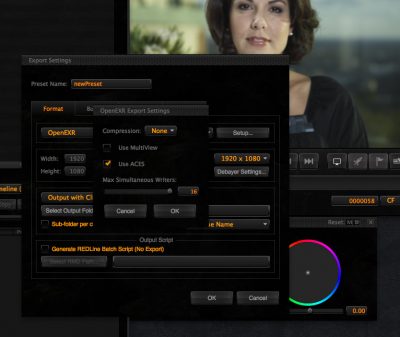

But it looks like it really will take off. Even before it is published, companies are including ACES as an option. For example, even though RED may have a better solution, you can export .r3d files into ACES/OpenEXR today in RedCineX.

Is ACES everything? No; it is important to understand what it is not. It is not a RAW format. To convert to ACES you need, by definition, to pick a colorspace, so things like color temperature, white balance and saturation all need to be committed to. It could be argued that this is splitting hairs, since converting a RAW file like Arri-RAW or RED Raw to a floating point ACES file puts the image into a very flexible floating point space, where it can still be adjusted and edited later. Unlike converting to, say, Rec 709, where you lock in a lot of aspects, once converted to an OpenEXR ACES file one should still be able to grade or convert to different-looking images without much trouble (just as you can change the color temperature of a RAW file without much trouble). But an OpenEXR ACES file is no longer a RAW file, and many people like keeping their files in RAW format.

Ray Feeney, co-chair of AMPAS’ Science and Technology Council and one of the SciTech industry leaders, discussed the ACES / IIF program for colorspace and color management at Siggraph this year, and it is safe to say that the Science and Technology Council, and then SMPTE, are actively seeking industry input to make this work.

How will it work?

OpenColorIO (OCIO) and Nuke

Sony Pictures Imageworks (SPI) has for many years managed its color pipeline with OpenColorIO. Based on development work started in 2003, OCIO enables color transforms and image display to be handled consistently across multiple graphics applications. Unlike other color management solutions, OCIO is geared towards motion-picture post-production, with an emphasis on visual effects and animation color pipelines.

OpenColorIO will soon appear as standard in all of The Foundry’s products, such as Nuke 6.3 v3.

Significantly, OpenColorIO supports a wide range of color management approaches and pipelines, including the new ACES / IIF Academy standard in its draft 1.0 form.

This means that The Foundry has an end to end, company-wide, ACES-friendly workflow built on SPI’s real-world production experience, which dovetails perfectly with its OpenEXR Nuke pipeline. We have not yet had a chance to test the RRT implementation, but SPI’s own commitment to open source makes it a safe bet that their implementation will conform fully to the ACES/IIF draft.

Nuke already converts every image into floating point linear light, as part of the Read node. The Read node applies a LUT to remove gamma corrections and to convert from log space where needed. But while The Foundry has done a lot of work on gamma-correct, linear light maths, Nuke’s colorspace gamut mapping is not as strong; this will change with the adoption of OCIO.

Autodesk Flame

Autodesk’s newest release of Flame also supports a scene linear workflow. Autodesk has some of the strongest reasons to like scene linear workflow and an ACES-style approach, since it is the major player in 3D animation, and VFX CGI applications are some of the strongest motivations for this whole approach to workflow.

Autodesk is also a manufacturer of color grading systems, with Lustre, so it has a dog in every fight in this workflow. It is uniquely placed to provide an end to end solution, and if the implementations in Flame 2011 and 2012 are anything to go by, Autodesk understands the issues very well. The recent Flame implementation has helped Autodesk’s compositing efforts catch up massively.

Flame has new workflows for scene referred methods of working, including an advanced LUT editor and new Photomap tools, since one function that is related, and some would say key, to dealing with these workflows is ‘tone mapping’.

The process of tone mapping aims to take a scene referred image and define how it should become an output referred one, because images ultimately require an output device to be seen! “The photographic chain is a very familiar example of an actual tone mapping system. It takes the information of a scene, and then applies a series of transforms to the colours so that the perception of the image, once printed on paper (or projected on a film screen), would appear perceptually similar (or plausible) to the original scene in specific viewing conditions,” explains Autodesk’s Systems products Philippe Soeiro.

On Photomap Soeiro explains that it “actually uses the photographic analogy to drive this process (from Scene Referred to Output Referred). Essentially Photomap allows you to build your custom photographic chain, and dissociates the characteristics of the photographic system (your negative if you like), from the final output device characteristics, but combines them together to deliver the tone mapped image. The Encoding defines the typical output characteristics and implicit viewing conditions that come with it (DCI projector, sRGB monitor….)”.
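The separation Soeiro describes, a ‘photographic system’ that compresses scene values followed by an output encoding for a particular device, can be sketched in a few lines. This is not Photomap or the ACES RRT: it swaps in the simple global Reinhard curve L / (1 + L) as a stand-in tone curve, assumes an sRGB monitor as the output device, and uses hypothetical function names.

```python
def reinhard(linear):
    """Stand-in 'photographic system': compresses scene values of any size into 0-1."""
    return linear / (1.0 + linear)

def srgb_encode(display_linear):
    """Output device encoding: sRGB transfer function for a 0-1 display-linear value."""
    if display_linear <= 0.0031308:
        return 12.92 * display_linear
    return 1.055 * display_linear ** (1 / 2.4) - 0.055

def scene_to_output(scene_linear, exposure_stops=0.0):
    """Scene referred -> output referred: expose, tone map, then encode for the display."""
    exposed = scene_linear * 2.0 ** exposure_stops
    return srgb_encode(reinhard(exposed))

for value in (0.0, 0.18, 1.0, 16.0, 1000.0):
    print(value, round(scene_to_output(value), 3))
```

Keeping the tone curve and the display encoding as separate functions means either can be swapped (a different curve, or a DCI projector instead of an sRGB monitor) without touching the other.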

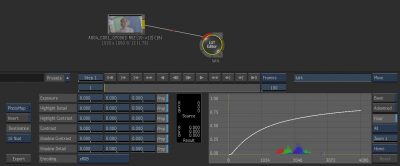

RED: RedCineX

As mentioned above, RedCineX supports exporting OpenEXR in ACES. This is a very interesting workflow. The Red Digital Cinema Camera Company has pioneered multisampled HDR film production with its HDRx solution. By combining two exposures, HDRx achieves a much larger dynamic range, and does so at up to 5K resolution at high frame rates. RedCineX allows these two frames to be time aligned with Magic Motion, and the resulting single frame has vast grading latitude. This file is well suited to a scene linear workflow, and while it can be output as an OpenEXR with REDcolor2, it can also be exported as an ACES file. Anecdotal evidence suggests most RED users are not yet adopting this workflow, but it will be interesting to see whether that changes as more people and post pipelines adopt full ACES support.
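The general principle behind combining two exposures can be sketched in a few lines. This is a generic exposure merge written for illustration, not RED’s HDRx or Magic Motion algorithm: it assumes both samples are already linear and normalized so that 1.0 means the sensor clipped, that the second (X) exposure sits a known number of stops under the first (A), and it ignores the motion differences between the two exposures that a real blend has to handle. The `knee` parameter is a hypothetical crossfade threshold.

```python
def merge_exposures(a: float, x: float, stops_under: float = 3.0, knee: float = 0.95) -> float:
    """Merge a normal exposure `a` with an underexposed sample `x` of the same pixel.

    Both inputs are linear, with 1.0 meaning the sensor clipped.
    Returns a scene-linear value on A's exposure scale, which may exceed 1.0.
    """
    x_scaled = x * 2.0 ** stops_under  # bring the X exposure up to A's scale
    if a <= knee:
        return a              # A is cleaner in shadows and midtones, so keep it
    if a >= 1.0:
        return x_scaled       # A is clipped: only X still holds highlight detail
    w = (a - knee) / (1.0 - knee)  # crossfade from A to X as A nears clip
    return (1.0 - w) * a + w * x_scaled


if __name__ == "__main__":
    # A highlight four times brighter than A can record, captured 3 stops under by X.
    print(merge_exposures(a=1.0, x=4.0 / 8.0))  # prints 4.0
```

The merged value of 4.0 is exactly the kind of above-white, scene-referred number that an OpenEXR file can hold and a 10-bit display-referred file cannot.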

One problem RED has suffered from, rightly or wrongly, is a perception that its workflow is more complex than others. A move to OpenEXR makes the workflow simpler and more robust. ‘Transcoding’ is no longer as big a source of varied results in a RED workflow, since (arguably) all of the image is carried forward into post-production. A scene linear workflow reduces the chance for someone to screw up a RED pipeline, while also moving away from the native .r3d format to the far more widely supported OpenEXR.

In production

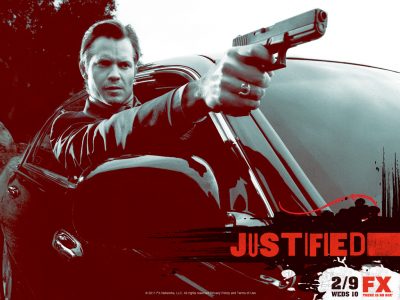

This year, Encore Hollywood posted the first episodic show in an IIF/ACES workflow: the second season of Justified.

In a Film&Video article by Jaclynne Gentry, the chair of the ASC Technology Committee, Curtis Clark, ASC, was quoted as saying, “If you have a digital camera that’s capable of recording wide dynamic range like film, but you then do a transform that puts it in a limited color-gamut space like Rec. 709, you’re going to clip highlights.” He also added, “All the detail that you know is there suddenly doesn’t appear to be there. So how do you maintain the full potential within a workflow all the way to the finished output?”
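Clark’s point can be shown numerically. The sketch below assumes the ITU-R BT.709 camera transfer function and a 10-bit legal-range signal (code values 64 to 940), and it only models clipping of tonal range, not of color gamut. Any scene-linear value above diffuse white (1.0) is clamped before encoding, so highlights two and eight times brighter than white end up on the same code value: the detail “suddenly doesn’t appear to be there”.

```python
def bt709_oetf(L: float) -> float:
    """ITU-R BT.709 opto-electronic transfer function for L in [0, 1]."""
    if L < 0.018:
        return 4.5 * L
    return 1.099 * L ** 0.45 - 0.099


def encode_rec709_10bit(scene_linear: float) -> int:
    """Clamp to [0, 1], apply BT.709, and quantize to the 10-bit legal range (64-940)."""
    L = min(max(scene_linear, 0.0), 1.0)  # everything above 1.0 is thrown away here
    return round(64 + bt709_oetf(L) * (940 - 64))


if __name__ == "__main__":
    for scene in (0.18, 1.0, 2.0, 8.0):
        print(f"scene {scene:5.2f} -> code value {encode_rec709_10bit(scene)}")
```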

“It’s a major difference,” said cinematographer Francis Kenny, ASC. “It’s not just in the highlights and the shadows. There’s a range in the midtones where you can see a difference in the gradations and shades of grey.”

In the case of Encore, one of Hollywood’s most respected post houses, the workflow was as follows. The show was shot on the Sony F35 or SRW-9000PL and converted from the camera RGB image to 10-bit 4:4:4 S-Log/S-Gamut encoding for recording on tape (or to 10-bit DPX files).

For color grading, those 10-bit images are transformed via an IDT (Input Device Transform) to 16-bit data in OpenEXR files. In this process, the S-Log camera data is linearized (using a one-dimensional LUT) and the S-Gamut RGB data is converted to the ACES RGB color gamut via a 3×3 matrix color transform. From there the post pipeline is OpenEXR (ACES), except that at the time the Lustre used to grade the material could not natively read ACES OpenEXR files. So for this program, the data in the 16-bit linearized ACES-encoded files was “quickly re-encoded into a log format,” Clark says, so that Lustre’s log toolset could be used for grading rather than its more video-centric (i.e., lift-gain-gamma) linear adjustments, which aren’t appropriate for the wide-gamut ACES data. The data was re-linearized after grading.
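The two IDT steps described here, a 1D LUT to linearize S-Log and a 3×3 matrix into the ACES gamut, can be sketched as follows. Several things are assumptions: the curve uses the S-Log (version 1) formula as we understand Sony’s published form, the normalized signal is assumed to map linearly onto 10-bit code values 64 to 940, and `S_GAMUT_TO_ACES_PLACEHOLDER` is a made-up matrix (rows summing to 1 so neutrals stay neutral), not the real S-Gamut to ACES coefficients. Python’s `struct` half-precision format (`"e"`) stands in for the 16-bit half floats written to OpenEXR.

```python
import struct


def slog_to_linear(code_value: int) -> float:
    """ASSUMPTION: S-Log (v1) curve constants; verify against Sony's documentation."""
    y = (code_value - 64) / (940 - 64)  # assumed 10-bit code value -> normalized signal
    return 10.0 ** ((y - 0.616596 - 0.03) / 0.432699) - 0.037584


# The 1D LUT: one linear value per possible 10-bit code value.
SLOG_LUT = [slog_to_linear(cv) for cv in range(1024)]

# PLACEHOLDER 3x3 matrix, NOT the published S-Gamut -> ACES values.
# Each row sums to 1.0, so equal R, G, B stay equal (neutral greys stay grey).
S_GAMUT_TO_ACES_PLACEHOLDER = (
    (0.80, 0.15, 0.05),
    (0.05, 0.90, 0.05),
    (0.02, 0.08, 0.90),
)


def to_half(value: float) -> float:
    """Round to IEEE 754 half precision, as stored in a 16-bit OpenEXR channel."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


def idt(rgb_codes: tuple) -> tuple:
    """10-bit S-Log/S-Gamut code values -> half-float linear values in the placeholder gamut."""
    linear = [SLOG_LUT[c] for c in rgb_codes]            # step 1: 1D LUT linearization
    aces = [sum(m * c for m, c in zip(row, linear))      # step 2: 3x3 matrix
            for row in S_GAMUT_TO_ACES_PLACEHOLDER]
    return tuple(to_half(v) for v in aces)


if __name__ == "__main__":
    # Under the assumed mapping, code value 379 is close to 18% grey.
    print(idt((379, 379, 379)))
```

Because the LUT is precomputed for all 1024 code values, linearization is a table lookup per channel; only the matrix does arithmetic per pixel.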

Clearly, as companies such as Autodesk adopt ACES principles, this intermediate step of converting from linear to log and back will no longer be required.
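The reason for the log round trip on Justified is that grading controls behave photographically in a log encoding: an offset applied to log values is a multiplication (an exposure change) on the linear data underneath. The sketch below uses a hypothetical pure log2 shaper (mid grey at 0.5, 16 stops across the 0 to 1 range), not Lustre’s actual log encoding, to show that a one-stop offset in log doubles every linear value, highlights included, and that converting back to linear loses nothing.

```python
import math

MID_GREY = 0.18
STOPS_PER_UNIT = 16.0  # hypothetical: the 0-1 log range spans 16 stops


def lin_to_log(x: float) -> float:
    """Hypothetical log2 shaper: 0.18 -> 0.5, one stop = 1/16 of the range."""
    return 0.5 + math.log2(max(x, 1e-10) / MID_GREY) / STOPS_PER_UNIT  # log needs x > 0


def log_to_lin(y: float) -> float:
    """Exact inverse of lin_to_log for positive inputs."""
    return MID_GREY * 2.0 ** ((y - 0.5) * STOPS_PER_UNIT)


def offset_grade(x: float, offset: float) -> float:
    """Re-encode to log, apply a printer-light style offset, then re-linearize."""
    return log_to_lin(lin_to_log(x) + offset)


if __name__ == "__main__":
    one_stop = 1.0 / STOPS_PER_UNIT
    for x in (0.01, 0.18, 5.0):
        print(x, "->", offset_grade(x, one_stop))  # each value is doubled
```

A lift applied directly to the linear values would instead add the same amount everywhere, which shifts shadows drastically and highlights hardly at all; that is why video-style controls suit wide-range ACES data poorly.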

Summary

Kodak contributed so much to the industry with Cineon, but film stocks have advanced since then, and modern stocks have themselves outgrown the 10 bit log Cineon encoding. Modern stocks are much better than the ones Kodak itself based Cineon on. Nor have computer storage and performance stood still: today we can afford to devote much more processing power and disk space to post-production. OpenEXR is also very GPU friendly, and floating-point performance on modern chips is now almost as good as integer performance, which was not the case when Kodak chose an integer (non-floating point) 10 bit log file format.
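A quick calculation shows how little headroom the 10 bit log format leaves compared with half float. The sketch uses the widely used Cineon-to-linear convention (reference black at code 95, reference white at 685, 0.002 density per code value, negative gamma 0.6) with a black offset so that code 95 maps to 0.0; other conversions exist, so treat the exact numbers as one common interpretation. Under it, the top code value 1023 decodes to only about 13.5 times diffuse white, while the largest finite 16-bit half float is 65504.

```python
REF_BLACK = 95           # code value of reference black
REF_WHITE = 685          # code value of reference (diffuse) white
DENSITY_PER_CODE = 0.002
NEGATIVE_GAMMA = 0.6
HALF_MAX = 65504.0       # largest finite IEEE 754 half-precision value


def cineon_to_linear(code_value: int) -> float:
    """Common Cineon 10-bit log -> linear conversion, normalized so 95 -> 0.0 and 685 -> 1.0."""
    black = 10.0 ** ((REF_BLACK - REF_WHITE) * DENSITY_PER_CODE / NEGATIVE_GAMMA)
    gain = 10.0 ** ((code_value - REF_WHITE) * DENSITY_PER_CODE / NEGATIVE_GAMMA)
    return (gain - black) / (1.0 - black)


if __name__ == "__main__":
    top = cineon_to_linear(1023)
    print(f"10-bit Cineon max: {top:.2f} x diffuse white")
    print(f"half float max:    {HALF_MAX:.0f}")
```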

Scene linear workflow built on OpenEXR now seems to be the de facto direction the entire industry is heading. It is likely, though by no means certain, that the Academy ACES/IIF scheme will figure very prominently, and that over the coming 12 to 18 months we will see a dramatic up-shift in ACES implementation.

Find out more about color workflows this term at fxphd.com as part of the Background Fundamentals series.

One reason the DPX format became the gold standard for telecine is that it carries timecode in its metadata. Since it is crucial that we are able to track every frame from offline to finish, I wonder how timecode fits into a workflow based on OpenEXR. A few years ago, an Autodesk software engineer told me that OpenEXR could contain timecode, but only as a “custom attribute”. This means that different apps would probably never read/write timecode the same way (as opposed to the standardized format used in DPX). So, does anyone know how timecode is tracked within the ACES/OpenEXR system? Also, I’ve read that the OpenEXR standard is going through some revisions, so I wonder if timecode and other critical metadata will become standardized in future versions.

The latest IlmImf library has a KeyCode class, which is used to identify a film frame.

See the comment in the ImfKeyCode.h header file:

```cpp
//-----------------------------------------------------------------------------
//
// class KeyCode
//
// A KeyCode object uniquely identifies a motion picture film frame.
// The following fields specify film manufacturer, film type, film
// roll and the frame's position within the roll:
//
//     filmMfcCode     film manufacturer code
//                     range: 0 - 99
//
//     filmType        film type code
//                     range: 0 - 99
//
//     prefix          prefix to identify film roll
//                     range: 0 - 999999
//
//     count           count, increments once every perfsPerCount
//                     perforations (see below)
//                     range: 0 - 9999
//
//     perfOffset      offset of frame, in perforations from
//                     zero-frame reference mark
//                     range: 0 - 119
//
//     perfsPerFrame   number of perforations per frame
//                     range: 1 - 15
//
//                     typical values:
//
//                         1 for 16mm film
//                         3, 4, or 8 for 35mm film
//                         5, 8 or 15 for 65mm film
//
//     perfsPerCount   number of perforations per count
//                     range: 20 - 120
//
//                     typical values:
//
//                         20 for 16mm film
//                         64 for 35mm film
//                         80 or 120 for 65mm film
//
// For more information about the interpretation of those fields see
// the following standards and recommended practice publications:
//
//     SMPTE 254   Motion-Picture Film (35-mm) - Manufacturer-Printed
//                 Latent Image Identification Information
//
//     SMPTE 268M  File Format for Digital Moving-Picture Exchange (DPX)
//                 (section 6.1)
//
//     SMPTE 270   Motion-Picture Film (65-mm) - Manufacturer-Printed
//                 Latent Image Identification Information
//
//     SMPTE 271   Motion-Picture Film (16-mm) - Manufacturer-Printed
//                 Latent Image Identification Information
//
//-----------------------------------------------------------------------------
```
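To show how those fields fit together, here is a small Python sketch that validates a key code against the ranges listed in the header comment and works out a frame position. `KeyCodeSketch` is a hypothetical stand-in, not the IlmImf `Imf::KeyCode` API, and the frame arithmetic (perforations counted as count × perfsPerCount plus perfOffset, divided by perforations per frame) is an assumed reading of the field descriptions.

```python
from dataclasses import dataclass

# Field ranges copied from the ImfKeyCode.h comment.
RANGES = {
    "film_mfc_code": (0, 99),
    "film_type": (0, 99),
    "prefix": (0, 999999),
    "count": (0, 9999),
    "perf_offset": (0, 119),
    "perfs_per_frame": (1, 15),
    "perfs_per_count": (20, 120),
}


@dataclass(frozen=True)
class KeyCodeSketch:
    """Hypothetical Python mirror of the KeyCode fields (not the IlmImf class)."""
    film_mfc_code: int
    film_type: int
    prefix: int
    count: int
    perf_offset: int
    perfs_per_frame: int
    perfs_per_count: int

    def __post_init__(self) -> None:
        # Reject any field outside the range documented in the header comment.
        for name, (low, high) in RANGES.items():
            value = getattr(self, name)
            if not low <= value <= high:
                raise ValueError(f"{name}={value} outside {low}-{high}")

    def frame_index(self) -> int:
        """ASSUMED reading: total perforations divided by perforations per frame."""
        perfs = self.count * self.perfs_per_count + self.perf_offset
        return perfs // self.perfs_per_frame


if __name__ == "__main__":
    # 35mm, 4 perforations per frame, 64 perforations per count (one foot).
    kc = KeyCodeSketch(film_mfc_code=12, film_type=34, prefix=567890,
                       count=1234, perf_offset=20, perfs_per_frame=4, perfs_per_count=64)
    print(kc.frame_index())
```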

Just finished a short film with a full ACES pipeline, using our own custom-created IDT for the Canon T2i, and it worked like a charm! It gave us a lot of flexibility once we finally got our heads wrapped around what’s going on with the color image encoding and the scene-to-display colorimetry rendering.

Trailer: http://lunebateaux.com/muffet
