Facial scanning has become ubiquitous in visual effects, but so far most efforts have focused on reconstructing the skin. Eye reconstruction, on the other hand, has received very little attention. Even the best state-of-the-art methods are cumbersome for the actor, time-consuming, and require carefully set up and calibrated hardware.

Disney Research in Zurich has been addressing the problem of realistic eyes for a couple of years now, led in great part by the research efforts of Pascal Bérard and a team of researchers in Switzerland. Their initial efforts were to develop and capture a much more accurate eye model, far more accurate than most previous attempts. The second stage was to use this complex and rich data set to make a fast and very simple, yet accurate, eye generation tool. The first stage was published last year and the second this year at Siggraph. This second stage is now being used in production in films such as Doctor Strange (see our story here).

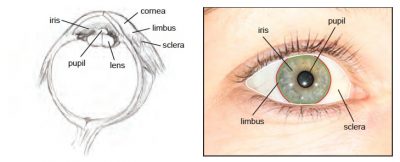

The problem of capturing an accurate eye may ‘at first glance’ seem easy, but it is no accident that most facial scanning systems produce digital faces with no eyes. Most facial reconstruction techniques use photography and often photogrammetry to rebuild the skin of the face. Your eyes have two features that do not work easily with such approaches. Firstly, the eye is wet and curved. This means there are loads of reflective highlights and pings, which are beautiful but confuse a photogrammetry solution. The second reason is that under that layer of wet, shiny reflectiveness is a lens, so the eye does all sorts of refraction and light bending that again confuses a system that may try to triangulate a point on the side of your iris.

Disney Research in Zurich solved all of these problems and went even further. Most eye models simply scale a pupil larger or smaller. The Swiss team actually sampled the iris opening and closing (due to light levels) to capture how the iris sphincter muscles change the iris. The iris is like a carpet around the pupil so as it expands and contracts there are tiny circular and radial folds in the iris itself. This level of detail provides very different detailed maps of the iris. Not only is your iris unique but the level of fibrous material of the iris is very much linked to eye colour. Some eye rigs just tint the iris to match the person, but the colour, detail and muscles of the iris are very much unique to your eyes.

The team also made sure to get the ‘whites of their eyes’ right. The sclera, or white of your eye, has complex veins. While many artists may add some degree of bloodshot eyes to a digital face, they are rarely accurate. The veins in your eyes are not uniform; most noticeably, they are visible in layers. There are veins in the sclera itself, but the eyeball also sits in the head beneath a layer called the conjunctiva, which can have veins of its own. These conjunctival blood vessels are normally redder in colour as they are closer to the surface. This does not only affect a still image: the sclera and the conjunctiva can also move differently. Simply adding one level of red veins or vessels on your eye model fails to capture these differences.

This level of detail may seem excessive, but eyes are so important to non-verbal communication that tiny changes in wetness can affect an actor’s performance. Redness of the eyes can signal everything from emotion to fatigue. Humans are highly developed to spot these differences even if we can’t explain in words what we are seeing. We have often heard the criticism of digital faces that the eyes are dead.

How they do it:

There are two key Disney Research eye papers. The first outlines how to model and texture a really high resolution eye. The second, which followed a year later, uses the data gathered with the first paper’s method to make new high resolution eyes more quickly. The newer paper doesn’t aim to build a 3D eye just from images, but rather from the data and parameters in a library of high res eyes plus only one good new input image. This means the new paper can produce radically more accurate eye models without taking as long or being as intrusive as the original approach.

Siggraph 2015: Part 1

How do I know?

My eyes were some of the eyes scanned at very high resolution. This allows incredibly talented but time-poor film stars to get their eyes reproduced in a fraction of the time today! As fxguide published last year, we visited the team in Zurich as part of the Digital Human League Wiki human project. In addition to doing some great Medusa face scanning, I got the full eye treatment.

For my eye we used the original detailed scanning approach that required me to lie down in a neck brace and have lights shone in one of my eyes while the other eye was photographed with a bank of SLRs. By illuminating my right eye, my left iris would contract in sync (as your pupils are synced) and my left eye could be scanned with different pupil diameters.

This pupil work is really important, as this approach not only captured the geometry of my eye but the unique way my iris contracted and responded to light.

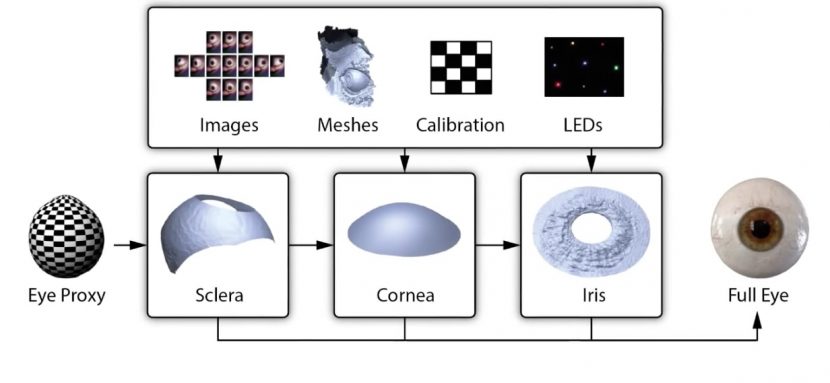

The scanning approach cleverly relied on reflected coloured LEDs to work out the shape of the main eye ball or sclera and also the corneal bulge. The images produced a series of meshes that when calibrated allowed for the wet and reflective eye ball to be scanned even with all of the lens refraction and eye ball curvature. Along with the accurate geometry, very accurate textures are produced that provide the fine detailed iris and its colour.

(Above: Mike is getting ready to have the eye barrier put between his eyes so only one eye will be illuminated and the other can be photographed. Note the coloured LEDs used to determine surface points based on specular highlights. Images from 2015 taken at Disney Research in Zurich.)

The process solves the three main ‘shapes’ of the eye using a set of 13 images, calibration and coloured specular highlights reflected on the subject’s eye.
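To illustrate why a reflected LED highlight is useful for recovering shape, here is a minimal sketch of the underlying mirror-reflection geometry. It is not the reconstruction code from the paper. It assumes a calibrated camera position, a known LED position and a candidate surface point, and every name in it is hypothetical. For a mirror-like surface, the normal at a highlight must bisect the directions to the camera and to the light.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def highlight_normal(surface_point, camera_pos, led_pos):
    """Normal a mirror-like surface must have at surface_point for the
    camera to see the LED's reflection there (half-vector rule).
    All three arguments are hypothetical, pre-calibrated 3D positions."""
    to_camera = normalize(tuple(c - p for c, p in zip(camera_pos, surface_point)))
    to_led = normalize(tuple(l - p for l, p in zip(led_pos, surface_point)))
    # The bisector of the two unit directions is the required normal.
    return normalize(tuple(a + b for a, b in zip(to_camera, to_led)))

# Assumed toy setup: camera and LED placed symmetrically about the z axis.
normal = highlight_normal((0.0, 0.0, 0.0), (1.0, 0.0, 1.0), (-1.0, 0.0, 1.0))
print(normal)
```

In this symmetric toy setup the required normal points straight along the z axis. With several coloured LEDs, each identifiable highlight gives one such constraint on the surface.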

Here is the video from the first Siggraph paper, which requires detailed and lengthy scanning (something that is no longer necessary):

Siggraph 2016 – Part 2

While the results of the first method are remarkable, it is not particularly fast or actor friendly. The new solution published this year and already used in production takes one high resolution image and then references the database to produce a valid eye model.

One of the great advantages of this process comes from how tightly coupled iris colour and texture are. For instance, blue eyes have very different textures to brown eyes. This distinctive link between iris colour and texture means that one new input image can now inform the 3D texture in z space, based just on the colour of the eye photographed from the front.

“there is an inherent coupling between iris texture and geometric structure. The idea is thus to exploit this coupling and synthesize geometric details alongside the iris texture. The database (provided by the first approach) contains both high-res iris textures and iris deformation models, which encode iris geometry as a function of the pupil dilation. Since the iris structure changes substantially under deformation, we aim to synthesize the geometry at the observed pupil dilation”. – from the 2016 Siggraph paper.
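As a rough illustration of what “geometry as a function of the pupil dilation” can mean in practice, the sketch below stores iris height samples at a few captured pupil radii and interpolates between them for an observed dilation. Linear interpolation, the data layout and all names and values are assumptions made for this example, and the paper’s actual deformation model may be quite different.

```python
from bisect import bisect_right

def iris_height_at(dilation, samples):
    """Interpolate iris heights for an observed pupil radius.

    samples: list of (pupil_radius, [height, ...]) pairs sorted by radius.
    This layout is hypothetical and only stands in for a captured
    deformation model.
    """
    radii = [radius for radius, _ in samples]
    # Clamp to the captured range instead of extrapolating.
    if dilation <= radii[0]:
        return list(samples[0][1])
    if dilation >= radii[-1]:
        return list(samples[-1][1])
    upper = bisect_right(radii, dilation)
    (r0, h0), (r1, h1) = samples[upper - 1], samples[upper]
    t = (dilation - r0) / (r1 - r0)
    return [a + t * (b - a) for a, b in zip(h0, h1)]

# Made-up values: three height samples captured at two pupil radii.
samples = [(1.5, [0.10, 0.20, 0.30]), (3.5, [0.30, 0.20, 0.10])]
mid = iris_height_at(2.5, samples)
print(mid)
```

Clamping outside the captured range is a deliberate choice here, since extrapolating a deformation beyond what was scanned would be guesswork.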

Interestingly, one might assume that the one input photo needs to be taken with special polarising filters, but the opposite is the case. “We do not use linear polarization filters because the photoelastic effect of the eye becomes apparent. This results in wrong iris colors that look like a rainbow pattern. Since the main illumination is usually a ring flash, we can get away by ‘hiding’ the reflection of the flash inside the pupil,” according to lead researcher Pascal Bérard.

Vein thickness and depth can be artistically controlled and varied. By controlling the sclera vein network and appearance, an artist can simulate physiological effects such as red eyes due to fatigue.

The new approach does this by parameterising each aspect of the eye, and this is perhaps most significant for the iris, where it produces an accurate parameterised model.

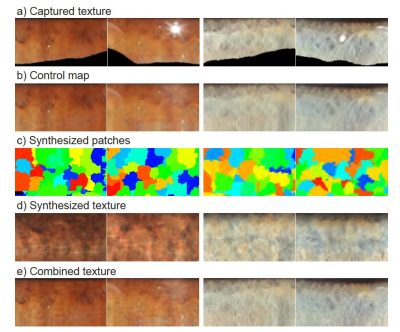

Building the digital iris starts with capturing initial textures (a), from which the specular highlights are removed (b). This control map is then input into a ‘constrained texture synthesis’ that combines irregular patches (c) grabbed from the database to make up a new single texture (d). This synthesized texture is then filtered and recombined with the control map above to add high-frequency detail (e).
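The last step, filtering the synthesized texture and recombining it with the control map, can be pictured as a simple frequency split. The sketch below is only an illustration on a single row of pixel values. The box blur, the radius and all names are assumptions, and the paper’s actual filter is not described here.

```python
def box_blur(row, radius=1):
    """Low-pass filter one row of values with a simple moving average."""
    blurred = []
    for i in range(len(row)):
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        window = row[lo:hi]
        blurred.append(sum(window) / len(window))
    return blurred

def add_high_frequency(control_row, synthesized_row, radius=1):
    """Keep the coarse values of the control map and add only the
    fine detail of the synthesized texture on top."""
    coarse = box_blur(control_row, radius)
    synth_coarse = box_blur(synthesized_row, radius)
    # Detail is what remains of the synthesized texture after low-pass removal.
    detail = [s - c for s, c in zip(synthesized_row, synth_coarse)]
    return [c + d for c, d in zip(coarse, detail)]

# Made-up values: a flat control map and a synthesized row with fine ripples.
control = [0.5, 0.5, 0.5, 0.5, 0.5]
synth = [0.4, 0.6, 0.4, 0.6, 0.4]
result = add_high_frequency(control, synth)
print(result)
```

The output keeps the overall level of the control map while picking up the ripple from the synthesized row, which is the intent of combining a photographed base with database detail.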

This ‘interpolation’ of sorts produces a great result, as seen with both a brown and blue eye example (right).

Here is the second video showing the latest research:

It should be noted that “the new paper works with a single image, but is not limited to it. It can use a multi-view setup and produce higher-quality results”, explains Bérard.

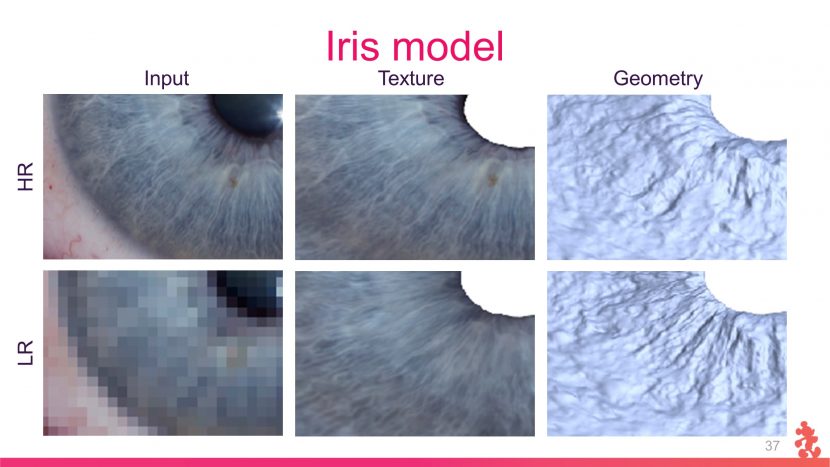

While we have discussed that the approach can work with a single high resolution input still, it is also possible to use less than perfect imagery and get plausible results. Below is both a high resolution and a low resolution image of my own left eye. The top image is normal (HR) and the bottom image is low resolution (LR). While the iris model is less detailed in the fine colour detail, the geometry is remarkably similar.

To see the results more clearly, the image below zooms in on the images. Again, the high res (HR) is on top and the lower res (LR) is at the bottom.

This works so well that even painted eyes or eyes from non-human creatures can be used to generate valid believable custom eye models. It is a remarkable process.

The new process is an extraordinary advance, reducing the amount of time and effort required and building on the detailed work that the team had previously done.