Weta Digital completed over 600 shots across six of the final episodes of Game of Thrones (GoT). These ranged from large-scale environments and epic battles to dragons and destruction. Most, if not all, involved simulation, from Massive crowds to complex dragon fire and water simulations. Weta has a team of experts who develop state-of-the-art simulations for visual effects such as water, fire, fluids, muscle, cloth, hair, and plants. On Game of Thrones, the Weta effects animation team used almost every tool they had.

We start by speaking to Weta Digital VFX Supervisor Martin Hill about the range of simulation work done at Weta that adds so much production value and richness to GoT…and if those huge battles in GoT reminded you of Weta’s original work on Lord of the Rings, then we agree. We also sit down with Geoff Tobin, a long time Massive specialist at Weta and discuss the similarities between both of these iconic Massive crowd simulations, done over 15 years apart.

Dragon Fire : Fire simulation

One of the most iconic aspects of GoT is the Dragons, who featured heavily in the last set of episodes, especially in the epic battle sequences. The Dragon’s fire was achieved as a combination of flame elements, shot on motion control flame throwers attached to wire rigs similar to spidercams, along with complex effects simulations.

Weta combined special 2nd unit plate photography with computer generated fire, but given the dominant visual role of the fire, the team needed to go much further than just composite using a library of fire elements. Even when the fire elements were shot live action, the effects animation team would simulate them. The team would recreate the fire digitally to provide correct lighting for the rest of the shot in terms of contact lighting and reflections. Each shot element was projected into 3 space as part of the Weta deep compositing pipeline. This meant that even shot studio elements would technically have volume, be reflected correctly in CG water and light the surrounding volumes correctly.

Martin Hill wanted the digital fire and real flame elements to match as closely as possible. The effects animation team matched the physical volumes of the real propellant, simulating its explosive properties, the type of fuel, the forces that such a volume would exert and the heat it would generate. “We needed to adjust all our flame parameters to match the burn temperature and the amount of fuel pressure. We matched the fuel that was emitted by volume and the flux of propellant coming out. We tried to physically match all aspects of the on set flame thrower so our simulation version would look the same as the on-set flame elements,” says Hill. He always opted for real fire where possible, both for its spontaneity and realism and because he preferred the creative team to be able to approve takes shot on set before any simulation was done. He requested this even when the footage served only as a guide to Weta for the effect the creative team were after.

Hero simulations were created to generate dragon fire and vast heat fields for a dragon traveling at speeds of over 200 mph.

Weta’s concept art team produced breathtakingly beautiful concept art for the project, (see above).

The complex simulations were combined with live action elements

One tool that Weta used to aid in compositing was their proprietary deep tool: Shadow Sling. This is part of the compositing pipeline and is used for relighting volumes. All of the Weta shots were produced in a deep compositing pipeline, so Shadow Sling allows CG elements such as the dragon to be added or moved around after the volumes have been rendered, while still correctly shadowing the effects volumes around them during the Nuke composite.

Martin Hill explains, “It’s part of the volume relighting setup, it essentially controls a shadow component. You can put in the light source passed on from the 3D lighters, and add geometry or ‘blockers’ and you can generate correct shadow volumetrics within the deep composite”. As this is done during the comp, it is very advantageous, “especially when you’ve got 300 shots with atmospherics. You can move the lighting or the objects in the scene around without having to re-render the expensive volumetric pass,” he adds.

For each shot, when it was being prepared for compositing during the Battle of Winterfell, the automated system at Weta would produce three sets of mist and fog elements, with different velocities and turbulences. All three sets were presented, ready to go, to the compositor to use to sell the shot. If none of these worked creatively, the team could adjust the direction and speed and generate specific elements. Overall, the team knew in the plot that the wind was coming from the north, but sometimes that did not look right in the edit. While these elements could be interchanged easily, they were created before lighting and would not address the corresponding shadows. “Shadow Sling let us move lights and objects, recomputing accurate shadows on the misty atmosphere from the dragons”, explains Hill.
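The idea Hill describes, re-evaluating shadows on an already rendered volume when a light or blocker moves, can be illustrated with a very small sketch. This is not Shadow Sling and makes no claim about how Weta implements it. It assumes deep samples are simple points with a density, blockers are spheres, and shadowing is a binary line-of-sight test to a point light; all function and field names are hypothetical.

```python
def segment_hits_sphere(p0, p1, center, radius):
    """Return True if the straight segment p0 -> p1 passes through the sphere."""
    d = [b - a for a, b in zip(p0, p1)]          # segment direction
    f = [a - c for a, c in zip(p0, center)]      # centre -> segment start
    dd = sum(x * x for x in d)
    if dd == 0.0:
        return sum(x * x for x in f) <= radius * radius
    # Parameter of the point on the segment closest to the sphere centre.
    t = -sum(x * y for x, y in zip(f, d)) / dd
    t = max(0.0, min(1.0, t))
    closest = [a + t * x for a, x in zip(p0, d)]
    dist2 = sum((a - c) ** 2 for a, c in zip(closest, center))
    return dist2 <= radius * radius


def relight_deep_samples(samples, light_pos, blockers):
    """Recompute a 'light' value per deep sample without touching its density.

    samples  : list of dicts with 'pos' (x, y, z) and 'density'
    blockers : list of (center, radius) spheres standing in for CG geometry
    """
    relit = []
    for s in samples:
        shadowed = any(
            segment_hits_sphere(s["pos"], light_pos, c, r) for c, r in blockers
        )
        relit.append({**s, "light": 0.0 if shadowed else s["density"]})
    return relit


# Two fog samples, one light overhead, and a "dragon" blocker that is moved.
fog = [{"pos": (0.0, 0.0, 0.0), "density": 0.5},
       {"pos": (5.0, 0.0, 0.0), "density": 0.5}]
light = (0.0, 10.0, 0.0)
before = relight_deep_samples(fog, light, [((0.0, 5.0, 0.0), 1.0)])
after = relight_deep_samples(fog, light, [((2.0, 6.0, 0.0), 1.0)])
```

In this toy setup, moving the blocker changes which fog sample falls in shadow while the fog samples themselves are reused unchanged, which is the property the quote highlights.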

The Dragon reference is at 29 mins in, above.

The actual animation of the Dragons in Episode 3, during The Battle of Winterfell, was done by Image Engine. In an unusual move, Image Engine’s experienced team animated the dragons and then passed over an Alembic Cache to Weta. The Render team would render the dragons, along with their fire and effects animation. “We worked really closely with Image Engine and got on the phone a lot!” explained Hill. “They were really terrific and they gave us all the material in a very organised way”. The decision to work this way was made by GoT production VFX Supervisor Joe Bauer, who was the supervisor on Episode 3: The Long Night. Bauer had a very strong prior working relationship with the animation team at Image Engine. On Episode 4: The Last of the Starks, the visual effects supervisor was Stefen Fangmeier and he was “happy for us (Weta) to do all the animation in that episode”. Thus Weta worked on and rendered all three dragons in these episodes, but the animation was sometimes provided by Image Engine.

Image Engine Provided the Dragon Animation:

https://www.facebook.com/ImageEngine/videos/366939643944969/

Viserion’s Fire and Destruction Simulation

The team integrated plate fire and smoke with CG elements to preserve the gritty and realistic aesthetic of the battle. The team worked hard to get the right feeling of damage and undead in the Ice Dragon’s wings and body.

During the Battle of Winterfell, Jon Snow fights his way through Winterfell trying to reach Bran. He is spotted by the Ice Dragon Viserion and is almost killed by blue fire. The Fire of Viserion had the same fire simulation type and photographic elements as the other two living dragons. The difference was in the grade of the fire. “It is effectively a hue colour wheel shift” jokes Hill. In reality, the hue was altered and then colour corrected to “brighten up the core and give it a little bit more of what, Joe Bauer called an ‘electric feel’. We would take some of the brighter parts of the flames and vary the hues, add pulses and really flare them out” explained Hill.
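As a rough illustration of the grade Hill describes (rotate the hue, then brighten the core), here is a minimal per-pixel sketch using Python's standard `colorsys` module. The shift amount, threshold and gain are made-up values chosen for the example, not settings from the show, and the pulsing and flaring he mentions are left out.

```python
import colorsys


def shift_fire_colour(rgb, hue_shift=0.5, core_threshold=0.8, core_gain=1.5):
    """Rotate the hue of an RGB pixel (0..1 floats) and brighten bright cores.

    hue_shift      : fraction of the colour wheel to rotate (0.5 = 180 degrees)
    core_threshold : HSV value above which a pixel counts as flame 'core'
    core_gain      : brightness multiplier applied to core pixels (clamped to 1)
    """
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    h = (h + hue_shift) % 1.0
    if v >= core_threshold:
        v = min(1.0, v * core_gain)
    return colorsys.hsv_to_rgb(h, s, v)


orange_core = (0.9, 0.45, 0.0)   # bright orange flame pixel
dim_edge = (0.4, 0.2, 0.0)       # darker edge of the flame
blue_core = shift_fire_colour(orange_core)
blue_edge = shift_fire_colour(dim_edge)
```

With these example values the orange pixels come out blue, and only the pixel above the threshold is brightened.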

Death at Sea: Water simulation

Weta was required to produce combined fire and water simulations that interacted with and affected each other. Weta used a combination of tools, including their own Synapse tool, along with Houdini. Synapse is Weta’s in-house simulation framework, a distributed sparse volumetric solver. Synapse allows sims to be distributed over multiple machines. It provides huge voxel density and feeds directly into the deep compositing Nuke pipeline. “What we find is that it is important that you are able to simulate things where you take the forces and physical properties and transfer them from one software package to another, whether it’s another simulation software or affecting the shaders in the render,” says Hill. “It’s really about building between packages and making sure that those bridges are working. It is key that they all render together well”.

For the battle between Daenerys and Euron’s fleet in Blackwater Bay, Weta developed effects animation technology to enable them to combine fire and water simulation and rendering for both CG and plate element Dragon fire with ocean water and explosions.

The ships’ crews were animated with Massive crowds. The team created generic sailor agents with actions such as pulling ropes and loading boxes. Geoff Tobin explains that “the animation team would take our sims and populate the boats required for each shot, for as many ships as they needed”. The Weta team already had sailor agents from previous shows, and these agent brains were modified and updated as needed. “We had sailor agents that would do whatever was needed. So we were able to recycle that motion and build onto it, so it fit these ship assets,” he adds. In terms of the Massive simulation, as discussed below, it was actually the Battle of Winterfell and the wight army that required the Massive team to innovate and extend beyond anything they had done before.

Rhaegal’s Death

Rhaegal’s horrific death in episode 4 plays a key role in igniting Daenerys’s fury and driving the plot for the last two episodes of the series.

In the critical death scene in episode 4, Weta combined 3 initial previs shots for Rhaegal’s death, into one dynamically choreographed orbiting camera move. The previs was originally three separate death shots but, in consultation with Episode 4’s VFX supervisor, Stefen Fangmeier, Weta’s animation supervisor Dave Clayton combined them into one long camera shot circling the action.

In the moving sequence Weta also simulated the arrow impacts and blood bursting from his lungs with his last breath. The scene is both graphic and surprising, in the best traditions of GoT.

See 5:50 and the death of

Cloud & Lighting simulation

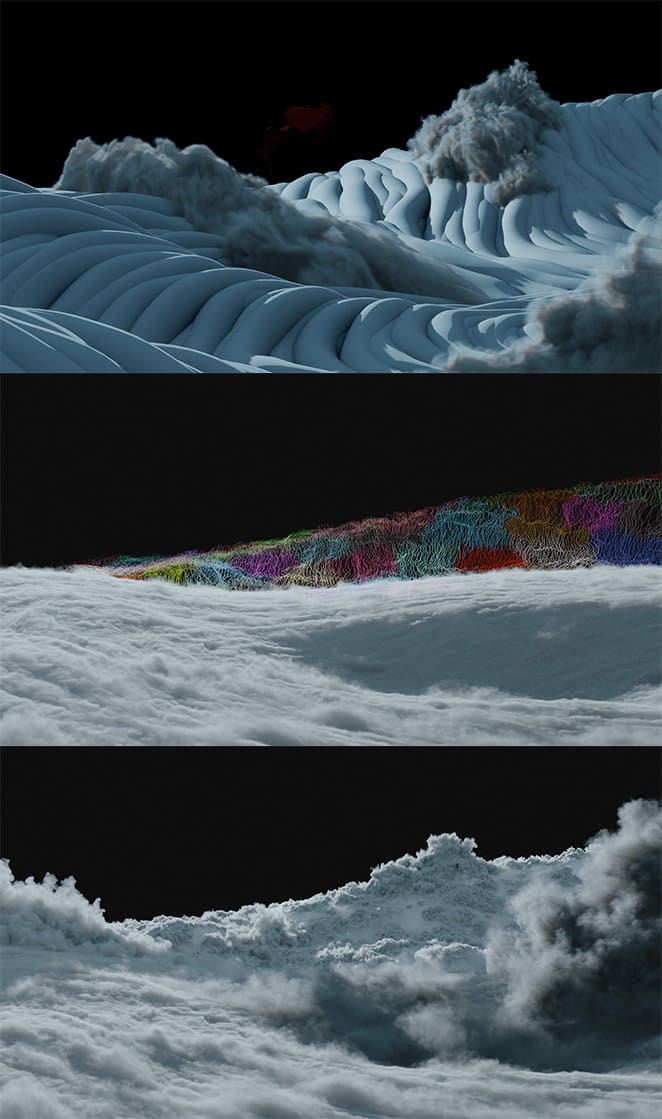

As almost a respite in the Battle of Winterfell, Jon Snow and Daenerys Targaryen climb above the Night King’s storm. “The concept department came up with a really great base image using a tornado as a base and then a sweeping wave of cloud in the background, with just these small dragons to show scale. Everyone really liked it”, comments Hill.

Weta’s effects simulation team then had to come up with what Hill described as a “vast simulation, which they did really well and it really matches the artwork pretty closely if you compare them” (see below).

The effects team produced a vast simulation of the top section of a tornado, which Hill explains “has octaves of detail, down to individual threads of cloud, with their own dynamics as well as an overall rotation”. The scale and complexity of the shot is only highlighted by the scale of the Dragons. The shot takes on an angelic quality, and produces one of the most stunning and unexpected shots in the entire battle sequence.

The FX department also had to create a vast FX storm that appears with the arrival of the Night King. It needed both to be physically impressive and to have a magical quality of finger-like tendrils that loomed over the battle. Michael Pangrazio created concept art which the client liked, and this was passed to the FX department to create an art-directable form that was still governed by physics. FX supervisor Adrien Toupet and Prema Paetsch created a layered, guide-curve-driven sim for both the micro and large scale features. During this design phase, the team realised the stormfront was like a surf wave in form, and having Drogon and Dany ride underneath it created a great graphic. This was further enhanced by art directing Drogon’s strafing fire, so the uplight cast a large shadow of the dragon on the underside of the wave. The team refined the density of the storm so the fire light framed the images and just permeated through the volume when viewed from the top.

Massive Wight and Dothraki Crowd Simulations

The Weta Massive team for GoT was primarily six artists. “I think everybody here worked on Game of Thrones at some point”, joked Geoff Tobin, who was lead crowd TD for the project.

Winterfell is the ancestral castle and seat of power of House Stark and is considered to be the capital of the north. It was also the first line of defence against the Night King, the White Walkers and the wights.

The wights are an army of reanimated human corpses, created when the White Walkers raise the dead and decomposing. Weta were responsible for the vast crowd fights at Winterfell. For a long time, Weta has used Massive software to produce complex crowd simulations, and in this respect the Battle of Winterfell has a direct connection to the Lord of the Rings battle simulations.

The wight army is animated via Massive, which controls the agents. It works by taking custom motion capture files and combining them with a procedural AI brain system for the wight agents. Each wight is controlled by its programmed ‘brain’, which has a set of rules for both what the character should be doing and what its goals are. The brain manages the wight’s path, avoids collisions and then accesses the Massive motion capture library to find the appropriate MoCap file. Once animated, the data is passed on into Weta’s general animation pipeline. At any time the Weta team can lift the animation out of the control of Massive and pass control over to traditional key frame animation. Similarly, it can pass a character to Massive to use the system’s rag-doll simulator. For example, a character might be running as part of a crowd; this would be achieved by the Massive software accessing pre-processed MoCap data of actors running in character. The director could ask for a very specific animation action to be inserted at a point, if the plot required it. If the character is, say, blown up by Dragon fire, then the system switches effortlessly to its rag-doll simulation to toss the character into the air (something not in the MoCap library). The Massive physics engine is less complex than Weta’s full physics simulator but still quite advanced, and it delivers very believable, physically plausible animation in the form of rag-doll simulations.

Weta recorded MoCap for GoT over many days. The MoCap sessions were supervised by the Massive team, as they require special types of animation to be captured, especially animation which can be looped and can work inside the dynamic Massive library.

On this show, the major R&D for the Weta Massive team centred around the wights climbing the walls of Winterfell.

To get the wights to climb the walls, the team started with a new motion capture session. The MoCap team and the Massive supervisors devised a setup for recording the MoCap performers going up steep 45 degree inclines. “We then rotated the data so that they were climbing straight up the wall. For the actual agent brain, we had to do a lot of R&D”. In the Massive program there is a ‘lanes’ feature; typically this would be the tool used for pedestrians on a pathway or for cars to follow roads. “Normally you draw a spline on the terrain and the agents know where they are relative to that spline (line). They know how far they are from the center of the line, and how far they are along the line,” he adds. “But in this case we made the line go up the Winterfell wall, so the wight agents would know how far they were from the center of the tower that they were climbing and how far up”. The agent software inside Massive would then use that information to build the ‘human’ tower or pyramid. By accessing the right MoCap and knowing where they were relative to this line, the agents formed a tower that was bigger at the bottom and built to a triangular peak at the top. “And once they reached the top of the wall, the whole thing would collapse and the wights would all fall on top of each other,” he explains.
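The lane idea Tobin describes, where each agent knows how far along a line it is and how far from its centre, can be sketched in a few lines. This is not Massive's API or the actual agent brain. It is a hypothetical 2D example on the plane of the wall, with a straight lane standing in for the spline and a simple linear taper standing in for the pyramid shape.

```python
import math


def lane_coordinates(point, lane_start, lane_end):
    """Return (distance_along, lateral_offset) of a 2D point relative to a lane.

    The lane is a straight segment; lateral_offset is signed, so agents on
    either side of the centre line get opposite signs.
    """
    lx, ly = lane_end[0] - lane_start[0], lane_end[1] - lane_start[1]
    length = math.hypot(lx, ly)
    ux, uy = lx / length, ly / length                 # unit vector along lane
    px, py = point[0] - lane_start[0], point[1] - lane_start[1]
    along = px * ux + py * uy                         # projection onto the lane
    lateral = px * uy - py * ux                       # perpendicular distance
    return along, lateral


def inside_pyramid(along, lateral, wall_height, base_half_width):
    """True if an agent sits inside an envelope that is wide at the base and
    narrows linearly to a peak at the top of the wall."""
    if not 0.0 <= along <= wall_height:
        return False
    allowed_half_width = base_half_width * (1.0 - along / wall_height)
    return abs(lateral) <= allowed_half_width


# Lane drawn straight up a 20-unit wall; x runs along the wall, y is height.
lane_start, lane_end = (0.0, 0.0), (0.0, 20.0)
low_agent = lane_coordinates((3.0, 5.0), lane_start, lane_end)
high_agent = lane_coordinates((3.0, 18.0), lane_start, lane_end)
low_ok = inside_pyramid(*low_agent, wall_height=20.0, base_half_width=8.0)
high_ok = inside_pyramid(*high_agent, wall_height=20.0, base_half_width=8.0)
```

In this sketch an agent three units off centre fits inside the pile near the base but not near the top, which is one simple way two lane coordinates could produce a triangular tower.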

The wights were attacked by Jon Snow’s and Daenerys Targaryen’s dragons. For these sequences, the animation team would provide the path of the Dragon fire strike and how it hit the ground. “We would then use that timing to trigger when our agents would explode and get thrown up into the air and blown to bits”, Tobin explains. “Massive has its own physics engine built in, so you can activate the rag-doll at any point. Instead of having the agents being controlled by the brain, they are under physics control. You just give them a force underneath them and they just fly up and fall under gravity”.
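The timing-driven switch Tobin describes can be illustrated with a toy model. It is only a sketch under stated assumptions, not Massive's rag-doll solver: the fire strike is assumed to sweep along a straight line at constant speed, the "force underneath" is simplified to an initial upward velocity, and the agent is a single point rather than a jointed body. All names are hypothetical.

```python
GRAVITY = -9.81  # m/s^2, acting downwards


def trigger_time(agent_x, strike_start_x, strike_speed, strike_start_time=0.0):
    """Time at which a fire strike sweeping along +x reaches an agent."""
    return strike_start_time + (agent_x - strike_start_x) / strike_speed


def agent_height(t, t_trigger, launch_speed):
    """Height of an agent above the ground at time t.

    Before the trigger the agent is 'brain driven' and stays on the ground.
    After it, the agent is under physics control: launched upwards and then
    falling under gravity until it lands.
    """
    if t < t_trigger:
        return 0.0
    dt = t - t_trigger
    return max(0.0, launch_speed * dt + 0.5 * GRAVITY * dt * dt)


# A strike moving at 60 m/s reaches an agent 30 m down the line after 0.5 s.
hit_at = trigger_time(agent_x=30.0, strike_start_x=0.0, strike_speed=60.0)
before_hit = agent_height(0.4, hit_at, launch_speed=10.0)
mid_air = agent_height(1.0, hit_at, launch_speed=10.0)
landed = agent_height(10.0, hit_at, launch_speed=10.0)
```

Agents further along the strike path get later trigger times, so a crowd evaluated this way would be thrown into the air in a wave that follows the fire.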

This ability to switch between agents accessing MoCap data and a rag-doll physics simulation was also used most effectively when the Night King dies. “In the shot where all the wights are dying that’s exactly how we did it. We just had to work on the timing of when they would drop in camera. The trickiest part was the big pile of wights up against the wall and getting them to fall down in a sort of convincing pile of dead bodies”.

While Massive includes software to leave footprints and marks in the snow behind agents, Weta does this by generating a special pass. “We run a special set up that is just feet attached to a skeleton from our generated motion capture data, because that is all that is needed. We pass that simplified setup to the effects animation team. They use that to calculate where the feet collide with the ground and kick up snow or dust and leave footprints”, Tobin explains.

The second major Massive simulation in this episode was the Dothraki horseback charge. Here the team was able to access the extensive Weta library and build on MoCap data and Massive agents from the original Lord of the Rings. “A lot of this particular sequence was recycling and relying on what we have done before,” comments Tobin. “The horses, for instance, when the Dothraki ride off into the darkness, all the horse motion was pretty much the motion we captured for Lord of the Rings. The horse assets themselves have changed over the years and the motion has been updated accordingly, but it’s pretty much the same brain and simulation”.

For the Battle of Winterfell, Tobin estimates the team had some 20,000 to 30,000 agents animated in Massive. But unlike when he first started in the Massive department at Weta, on Lord of the Rings, Tobin and the other Massive artists can now have a version of any shot play on their computers in real time. “Back when making The Lord of the Rings, I had just a rough OpenGL preview displayed out of Massive,” he recalls. “Back then, you had to run shots overnight to see what they would actually look like. Whereas these days we’ve got our real time engine built into Maya and we can input the crowd data and preview it with textures, shading and even a rough approximation of what the lighting is actually going to look like. That is a huge help in blocking out shots, particularly for the client, so they can see a close approximation of what the final shot will look like”.

Eddy Fire Simulation

In addition to the Massive simulations in the Battle of Winterfell, the Weta crew made extensive use of the Eddy Nuke tool set. This was used to simulate and render flaming Dothraki swords.

While normal steel will not kill them, fire does work on the wights, as they are seemingly very flammable. Azor Ahai has long had his magical fire sword, Lightbringer, but in episode 3 it is Melisandre who arrives at Winterfell, asks the Dothraki to raise their weapons and then ‘lights’ up their swords.

“The Eddy software was built by a couple of Weta guys and it is a terrific tool for fluid simulations” explains Martin Hill. Weta uses Eddy on smaller elements, allowing the main effects animation team to tackle the extremely large and more complex simulations. “One of the really great examples of that was when we used Eddy for the flaming swords in episode three” Hill adds.

Eddy for Nuke, by VortechsFX, is a plugin for simulating gaseous phenomena inside of the Foundry’s Nuke. Eddy makes it possible to work with smoke, fire and similar gaseous fluid sims fast and almost completely interactively.

Weta had a combination of plate elements of the Dothraki actors holding up their swords, some of which were practical props with LED lights on them and just a couple of which actually had fire. While the real fire swords were good as reference, the timings weren’t necessarily accurate to what was needed in the final edit, so Weta ended up replacing and adding all the fire swords for the CG Dothraki army. But the LED swords proved very useful for the Eddy solution that Weta’s Nuke team came up with.

The traditional way Weta would solve something like the Dothraki swords would be to start by tracking all the swords. The team would then create a pre-canned simulation and track it to the swords or, even more likely, Weta would solve for all the swords’ positions in three space and do a full 3D simulation in situ. “That is a very laborious process when you’re talking about hundreds and hundreds of these swords in a wide shot,” comments Hill.

The new approach with Eddy did not require a full effects animation department simulation. “We know each sword’s depth as we project all the shots into ‘deep’ so we can add other volumes and CG,” Hill explains. “We used the illumination of the LED swords essentially as a fuel source for the simulation within Nuke”. As the compositors know the object depth and position, they can run a 3D Eddy simulation of the flames. “We use an optical flow system to stabilize all the swords and then with painting on a single still frame, we set the timing of all the swords lighting up. We then use that to drive the simulation in Eddy,” Hill outlines.
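One way to picture the painted timing driving the fuel source is the per-pixel sketch below. It does not use Eddy's or Nuke's API and is not Weta's setup. It assumes the painted value is a 0..1 number mapped linearly to an ignition frame, and that fuel simply ramps up to the LED luminance over a few frames; the function names and the ramp are illustrative assumptions.

```python
def ignition_frame(paint_value, first_frame, last_frame):
    """Map a painted 0..1 value on the still frame to an ignition frame."""
    return first_frame + round(paint_value * (last_frame - first_frame))


def fuel_emission(led_luminance, ignite_at, frame, ramp_frames=4):
    """Fuel emitted by one sword pixel on a given frame.

    Zero before the sword lights up, then ramping over `ramp_frames` frames
    up to the luminance of the practical LED in the plate.
    """
    if frame < ignite_at:
        return 0.0
    ramp = min(1.0, (frame - ignite_at + 1) / ramp_frames)
    return led_luminance * ramp


# A sword painted mid-grey ignites halfway through a 100..140 frame window.
start = ignition_frame(0.5, first_frame=100, last_frame=140)
fuel_before = fuel_emission(0.8, start, frame=start - 1)
fuel_first = fuel_emission(0.8, start, frame=start)
fuel_full = fuel_emission(0.8, start, frame=start + 3)
```

Painting a gradient across the still frame would then give each sword a different ignition frame, so the flames spread across the army rather than all lighting at once.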

The Nuke artists in Eddy have control over wind direction and other attributes, so the compositors reverse or unwind the optical flow stage and the swords are then once again correctly moving around in their right places. “You then essentially have a 3D positioned source as the ‘fuel’ to generate the fire in Eddy. We give the compositors fairly elementary & quick to use tools for the fire. They have wind direction and vorticity and Turbulence”. As Eddy is very fast as a tool, it literally took the compositors just a couple of seconds per frame to generate all the fire on the swords. “You’re not going through the process of caching simulations, rendering them and then passing them to the compositors” adds Hill. If there was a change of the desired wind direction, it could be updated directly in the composite. “Eddy was pretty interactive, it was not real time by any means, but it’s certainly fast to work with, and any change was properly handled, with all the new rendered elements ‘live’ in the deep composite all the time” Hill concluded.

It’s hard to imagine the amount of work that went into the last season. Even just episode 3 seems mind-boggling, and I’m sure the teams excelled themselves.

Which is unfortunate seeing how the broadcast looked. I saw the episodes using the HBO Go streaming service, and Episode 3 looked horrendous. The entire episode happens at night, and due to what I assume are artifacts of the compression used to reduce file sizes for streaming, all blacks looked horrible. The amount of banding was worse than when we had low bandwidth video on the Internet. It was so distracting that I had to switch off, reboot, change computers, watch on my TV, etc., but everywhere, it just looked awful.

What a shame. I will never see it the way it was intended, because there is no way I’m buying the Blu-Ray to watch what ended up being a very disappointing season plot-wise.