Story written by guest writer Leif Pedersen, edited by fxguide.

Piper is the kind of film that elicits disbelief, a film that allows you to admire the beauty of the imagery and yet also marvel at its technical prowess. As with any Pixar film, the focus is on the story, and this is where RenderMan was able to help the artists and the tools development team with the creative edge that the Director needed to tell this remarkable story.

Piper tells the story of a daring bird who, confronted with a tough problem, learns to approach it creatively and collaboratively. It is a fitting parallel for the challenges the development team faced, and the solutions they found, as they approached the beautiful, golden, sun-drenched beach of Piper.

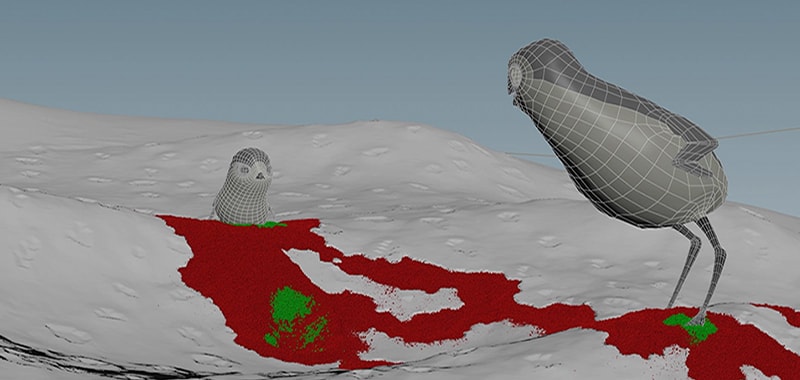

For the first time, this project came from Pixar’s tools department, which is in charge of creating the cutting edge technology responsible for the wonderful imagery present in all Pixar films. Piper was also the only short at Pixar which was started in REYES and transitioned into RIS. Development was done very early on with REYES’ plausible hybrid-raytracer.

“…the approach to many of the usual technical challenges had to be completely different.”

At the time, the team was getting interesting results and had begun deploying traditional shading and lighting methods, such as displacement maps, shadow maps, and point clouds for global illumination and subsurface scattering. But when the team got their hands on RIS, they were sold.

“Our initial images with RIS were very promising, the characters and their environments looked integrated, like they belonged in the same world,” said Erik Smitt, Director of Photography on Piper, “We no longer had to approach our shots with per-character lighting rigs in order to create cohesion in the shot, this was very creatively liberating, because we could get close to a finished shot much quicker.”

The team was able to light shots and approach shading in a completely physical manner, so much so, “that the approach to many of the usual technical challenges had to be completely different” he adds. One example was the beach sand. “Traditionally, we would have used displacement maps and shading tricks to accomplish such an intimate and finely detailed beach,” said Brett Levin, Technical Supervisor on Piper, “but we decided to see what RIS could do.” And they did…the team decided to fill the beach with real grains of sand, all of it…

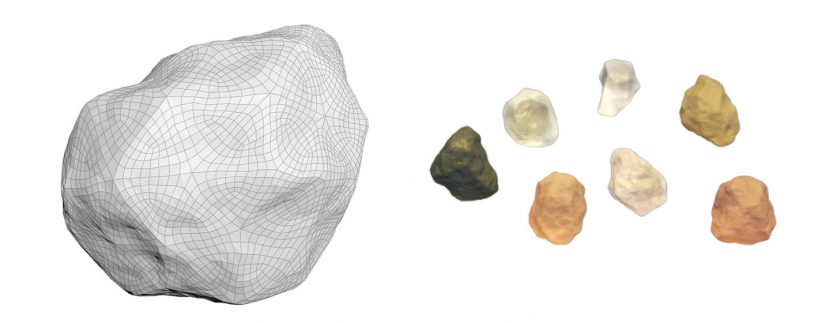

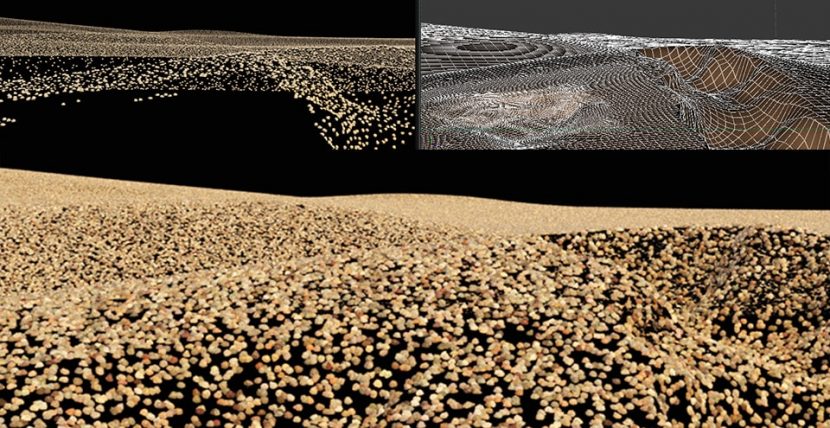

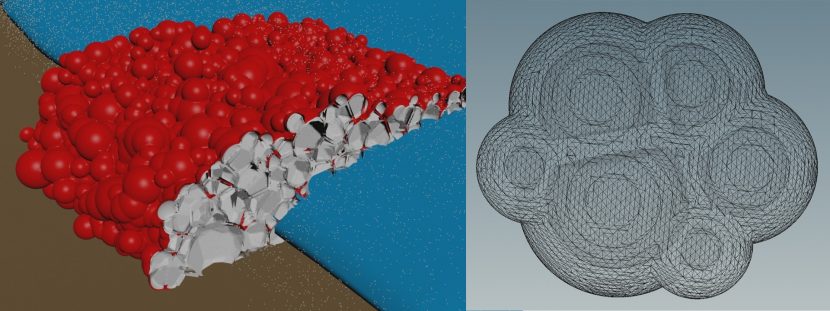

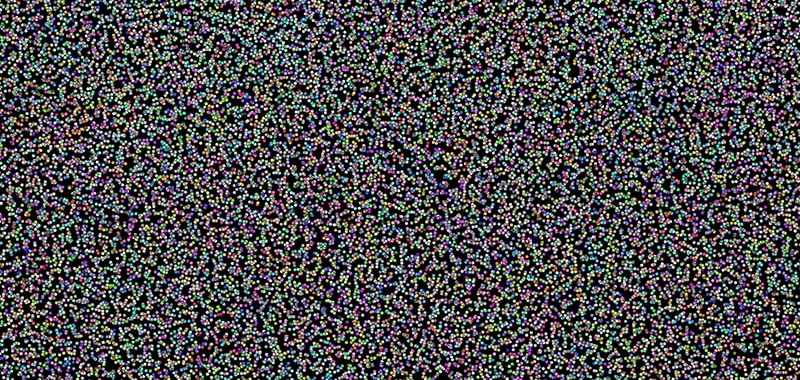

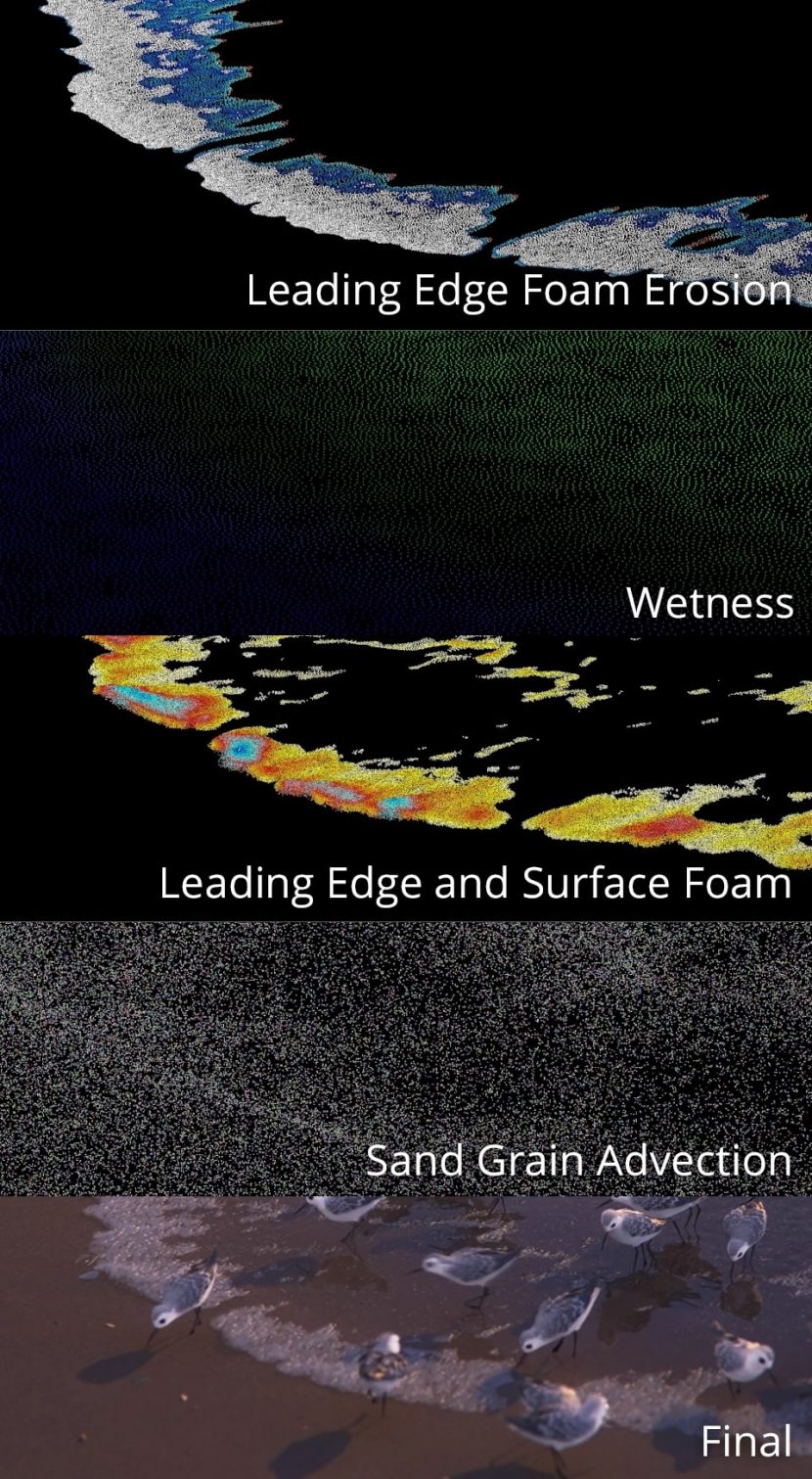

Every shot in Piper is composed of millions of grains of sand, each one of them around 5000 polygons. No bumps or displacements were used in the grains, just procedurally generated Houdini models. Displacements were the first option for creating the sand, but after many tests, the displacement detail needed to convey the sand closeups turned out to be approximately 36 times the normal displacement map resolution used in production. That made displacements inefficient and started to make sand instancing a viable solution.

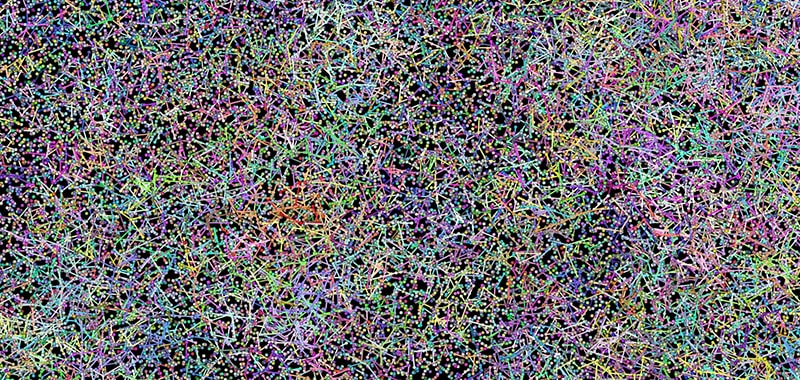

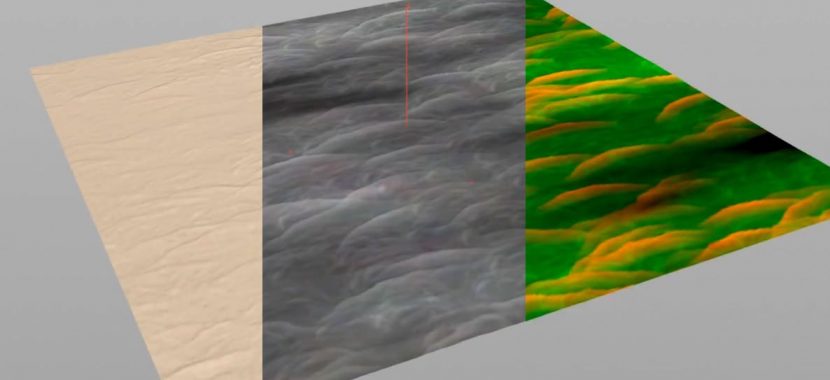

The sand dunes were sculpted in Mudbox and the resulting displaced mesh drove the sand generation in Houdini using a Poisson Distribution model. The resulting sand grains were simulated in Houdini’s grain solver, which created a fine layer of sand on top of the mesh. This result was driven by a combination of culling techniques, including camera frustum, facing angles and distance, creating patches of sand that varied from dense to coarse for optimum efficiency.
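The culling idea can be sketched in a few lines. This is only an illustration, not Pixar's Houdini setup: the function name `grain_density`, the cone-shaped stand-in for the camera frustum, and every threshold below are assumptions. It returns a 0 to 1 density multiplier that a scatter step could use to decide how many grains to place at a surface point.

```python
import math

def grain_density(point, normal, cam_pos, cam_dir,
                  half_fov_deg=30.0, near_dist=1.0, far_dist=50.0):
    """Return a 0..1 density multiplier for grains at a surface point.

    cam_dir and normal are assumed to be unit vectors. All thresholds
    are illustrative values, not production settings.
    """
    to_point = [p - c for p, c in zip(point, cam_pos)]
    dist = math.sqrt(sum(d * d for d in to_point))
    if dist == 0.0:
        return 1.0
    view = [d / dist for d in to_point]

    # Frustum test, simplified here to a cone around the view direction.
    cos_angle = sum(v * c for v, c in zip(view, cam_dir))
    if cos_angle < math.cos(math.radians(half_fov_deg)):
        return 0.0

    # Facing test: drop surfaces that point away from the camera.
    facing = -sum(n * v for n, v in zip(normal, view))
    if facing <= 0.0:
        return 0.0

    # Distance falloff: full density up to near_dist, none at far_dist.
    t = (dist - near_dist) / (far_dist - near_dist)
    falloff = 1.0 - min(max(t, 0.0), 1.0)
    return facing * falloff
```

A scatter step could multiply its base point count by this value, so grazing or distant patches receive fewer grains than patches directly in front of the lens.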

The variety of sand was driven by RenderMan Primvars, which controlled shading variance and dry-to-wet shading parameters. This gave the shading team great control with only 12 unique grains of sand. The grains were populated using geo instancing and point clouds, both data types handled via Pixar’s USD (Universal Scene Description), now an open source format, which allowed for the efficient description of very complex scenes.
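To see why instancing pays off, here is a rough sketch of the bookkeeping. The 12 prototypes and roughly 5000 polygons per grain come from the article; the `GrainInstance` fields (`wetness`, `tint`) are hypothetical stand-ins for per-instance primvars, and the instance count used in the usage note is an illustrative figure, not a production number.

```python
import random
from dataclasses import dataclass

POLYS_PER_GRAIN = 5000   # approximate figure quoted in the article
NUM_PROTOTYPES = 12      # unique grain models quoted in the article

@dataclass
class GrainInstance:
    prototype: int       # index of the shared grain model
    position: tuple      # where this copy sits on the beach
    wetness: float       # hypothetical primvar: 0 = dry, 1 = wet
    tint: float          # hypothetical primvar: shading variation

def scatter(count, seed=0):
    """Create `count` lightweight instances that share the prototypes."""
    rng = random.Random(seed)
    return [
        GrainInstance(
            prototype=rng.randrange(NUM_PROTOTYPES),
            position=(rng.random(), rng.random(), rng.random()),
            wetness=rng.random(),
            tint=rng.random(),
        )
        for _ in range(count)
    ]

def polygon_budget(num_instances):
    """Return (polygons stored once, polygons the renderer sees)."""
    stored = NUM_PROTOTYPES * POLYS_PER_GRAIN
    rendered = num_instances * POLYS_PER_GRAIN
    return stored, rendered
```

With one million instances, `polygon_budget(1_000_000)` reports 60,000 stored polygons against 5 billion rendered ones. Each extra grain costs only an index, a transform and a few primvar values.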

“Rendering such large data sets was going to be a challenge, but we were able to leverage instancing very successfully, with some scenes using a ray depth of 128, which would have been impossible with previous technology,” said Brett Levin. This makes the renders incredibly lifelike, but traditionally very resource intensive, yet RIS was able to handle the enormous computational needs out-of-the-box. “We were able to try new and inventive ways to problem solve a shot in a physical way, trusting in RenderMan’s pathtracing technology, which we would have never attempted before RIS.”

The color of the sand was taken from an albedo palette generated from the beach on an overcast day, from which they made many types of shaders, ranging from shell and stone to refractive glass. These also had to be wet or dry and carry varying degrees of tints and shades in order to simulate the billions of grains of sand convincingly.

Another challenge was Mach bands, an optical illusion that exaggerates subtle changes in contrast where neighboring values meet, which made for unpleasing sand images. The team created a custom dithering solution for this, making the final images feel much more natural.
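The article does not describe the team's custom dithering solution, so the following is only a generic sketch of the underlying idea: adding a small amount of noise before quantizing breaks up the hard steps that the eye exaggerates into bands. The function name `quantize` and the choice of uniform random noise are assumptions.

```python
import math
import random

def quantize(value, levels=256, rng=None):
    """Quantize a 0..1 value to an integer code in 0..levels-1.

    Without `rng` the value is rounded to the nearest code, so a slow
    gradient collapses into flat steps. With `rng`, uniform noise one
    step wide is added first, so neighboring pixels land on different
    codes and the average code matches the true value.
    """
    scaled = value * (levels - 1)
    if rng is None:
        code = math.floor(scaled + 0.5)           # plain rounding
    else:
        code = math.floor(scaled + rng.random())  # random dither
    return min(max(code, 0), levels - 1)
```

Calling `quantize(v, rng=random.Random(0))` across a smooth ramp trades visible steps for fine grain, which tends to sit naturally in an image that is already full of sand.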

“We were able to rely purely on light transport”

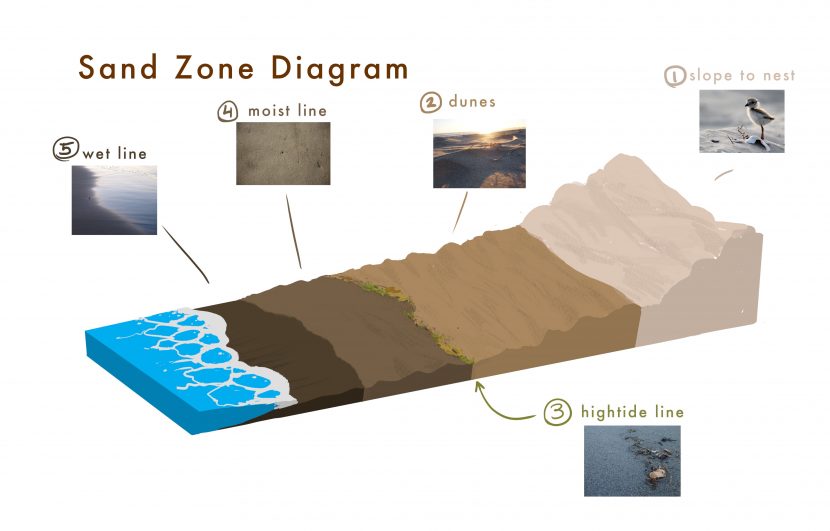

Depending on the shoreline, sand can behave in many different ways. Creating these differing sand types was a nice challenge. The team ultimately categorized three distinct types: Wet, Moist, and Dry.

Explicit constraint networks were created to give the sand grains a level of stickiness, yet with the necessary breakable constraints to allow for a crumbling effect. After manipulating the grain solver in Houdini, the team was able to separate these subtle differences in moisture into art-directable states which could be simulated and animated with predictable results.
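A breakable constraint can be reduced to a simple rule: a link between two grains holds until it is stretched past a threshold, then it snaps and the grains are free to crumble apart. The sketch below is not Houdini's grain solver; the `Constraint` class, the `update_constraints` helper and the stretch thresholds in the usage note are made up for illustration.

```python
import math
from dataclasses import dataclass

@dataclass
class Constraint:
    a: int                 # index of the first grain
    b: int                 # index of the second grain
    rest_length: float     # distance at which the link is relaxed
    break_stretch: float   # fractional stretch at which the link snaps
    broken: bool = False

def update_constraints(positions, constraints):
    """Mark over-stretched constraints as broken; return how many survive."""
    for c in constraints:
        if c.broken:
            continue
        length = math.dist(positions[c.a], positions[c.b])
        stretch = (length - c.rest_length) / c.rest_length
        if stretch > c.break_stretch:
            c.broken = True   # the clump crumbles at this link
    return sum(1 for c in constraints if not c.broken)
```

Giving wetter sand a higher `break_stretch` (say 0.5) than drier sand (say 0.1) is one way such a system could expose moisture as an art-directable dial.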

The water and foam used Houdini’s FLIP solver. For timing, the team animated simple wave shapes, from which they extracted the leading edge curve and encoded direction. They then built the FX wave shapes and adjusted the thickness based on flow. From there, they sourced the points and ran a low-res FLIP simulation. Lastly, they ran high-res FLIP simulations over a frustum-padded area, using velocities from the low-res pass. Sometimes retiming of waves was needed for artistic control.

The foam shading was done with a hybrid volume/thin surface shader, which was rendered with over 100 ray depth bounces. This gave the foam particles the required refractive luminance to realistically convey water bubbles. “RenderMan loved these challenges. We were able to rely purely on light transport to convey such realism in the foam and sand. RIS was doing really well with the incredibly demanding tasks,” said Erik Smitt.

Several in-house solutions for bubble interaction and popping were used, such as GIN, Pixar’s system for creating fields in 3D, as well as procedural foam patterns. These tiny bubbles were then carried with the water surface velocity and used a power-law distribution function for creation. Density was based on particle proximity and in turn, it was used to drive foam popping and stacking.
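A power-law distribution produces many tiny bubbles and only a few large ones. As a rough illustration (the exponent, the radius range and the function name `sample_bubble_radius` are assumptions, not values from the production), radii can be drawn with inverse transform sampling:

```python
import random

def sample_bubble_radius(rng, r_min=0.1, r_max=1.0, alpha=2.5):
    """Draw a radius with probability density proportional to r**(-alpha).

    Uses inverse transform sampling on the range [r_min, r_max].
    alpha must not equal 1, where the closed form below breaks down.
    """
    if alpha == 1.0:
        raise ValueError("alpha == 1 needs a logarithmic form instead")
    k = 1.0 - alpha
    low, high = r_min ** k, r_max ** k
    u = rng.random()                      # uniform in [0, 1)
    return (low + u * (high - low)) ** (1.0 / k)
```

Drawing a few thousand radii with `rng = random.Random(1)` gives a population dominated by bubbles close to `r_min`, which is the look one would expect from fine sea foam.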

Another great shading trick was used to work around an inherent issue with liquid simulations and surface tension: a meniscus, an unwanted “lip” that forms where the water meets the beach. To work around this, the team duplicated the entire beach geometry and used a dynamic IOR blend to transition between the wet and moist sand. This got rid of the imprecision and created natural lapping water.

Combined with procedurally generated water runoff in Houdini, the team was able to use the resulting ripple patterns as image based displacements in Katana, giving subtle touches to a beautiful looking beach shore.

Piper was heavily influenced by macro photography. Originally the team was going to use Depth of Field in Nuke with a Deep Compositing workflow, but they realized that RenderMan was handling in-render DOF incredibly well and with an accuracy that was hard to match in post. For some exaggerated DOF shots, a combination technique was used, where they re-projected the renders onto USD geometry and added extra blur with Deep Image Data, creating a more stylized look.

Finally, to get rid of pathtracing noise, the development team was happy to use the new RenderMan Denoise. Traditionally, refractive surfaces and heavy DOF require a lot of additional sampling to clean up entirely, but the Denoiser allowed the team to really push these techniques without having to be afraid of unwanted noise or impossibly long render times. They also leveraged integrator clamps and LPE filtering on caustic paths to kill ‘fireflies’.
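The principle behind a clamp is easy to show in isolation. This is a generic sketch rather than RenderMan's integrator settings; `clamp_sample`, `pixel_mean` and the limit used in the usage note are hypothetical. A single extremely bright sample can dominate a pixel's average, so each sample's luminance is capped before averaging.

```python
def clamp_sample(rgb, max_luminance):
    """Scale a sample down so its luminance never exceeds the cap."""
    r, g, b = rgb
    lum = 0.2126 * r + 0.7152 * g + 0.0722 * b   # Rec. 709 weights
    if lum <= max_luminance:
        return rgb
    scale = max_luminance / lum
    return (r * scale, g * scale, b * scale)

def pixel_mean(samples, max_luminance=None):
    """Average the samples of one pixel, optionally clamping each first."""
    if max_luminance is not None:
        samples = [clamp_sample(s, max_luminance) for s in samples]
    n = len(samples)
    return tuple(sum(s[i] for s in samples) / n for i in range(3))
```

With fifteen samples at 0.5 and one stray sample at 1000, the unclamped pixel averages about 63, while `pixel_mean(samples, max_luminance=10.0)` lands near 1.1. The trade-off is that clamping discards some real energy, so the result is slightly darker than the unbiased answer.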

In the end, Piper was able to push RenderMan to new physical shading and lighting standards, which helped carry its new RIS technology to unprecedented heights, paving the way for new and exciting times for physical workflows at Pixar.

Part 2 of our Tech of Pixar Oscar series focuses on the Feature film: Finding Dory.

Note: All images Copyright Disney/Pixar. Do not reproduce without permission.

Awesome!!!

That’s incredible huge amount of work and research. Really nice done.

brilliant

oh my lord

I spent the majority of Finding Dory discussing with the person who went to watch the movie with me about how the hell did Pixar make this short. Thank you very much for the info

“Every shot in Piper is composed of millions of grains of sand, each one of them around 5000 polygons.”

Wow…

A lot of resources

We are looking at 5 billion polygons plus all the polys of the characters and grass…That’s some setup

Is it just me or does this seem like a massive waste of resources? Why not use a displacement map for most of it?

Read the article again, it’s all in there. The whats, the hows and the whys

Read my post again.

Displacement maps were not right for the CLOSEUPS. That doesn’t mean polygons are not incredibly wasteful for the rest of the beach.

Oh sorry, my bad. Missed that part 🙂

Once you instance the geometry you get billions for free. How do you think all of the trees were rendered on Moana? It’s actually cheaper to use the geometry.

Displacements still need a lot of intense calculations just for being in the scene. So no matter if it’s close to camera or far away, the engine needs to calculate the displacement maps in the whole scene, then how light is going to affect the scene afterwards.

“Displacements were the first option for creating the sand, but after many tests, the required displacement detail needed to convey the sand closeups were approximately 36 times the normal displacement map resolution used in production, which made displacements inefficient and started to make sand instancing a viable solution.”

Literally an extract from the article.

Yes, I get that, but I don’t believe that all of those particles had to be sand. I haven’t seen the full film tho, only clips.

I’m not a render developer, but seeing the advancements in the latest render engines (RIS and other raytracers), polygons are not the devil they used to be. Most renderers can crunch millions of them easily, especially if they’re instanced.

breaking the boundaries of computation

Really enjoying these Pixar articles, mostly because they successfully bridge all the super-technical stuff with clear explanations of the phenomena they are trying to simulate. Well done!

Impressive stuff. Advances like this make the “We’re all living in a simulation” theories not seem so far-fetched, after all

It’s just a pity Pixar’ll spoil the jaw-dropping visuals by giving the character a whiny American teenager’s voice, which sounds like fingernails being dragged down a blackboard.

Piper has been out since June 2016 and has no voice acting, American or otherwise, but don’t let a little thing like the facts get in the way of a perfectly good prejudice.

it says multiple times throughout that RIS handled things with ease, I’d like to know the render times and machine specs to judge what that truly means

It look stunning, but making sand grain of 5k polys! Thats just insane, why???

Incredible work by PIXAR..Loved the details in the article!