In these two impressive spots, one showcasing road safety and the other machine innovations, the visual effects teams from Fin and Method Studios break down their work for fxguide.

NZ Transport Agency – ‘Small Mistakes’

‘Small Mistakes’, a NZ Transport Agency spot that hammers home the importance of safe driving, features visual effects from Fin as a car accident is slowed down, dissected and then shown from an in-car perspective. Visual effects supervisor Stuart White breaks down how Fin delivered the shots necessary to tell that story.

Slow-down: The time slowing down moment was one of the few shots that were explored in previs form before the shoot. Initially this shot would have looked very different and there was an intention at one stage to capture it entirely in-camera using a Phantom or similar high speed rig. However, as the coverage changed and the parameters of the shoot became clearer, director Derin Seale and the Fin team hatched a last minute plan to shoot it just using the Alexa.

How to freeze: The hardest bit about this moment is freezing time without it looking like the car had merely braked to a halt. To overcome this the car stops far faster than any real car possibly could with zero bounce back on its suspension. We also added some CG leaves blowing around and then freezing mid-flight to help sell the effect. To confirm that this would all work, we did the previs for Derin.

Multi-pass approach: The car itself was shot in two passes. First it drove straight past the camera at 100kph without stopping – that’s the first half of the shot you see. Then we shot the second half of the shot backwards. The car parked in its freeze position, then slowly reversed back past the camera. When you speed that up 4x and reverse it, bingo. A car stops on a dime from 100kph with zero suspension bounce. It’s times like these when it’s great to bring previs onto the set. Trying to explain the plan to a film crew who are racing to catch the only good light of the day becomes a lot faster and involves a lot less argument – it’s all there right on the screen, stitched together and working perfectly. In post the shot came together very easily.
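As a rough illustration of the retime described above, here is a minimal Python sketch. It is not Fin's actual pipeline (the finishing was done in tools like Flame and Nuke), and the function names and the idea of treating a clip as a plain list of frames are assumptions made for the example. The reverse pass is sampled every fourth frame starting from its first frame, the parked freeze position, so that after reversing, the clip ends exactly on the freeze.

```python
def reverse_and_speed_up(frames, speed=4):
    """Play a clip backwards at `speed` times its original rate.

    `frames` is the reverse pass as shot: frame 0 is the car parked in
    its freeze position, later frames show it backing past the camera.
    Sampling from frame 0 before reversing guarantees the output ends
    on the parked frame.
    """
    if speed < 1:
        raise ValueError("speed must be a positive integer")
    kept = frames[::speed]   # keep every `speed`-th frame, starting at the freeze
    return kept[::-1]        # reverse so the car drives in and stops


def join_passes(drive_by, reverse_pass, speed=4):
    """Cut from the real 100kph drive-by into the retimed reverse pass."""
    return list(drive_by) + reverse_and_speed_up(list(reverse_pass), speed)
```

In practice the cut point between the two passes would be chosen by eye so the car's position and speed line up, which is where having the previs on set helps.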

Covering the crash: The crash was covered with a great many cameras on the day, including two crash-rigged cameras (an Alexa and an Epic), one inside each of the two cars. When some stuntman has the guts to get into a car and drive it full tilt into a remote controlled target car, you’re only going to do it once! Hats off to that stuntie because the sound of the impact was sickening, it was the real deal. He saw stars for a moment after the impact apparently, one tough SOB.

Choosing the right angle: Even though there were a lot of good angles to choose from, Derin felt that the money shot was the one where we are inside the car that gets t-boned, looking out through the passenger window. He felt that this would be the case even before the shoot and the storyboard reflected that. The trick is, you see the father sitting right there in the seat at the moment of impact. We knew this going into the shoot, so the approach was to drive that victim car, empty of people, by remote control and film it being wrecked from inside the cabin. Then film the actor playing the father in a separate identical car being jerked violently in a wire harness rig, cut him out and put him into the crash car plate.

Plate re-build: We really ended up rebuilding the whole plate in post from a few frames before impact. This enabled us to choreograph the violence with much more control, and involved a lot of elements – a 360 degree panorama of the intersection so we could spin the view out the window any way we liked, CG glass and extra cabin debris flying, a photo-mapped 3d replacement of the approaching car so we could control its post-impact trajectory, and a 360 degree panorama of the interior of the wrecked foreground vehicle. The flexibility to do all of these things is the main reason why we like to send a VFX supervisor to a shoot – it allows us to react to unexpected events. Our compositors did a heroic job and must have done a hundred versions, but it was all worth it. It’s the one shot in the whole spot that just has to work and Fin was proud to help make it look the way it does.

Toolkit: Pipeline wise our 3d team uses Maya and renders with V-Ray. The compositing is done in Nuke and Smoke, but it’s usually Flame that does the real heavy lifting. By taking hundreds of shots of the crash scene intersection on set, software such as Agisoft Photoscan can construct a 3d model of the scene and texture that model with the actual photos from the day of the shoot. This then allows us to virtually walk around the film set and view it from any angle. We can measure distances, angles, camera positions. We were even using it to find individual pebbles and leaves on the ground to help nail some of the harder matchmoves. It is incredibly handy to be able to re-visit the set this way weeks after the shoot, sitting at a computer in another country. Photogrammetry has been around for a long time, but the software and the hardware are really maturing quickly to increase the fidelity of the models we can extract from it.

Credits

Agency: Clemenger BBDO, Wellington

Production Company: Finch

Director: Derin Seale

Visual Effects: Fin Design + Effects

VFX Supervisor: Stuart White

GE – ‘Childlike Imagination’

GE’s ‘Childlike Imagination’ races through the diverse innovations for which the company is responsible, with one small twist – they’re inside the mind of a child as she dreams of what her Mom does at GE. Method Studios created an equally diverse set of visual effects imagery for the spot, described below by artists from their New York and Los Angeles offices.

In the moonlight: Initially we were planning for some more establishing shots above water with our hero looking off the boat into the distance. Production was staged as a night shoot in the harbor at San Pedro. Balloon lights were used to help simulate the extreme moonlight and our camera was fitted with an underwater housing that was able to dip a few feet into the water. At first we planned to do a CG camera takeover, using the front end of the move as live action and transitioning to our CG camera once we breached the water surface, but in the end we went with a fully CG move to give us more freedom. From the live action shoot, only the boat and the girl were taken from the original plate. In the shot we have digital matte painting elements for the sky, moon and atmosphere layers, our CG water surface built in Houdini, and transitional CG bubbles created in Houdini to help convey the force of the camera plunging into the water. Once we’re underwater the entire environment is CG.

Under the sea: Creating the look for the underwater scenes was a lot of fun and quite challenging. We watched a lot of reference of scuba divers, small submersibles and of course lots of films with major underwater sequences. In reality the visibility underwater, even in very good conditions, is extremely limited and falls off to almost nothing within a matter of meters. Add this to the fact that we’re operating in a nighttime scene and you’d not really see anything. Finding the right balance between what would be physically accurate and what would work best for the shot took a number of attempts. If we didn’t push the fall off enough, the look started to feel more like a moonscape; too far the other way and we would lose all the details.

One thing that we found quite helpful in a lot of the real world reference was the constrained color palette. As you travel deeper under the surface you quickly lose the red and green components of light, resulting in nearly all-blue images. This was particularly helpful in grading the submarine shots with our hero as well as our split tone moon look in the opening shot (yellow above water and cyan underwater).
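The fall-off and color loss described above can be approximated with simple exponential attenuation, applied per color channel. The Python sketch below is only an illustration of that idea, not Method's Houdini or grading setup. The coefficient values are hypothetical, chosen so that red fades fastest and blue slowest; they are not measured data.

```python
import math

# Hypothetical absorption coefficients per metre (illustrative only):
# red is absorbed fastest, blue slowest.
ABSORPTION_PER_M = {"r": 0.35, "g": 0.07, "b": 0.02}


def attenuate(rgb, distance_m, coeffs=ABSORPTION_PER_M):
    """Scale each channel by exp(-k * d) for light travelling d metres."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    r, g, b = rgb
    return (
        r * math.exp(-coeffs["r"] * distance_m),
        g * math.exp(-coeffs["g"] * distance_m),
        b * math.exp(-coeffs["b"] * distance_m),
    )


# White light after 10 m of water: blue survives best, red is nearly gone.
r, g, b = attenuate((1.0, 1.0, 1.0), 10.0)
```

Raising or lowering the coefficients is the numerical equivalent of the balance the artists describe: too little fall-off reads like a moonscape, too much and the detail disappears.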

One element we found very useful to help sell the depth was the volumetric lighting created in the scenes by our turbines. We modeled floodlights into the base of each turbine footing, creating additional areas of visibility around the turbines. By controlling the spread of these lights we were able to convey the scale and density of the environment. Other components to complete the underwater look included multiple layers of plankton and several large schools of fish. CG supervisor for the sequence, Dan Letarte, was responsible for assembling all the components of the scenes and rendering them out of Houdini using Mantra.

The bubbles that emanate from the moon and ultimately represent the tidal forces that drive the turbines were another challenge. Two of our lead effects artists, Goncalo “Gonzo” Cabaca and James Kirk, worked on various techniques for creating the beautiful flowing “rivers” of bubbles. Ultimately these were built using a combination of simulations and procedural animation in Houdini. With well over a million bubbles in each of the streams, these were separated out for simulation and rendering and composited back together in the final scene. Additional simulations for the interaction of the bubbles and the turbine blades were also generated to add even more detail.

Giving planes wings: There were a few challenges involved with the wings, like how do we attach the engines to the bird wings and how far could we extend the flap animation without it looking comical? In order to solve these problems we found an abundance of reference and did quick previs to share with the director and agency for immediate feedback. We studied footage of geese flying and realized after a few animation tests we had to be true to the actual size of the plane – not a bird. Therefore the feathers had to act as if they had more weight and strength, which meant slowing everything down and decreasing the amount of subtle feather turbulence. After creating the matte painted sky we refined the lighting in the shot, going back and forth with comp. Compositing supervisor Dominik Bauch helped gather great reference of planes in all lighting conditions to study their surfaces and material behavior, pushing our lighting team to match the reference.

Hospital pick-up: We shot the hand picking up a practical miniature model of the hospital first with the DP using a dioptre to magnify the optical power of the lens. Next we applied the scale math and shot an empty parking lot in downtown LA on a cherry picker for the bg plate. Shooting tiles allowed us to stitch plates and apply a nodal camera move on the larger canvas. After we had the camera working for both plates we matte painted over the parking lot with the surrounding hospital environment of paths, gardens and roads. Finally we added some cg debris and dirt falling off the underside of the hospital and added a few digi doubles walking around the hospital interior. We experimented with the hospital lighting in both shots searching for a balance of translucency and self illumination knowing we had to keep it in the same world as the practical set.
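The "scale math" mentioned above is not spelled out, but the general principle for matching a miniature to a full-size background plate is that camera distances scale linearly with the model scale while angles (tilt, field of view) stay the same. The Python sketch below illustrates that arithmetic only. The 1:100 scale and the 0.5 m camera height are hypothetical numbers, not figures from the shoot.

```python
def full_scale_distance(miniature_distance_m, scale_denominator):
    """Convert a distance measured on a 1:N miniature to full scale.

    For a 1:N model, every camera-to-subject distance is multiplied
    by N when shooting the matching full-size plate.
    """
    if scale_denominator <= 0:
        raise ValueError("scale denominator must be positive")
    return miniature_distance_m * scale_denominator


# Hypothetical example: camera 0.5 m above a 1:100 model
# corresponds to 50 m above the real parking lot.
plate_height_m = full_scale_distance(0.5, 100)
```

A result in the tens of metres is the kind of height that calls for a cherry picker rather than a tripod.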

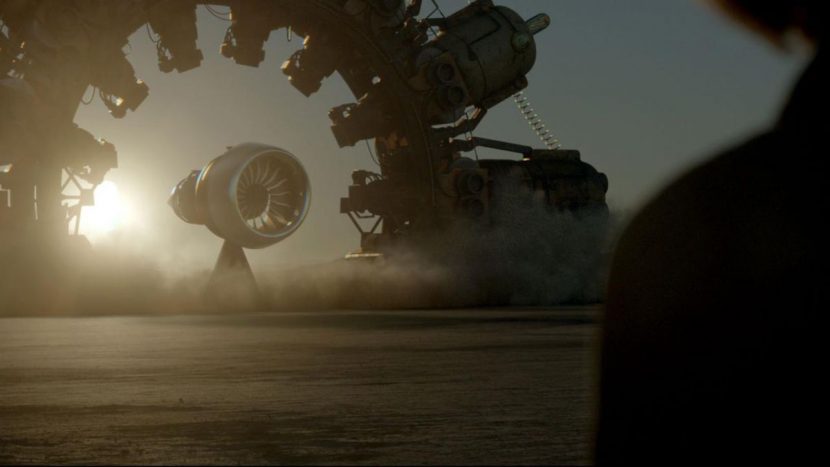

Engine in the desert: Shooting in the desert (at sunset) gave us a surrealistic starting point, so adding an imaginary 3d printer and engine to the vision was always going to be fun. We received the CAD files of the GE jet engine and the usual model cleanup was needed before texturing. We sourced online reference of the GE engines at testing facilities, which helped lock surface materials. We lit the engine and printer in V-Ray, with turbulent dust created in Houdini. With a couple of the shots being entirely cg, it was nice to be able to compose the shots and work with the position of the sun to enable some nice backlighting and flare kicks.

Through the trees, by train: Creating the train and trees sequence used a variety of techniques and software. We used our traditional hard-surface pipeline to create the train. It was modeled in Maya, textured in MARI and animated in Maya, with shading and lighting done in V-Ray through Maya. The trees required a more complicated setup. The trunk and first level of branches were modeled in Maya, sculpted in ZBrush, textured in MARI, and rigged and animated in Maya in the way we would create any cg character. Then, for each tree, procedural branches and leaves were grown from the base trunk using Houdini. These branches and leaves were animated via dynamic wire simulations to create secondary motion driven by the keyframe animation of the trunk. Houdini simulations of dust and flying leaves enhanced the realism of the surreal shots.

Credits

Agency: BBDO New York

Production Company: MJZ

Director: Dante Ariola

Visual Effects: Method Studios

VFX Supervisor: Benjamin Walsh