Welcome to Marwen has significantly advanced the art of digital humans. We spoke to director Robert Zemeckis and Visual Effects Supervisor Kevin Baillie about the remarkable advances in character animation.

The film tells the story of how a victim of a brutal attack finds a unique and beautiful therapeutic outlet to help him through his recovery process. This results in 46 minutes of innovative digital character animation of the cast as action-figure-like dolls. What is remarkable is how appealing the ‘heroic’ doll versions of the actors are on screen, and how successfully the team managed to cross the Uncanny Valley.

Robert Zemeckis

“Technology has to be embraced because it allows the filmmaker to make the best possible movie. It’s all there to serve the character in the story”.

Robert Zemeckis believes that “in this movie we finally figured out how to really do CG faces, to make sure that the emotion is completely translated to the Avatar”. He thinks, “it’s close to perfection. It’s really great. And it just took R&D. It took some time, it took some really talented people, some trial and error, but in the end, we got another tool in the movie toolbox that we can use to tell interesting stories”.

The idea for the film started eight years ago. In the early days, Zemeckis wasn’t 100% sure how he was going to make the film, “but while the script was being developed, the technology advanced. By the time we got to the place where we were making the movie, it all came together”.

He points out that from a certain perspective, the “whole thing with performance capture has always just been a horsepower issue”. The director has made several films using Motion Capture (MoCap) characters before, but none as technically successful as this one.

Technology in Film

People often ask the director about advances in technology, given Zemeckis’ incredible history of emotional storytelling in films with cutting-edge technology. Many of his films have been at the forefront of advanced filmmaking technology: for example, Who Framed Roger Rabbit, Forrest Gump, Back to the Future, Contact and, in the area of motion capture, The Polar Express and Beowulf. And yet for Zemeckis it is wrong to frame these films as being different from other films with fewer visual effects, as all films are complex technical undertakings.

“There’s nothing more ridiculous than a closeup, as far as an actor is concerned. He’s got a big piece of glass right in his face. You are looking at a piece of tape on a matte box. There are no other actors that you can see. Any fellow actors, also doing the scene, are 20 feet away, delivering their lines off camera. And then there’s a bunch of technicians, with their bellies hanging out, surrounding the camera AND the actor or actress has to deliver the most emotional moments of the movie. It’s pretty ridiculous, when you think about it,” Zemeckis points out. “And yet that’s the moment when everyone says, ‘Oh my God, look at that acting, – it’s just so real’, yet it’s being done in a completely unreal environment. Movies have always been technical. It’s a technical art form and this idea that there’s any reality anywhere, – unless you’re doing a documentary… – nothing is real! It never has been”.

Performance Capture

Zemeckis thinks of MoCap as being similar to black box theatre, where the actors have no costumes and no makeup; there is nothing but a folding chair, and the actors go onstage and create a performance. Zemeckis says good actors enjoy the MoCap process. “They are very enthusiastic about the process because all they have to do all day is act,” he says. As MoCap generally doesn’t require special lighting, camera framing and blocking, there is little reason to stop the acting to reset, and thus a MoCap session interrupts an actor’s process much less. “They don’t have to worry about hitting their mark. They don’t have to worry about focus. They don’t have to worry about doing scenes over and over and over for coverage. They get to act a scene from beginning to end. They get to pace a scene as if it were theatre, and they get to work with the other actors, in the volume with them”.

“The technology for performance capture focuses everything on performance and the tyranny of movie technology – of having to do an unnatural closeup, – of having to hit these marks, – of having to worry about whether this great performance will make it because of a focus problem… – all that stuff goes away. And everything just becomes about performance”.

It is somewhat ironic, then, that for Marwen the director found himself filming a hybrid solution: the performances were captured with MoCap, but the team was also using the Alexa footage of the actors’ faces. Unlike normal MoCap, in the Marwen MoCap studio the DOP had to accurately light the actors and address issues like shadows from hats, time of day, reflections and bounce light.

Actors in the process

In MoCap there is normally no need for hair, makeup and wardrobe. “I’ve worked in performance capture with a giant array of magnificent actors, the only thing that is ever complained about when actors do performance capture is not having a costume. They do like that, they like to dress up and find their character. They like that, they really do.” comments Zemeckis. “In Polar Express, for example, Tom Hanks played four different characters and he had to wear the same MoCap leotard for every character, it never changed. The only thing that changed was the little name tag on the front. For his process, Tom wanted a different pair of shoes for every character, so every character would feel different for him. I thought that was interesting”.

For the Marwen MoCap, while once again the wardrobe was a grey MoCap suit, makeup and key props such as hats were important. As the actors’ faces would be partially used, the DOP spent a lot of time in pre-production working out lighting solutions. Any shadows or lighting effects from hats or props needed to be captured visually, along with the MoCap data.

(Note: we will also be posting a podcast of our chat with Bob Zemeckis and Kevin Baillie )

How did they do it?

Visual Effects Supervisor Kevin Baillie has worked with Robert Zemeckis on many films. The director explains, “I do that with a lot of the crew, especially my key people, who are really talented and who I have a good rapport with. I always try and keep them since it is great to have a shorthand and to not have to start from square one on every movie”.

Kevin Baillie (VFX sup. Atomic Fiction/now Method Studios) joined very early, before the film was green-lit, to conduct a number of film tests. For one such test, the team explored going down the road of trying to shoot live action actors on giant sets. They tested augmenting the actors with doll parts, installing joints on their elbows, knees and so on. This also involved digitally slimming the actor’s silhouette to a more heroic doll shape, along similar lines to what was done for ‘skinny Steve’ in the first Captain America film.

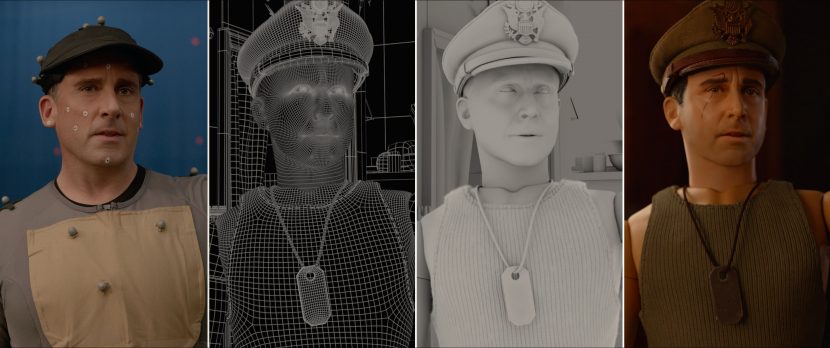

Atomic Fiction did several extensive tests of Steve Carell. The main test was a 2000 frame performance by Carell, not used in the film. “We matchmoved these doll joints onto him, and it just looked horrible” recalls Baillie. “It was like somebody in a high end Halloween costume and it looked completely goofy. We were stuck at that point. We knew straight MoCap wouldn’t work and augmenting live action footage wouldn’t work, but before we gave up, we brainstormed possible solutions”. As the team already had a full Steve Carell body track, they decided to try some unorthodox ideas. “We figured we had these 2000 frames of tracking, – which is effectively ‘fake motion capture’ of an actor. How about instead of augmenting Steve Carell with doll parts, we augment a digital doll with Steve Carell parts? This was the film’s ‘Aha moment’ where we first tried just using Steve’s real eyes and mouth.” The team partially warped and re-projected the actor’s face onto his digital doll and then matched the lighting. “It was just like, ‘wow, this works, it reads like a human performance’. Steve’s performance was coming through completely clearly, but it also looked totally believable as a doll”. Following this test, the film got green-lit and the methodology was refined for the main shoot.

It isn’t just comping a face on.

The problem with explaining the methodology is that it implies the final result is just Carell’s eyes and mouth tracked onto a doll, but the process was more complex in reality.

The doll versions of the actors’ faces are not 1:1 matches. Each of the dolls was designed to be a differently proportioned ‘hero’ version of its actor. This meant not only a cleaner set of features but often a thinner head with a different bone structure. “Steve’s doll had a sharper jawline, slightly smaller, and he’s a little bit more symmetrical” explained Baillie. “The women had smaller faces and more button noses. Every actor required a different treatment. There wasn’t any sort of a formula that we could apply across all the characters. Everyone was a custom design solution to successfully look correct as their doll alter ego.”

The matching of angles and tracking needed to be very accurate: the entire face had to be accurately tracked. Unlike many common face capture pipelines, the actors could not wear head rigs, since uninterrupted footage of their faces was needed. In addition, regardless of the comping work, the CG doll face still needed to be animated with exactly the same expressions and lip sync as the real actor. If an actor is talking, most of their face is affected, not just their mouth or eyes. Their chin, cheeks, eyebrows and forehead all move dramatically during any performance. Matching this without a head mounted camera rig (HMC) is not easy.

For any live action material to be retargeted and used with a digital doll, it also needed to have the same lighting. More than just the same direction, the lighting needed the same quality of light. It would look wrong to comp live action filmed with soft area lights onto a doll face lit with sharp shadows, for example.

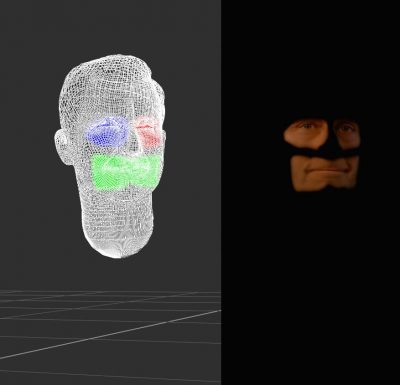

Finally, it is wrong to imagine the eyes and mouth as being patches simply comped over a CG face. Various passes, such as specular and digital makeup (including scars), had to be blended skilfully with the live action. “Ultimately, in order to get the projection methodology to work, we found that not only did the angle of the head have to match pretty precisely, but the underlying animation of the face itself had to match pretty closely because we couldn’t just project the footage of the actor onto the digital head” explained Baillie. The final solution used several components. “We had to use passes of the underlying CG renders on top of the projected plate photography to give it that plasticky sheen and make sure that it never looked like a person in a suit”. Without this level of layering, the ‘doll’ versions did not always look plasticized. “It was tricky, because we were using the actual face footage, we couldn’t shoot the actors and later scan their heads saying the lines, nor use a head cam rig”.

In the images above, the middle image indicates where the majority of the live action was used. It is clear from the lighting, scars and digital makeup that the regions are not just comped onto the dolls, but rather that a complex layered approach was used. The solution incorporated digital projection in NUKE and multiple passes of ‘doll skin effects’ to get the final result.
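As a rough illustration of the layering idea described above, the sketch below blends a single pixel: the projected plate replaces the CG render inside a soft mask, and a CG specular pass is then added back on top of the plate region to restore the plastic sheen. This is only a minimal sketch of the general principle, not Atomic Fiction’s NUKE setup; the function name, the `spec_gain` parameter and the pixel values are hypothetical.

```python
def blend_pixel(cg, plate, mask, spec, spec_gain=1.0):
    """Blend one linear RGB pixel (hypothetical helper, for illustration only).

    cg    -- CG doll render (beauty pass) at this pixel
    plate -- projected live action plate at this pixel
    mask  -- 0.0 keeps pure CG, 1.0 uses the plate fully (soft edges in between)
    spec  -- CG specular pass, re-applied over the plate region
    """
    out = []
    for c, p, s in zip(cg, plate, spec):
        # Mix the plate into the CG render according to the soft mask.
        base = c * (1.0 - mask) + p * mask
        # Add the CG specular back where the plate was used, so the
        # projected skin still picks up a doll-like sheen.
        out.append(base + spec_gain * s * mask)
    return tuple(out)


cg = (0.25, 0.25, 0.25)
plate = (0.75, 0.5, 0.25)
spec = (0.125, 0.125, 0.125)

print(blend_pixel(cg, plate, 0.0, spec))  # outside the mask: pure CG
print(blend_pixel(cg, plate, 0.5, spec))  # on the soft edge: a mix plus half the spec
```

In a real comp the mask, the amount of specular and the choice of passes would vary per shot and per actor, which is the shot-by-shot judgement the article describes.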

Knowing and Matching

To successfully light the actors and provide the correct final look of any shot, the PreViz needed to be more detailed than would normally be needed. Zemeckis also wanted to allow the actors the freedom to act and not be locked into prescriptive and restrictive choices on the MoCap stage.

Particularly important was being able to accurately light the previz. Additionally, a two-man VFX team was on set to adjust the lights in UE4 to match whatever changed or happened to the lighting during filming. If the DOP needed an extra eye light (not predicted in previz), he was free to add it and the UE4 team would dynamically match it on set in the Unreal Engine. Thus the two versions, the live action and the UE4 visualisation, were always matching and valid.

If the Unreal Engine had not been able to match the lighting in real time, it is extremely unlikely that the captured footage (shot on the Alexa on the MoCap stage) would have been correct for the final shots.

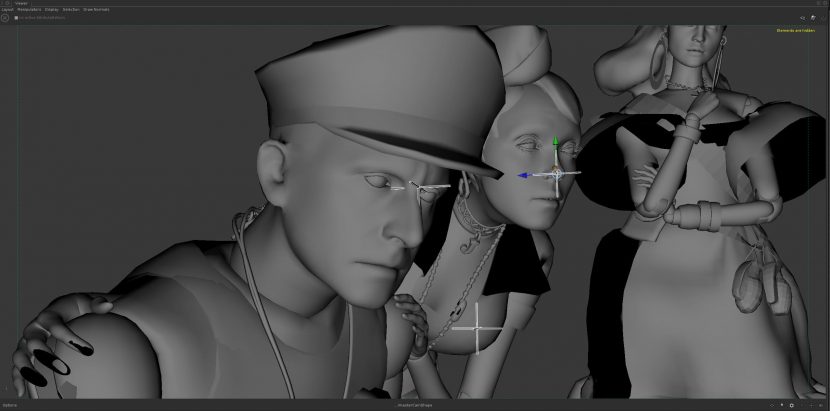

To use the face footage, the actors’ faces had to be lit during the MoCap as closely as possible to how they would appear in the final film, so the actors were on blue screen stages, in grey MoCap suits, without any close-up reference face cameras. “The process needed for producing the underlying facial animation required us to build a full FACS rig of every actor on the project and hand keyframe animate all of the faces throughout the entire 46 minutes that we spend in the imaginary doll world”, commented Baillie. The doll facial animation was done by traditional character animators, and was entirely keyframed.

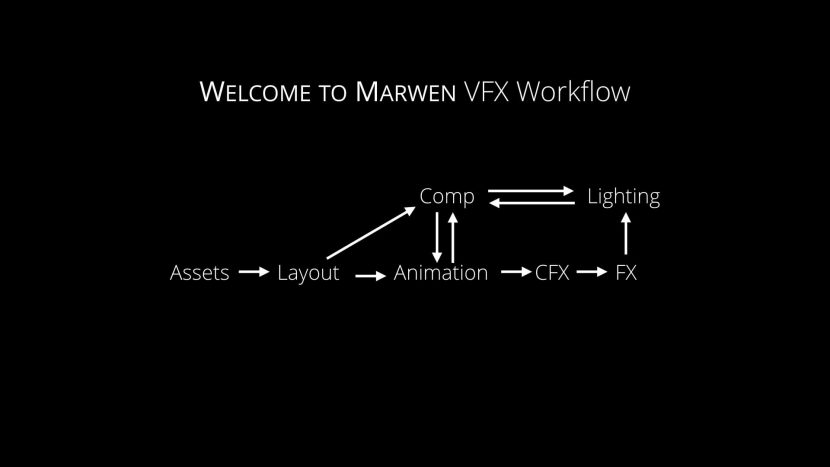

The whole process became much more agile, and less of a linear, traditional VFX pipeline, as a result of the new approaches the team worked out.

For every shot there was a NUKE comp template set up. This got Atomic Fiction’s compositors 70% of the way on how much of the mouth or face to use, but it was still up to the compositors to determine if they used the folds at the sides of the mouth or how much of an actor’s eyebrows would be included. “They needed to determine how much de-aging we included,” comments Baillie. “How much spec do we bring back on top from the CG render to plasticize the actor? Every single shot needed custom love to make sure that we were retaining the soul of the actor, which is really what Bob (Zemeckis) kept going back to: ‘I don’t feel the soul of Steve in this shot or I don’t feel the soul of Leslie Mann in this shot’. And then the team at Atomic Fiction would have to go back and figure out why that was”. The solution was rarely the same between any two shots. Sometimes it was pulling more facial features in and sometimes less. Other times it was “a three degree tracking mismatch on the angle of the head or the key light was swung a little too far around. All those things would completely break the illusion”. For Baillie and his team the biggest surprise in compositing was how sensitive this methodology was to lighting. “If we didn’t match what the lighting was on the MoCap stage exactly, it would just break the likeness completely”. This was why the detailed ‘Production Viz’, a real-time game version of the film emulating the set, sky and area lights, was so critical to the process.
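To put the “three degree tracking mismatch” in concrete terms, here is a minimal sketch that measures the angle between the head’s forward direction in the plate track and in the CG animation. It is purely illustrative: the function names are hypothetical, the article does not say how the team measured mismatch, and the one degree tolerance is an assumed value rather than a figure from the production.

```python
import math


def angle_between_deg(a, b):
    """Angle in degrees between two 3D direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    # Clamp to guard against tiny floating point overshoot before acos.
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_theta))


def head_track_ok(plate_forward, cg_forward, tolerance_deg=1.0):
    """True if the two head directions agree within the tolerance.

    tolerance_deg is an assumed illustrative threshold, not a production value.
    """
    return angle_between_deg(plate_forward, cg_forward) <= tolerance_deg


# A CG head yawed three degrees away from the tracked plate head.
plate_forward = (0.0, 0.0, 1.0)
yaw = math.radians(3.0)
cg_forward = (math.sin(yaw), 0.0, math.cos(yaw))

print(round(angle_between_deg(plate_forward, cg_forward), 3))
print(head_track_ok(plate_forward, cg_forward))
```

A check like this only covers head orientation; the quote makes clear that key light placement could break the likeness just as easily.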

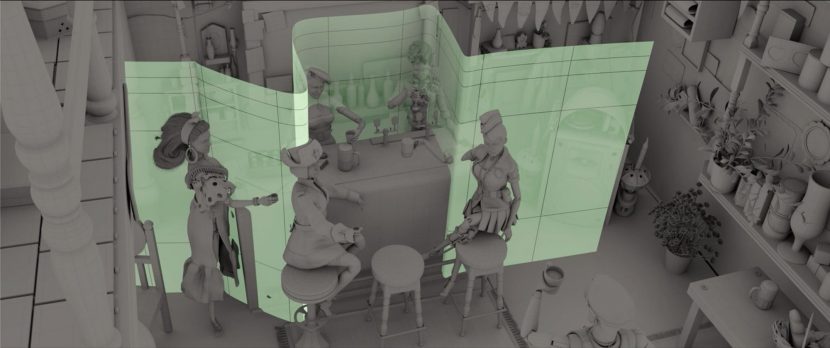

The complex compositing that brought the whole process together was pivotal. The compositing was all done in NUKE and the lighting and rendering in KATANA. The comp team played an enormous role not only with the characters and the camera blocking but also in managing the focus plane for the shots. Working with Pixar’s RenderMan R&D team, the team at Atomic Fiction managed to work out a way to have a variable focus plane, or ‘focus curtain’, that allowed the compositors to keep the characters’ faces in focus in a way a traditional camera would not allow. Much like a real world diopter (on steroids), the Marwen cameras can adjust the focus point across the frame.

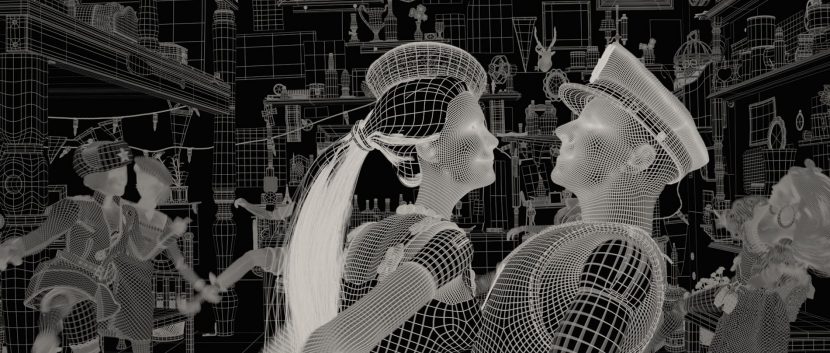

In the scene below all the main female dolls can be in focus while being at varying distances from the lens, but still inside the look of a shallow depth of field that we associate with miniatures.
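One way to picture the ‘focus curtain’ is as a focus distance that varies across the frame instead of being a single value per shot. The sketch below is an assumption-laden illustration, not the RenderMan implementation, which the article does not detail: it interpolates a focus distance between hypothetical anchor points placed at each character’s horizontal screen position, and uses the standard thin-lens circle of confusion formula to show how much blur each doll would receive.

```python
def focus_distance_at(x, curtain):
    """Piecewise-linear focus distance at normalized screen position x.

    curtain is a list of (x, distance) anchors with strictly increasing x;
    positions outside the anchors are clamped to the nearest anchor.
    """
    if x <= curtain[0][0]:
        return curtain[0][1]
    if x >= curtain[-1][0]:
        return curtain[-1][1]
    for (x0, d0), (x1, d1) in zip(curtain, curtain[1:]):
        if x0 <= x <= x1:
            t = (x - x0) / (x1 - x0)
            return d0 + t * (d1 - d0)


def circle_of_confusion(subject_dist, focus_dist, focal_length, f_number):
    """Thin-lens blur circle diameter; all lengths in the same unit (mm here)."""
    aperture = focal_length / f_number
    return (aperture * focal_length * abs(subject_dist - focus_dist)
            / (subject_dist * (focus_dist - focal_length)))


# Hypothetical shot: one doll 300 mm from the lens at screen left,
# another 600 mm away at screen right, 50 mm lens at f/2.
curtain = [(0.25, 300.0), (0.75, 600.0)]

# Single focus plane on the near doll: the far doll is visibly soft.
print(circle_of_confusion(600.0, 300.0, 50.0, 2.0))

# Focus curtain: the focus distance follows each doll across the frame.
far_focus = focus_distance_at(0.75, curtain)
print(circle_of_confusion(600.0, far_focus, 50.0, 2.0))
```

Anything that is not on the curtain, such as the background behind the dolls, still falls off with the shallow depth of field, which is what keeps the miniature look.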

The digital character work in Marwen is innovative, impressive and advances CG facial retargeting in ways that will no doubt influence future projects over the coming years.

Note: Atomic Fiction is now a part of Method Studios.