On the fxpodcast this week we talk to The Mill’s Gawain Liddiard and Daniel Thuresson about the Google Spotlight Story ‘HELP’, a 360-degree short directed by Justin Lin through production company Bullit. The Mill collaborated with Google ATAP on the project, which involved shooting with a custom four-RED camera rig. The VFX studio incorporated many visual effects, plus a creature, into the film while also developing its own on-set stitching tool, Mill Stitch.


https://vimeo.com/130096298

We also talked to the project’s visual effects supervisor Alex Vegh from Bullit about the on-set shooting process.

fxg: How did you get involved in the short?

Vegh: I’ve been a longtime collaborator with Justin. I did previs for pretty much all of his movies, starting with Tokyo Drift, and I did some television work for him too. I’ve done previs supervision, vfx supervision and second unit shooting. When this project came up it was such a great opportunity to work in this format. It was daunting, but Justin is not the kind of person to shy away from doing something new; he embraces that. It was an interesting challenge because I don’t think anybody had done it to the quality level that this piece has. A lot of what’s out there is more at a GoPro level. The goal was really to create something of cinematic quality with 360 degrees of live action. That was an interesting problem to try and solve.

fxg: What development was required in terms of the camera rig and VR tech?

Vegh: We had to work out a number of things in parallel: what the story was, how it was going to play out, and how we were going to move the camera (or whether it would move at all). A lot of the technical solutions came from there. We had some great collaboration with The Mill. I also spoke to people everywhere from DARPA to Google about the latest and greatest stuff that’s not quite out there yet, but that maybe we could get our hands on and use. We also had a deadline to get all of this by, which meant we had to use off-the-shelf components and figure out a clever way to put them all together.

fxg: How did you approach the camera rig?

Vegh: We chose REDs over ARRIs and all the other great cameras out there. Partly it was a quality issue and partly a resolution issue: the REDs were the highest resolution camera we could get that would give us that look at 30 fps, and at night. We started off down a different route, using 2K SDI cameras. These were going to be bespoke 2K resolution cameras with pretty decent color depth that would use C-mount lenses. You can get those lenses from Fujinon, and a couple of other companies make them too.

That was going to be what we called ‘the Death Star’: a sphere 18 to 20 inches wide with a series of 16 cameras on it, though the number of cameras grew and shrank over time. How we wound up with the REDs comes down to parallax, and there are a couple of different approaches to solving that. One is optical: you try to get the cameras as nodal as humanly possible, so you can get your action closer to the cameras. The other is more software-derived: you try to extract all the depth information from all these cameras, and then you project your pixels onto geometry.

The biggest issue is seams. Once two cameras are separated from each other, the parallax differs for objects that are close versus far away. That was one of the biggest things we had to figure out. Our solution was to use as few cameras as possible, to have as few seams as possible. The other thing we did to aid us was to use an operated head. As long as we didn’t move it too fast, the motion blur still felt correct, and you could operate it a bit to keep people in one lens for a long stretch of time (since crossing frames is one of the tricky things).
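
A quick way to see why seams are so sensitive to distance: two lenses separated by a baseline see the same point from slightly different directions, and that angular disagreement grows sharply as the point gets closer. The sketch below is a simple geometric illustration, not The Mill's stitching math; it assumes a hypothetical 20 cm separation between neighbouring lens centres (the interview gives no such figure) and a point straight ahead of the midpoint between them.

```python
import math


def parallax_deg(baseline_m: float, distance_m: float) -> float:
    """Angle (degrees) between the rays two cameras need to see the same point.

    Assumes the point sits on the perpendicular bisector of the baseline,
    distance_m away from the baseline's midpoint.
    """
    return math.degrees(2.0 * math.atan(baseline_m / (2.0 * distance_m)))


# Hypothetical 0.2 m lens separation; not a measurement of the HELP rig.
for distance in (0.5, 1.0, 3.0, 10.0, 100.0):
    print(f"{distance:6.1f} m -> {parallax_deg(0.2, distance):.3f} deg")
```

Because the error depends on depth, a blend that lines up at one distance will misalign at others, which is why keeping a performer inside a single lens, and using fewer seams, helps.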

When you get to a four-camera solution and you’re only looking at the cardinal points, you need a field of view of 180 degrees to get the correct amount of overlap. We looked at many different lenses, including a 6mm Nikon made in the 1970s. It’s huge; it looks like a giant pizza plate. It sees behind itself, with a 220-degree field of view.
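
The overlap arithmetic behind the four-camera choice can be sketched in a few lines. This assumes evenly spaced cameras and looks only at the horizontal field of view, ignoring parallax and lens falloff: neighbouring optical axes sit 360/N degrees apart, so each seam shares whatever field of view is left over.

```python
def seam_overlap_deg(num_cameras: int, hfov_deg: float) -> float:
    """Horizontal overlap (degrees) between two neighbouring cameras.

    Assumes the cameras are evenly spaced around 360 degrees and ignores parallax.
    A negative result means there is a gap between the two views.
    """
    spacing = 360.0 / num_cameras  # angle between adjacent optical axes
    return hfov_deg - spacing


for cameras in (2, 3, 4):
    print(cameras, "cameras:", seam_overlap_deg(cameras, 180.0), "deg of overlap per seam")
```

With 180-degree lenses, two cameras would only just meet (0 degrees of overlap), three would share 60 degrees per seam, and four share 90 degrees, which leaves room to place blends away from the action.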

We tried everything from that to lenses from a company that specializes in back-up cameras for cars. We ended up testing a Canon zoom which was fairly sharp, not necessarily the sharpest, but it was even across the entire lens. You wouldn’t have softness in one section of the lens and sharpness in another. That was very important when it came to the blending.

I worked with The Mill on whether we wanted a two-, three- or four-camera rig. We settled on a four-camera rig because we wanted to protect for resolution. That’s the other side of the story. GoPro cameras aren’t of the same quality as the RED: you get all kinds of jello’ing from the rolling shutter, the color depth isn’t as good, and they basically have plastic-quality lenses. Granted, the Canon lenses are just photography lenses, but for what we were doing they were really the best option out there.

The rig also had to be stuck and plated together from the get-go. The Mill came up with a very creative way of tracking the lens deformations and the glass itself. Part of the process was making sure all the cameras stayed locked in place, with each lens dedicated to one particular camera. That’s because if you take these lenses off and put them back on again, they project onto the chip differently each time. Again, they’re not cinema-quality lenses.
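
The interview doesn't describe The Mill's calibration method, but a toy projection model shows why a calibration belongs to one specific lens-and-body pairing. The sketch assumes a lens that follows the idealized equidistant fisheye model (image radius proportional to the angle off the optical axis); the principal point values and the few-pixel shift after remounting are hypothetical.

```python
import math


def project_equidistant(theta_deg, phi_deg, f_px, cx, cy):
    """Ideal equidistant fisheye: a ray theta_deg off the optical axis, at azimuth
    phi_deg around it, lands at radius r = f_px * theta (radians) from the
    principal point (cx, cy). Real lenses need extra distortion terms."""
    r = f_px * math.radians(theta_deg)
    phi = math.radians(phi_deg)
    return cx + r * math.cos(phi), cy + r * math.sin(phi)


# Hypothetical calibration before and after the lens is remounted: the
# principal point shifts by a few pixels, so the same ray lands somewhere new.
before = project_equidistant(80.0, 30.0, f_px=1200.0, cx=3072.0, cy=1580.0)
after = project_equidistant(80.0, 30.0, f_px=1200.0, cx=3075.0, cy=1578.0)
print(before, after)
```

In this idealized model the remount simply translates every ray by the principal-point shift; a real remount can also introduce tilt, which this sketch does not model. Either way, a stitch solved for the old mapping no longer lines up.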

fxg: What about the actual thing the camera rig itself would be attached to?

Vegh: We started off with a cart system, but we found we were better off moving the camera from above. We had a great deal of elevation changes. At one point we talked about doing motion control, but we found there wasn’t a moco unit out there that would give us the freedom and length of movement we wanted. So we wound up using a Spider-cam cable-cam, working with three different companies on cable-operated systems, to try and keep to as few passes as possible so we didn’t need repeatable movement.

It was all intended to capture everything in one take. There are three different sections: Chinatown, the subway and the LA River. Those three sections were all tied together with what we shot on stage and seamlessly blended, so you couldn’t really tell you were transitioning from one place to another.

The cameras were all rotated sideways for the most width. When the lens projected its image onto the chip, the top and bottom would get cropped a little, but on the sides you got the full image. So we rotated them sideways because we wanted more overlap. Not as much overlap was needed at the top because you’d be seeing the rig there anyway (and it would be painted out). So we adjusted the camera rigs to maximise overlap, especially for the moments when a character crosses frame. We knew those would be our critical moments, and we wanted to give as much overlap as humanly possible.

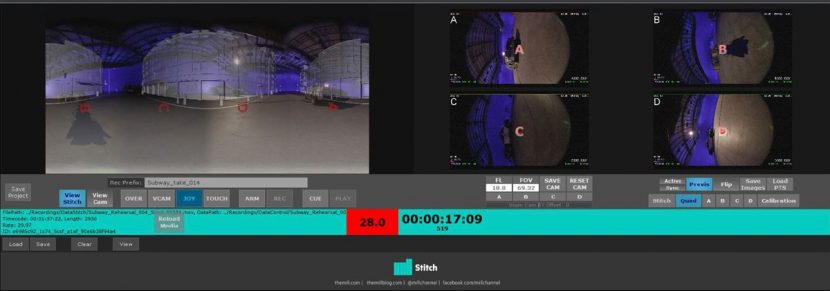

We had four monitors on the stage showing fisheye views, and when the director came out we thought he was never going to be able to understand this. You can’t really frame anything like that. So The Mill developed a tool called Mill Stitch, which did a low-res stitch of the complete sphere in realtime. Then we had a joystick controller that allowed us to change virtual lenses. So we could go to a 22mm lens: you’re looking at this 360-degree lat-long map and you could punch in or zoom out as desired.
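
Mill Stitch's internals aren't described here, but the general idea of a virtual lens on a lat-long map can be sketched: for each pixel of a rectilinear "virtual camera", cast a ray, rotate it by the operator's yaw and pitch, and look up the matching longitude and latitude in the panorama. The sketch assumes a 36mm-wide (full-frame) sensor to turn "22mm" into a field of view, which the interview doesn't specify, and it uses our own axis conventions and nearest-pixel lookup.

```python
import math


def hfov_from_focal(focal_mm: float, sensor_width_mm: float = 36.0) -> float:
    """Horizontal field of view (degrees) of a rectilinear lens on a given sensor width."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_mm)))


def virtual_lens_lookup(u, v, out_w, out_h, focal_mm, yaw_deg, pitch_deg,
                        pano_w, pano_h, sensor_width_mm=36.0):
    """Return the lat-long pixel (x, y) seen by output pixel (u, v) of a virtual
    rectilinear camera. Camera axes: x right, y down, z forward;
    positive yaw turns right, positive pitch tilts up."""
    f_px = focal_mm / sensor_width_mm * out_w          # focal length in output pixels
    x = (u + 0.5) - out_w / 2.0                        # ray through the pixel centre
    y = (v + 0.5) - out_h / 2.0
    z = f_px
    p, w = math.radians(pitch_deg), math.radians(yaw_deg)
    y, z = y * math.cos(p) - z * math.sin(p), y * math.sin(p) + z * math.cos(p)  # pitch
    x, z = x * math.cos(w) + z * math.sin(w), -x * math.sin(w) + z * math.cos(w)  # yaw
    lon = math.atan2(x, z)                             # -pi..pi, 0 = straight ahead
    lat = math.atan2(-y, math.hypot(x, z))             # -pi/2..pi/2, positive = up
    return (lon / (2.0 * math.pi) + 0.5) * pano_w, (0.5 - lat / math.pi) * pano_h


print(hfov_from_focal(22.0))
print(virtual_lens_lookup(960, 540, 1920, 1080, 22.0, 45.0, 10.0, 6144, 3072))
```

Punching in or zooming out is just a change of `focal_mm`; swinging the joystick changes `yaw_deg` and `pitch_deg`. A realtime viewer would run this per pixel on the GPU rather than in Python.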

That also takes resolution into account. Phones are HD quality, so why do we need all that resolution? It’s because you’re only viewing a small segment of that entire world at any one time, so you need enough pixel density in that segment to have a good quality image. That’s why we used the REDs at 6K.
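
The resolution argument is simple arithmetic. Assuming, purely for illustration, that a phone viewer shows a 90-degree horizontal slice of the sphere across a 1920-pixel-wide screen (the actual viewer settings aren't given), the full 360-degree lat-long needs four times that width to deliver one source pixel per screen pixel.

```python
def required_pano_width(viewport_px: int, viewport_hfov_deg: float) -> float:
    """Lat-long width needed so a viewport shows one source pixel per screen pixel."""
    return 360.0 * viewport_px / viewport_hfov_deg


def pano_px_per_degree(pano_width_px: int) -> float:
    """Horizontal pixels per degree of a 360-degree lat-long image."""
    return pano_width_px / 360.0


print(required_pano_width(1920, 90.0))   # 7680.0
print(pano_px_per_degree(1920))          # 5.333...
```

An HD-wide lat-long would spread only about 5.3 pixels over each degree, while the assumed viewport wants about 21.3, which is why capture resolution well beyond HD matters.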

fxg: How was material reviewed on set?

Vegh: We had four monitors showing the bare image from each lens, with its fisheye projection. Then we had Mill Stitch on a very large monitor, operated with a joystick, something like an Xbox controller. I was operating it for Justin so he could look at and follow the action and see how everything was playing out, strictly from a story standpoint.

The other tool we had was a wireless monitor with the correct lensing that Justin could take onto the stage and point anywhere he wanted to look. That way he could have an actor or actress move around and work out the framing. When you’re looking at a lat-long, it’s really hard to understand what your final framing is going to look like.

One of the big things was giving the director the creative freedom to do what he wanted to do, and not letting technology get in the way of that: giving him as much freedom as possible and letting things play out as naturally as possible, which you can do in a 360-degree movie. One thing we learned along the way from the cable-cam companies was that the systems are very capable, but they’re generally pre-programmed. You can slow the move down or speed it up, but it’s always going to follow that path. So when you have the actors on stage, it turns into more of a dance, like a play. Normally the actors would act and you’d have the camera following them. But our camera is going to move where it’s going to move; there’s no adjusting for that. So it’s a different sensibility for directing action.
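
The constraint Vegh describes, where speed can change but the path cannot, can be illustrated with a hypothetical sketch (not any vendor's control software): the move is a fixed polyline, and the only live input is a speed multiplier that advances the distance travelled along it. Every sampled position therefore stays on the pre-programmed path.

```python
import math


def point_on_path(path, s):
    """Position at arc length s along a fixed polyline path of (x, y, z) points."""
    remaining = max(0.0, s)
    for a, b in zip(path, path[1:]):
        seg = math.dist(a, b)
        if remaining <= seg:
            t = remaining / seg if seg > 0 else 0.0
            return tuple(pa + t * (pb - pa) for pa, pb in zip(a, b))
        remaining -= seg
    return tuple(path[-1])  # clamp at the end of the move


def play_move(path, base_speed, speed_scale, dt, steps):
    """Advance along the pre-programmed path; speed_scale(t) is the only live control."""
    s, samples = 0.0, []
    for i in range(steps):
        s += base_speed * max(0.0, speed_scale(i * dt)) * dt
        samples.append(point_on_path(path, s))
    return samples


# Hypothetical move: 10 m along x, then 10 m along y, at 1 m/s, slowed to half speed after 5 s.
move = [(0.0, 0.0, 3.0), (10.0, 0.0, 3.0), (10.0, 10.0, 3.0)]
for position in play_move(move, 1.0, lambda t: 1.0 if t < 5.0 else 0.5, 1.0, 15):
    print(position)
```

Slowing down changes when the camera reaches a given spot, never where it goes, so the actors have to hit their marks relative to the camera rather than the other way around.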

fxg: Can you talk about on-set approaches to lighting and shot design, given that it was a VR project?

Vegh: One of the greatest comments came from one of the guys on set who was moving some stuff around. He said, ‘OK, I’m going to go move this stuff over here.’ ‘But it’s in the shot,’ we’d say. ‘Yeah, but what are you shooting?’ And we’d say, ‘Everything. Everything is in the shot.’ It makes for an interesting challenge for a DP who wants to light for a close-up. How do you do that in 360 degrees so the lights are not in the way, and not causing a lens flare in one lens while being OK in another?

The other consideration was that Justin likes very practical lighting, lighting that feels like it actually exists in that environment, and he wanted to sell that. The DPs had to adjust for the 360 degrees, but they did wonderful work. There were some automated lighting systems to help out with that.

Even in the subway tunnel there was a lot of interactive lighting on our actors. They built these huge strobing lighting rigs that worked like a tracer light, starting at one end and running all the way down to the other to give the illusion that the subway car was moving. They built a lot of bespoke rigs to capture it. The production designer also did an amazing job building something that could accommodate our Spider-cam system. You have to be very aware of how this thing travels, and which wires are going to be in the way from the beginning of the shot to the end.
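
The timing of a tracer-style chase can be sketched as follows. This is a hypothetical illustration, not the production's lighting control: it assumes evenly spaced lights and a constant simulated train speed, and fires each light when the imaginary car would pass it, so the flash appears to travel at train speed.

```python
def chase_fire_times(num_lights, spacing_m, train_speed_kmh, start_s=0.0):
    """Flash time (seconds) for each light in an evenly spaced line, so the flash
    travels down the line at the simulated train speed."""
    speed_m_per_s = train_speed_kmh / 3.6
    interval_s = spacing_m / speed_m_per_s   # time for the 'train' to pass one light spacing
    return [start_s + i * interval_s for i in range(num_lights)]


# Hypothetical: 20 lights, 2 m apart, simulating a car passing at 40 km/h.
print(chase_fire_times(20, 2.0, 40.0)[:4])
```

Raising the simulated speed or widening the spacing changes only the interval between flashes, which is the single parameter a lighting desk would need to tune.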

fxg: Did you previs the film?

Vegh: We did previs it. One approach was to make a hero camera, which was a standard QuickTime that was easy to move around, email and send off to people. From there we also built cubic spaces representing our real camera and put those onto devices (phones, tablets) to play with. ATAP and the guys were great at making a brand new tool out of nothing: we could just drop in some QuickTimes, let it process and boom, you’d have the ability to go forward, backward, zoom in and out, and look at the complete lat-long.
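
Assuming the “cubic spaces” were cube maps (six square faces surrounding the viewer, a common way to store 360-degree imagery for playback on devices), the core lookup is picking which face a view direction hits and where on that face it lands. The sketch below uses the OpenGL cube-map face orientation; the ATAP tool's actual format isn't described in the interview.

```python
def cube_face(x, y, z):
    """Return (face, s, t) for direction (x, y, z): which cube-map face the ray
    hits and where on that face, with s and t in [0, 1].
    Face orientation follows the OpenGL cube-map convention."""
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax == ay == az == 0:
        raise ValueError("direction must be non-zero")
    if ax >= ay and ax >= az:          # x is the major axis
        face, major, sc, tc = ("+x", ax, -z, -y) if x > 0 else ("-x", ax, z, -y)
    elif ay >= az:                     # y is the major axis
        face, major, sc, tc = ("+y", ay, x, z) if y > 0 else ("-y", ay, x, -z)
    else:                              # z is the major axis
        face, major, sc, tc = ("+z", az, x, -y) if z > 0 else ("-z", az, -x, -y)
    return face, 0.5 * (sc / major + 1.0), 0.5 * (tc / major + 1.0)


print(cube_face(0.0, 0.0, 1.0))   # ('+z', 0.5, 0.5)
print(cube_face(1.0, 0.2, -0.5))
```

Cube faces sample the sphere far more evenly than a lat-long does near the poles, which is one reason they suit lightweight device playback.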

fxg: What kind of challenges then presented themselves in incorporating the visual effects?

Vegh: One of the first challenges was the amount of data: 6K raw files from four cameras shooting multiple takes. It was terabytes of data, so we had to manage that and be able to work with it. There’s a lot of information you have to push and pull around. Then there was reviewing the material. The stitch tools weren’t just for production; they were also used for reviewing material over at The Mill. That way, when Justin or I were reviewing the material, it was easy to look at.
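
A back-of-the-envelope estimate shows how quickly four 6K raw streams reach terabytes. Every input here is an assumption for illustration: a 6144 x 3160 frame, 16 bits per photosite before compression, and an 8:1 compression ratio. The interview only says “6K raw” and “terabytes”.

```python
def capture_bytes(cameras, fps, seconds, width, height, bits_per_photosite, compression_ratio):
    """Rough storage estimate for compressed raw capture from a multi-camera rig."""
    raw_frame_bytes = width * height * bits_per_photosite / 8
    return cameras * fps * seconds * raw_frame_bytes / compression_ratio


# All inputs below are assumptions for illustration, not HELP production figures.
per_hour = capture_bytes(cameras=4, fps=30, seconds=3600, width=6144, height=3160,
                         bits_per_photosite=16, compression_ratio=8)
print(f"{per_hour / 1e12:.2f} TB per hour of rolling")
```

Under these assumptions that is roughly 2.1 TB for every hour the rig rolls, before multiple takes, backups or stitched working copies are counted.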

When you review normal shots, you watch them several times and make comments. But with this, you sit in a room in an office chair, turn off the lights, and play it in one direction, over and over, then rotate. Play it in the next direction, over and over, then rotate. Then again. It would take me two or three hours to review just one section. You have to look in every single direction (up, down, all over the place) to make sure you’re hitting every single note. With this there are so many notes; it’s exponential. Normally you might take a matte and pull it off frame and that’s OK, but with this there is no off-frame, so you can’t do that. That was interesting.

https://vimeo.com/129240342

Another interesting thing was color timing, and working with those tools in a standard color timing suite. We would normally have a couple of devices on hand, and then in the suite you’d zoom in or out. But generally you’re dealing with an overall lat-long, so you get a nice base and then deal with individual areas once you’ve got that. You kind of get used to the lat-long after a while; you understand it.

Then there’s motion blur. In a spherical environment, your motion blur in a lat-long map can’t just streak from left to right; it actually has to follow that curve. The pixels at the bottom versus the pixels at the center and the top are completely different. You can’t apply the same type of blur at the top and the bottom, because your capture resolution is different at the poles than it is at the center. It’s almost like an inverse bell curve for motion blur in those spots. We were also working at 30 fps so we could port over to all of the devices.
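
The geometry behind this can be sketched generically (this is not The Mill's blur implementation). Every row of a lat-long map spans 360 degrees, but the circle of latitude it represents shrinks by cos(latitude), so features are stretched horizontally by 1/cos(latitude). That is the “inverse bell curve” shape. Also, straight motion on the sphere follows a great circle, which bows toward the pole when drawn in lat-long, so a blur streak has to be sampled along that curve rather than smeared horizontally.

```python
import math


def horizontal_stretch(lat_deg):
    """How much wider a small feature at this latitude appears in a lat-long map
    than the same feature at the equator: each row spans 360 degrees, but the
    circle of latitude it represents shrinks by cos(latitude)."""
    return 1.0 / math.cos(math.radians(lat_deg))


def streak_in_latlong(a, b, n=8):
    """Sample a blur streak along the great circle from unit vector a to unit
    vector b (z is up; a and b must not be opposite) as (lon_deg, lat_deg) points."""
    omega = math.acos(max(-1.0, min(1.0, sum(p * q for p, q in zip(a, b)))))
    points = []
    for i in range(n + 1):
        t = i / n
        if omega < 1e-9:
            v = a
        else:  # spherical linear interpolation between the two directions
            wa = math.sin((1.0 - t) * omega) / math.sin(omega)
            wb = math.sin(t * omega) / math.sin(omega)
            v = tuple(wa * p + wb * q for p, q in zip(a, b))
        x, y, z = v
        points.append((math.degrees(math.atan2(y, x)),
                       math.degrees(math.asin(max(-1.0, min(1.0, z))))))
    return points


# Motion between two points at 45 degrees latitude, 90 degrees apart in longitude.
c = math.cos(math.radians(45.0))
for lon, lat in streak_in_latlong((c, 0.0, c), (0.0, c, c), n=4):
    print(f"lon {lon:6.2f}  lat {lat:6.2f}")
```

Both endpoints sit at 45 degrees latitude, yet the middle of the streak rises to roughly 55 degrees, so a purely horizontal smear would put the blur in the wrong place.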

The trickiest thing for me was keeping the live action tied into the CG without having that separation. Most of the artists got used to working in the lat-long form, but you have to isolate all the possible reasons something looks off, whether it’s a lighting problem or a matte. There was a lot of set extension alongside real sets, and digital lighting alongside practical lighting. There was a lot of detail work and resolution that you wouldn’t normally do. In a traditional shot, an element might be a simple couple of polygons with some textures applied. In 360 degrees you can see that thing coming towards camera and going away from camera, so it needs the resolution to withstand that.
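
A rough way to see why assets need more detail in 360 degrees is to compute how many lat-long pixels an object covers as it approaches the camera. The sketch assumes a hypothetical 1 m wide asset, centred on the horizon, in a hypothetical 6144-pixel-wide lat-long.

```python
import math


def pixels_across(size_m, distance_m, pano_width_px):
    """Approximate horizontal pixels an object covers in a 360-degree lat-long
    image, for an object centred on the horizon and facing the camera."""
    angle_deg = math.degrees(2.0 * math.atan(size_m / (2.0 * distance_m)))
    return angle_deg * pano_width_px / 360.0


# Hypothetical 1 m wide asset in a hypothetical 6144-pixel-wide lat-long.
for d in (20.0, 5.0, 1.0):
    print(f"{d:5.1f} m -> {pixels_across(1.0, d, 6144):7.1f} px")
```

Under these assumptions the same asset grows from under 50 pixels across at 20 m to over 900 at 1 m, and since nothing can be cut away, its textures and geometry have to hold up across that whole range.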

fxg: What about the sound design, how was that accomplished?

Vegh: We did a traditional stereo mix, and then that was put into a binaural audio space. We took the locations of where the sound would be, tracking all of our people, our actors and CG, in a 3D space, and then each of those sounds was individually projected into that environment. When you turned your head, the sound was correctly located in space, which was very impressive.
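
The head-tracking part of this can be sketched: given a tracked source position and the viewer's head yaw, compute the source's azimuth relative to where the viewer is facing, then feed that to a spatializer. True binaural rendering uses head-related transfer functions (HRTFs), which this sketch does not model; the constant-power stereo pan below is only a crude stand-in, and the coordinate conventions are our own assumptions.

```python
import math


def head_relative_azimuth(listener_xy, head_yaw_deg, source_xy):
    """Azimuth of a source relative to the listener's facing direction, in
    degrees in [-180, 180); 0 = straight ahead, positive = to the right.
    World frame: +y is 'north' (yaw 0), +x is to the right of it."""
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]
    bearing = math.degrees(math.atan2(dx, dy))       # clockwise from +y
    return (bearing - head_yaw_deg + 180.0) % 360.0 - 180.0


def constant_power_pan(azimuth_deg):
    """Crude stereo stand-in for binaural rendering: (left, right) gains with
    left**2 + right**2 == 1. Real binaural audio uses HRTFs instead."""
    pan = math.sin(math.radians(azimuth_deg))          # -1 (left) .. +1 (right)
    angle = (pan + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)


# A tracked source 3 m to the listener's right; turning the head toward it centres it.
for yaw in (0.0, 45.0, 90.0):
    az = head_relative_azimuth((0.0, 0.0), yaw, (3.0, 0.0))
    print(yaw, round(az, 1), constant_power_pan(az))
```

Because the simple pan uses only the sine of the azimuth, it cannot tell a source in front from one behind; resolving that front/back ambiguity is exactly what HRTF-based binaural rendering adds.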

Listen to Gawain Liddiard and Daniel Thuresson from The Mill discuss HELP in our fxpodcast below.