In this episode, we talk in depth with Jules Urbach, CEO of OTOY Inc., about what it will take to build an actual Holodeck.

OTOY has been working with The Roddenberry Estate on creating assets for the Immersive Roddenberry Archive Experiences. This is a multi-year roadmap to preserve the history of Roddenberry’s Star Trek work across Movies, TV, Comics, and Literature.

There are three main topics we discuss:

- The digital recreation of the Enterprise and its associated assets from not just the original series but all Trek shows.

- The use of those assets in virtual production, specifically a test with Unreal Engine on an LED stage.

- The future application of this material in volumetric experiences, both VR/AR and light-field monitors (via Light Field Lab).

The first Immersive Roddenberry Archive Experience in Las Vegas allowed people to navigate around a 1:1 recreation of the Enterprise or view how the bridge of the Enterprise evolved over time.

The virtual production (VP) project was not a restoration of an episode but rather a re-creation of the original unaired pilot episode’s set and props inside an interactive 3D experience. This version of the experience was built in Unreal Engine and involved hiring actors to play iconic Trek characters such as Spock.

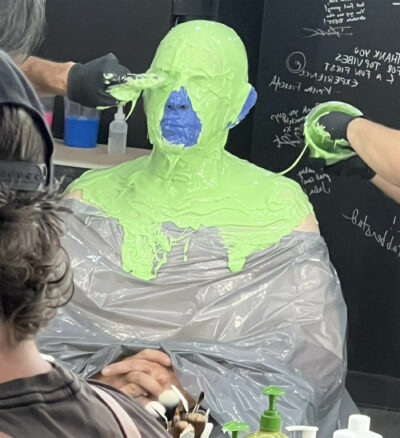

In the short sequence above, the live action was shot in 8K on the RED Helium camera with Lawrence Selleck and Mahé Thaissa as Science Officer Spock and Yeoman Colt. Lawrence went through the wringer to look like Spock, wearing complex prosthetics.

These prosthetics were then further digitally enhanced, especially around his eyes. The team deployed real-time shaders and digital makeup for shots featuring close-ups of Spock’s eyes. As you can hear in the interview, Spock’s eyes were digitally applied while the team was filming. Additionally, in post, roto teams cleaned out any remaining visible makeup lines with in-painting.

When filming the actors, they also experimented with capturing performances for future mediums such as VR or Light Field displays. OTOY and Light Field Lab signed a technology partnership some time ago. Light Field Lab will contribute its holographic display technology, and OTOY will provide its ORBX technology for rendering media and graphics on the glasses-free holographic display panels.

The OTOY team scanned the actors in their Light Stage, but they have also been moving to volumetric 4D scanning. This will allow actors, not just 3D reconstructions, to be experienced in a VR or Light Field display. Fxguide previously discussed the Light Field work OTOY produced with their experimental Batman VR Light Field project; you can hear the guys reference this in the podcast.

In the clip above, you can see that the ‘live action’ footage can be viewed volumetrically from any angle.

And below is the OTOY Light Stage Body Capture for the Roddenberry Archive being interactively rendered in the Octane viewport.

.@Apple just featured @OTOY’s work on the @Roddenberry Archive for the second year in a row at today’s #appleevent. Apple unveiled #OctaneX for M2 iPad Pro with full support for #RNDR. App is out next month. Huge Thank you to @cbs for their support! #statrtek pic.twitter.com/6IH09ksPG4

— Jules Urbach (@JulesUrbach) October 18, 2022

In our podcast, John refers to the Apple keynotes; a link is included above.