Harry Shearer is a well-known and respected comedian with an incredible career, most notable as one of the lead voice actors in The Simpsons (performing many iconic characters including Mr. Burns, Waylon Smithers, Principal Skinner, and Ned Flanders), and for films such as This Is Spinal Tap (1984), The Truman Show (1998) and A Mighty Wind (2003). Since 1983, Shearer has hosted the public radio comedy/music program Le Show, which is now a podcast. On his weekly program, Shearer alternates between DJing, commenting on the news of the day, and performing original (mostly political) comedy sketches and songs.

This year he has released a new album, The Many Moods of Donald Trump. The music and words are by Shearer, and it was recorded primarily at Esplanade Studios, New Orleans earlier this year. The first single from Shearer’s new album was Son-In-Law.

Just prior to the COVID lockdown, Shearer was in Sydney and he decided to produce the music video of the first single using motion capture so he could puppet a political satire version of the US President. As COVID broke, Shearer got on one of the last flights out of Sydney back to the USA, leaving his production team in Sydney to work out how to do an indie virtual motion capture, across the Pacific. This was not Shearer’s first brush with MoCap – he had worked on a similar project which was a comic short featuring President Obama showing the newly elected Donald Trump around the Oval Office in 2016. This new project is both much more advanced and done as a remote collaboration from both sides of the Pacific Ocean.

The motion capture was shot in LA by DOP Matthew Mindlin, working with the MoCap team in Sydney, Australia. The entire project was conceived and produced by Harry Shearer himself. The Australian team was centered around The Electric Lens Company’s Matt Hermans, who was the project’s Technical Director. His team was aided by the team at MOD Studios in Sydney, led by Michela Ledwidge, who was the Virtual Production Director and Editor.

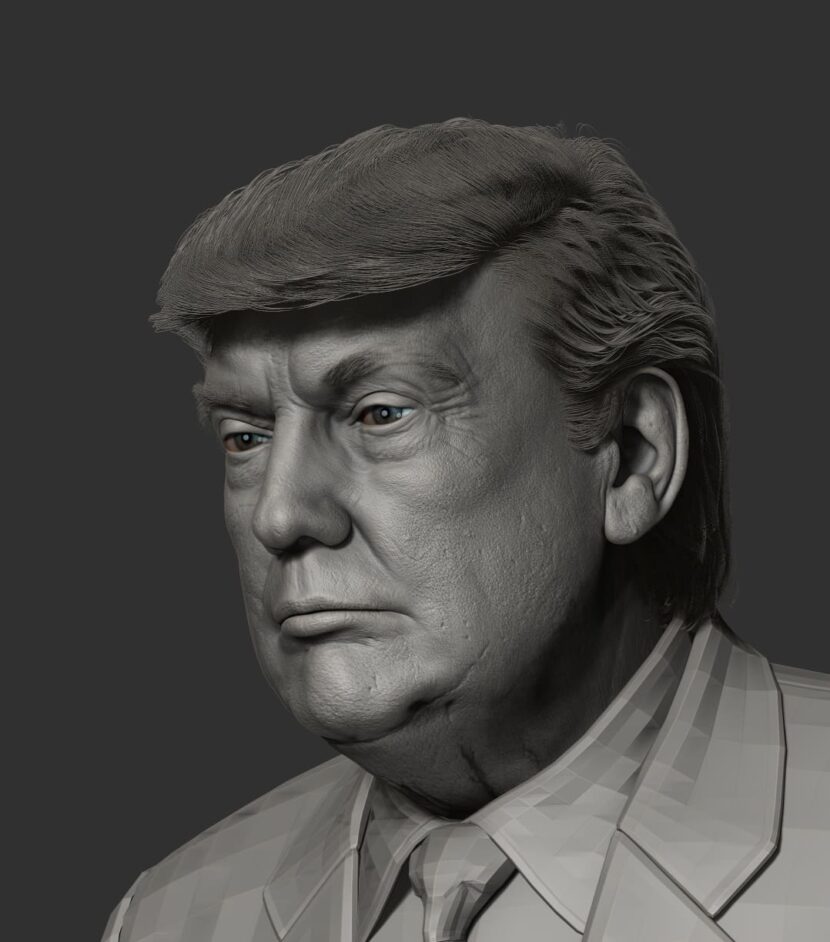

The project is a strong political satire that visually relied on two technical stages: a CG President Trump, rendered offline and based on the MoCap shoot using virtual production techniques, and a ‘deep fake’ of the President’s face, trained on publicly available footage and overlaid on that render.

We spoke to Harry Shearer in LA about this latest project and his remarkable career, which started with his first film appearance in Abbott and Costello Go to Mars (1953) and encompasses almost every creative medium: music, books, films, TV shows, radio, and podcasting.

Shearer has won a Primetime Emmy Award and has received several other Emmy and Grammy Award nominations. He was twice a regular cast member on Saturday Night Live and his career has seen him work alongside a pantheon of comic stars, including Jack Benny, Mel Blanc, Christopher Guest, Michael McKean, Al Franken, Rob Reiner, Matt Groening and many more. In addition, he studied at UCLA as a political science major, attended graduate school at Harvard University for a year, and even worked at the state legislature in Sacramento.

For Electric Lens Company (ELC)’s Matt Hermans this was his first project with Shearer and when he first signed on, he had no idea how world events would so completely affect the project.

ELC invited Mod Productions onboard to help run the virtual production side of Son in Law. “It was our first LA shoot from Sydney,” commented Ledwidge. “Our brief was broken down into R&D, the shoot, cameras, and edit.” The challenge for the team was to come up with a remote virtual production model that was feasible during the COVID-19 lockdown, when only one person was allowed to be in the room with Shearer. The LA shoot had just Shearer’s long-term DOP Matt Mindlin in attendance. Mod was responsible for putting systems in place that could capture Harry’s performance, as Trump and his Army assistants, from the other side of the world, in a real-time interactive MoCap session. “Thank goodness I’d had experience with this,” comments Shearer. “Because if you’re doing this for the first time, it can get a little overwhelming and confusing. But I’d worn the suit before, and I’d done MoCap so it felt almost comfortable.”

The “shoot” comprised Ledwidge and Hermans at Mod’s Haymarket studio in downtown Sydney with Harry Shearer and Matt Mindlin working out of Harry’s home in LA.

Ledwidge had worked closely with Hermans before, and together they worked out how to test the feasibility of Matt’s idea to drive a deep fake from the digital Trump character instead of from a live-action clip. Ledwidge took advantage of an early beta of Epic Games’ Live Link Face iPhone app to record facial data. Shearer wore an Xsens suit for body capture and a pair of Manus Prime 1 gloves for his hands. Mod’s ShowControl app, written in TouchDesigner, was used to record and monitor multiple systems from Sydney, even to the extent of remotely controlling the buttons on the iPhone app.

UE4.24 was used to provide an on-set real-time “magic mirror” preview of the Trump character but all performance capture data (face, body and hand) was also recorded externally to UE4 to keep traditional CG pipeline options open and flexible.

For someone known for being incredibly witty, Shearer takes his comedy fairly seriously. “I take the execution of it fairly seriously. I like nothing better than to be with colleagues that I’ve chosen to play with and just have a good time, making each other laugh, but there’s a lot more to it than that. Especially when you’re doing music … there’s nothing either funny or entertaining about bad music,” he comments. “Hopefully at the beginning when you’re developing the sketch or a song or a movie, you’re filled with funny ideas and you’re making yourself laugh every once in a while. And yet when you come to put the pieces together to make it a thing, then it’s serious work”.

After the shoot, Ledwidge synched the rushes and set up an edit pipeline for the sequences in the Oval Office. The Press Room and Rose Garden scenes were comped in Nuke Studio at ELC. For the facial capture data, Ledwidge wrote a Python tool for Houdini’s Procedural Dependency Graph (PDG) to generate FBX files from ARKit data saved as CSV by the Epic Live Link Face app. She then used UE4 Sequencer to edit the piece, using character animation files cleaned up in Maya at ELC, and recorded virtual camera moves using a handheld Red Rock rig with an HTC Vive tracker. Once the edit was locked, Mod delivered a base UE4 project back to ELC for final visual enhancements and rendering using UE4 RTX real-time lighting settings.
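To give a sense of what the first step of a CSV-to-animation tool like that might involve, here is a minimal Python sketch. It is not Ledwidge’s code: the column names, the sample values and the `load_blendshape_curves` helper are all assumptions made for illustration. It only reads per-frame blendshape weights into one keyframe list per channel, and leaves out the PDG and FBX-writing stages entirely.

```python
import csv
import io

# Hypothetical sample in the general shape of a per-frame facial capture export:
# one row per frame, a timecode column, then one column per blendshape channel.
# Column names and values are assumptions for illustration only.
SAMPLE_CSV = """Timecode,JawOpen,EyeBlinkLeft,EyeBlinkRight
00:00:00:00,0.10,0.00,0.00
00:00:00:01,0.35,0.80,0.75
00:00:00:02,0.20,0.10,0.05
"""


def load_blendshape_curves(text, time_column="Timecode"):
    """Return {channel_name: [(frame_index, weight), ...]} from CSV text."""
    reader = csv.DictReader(io.StringIO(text))
    curves = {}
    for frame, row in enumerate(reader):
        for name, value in row.items():
            if name == time_column:
                continue  # the timecode is not an animation channel
            curves.setdefault(name, []).append((frame, float(value)))
    return curves


curves = load_blendshape_curves(SAMPLE_CSV)
print(curves["JawOpen"])
```

Each resulting list is effectively an animation curve for one facial channel, which is the kind of data a later stage could key onto a character rig before export.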

There were three levels of quality for the Trump character: the proxy used for real-time preview during the performance-capture shoot and the edit, the real-time RTX render, and the final look involving a deep fake face composited over the real-time character.

It took a while to get the video to a state that Shearer was happy with, given the complexity of this independent trans-Pacific virtual production. “We were all trying to make three or four different technologies play nice with each other. And that’s almost as difficult as getting three or four people to play nice with each other,” he remarks. “I think this was sort of unprecedented in terms of all of these combinations and individually things … So everybody was learning. We just shot the second video for another song in this collection, another Trump song that will be out in mid-September, and clearly we’d all learned lessons from the first experience, so it went faster”.

The project demonstrated how remote working can support new ways of creatively filming. ELC and Mod Productions are continuing their collaboration with Shearer to refine how character design and storytelling can be combined in virtual production for the second film clip, which is in production now. Hermans admits that the new clip has several major advances and improvements, especially to the body and cloth rigging, but given the tiny crew, vast distances, and major complexity of the first Son In Law project, the whole team was incredibly satisfied with the results.

Shearer is keen to do more. “It had always been my goal to take what I do on the radio in terms of the sketches that I do, where I play all the characters and transfer that to a visual medium,” he explains. “In this project, I still play just one character and in some other experiments with this technique, not as developed as this, I was playing two or three characters in a scene, which really excited me. So as far as I’m concerned there’s more room to play in this particular space.”

For more Harry Shearer, visit Harryshearer.com, listen to Le Show, or follow him on Twitter.