For Transformers: Revenge of the Fallen, director Michael Bay enlisted ILM and Digital Domain to create several new characters and blockbuster action for the sequel to his monster 2007 hit. Mike Seymour speaks to ILM’s Digital Production Supervisor Jeff White in this week’s fxpodcast. In our online article, Ian Failes speaks to Digital Domain’s Visual Effects Supervisor Matthew Butler and CG Supervisor Paul George Palop about their work.

ILM

In this week’s fxpodcast Mike Seymour speaks to ILM’s Digital Production Supervisor Jeff White.

Mike and Jeff discuss in depth some of the detailed work ILM did to produce some of the most technically complex shots we have seen this year: truly magnificent work.

Digital Domain

Ian Failes speaks to Digital Domain’s Visual Effects Supervisor Matthew Butler and CG Supervisor Paul George Palop about their work.

fxg: What were Digital Domain’s key shots?

Butler: The work was predominantly split between ILM and Digital Domain. When we spoke to Michael Bay early on, he thought we could tackle some of the new content, because it didn’t necessarily make sense to re-invent the wheel on stuff ILM had already created. So, as a result, ILM had the lion’s share of the work and we had about 130 shots to do. Those were made up of essentially five different characters: the Pretender, or Alice; the kitchen bots; Wheels, or Wheelie, as he’s known; Soundwave; and Reedman.

fxg: Was there a general approach at Digital Domain to creating these characters, or did each pose its own set of challenges?

Butler: Well, definitely each one created its own challenges. When it came to the kitchen bots, these were all going to be hand animated. The package of choice for us currently is Maya. The kitchen bots and Wheels were modelled in Maya. Pretender, Soundwave and Reedman were slightly different. For Pretender, or Alice, we had to combine a very organic behaviour with a very inorganic object at the end.

We photographed the actress (Isabel Lucas) starting the transformation and then of course we had to remove her. So we needed to hand off between the real Alice, a CG Alice and then ultimately the Pretender. That required us to match organic, elastic tissue and clothing, then move to a mechanical stage where she was coming apart into thousands of mechanically articulated pieces. This lent itself to a procedural system, for which we used Houdini. Then we ended up back in a Maya world for the fully CG character.

Reedman is made of literally hundreds of thousands of little characters. Though they have some hand-animated cycles in Maya, and we end up building him in Maya, the huge chunk in the middle is heavily proceduralised, and once again we used Houdini. So really the content drove which application we used.

fxg: How did you go about integrating your characters into the scenes?

Butler: We let the director shoot whatever he needed to shoot, and then we photographically acquired the data necessary to reproduce that. We acquire our high dynamic range environments via fish-eye lens camera work with a digital camera. So we’ll shoot a full 360 through multiple stops to give us a high dynamic range compound image of the scene. We’ll do that any time we have to integrate a character into the scene. That gives you all the stop latitude you need, all the colour representation and lighting information you need. Of course, it’s never completely straightforward, because things don’t always go neatly on set, but that’s the general approach.
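The "compound image" Butler describes comes from merging the same view shot at several exposures. The sketch below is a minimal illustration of that merge step, not Digital Domain's pipeline: it assumes pixel values are already linear in [0, 1] (no camera response curve is recovered) and that the shutter time of each bracket is known. Each bracket gives an estimate of scene radiance (value divided by exposure time), and a simple "hat" weight trusts mid-tones and ignores clipped or near-black samples.

```python
from typing import List, Sequence


def hat_weight(v: float) -> float:
    """Trust mid-tones most; near-black and clipped values get little or no weight."""
    return 1.0 - abs(2.0 * v - 1.0)


def merge_hdr(brackets: Sequence[Sequence[float]], exposure_times: Sequence[float]) -> List[float]:
    """Merge bracketed exposures of the same pixels into relative scene radiance.

    Assumes each bracket holds linear pixel values in [0, 1] and that
    exposure_times[i] is the shutter time in seconds of brackets[i].
    """
    if len(brackets) != len(exposure_times):
        raise ValueError("need one exposure time per bracket")
    radiance = []
    for p in range(len(brackets[0])):
        # Each exposure's own estimate of radiance for this pixel.
        estimates = [values[p] / t for values, t in zip(brackets, exposure_times)]
        weights = [hat_weight(values[p]) for values in brackets]
        total = sum(weights)
        if total == 0.0:
            # Every bracket is black or clipped: fall back to a plain average.
            radiance.append(sum(estimates) / len(estimates))
        else:
            radiance.append(sum(w * e for w, e in zip(weights, estimates)) / total)
    return radiance


# Two brackets two stops apart (1/100 s and 1/25 s), two pixels each.
print(merge_hdr([[0.5, 0.1], [1.0, 0.4]], [1 / 100, 1 / 25]))
```

In this example the first pixel clips in the longer exposure, so only the 1/100 s sample counts and it resolves to roughly 50; the second pixel is valid in both brackets, and both agree on roughly 10. A production merge would also linearise the raw data and handle lens vignetting, which this sketch deliberately leaves out.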

Then we would shoot still work ad nauseam just in case, say if we need to replace a background. And we do the usual geometric data acquisition to be able to reconstruct scenes, or throw shadows or occlusions onto objects or new characters. This is also so we can track the shots and create a synthetic camera representation of what the shot was. I think I’ve been doing this now for more than 15 years, starting on Titanic, and I’m used to capturing all this stuff on set just to make sure you’ve got what you need.

fxg: How did you achieve the Alice character?

Butler: Well, Sam (Shia LaBeouf) goes off to university and the Decepticons are trying to track him down and get some information out of his head. So they throw a Decepticon at him who is called Pretender or Alice. It’s a transcan of an android. The concept was that the Honda corporation have created an android and somehow the Decepticons have transcanned it. So, what you’ve got is something that looks like a human, but is really a Transformer. We see this come about, because Sam’s in his dorm room, he’s just got to university and he’s been hit on by this hot chick. He’s trying to stay faithful to his love, Mikaela (Megan Fox), back home.

Meanwhile, this hot chick is coming on pretty strong and she basically jumps on him and pins him down. Then Mikaela shows up at the door and he’s busted. Alice exposes herself as being a Decepticon. It starts with a metallic protrusion from her behind, which is quite fun, where she’s going to intoxicate him with this snake-like needle; then later this metallic tongue comes out, and eventually her face breaks down into a mechanical pattern and reveals the inner mech. Then her whole body exfoliates away, peeling back in this mechanical, flower-like pattern to reveal this bad-ass robot, which storms around the university after Sam until she’s nailed to a lamppost by Mikaela.

fxg: What were some of the technical challenges for Alice?

Palop: When we first got the shot, we were going to approach it as a plate re-projection. We had geometry and we were going to re-project the plate and do some 2D work on it to get us out of the woods. It ended up becoming more involved than that. We had to actually build a complicated set-up in Houdini to deal with the transformation. We had geometry for the actress, which we roto-mated to the plate pretty closely. We did some cloth simulation for the dress. Then we used that as the base for the effect, taking it into Houdini and procedurally turning the surface into an array of little tiles which were distributed all over the mesh. Those tiles were to be revealed eventually by a set of 3D mattes that were being controlled by the artist.
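Plate re-projection means projecting the photographed frame back through the tracked camera onto the roto-mated geometry, so every vertex picks up the plate pixel that sits in front of it. The sketch below shows that lookup for a single vertex under stated assumptions: an ideal pinhole camera with no lens distortion, a camera that looks down its local -Z axis (the Maya and Houdini convention), a 3x3 world-to-camera rotation matrix, and focal length and film back in millimetres. The function name and parameters are illustrative, not taken from any DD tool.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def project_to_plate(
    point: Vec3,
    cam_pos: Vec3,
    world_to_cam: Sequence[Sequence[float]],
    focal_mm: float,
    aperture_w_mm: float,
    aperture_h_mm: float,
) -> Optional[Tuple[float, float]]:
    """Return the (u, v) plate coordinate in [0, 1] that a world-space point projects to.

    Pinhole model with no lens distortion; the camera looks down its local -Z axis.
    Returns None for points behind the camera.
    """
    # Move the point into camera space: translate, then rotate.
    d = [point[i] - cam_pos[i] for i in range(3)]
    x, y, z = (sum(world_to_cam[r][c] * d[c] for c in range(3)) for r in range(3))
    if z >= 0.0:
        return None  # behind (or level with) the camera, so no valid projection
    # Perspective divide onto the film back, in millimetres.
    film_x = focal_mm * x / -z
    film_y = focal_mm * y / -z
    # Normalise to plate UV with (0.5, 0.5) at the optical centre.
    return (film_x / aperture_w_mm + 0.5, film_y / aperture_h_mm + 0.5)


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
# A point 10 units in front of a 35 mm lens on a 36 x 24 mm film back.
print(project_to_plate((1.0, 0.0, -10.0), (0.0, 0.0, 0.0), identity, 35.0, 36.0, 24.0))
```

Because the plate's lighting is carried along with its pixels, this alone reproduces only what was photographed, which is why, as Palop explains, the team went on to light the geometry and hand extra passes to compositing.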

The little tiles had to conform to the skin, which was kind of tricky. But Houdini’s a great tool for that. You can have access to all your geometry attributes – the normals and points. We were able to place those tiles where we wanted them, however many we wanted and be able to proceduralise all the tiles individually. We still re-projected the plates onto the geometry, but that only got us so far. We had to make sure it didn’t look cheesy, because we were constrained by the lighting that was baked into the plates. So we had to go the extra mile of rendering and lighting the geometry in Houdini and generating lighting passes for the compositors.
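Palop's description of placing tiles "wherever we wanted them, however many we wanted" using the mesh's points and normals, then revealing them with artist-controlled 3D mattes, can be sketched in plain Python. This is an assumption-laden illustration rather than DD's Houdini setup (Houdini's Scatter SOP does the scattering natively): tiles are distributed uniformly by area over a triangle mesh using barycentric sampling, each tile inherits its triangle's unit normal so it can be oriented to the skin, and a hypothetical spherical matte decides which tiles have peeled away. Triangles are assumed non-degenerate.

```python
import math
import random
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def length(a: Vec3) -> float:
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def scatter_tiles(points: List[Vec3], tris: List[Tuple[int, int, int]], count: int, seed: int = 0):
    """Scatter `count` tiles uniformly by area over a triangle mesh.

    Returns (position, unit_normal) pairs; the normal is that of the triangle
    the tile lands on, so each tile can be oriented to conform to the surface.
    """
    rng = random.Random(seed)
    normals, areas = [], []
    for i, j, k in tris:
        n = cross(sub(points[j], points[i]), sub(points[k], points[i]))
        a = length(n)  # twice the triangle's area
        areas.append(0.5 * a)
        normals.append((n[0] / a, n[1] / a, n[2] / a))
    tiles = []
    # Bigger triangles receive proportionally more tiles.
    for t in rng.choices(range(len(tris)), weights=areas, k=count):
        i, j, k = tris[t]
        # Uniform barycentric sample via the square-root trick.
        r1, r2 = rng.random(), rng.random()
        s = math.sqrt(r1)
        b0, b1, b2 = 1.0 - s, s * (1.0 - r2), s * r2
        pos = tuple(b0 * points[i][c] + b1 * points[j][c] + b2 * points[k][c] for c in range(3))
        tiles.append((pos, normals[t]))
    return tiles


def revealed(tile_pos: Vec3, matte_centre: Vec3, matte_radius: float) -> bool:
    """Hypothetical spherical 3D matte: tiles inside the sphere are peeled away."""
    return length(sub(tile_pos, matte_centre)) <= matte_radius


# A unit square made of two triangles, standing in for a patch of skin.
square = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
tiles = scatter_tiles(square, [(0, 1, 2), (0, 2, 3)], 200)
print(sum(revealed(p, (0.0, 0.0, 0.0), 0.5) for p, _ in tiles), "of", len(tiles), "tiles revealed")
```

Keyframing `matte_radius` (or the matte's centre) over the shot is what would turn this into a progressive peel; in a real setup each matte would be an arbitrary animated volume rather than a sphere.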

So, what we had was geometry that’s moving like the girl, and hundreds of tiles that are moving and eventually letting you see the robot underneath. The robot was rendered as a separate layer and we had to reveal that. The plate was re-projected onto the skin geometry, and we enhanced it all by actually lighting the geometry in 3D: HDR illumination, occlusion and reflection renders, all of which are passes for the compositor in Nuke.
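The layering Palop describes (a skin layer over a separately rendered robot, with holes opening as tiles peel away) comes down to the standard Porter-Duff "over" operation, which is also the default operation of Nuke's Merge node. The per-pixel sketch below assumes premultiplied RGBA and a reveal matte where 1 keeps the skin and 0 removes it entirely; the helper names are illustrative only.

```python
from typing import Tuple

RGBA = Tuple[float, float, float, float]  # premultiplied colour plus alpha


def over(a: RGBA, b: RGBA) -> RGBA:
    """Porter-Duff 'over' for premultiplied pixels: A + B * (1 - alpha_A)."""
    k = 1.0 - a[3]
    return (a[0] + b[0] * k, a[1] + b[1] * k, a[2] + b[2] * k, a[3] + b[3] * k)


def apply_matte(px: RGBA, matte: float) -> RGBA:
    """Cut a premultiplied layer with a reveal matte (1 = keep, 0 = fully removed)."""
    return (px[0] * matte, px[1] * matte, px[2] * matte, px[3] * matte)


def comp_pixel(skin: RGBA, robot: RGBA, keep_skin: float) -> RGBA:
    """Skin layer (re-projected plate plus lighting passes) over the robot layer."""
    return over(apply_matte(skin, keep_skin), robot)


skin = (0.8, 0.6, 0.5, 1.0)
robot = (0.2, 0.2, 0.25, 1.0)
for keep in (1.0, 0.5, 0.0):
    print(keep, comp_pixel(skin, robot, keep))
```

Because both layers are premultiplied, scaling every channel of the skin by the matte is enough to cut it cleanly, and a partially revealed pixel becomes a straight blend of skin and robot.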

fxg: How did you decide to go with the Houdini approach for Alice? Did you try any other methods?

Palop: When we were planning how to do it, we thought we could have a compromise between Maya and Houdini: move some of the tiles in Maya by traditional animation and move some smaller ones in Houdini. But it ended up an all-Houdini thing, because the final concept of the shot really asked for very tiny tiles, so we had too many to control by hand. It also comes down to who you have in your crew and their skillsets. We had really good Houdini technical directors here at DD.

fxg: Can you talk about the kitchen bot shots?

Butler: If you recall, the All Spark in the first film left a shard. The shard is being kept by the government and the Autobots under surveillance, but there’s a little splinter that was left with Sam at the end of the first film. At home, he’s looking for items to give to Mikaela, he finds the splinter and it burns through the floor, into the kitchen. A pulse is given off when it lands in the kitchen and it brings eight kitchen appliances to life. They were a stand mixer, a blender, a microwave, a waste-disposal unit, a toaster, an espresso machine, a router and a vacuum cleaner.

So they come to life and they’re pretty pissed off. They know they’re bad and they need to go and get hold of Sam. There’s a lot of humour that goes along with this. For instance, Blender is a fairly well endowed character with a blender at the end of his appendage. He’s quick to fire. The waste-disposal unit is like a Tasmanian Devil. He’s running around, out of control, very skittish. His mouth is shaped like the waste-disposal unit we’re used to seeing, with rotating blades as teeth. The stand mixer uses the normal implements that come with it as weapons. It’s a somewhat comical event, or sequence of events. So they storm upstairs and beat Sam up. Eventually, Bumblebee comes to the rescue from the garage and lays waste to them.

fxg: I’m guessing for the kitchen bots, there was a lot of on-set interaction for those characters.

Butler: Yes, there was a lot of off-screen pushing and pulling of doors and curtains and things for that. We worked to those plate interactions. Michael Bay is a big fan of shooting anything that he can, and I’d have to agree with him that that’s a good thing to do. So you’ve always got something to work with.

fxg: How about Wheels?

Butler: Wheels is like a remote-controlled truck. Once he transforms he’s a biped on a mission to get the shard that Sam has given to Mikaela. Wheels is very cute, very rude, obnoxious, but he’s funny. He’s lovable but horrible. He’s a big character in the movie. He has a Jersey accent, almost from the mafia with his attitude. He’s also a horny little devil. There are times in the movie where he’s literally humping Mikaela’s leg. He’s busted and Mikaela runs over and grabs him and sticks him in a tool box. She keeps him for the rest of the movie and they utilise him to help find this group of robots called the Seekers. He’s a little sidekick. He’s packed with character.

fxg: How was Soundwave accomplished?

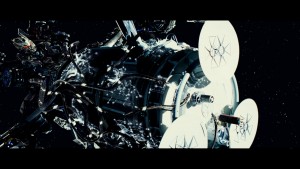

Butler: He’s our biggest Decepticon. From space he attacks all these satellites and uses them to access information about where the shard is and where Sam is located. He’s interesting in that while he doesn’t have a transformation, per se, he is a combination of mechanical robotic pieces as well as these organic space-age tentacles. He will lock onto a satellite and as his tentacles search out, they lay out these very geometrically shaped and patterned tentacles akin to the structure you see on a circuit board.

Palop: Soundwave involved typical character animation and lighting but it also had an effects component, in terms of his tentacles. The smaller ‘effects’ tentacles were less organic than the bigger ones and totally different in material type. The bigger ones looked more like metallic rubber. The little ones looked more like glass. They creep over the satellite almost like an L-system. You can see a very mathematical pattern as these guys learn where to go. It’s pretty cool seeing the data actually being fed through these things with the pulses of light.
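Palop likens the creeping tentacles to an L-system. Since he later explains that the final tentacles were hand-drawn splines, the sketch below illustrates only the analogy, not DD's method. An L-system repeatedly rewrites every symbol of a string in parallel, and a "turtle" then reads the result as drawing commands. Here the turtle is restricted to 90-degree turns on a grid, which gives the branching, circuit-board-like look Butler mentions; the rule `F -> F[+F]F` is a hypothetical choice.

```python
from typing import Dict, List, Tuple


def expand(axiom: str, rules: Dict[str, str], iterations: int) -> str:
    """Apply L-system rewriting rules to every symbol in parallel, `iterations` times."""
    s = axiom
    for _ in range(iterations):
        s = "".join(rules.get(ch, ch) for ch in s)
    return s


def trace(commands: str) -> List[Tuple[Tuple[int, int], Tuple[int, int]]]:
    """Interpret the string with a grid turtle and return the drawn segments.

    F = step forward, + = turn left 90 degrees, - = turn right 90 degrees,
    [ = save position and heading (start a branch), ] = restore them.
    """
    x, y, dx, dy = 0, 0, 1, 0
    stack = []
    segments = []
    for ch in commands:
        if ch == "F":
            nx, ny = x + dx, y + dy
            segments.append(((x, y), (nx, ny)))
            x, y = nx, ny
        elif ch == "+":
            dx, dy = -dy, dx
        elif ch == "-":
            dx, dy = dy, -dx
        elif ch == "[":
            stack.append((x, y, dx, dy))
        elif ch == "]":
            x, y, dx, dy = stack.pop()
    return segments


branching = {"F": "F[+F]F"}  # hypothetical growth rule
for generation in range(3):
    print(generation, expand("F", branching, generation))
print(trace(expand("F", branching, 1)))
```

Each generation triples the number of forward steps, so animating the generation count (or revealing segments in drawing order) gives the impression of a structure searching outward over a surface.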

For the tentacles, we weren’t initially sure what they were supposed to look like. But we did a couple of tests and we got lucky and Michael liked what he saw, so we took it a step further and developed a whole look for them.

We ended up using an entirely hand-drawn spline approach for the tentacles. We drew curves and that’s how we got those things to move. The placement on the surface was all done by hand. The rendering of the tentacles was done with ray-tracing – reflections and refractions. They were supposed to be transparent, almost like a fiber optic cable. We used Nuke for this. We actually used reflection vectors sometimes because we couldn’t actually refract the background because we were putting the tentacles over a plate. So we ended up doing the refractions in 2D.
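Doing "the refractions in 2D" typically means warping the background plate behind the tentacle by a per-pixel offset, similar in spirit to Nuke's IDistort node. The sketch below assumes a single-channel image stored as rows, an offset pass in pixels (which could be derived from a normal or reflection-vector render, as Palop mentions), and a coverage mask for the tentacle. Sampling is nearest-neighbour with lookups clamped to the frame, and `strength` is a hypothetical artist control.

```python
from typing import List, Tuple

Image = List[List[float]]  # rows of single-channel pixel values
VectorField = List[List[Tuple[float, float]]]  # per-pixel (dx, dy) offsets in pixels


def refract_2d(plate: Image, vectors: VectorField, mask: Image, strength: float = 1.0) -> Image:
    """Fake refraction over a plate: where mask > 0, sample the plate at an offset.

    The offset is the vector pass scaled by `strength`. Nearest-neighbour sampling,
    with lookups clamped to the image edges.
    """
    h, w = len(plate), len(plate[0])
    out = [row[:] for row in plate]
    for y in range(h):
        for x in range(w):
            m = mask[y][x]
            if m <= 0.0:
                continue  # outside the tentacle: leave the plate untouched
            dx, dy = vectors[y][x]
            sx = min(max(int(round(x + dx * strength)), 0), w - 1)
            sy = min(max(int(round(y + dy * strength)), 0), h - 1)
            # Blend toward the displaced sample by the tentacle's coverage.
            out[y][x] = (1.0 - m) * plate[y][x] + m * plate[sy][sx]
    return out


plate = [[0.0, 1.0, 2.0, 3.0]]
vectors = [[(1.0, 0.0)] * 4]
mask = [[0.0, 1.0, 1.0, 0.0]]
print(refract_2d(plate, vectors, mask))
```

A production version would filter the lookup (bilinear or better) rather than snapping to the nearest pixel, but the principle is the same: the background visible "through" the glassy tentacle is just the plate, pushed around by a vector pass.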

fxg: Then there’s Reedman. How was he created?

Butler: Reedman was my favourite. He’s called Reedman, like the reeds growing in water, because he’s made out of hundreds of these blade-like facets. He’s a bunch of razor blades, really, but cooler looking than that! He’s able to orient himself in a particular axis to make himself invisible from that particular axis. In order to get hold of the shard, which is in an otherwise impenetrable place, Soundwave launches a pod which holds Ravage (done by ILM) who lands on the Earth.

He spits out a bunch of ball-bearing creatures called The Plague. These are Microcons – little metallic pill bugs that assemble together in the thousands upon thousands to form Reedman. So there’s a big build up where they assemble in two shots. They end up becoming this large praying mantis-ish type creature. He is so sharp that he actually leaps through one of the guards and rips him in two. Blood hit the lens, at least on one version we did. He was really fun to build and animate.

Palop: There were two parts to Reedman. One of them was more of a classic crowd simulation shot where we had the ball bearings coming into the room and they organise themselves. They have some intelligence to them – they don’t just roll around. They move in a mechanical way. As the camera’s getting closer to them, you see them in their transformation mode. These are tiny ball bearings, maybe a third of an inch, so the camera had to go really close to fill the frame with one of these guys.

So you then see the balls break into little pieces and these insect-like creatures transform. We go through from ball into insects, and so we have a crowd of thousands of creatures. In the next shot, what we see first are big piles of these tiny little guys crawling on top of each other. From those piles you see something emerging – they are taking on the form of the robot they’ll eventually become. They use it as a path to walk on. In our setup we actually had to have the robot in the final pose so that our little guys knew where to go.

It changes so much in perspective because of how little these guys are. So from not more than two feet away from camera they’re just little dots on screen. We tried some depth of field macro photography techniques in Nuke that we could control in the comp, to convey the idea of scale. Putting all things together, it became a really complicated thing to do. Initially, it puts you off a bit, because you don’t really know what you’re looking at. It’s just mesmerizing to look at because so much is going on. With racking the focus closer or further to camera, you actually start to see what’s happening.
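A depth-of-field effect controlled in the comp usually drives a variable blur from a depth pass: each pixel's blur size is the circle of confusion a real lens would produce at that distance. The sketch below uses the standard thin-lens formula, c = A · |S2 − S1| / S2 · f / (S1 − f), where A = f / N is the aperture diameter, S1 the focus distance and S2 the subject distance, then converts the result to pixels. It is a textbook illustration, not DD's comp setup; the lens, sensor and resolution values in the example are assumptions.

```python
def coc_pixels(depth_mm: float, focus_mm: float, focal_mm: float, f_number: float,
               sensor_w_mm: float, image_w_px: int) -> float:
    """Thin-lens circle-of-confusion diameter, converted to pixels for a defocus blur.

    depth_mm: distance of this pixel's surface from the lens (from a depth pass).
    focus_mm: focus distance; animating it is a rack focus.
    """
    if focus_mm <= focal_mm:
        raise ValueError("focus distance must be beyond the focal length")
    aperture_mm = focal_mm / f_number  # aperture (entrance pupil) diameter
    coc_mm = aperture_mm * abs(depth_mm - focus_mm) / depth_mm * focal_mm / (focus_mm - focal_mm)
    return coc_mm / sensor_w_mm * image_w_px


# Assumed macro-style setup: 100 mm lens at f/8, 36 mm wide sensor, 2048 px wide frame,
# focused at 300 mm. Compare a bug on the focal plane with one twice as far away.
for depth in (300.0, 450.0, 600.0):
    print(depth, round(coc_pixels(depth, 300.0, 100.0, 8.0, 36.0, 2048), 1))
```

The numbers show why close-up work reads as tiny: at these distances a subject only a short way behind the focal plane is already blurred by well over a hundred pixels, which is exactly the falloff that sells a macro-scale shot.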

fxg: What were some of Reedman’s technical challenges?

Palop: The crowd and particle simulation was done in Houdini. We basically knew where the guys needed to be in their final positions. We approached it in a way that we had to simulate the particle system in reverse. When you don’t know where you’re starting but you know where to finish, it’s easier to just reverse it. We ran a simulation that would actually tell them how to climb down, because it was a lot more forgiving. We weren’t too worried about interpenetrations because, at the scale at which we were working, they either moved too fast or we were too far away.
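The reversal trick works because any simulation that starts exactly on the target pose will, when its cached frames are played backwards, end exactly on that pose. The sketch below shows the idea in a deliberately simplified 2D form (horizontal position and height), not DD's Houdini setup: every particle starts at a known final position, crawls down toward the ground (y = 0) with a little random sideways wander, and the cached history is then reversed. The step `speed`, `jitter` amount and seed are hypothetical parameters.

```python
import random
from typing import List, Tuple

Vec2 = Tuple[float, float]  # (horizontal position, height)


def simulate_descent(targets: List[Vec2], frames: int, speed: float = 0.5,
                     jitter: float = 0.1, seed: int = 1) -> List[List[Vec2]]:
    """The 'forgiving' simulation: start every particle on its known final position
    on the pose and let it climb down to the ground (y = 0), caching every frame."""
    rng = random.Random(seed)
    state = list(targets)
    history = [state]
    for _ in range(frames - 1):
        nxt = []
        for x, y in state:
            if y > 0.0:
                x += rng.uniform(-jitter, jitter)  # wander a little sideways
                y = max(0.0, y - speed)            # crawl down, stopping at the ground
            nxt.append((x, y))
        state = nxt
        history.append(state)
    return history


def play_backwards(history: List[List[Vec2]]) -> List[List[Vec2]]:
    """Reverse the cached frames: climbing down becomes climbing up, and the last
    frame lands exactly on the target pose."""
    return history[::-1]


targets = [(0.0, 2.0), (1.0, 1.0)]
climb_up = play_backwards(simulate_descent(targets, frames=6))
print(climb_up[0])   # everyone starts on the ground
print(climb_up[-1])  # and finishes exactly on the pose
```

No goal-seeking logic is needed in the forward direction; the hard constraint (hitting the pose precisely) is satisfied by construction, which is the convenience Palop describes.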

So as we ran the sim of them climbing down, we captured all that data and just played it back, which made it look like they were actually climbing up. We had animation cycles from the animation department, who gave us pretty much anything – walk cycles, climb cycles, idle cycles. We wanted them to have a little motion even if standing still. All these cycles were copied onto particles as attributes. Eventually at render time they get replaced by real geometry and you render your crowd systems that way. For lighting we used our HDR data, some spot lighting and a lot of compositing. Even with such heavy 3D characters we end up with a lot of 2D work, which gave us the flexibility to work with the shots.
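"Cycles copied onto particles as attributes" generally means each particle carries a small record saying which animation to play and where in that animation it starts, so that at render time the instanced geometry can look up the right pose. The sketch below is a hypothetical illustration: the cycle names and lengths, the speed thresholds for choosing a cycle, and the `Particle` fields are all assumptions. The random phase offset keeps neighbouring bugs from moving in lockstep, and the idle cycle gives stationary bugs "a little motion even if standing still".

```python
import random
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical cycle library: cycle name -> length in frames.
CYCLES: Dict[str, int] = {"walk": 24, "climb": 32, "idle": 48}


@dataclass
class Particle:
    pid: int
    speed: float
    cycle: str = ""  # attribute: which animation cycle to instance
    offset: int = 0  # attribute: phase offset into that cycle, in frames


def assign_cycles(particles: List[Particle], seed: int = 7) -> None:
    """Copy a cycle choice and a random phase offset onto each particle."""
    rng = random.Random(seed)
    for p in particles:
        if p.speed < 0.05:
            p.cycle = "idle"  # standing still, but never frozen
        elif p.speed < 1.0:
            p.cycle = "walk"
        else:
            p.cycle = "climb"
        p.offset = rng.randrange(CYCLES[p.cycle])


def cycle_frame(p: Particle, frame: int) -> int:
    """Which frame of its cycle the instanced geometry should show at `frame`."""
    return (frame + p.offset) % CYCLES[p.cycle]


crowd = [Particle(0, 0.0), Particle(1, 0.5), Particle(2, 2.0)]
assign_cycles(crowd)
for p in crowd:
    print(p.pid, p.cycle, cycle_frame(p, 100))
```

Storing only a cycle name and an integer per particle keeps the simulation light; the heavy geometry appears only when the renderer swaps each point for the matching posed creature.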

fxg: What were some of the other sequences DD worked on?

Butler: We did a few smoke trails for missiles and meteorite shots. There was also a helicopter crash scene, towards the end. You know, Michael’s so great. He’s big. He’s all about explosions, and blowing shit up and big scale things. And he’s got great stunt guys and practical effects teams. Thankfully John Frazier is the best in the business. So they’re out in the desert blowing shit up and the shot involved bringing down a helicopter. While Michael was bold enough to have a complete fuselage come crashing to the ground on a rig right in front of a camera, they didn’t want to put helicopter blades on it. So that fell to us. We put the collective and the blades on and put CG people in the chopper. The blades break apart, smash into a million pieces, interact with the ground and all the paraphernalia that go along with a shot like that to make it feel realistic.

fxg: Michael Bay’s obviously involved quite heavily with Digital Domain. How did that impact on the production at DD?

Butler: Well, I’ve been at Digital Domain for a while now. I was here when James Cameron was an owner and am here now when Michael Bay is a part-owner. It actually worked out pretty well for us. There are advantages and disadvantages. The obvious disadvantage is that he’s effectively one of my bosses. So normally I need to please the director and now I have to make sure the company does well too. So the hats I have to wear start to fuse together.

But the good side of it is that Michael is a businessman and a producer, as well as a director. He’s extremely conscientious and cognizant of the financial aspects of everything as he goes through the creative process. So the advantage of that is that later on he’s just as much on your side when it comes to not wasting money going down the wrong path. Some of what we do can be development that might not be efficient, and Michael is dead against wasting money in that way. So he would help us to get to the solution early on. It was a little tricky because you would have to show things a little earlier than you might want to. You’d have to bite your lip and deal with being screamed at for stuff you didn’t want to show, but if you can push all the egos aside you can get to the result quicker. I may have lost a little hair and gone a little greyer, but it worked out really well.

Interview by Ian Failes