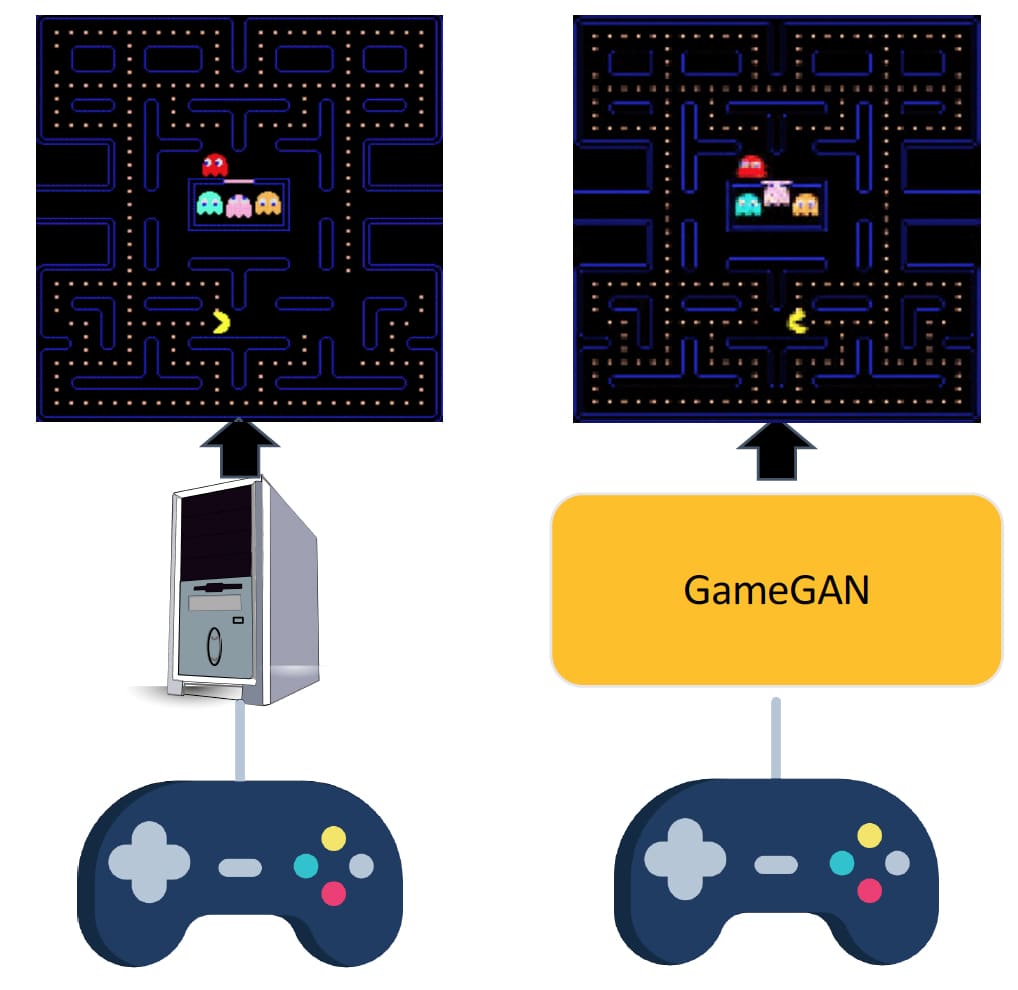

Simulation is a crucial component of any game system, but normally the game engine uses GPU simulation only for specific visual effects. NVIDIA Research has reversed that dynamic. Its new machine learning (ML) system, GameGAN, learned to simulate Pac-Man without any underlying game engine. The result is a fully working version of Pac-Man in which the AI takes the input from a user’s controller and then just “renders” the next screen using a carefully designed generative adversarial network (GAN). It does this without a traditional state engine or an old-style graphics programming model. Over 4 days of watching Pac-Man being played, it simply learned what the next logical screen would look like, and it jumps directly to rendering that screen.

To simulate correctly, the NVIDIA team needed to build a complex ML engine that could learn Pac-Man simply by watching another agent interact with the game environment. GameGAN did not specifically have to learn from another AI, but it is faster to have an AI bot play Pac-Man 24/7 than to hire people to do it. While the other bot played continuously for days on end, GameGAN watched and took it all in as training data. GameGAN does not decode the ‘rules’ of Pac-Man and then write a new version of the game in traditional software. It skips that middle step and produces the next correct interactive output directly, via a neural rendering engine.
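As a rough illustration of what "watching" means here, the sketch below records (screen, action, next screen) triples while a scripted bot plays a toy game. Everything in it is hypothetical: `toy_game_step`, `record_play`, the 5×5 grid, and the idea of reducing a screen to a single (x, y) cell are stand-ins chosen for brevity, not NVIDIA's actual data pipeline.

```python
import random
from typing import List, Tuple

Frame = Tuple[int, int]   # toy "screen": just the player's (x, y) cell
Action = str              # "up", "down", "left", or "right"
Transition = Tuple[Frame, Action, Frame]

GRID = 5  # hypothetical grid size (assumption for this sketch)
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


def toy_game_step(frame: Frame, action: Action) -> Frame:
    """Stand-in for the real game: move one cell, clamped to the grid."""
    dx, dy = MOVES[action]
    x = min(max(frame[0] + dx, 0), GRID - 1)
    y = min(max(frame[1] + dy, 0), GRID - 1)
    return (x, y)


def record_play(steps: int, seed: int = 0) -> List[Transition]:
    """Let a random bot play and log what an observer would see.

    Only screens and key presses are logged; the game's rules
    (the clamping above) never appear in the training data.
    """
    rng = random.Random(seed)
    frame: Frame = (GRID // 2, GRID // 2)
    log: List[Transition] = []
    for _ in range(steps):
        action = rng.choice(sorted(MOVES))
        next_frame = toy_game_step(frame, action)
        log.append((frame, action, next_frame))
        frame = next_frame
    return log


if __name__ == "__main__":
    for transition in record_play(3):
        print(transition)
```

A model trained on such a log only ever sees inputs and outputs, which is the sense in which the rules are never decoded.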

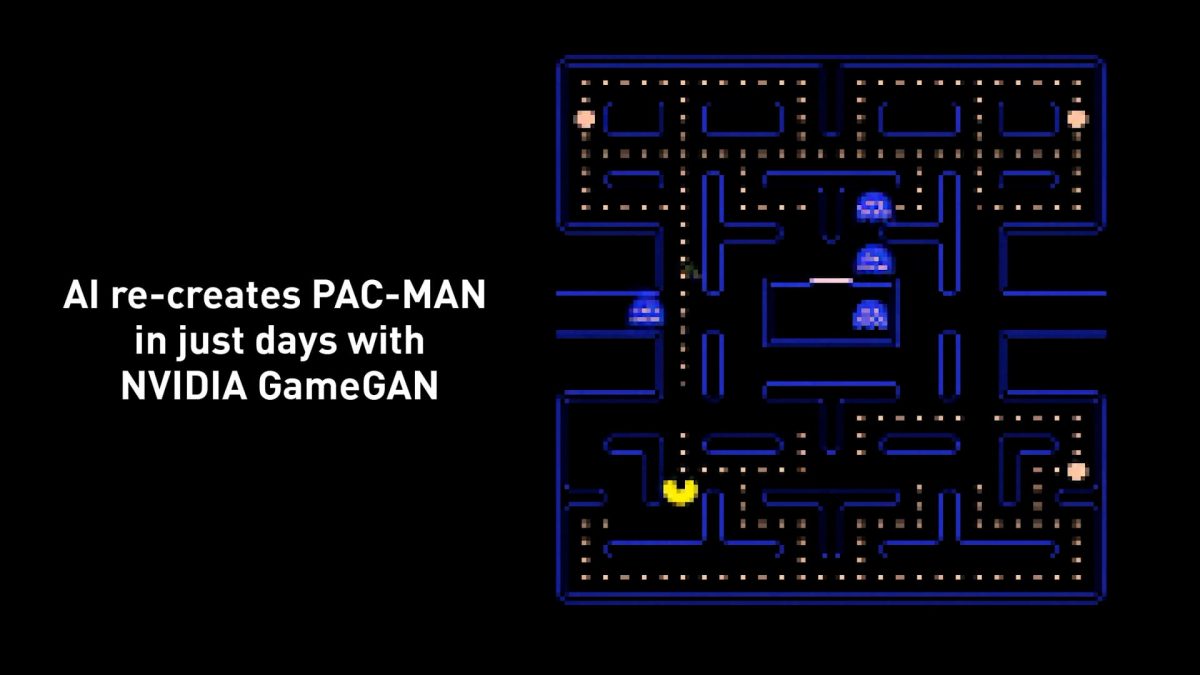

NVIDIA focuses on graphical games as a proxy for real-world environments. GameGAN is a generative model that learns to visually imitate the game by ingesting screenplay and keyboard actions during training. In the future, this approach could be applied to robotics, factory automation, or any number of other use cases. The output game functions as if it were a version of Pac-Man written with traditional code. Human players can play this GameGAN-created Pac-Man interactively because, although ML models tend to take days to train, they run very fast once deployed.
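Once trained, playing the game amounts to a simple loop: feed the current screen and the player's key press to the model, then display whatever screen it returns. The sketch below shows only that loop. The names `play` and `toy_model` are hypothetical, and `toy_model` is a hand-written stand-in for a trained generator (it just shifts a one-row "screen"), not a neural network.

```python
from typing import Callable, Iterable, List, Tuple

Frame = Tuple[int, ...]  # toy screen: one row of pixels
Model = Callable[[Frame, str], Frame]


def toy_model(frame: Frame, action: str) -> Frame:
    """Stand-in for a trained generator: shifts the row with wraparound."""
    if action == "left":
        return frame[1:] + frame[:1]
    if action == "right":
        return frame[-1:] + frame[:-1]
    return frame  # unknown actions leave the screen unchanged


def play(model: Model, first_frame: Frame, actions: Iterable[str]) -> List[Frame]:
    """Roll the model forward, one rendered screen per key press."""
    frames = [first_frame]
    for action in actions:
        # No game engine is consulted: the model's output IS the next screen.
        frames.append(model(frames[-1], action))
    return frames


if __name__ == "__main__":
    for frame in play(toy_model, (0, 1, 0, 0), ["right", "right", "left"]):
        print(frame)
```

Each new screen is generated from the model's own previous output, so the loop needs no separate game state.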

GameGAN is able to disentangle static and dynamic components within the images it observes, making the behavior of the model “more interpretable, and relevant for downstream tasks that require explicit reasoning over dynamic elements. This enables many interesting applications such as swapping different components of the game to build new games that do not exist,” the NVIDIA research team wrote. GameGAN is never told the objective of eating dots while not being eaten oneself. It is given no guidelines for what counts as a ‘win’, nor is it told that the player who controls Pac-Man must eat all the dots inside an enclosed maze while avoiding the ghosts. It infers the responses and then, for example, correctly turns the ghosts blue when the player eats a large flashing power pellet or capsule. But GameGAN has no underlying logic, understanding, or sense of ghosts, fruit, or power capsules: it just sees pixels.
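To make the idea of swapping components concrete, here is a minimal sketch of one common way to combine a static layer (the maze) with a dynamic layer (the moving characters): a per-pixel mask chooses which layer shows through. The article does not say how GameGAN does this internally, so the `composite` function, the mask, and the tiny 2×2 "images" are assumptions made purely for illustration.

```python
from typing import List

Image = List[List[int]]  # toy grayscale image as nested lists


def composite(static: Image, dynamic: Image, mask: Image) -> Image:
    """Per pixel: show `dynamic` where mask is 1, `static` where it is 0."""
    return [
        [m * d + (1 - m) * s for s, d, m in zip(s_row, d_row, m_row)]
        for s_row, d_row, m_row in zip(static, dynamic, mask)
    ]


if __name__ == "__main__":
    maze = [[1, 1], [1, 0]]        # static background (hypothetical values)
    other_maze = [[5, 5], [5, 5]]  # a different background to swap in
    sprites = [[9, 9], [9, 9]]     # dynamic layer
    mask = [[0, 0], [0, 1]]        # a sprite occupies only the bottom-right pixel

    print(composite(maze, sprites, mask))        # original game
    print(composite(other_maze, sprites, mask))  # same sprites, new maze
```

Because the two layers are kept separate, replacing the maze leaves the dynamic layer untouched, which is the kind of component swap the quote describes.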

Real World Use

NVIDIA’s aim is not only to honor Pac-Man 40 years after it was first launched but also to show how systems can learn through controlled observation. Before ML-based agents and robots are deployed in the real world, they need to undergo extensive testing in challenging simulated environments. Designing good simulators is therefore extremely important. For example, self-driving car systems need to pass hundreds of hours of simulation before actual road tests are performed. This is traditionally done by writing procedural models that generate valid and diverse scenes, along with complex behaviors, to see how the self-driving AI reacts to the actions of others. However, writing simulators that encompass a large number of diverse scenarios is extremely time-consuming and requires highly skilled experts. GameGAN, or some version of it, could prove invaluable in such real-world situations.

What is significant about GameGAN is how unsupervised the training was. Normally this type of work would involve a significant amount of supervision, such as access to agents’ ground-truth baseline. GameGAN just observed the output of the real game being played, combined with the keystrokes that were used to play the game.

The system was built with the PyTorch ML library and, as is typical of this style of work, training took approximately 4 days. As a side note, the team only simulated the visuals of the game, but Professor Sanja Fidler, who worked on the project and leads NVIDIA’s Toronto Research office, did laugh and agree that perhaps the next version could also learn the music of Pac-Man and all the electronic sound effects. Professor Fidler’s team is currently working on other games and expanding GameGAN further, but those projects are not public yet. While GameGAN viewed the output of another AI playing the original Pac-Man, her team had to test the GameGAN version personally, and Professor Fidler joked that “yes, NVIDIA pays us to play a lot of video games all day long”.