Latest from the Wikihuman project.

The Wikihuman team has just published its latest findings with the alSurface shader.

The “al” in alSurface refers to Anders Langlands (right), a VFX sequence supervisor currently at Weta Digital, who wrote a series of shaders for Arnold.

Langlands’ work is respected across the industry. He was formerly at MPC and worked on such films as X-Men: Apocalypse and Man of Steel.

One aspect of the alSurface shader that has been particularly praised by the community is how well it reproduces human skin. Langlands recently open sourced the shader itself, allowing others to implement it in their own rendering engines, and the team at Chaos Group implemented it in V-Ray.

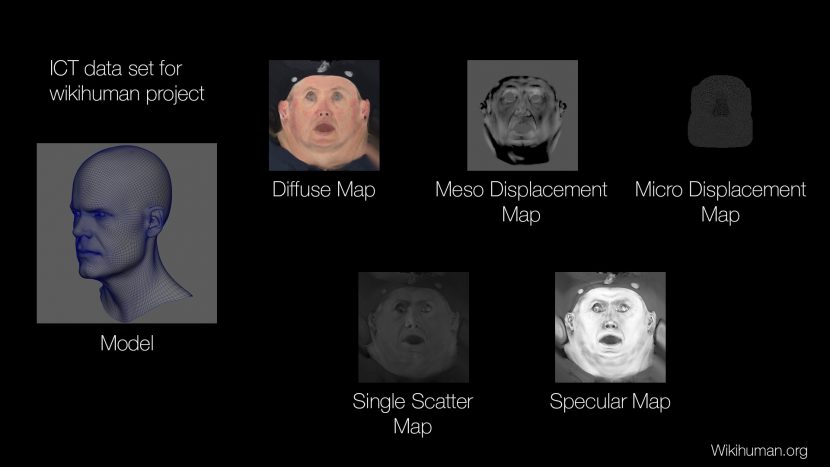

As regular readers will know, we are actively involved in supporting the Wikihuman project, which was born out of Chaos Group Labs and is spearheaded by Chris Nichols (Director of Chaos Group Labs), a good friend of fxguide and an ex-DD 3D expert who is widely respected in the industry. Chris and the team, as part of the open source Wikihuman project, have now applied the alSurface shader to one of the project’s newer data sets: our own Mike Seymour. Mike was scanned recently at USC ICT as part of USC’s ongoing contribution to the Wikihuman project.

(The alSurface shader is not restricted to Arnold or V-Ray; it is currently available for download from GitHub, with versions for Maya and 3ds Max, and no doubt more to come.)

To quote from the V-Ray blog about porting the alSurface shader to V-Ray:

Anders’ motivation was to implement production shaders for Arnold that he felt fit the VFX world’s needs beyond what Arnold provided natively. One of the most important shaders he wrote in the group is the alSurface shader. Also known as an uber-shader, this shader allowed the user to recreate most surfaces, including solid, metallic, transparent and reflective materials, and even those with sub-surface scattering, such as skin.

Based on a strong demand from a V-Ray user, Luc Begins, on the V-Ray Forum, and the fact that the shader was now open sourced, Vlado decided to implement parts of the alSurface shader, specifically, all the parts needed to reproduce skin.

It takes into account diffuse, 2 levels of specular, and sub surface scattering. The only parts that are missing are the refraction and translucency, which may be added at some later point.
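To make the list of components above a little more concrete, here is a toy Python sketch of how a layered skin result might be assembled from a diffuse term, a sub-surface term and two specular lobes. It is purely illustrative and is not the alSurface or V-Ray code: the `SkinLayers` class, the `combine` function and the `sss_mix` parameter are hypothetical names invented for this example, the inputs are single-channel intensities rather than colours, and no energy conservation is attempted.

```python
from dataclasses import dataclass


@dataclass
class SkinLayers:
    """Hypothetical per-sample contributions (single-channel intensities)."""
    diffuse: float     # plain diffuse reflection
    specular1: float   # first specular lobe
    specular2: float   # second specular lobe
    sss: float         # sub-surface scattering contribution


def combine(layers: SkinLayers, sss_mix: float) -> float:
    """Blend diffuse and SSS by sss_mix, then add both specular lobes.

    Assumption: a simple linear blend; a real shader is far more involved.
    """
    if not 0.0 <= sss_mix <= 1.0:
        raise ValueError("sss_mix must be between 0 and 1")
    base = (1.0 - sss_mix) * layers.diffuse + sss_mix * layers.sss
    return base + layers.specular1 + layers.specular2


if __name__ == "__main__":
    sample = SkinLayers(diffuse=0.4, specular1=0.05, specular2=0.02, sss=0.6)
    print(combine(sample, sss_mix=0.5))
```

Setting `sss_mix` to 0 gives a purely diffuse base and setting it to 1 gives a purely sub-surface base; in both cases the two specular lobes are added on top unchanged.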

The Wikihuman project has been working on understanding the issues involved in reproducing photorealistic humans. As part of this aim, the team is open sourcing specific Light Stage data sets, with some of the most accurate sampled geometry, reference and skin detail data available.

But the Wikihuman project’s aim is not just to render a single good image; it is to explore the ‘formula’ behind recreating realistic faces so that any serious artist can do it. The team wants to offer a solution that is more generic and less ‘secret sauce’ than has been widely available before. From the start, the Digital Human League or DHL (the team of artists behind the Wikihuman project) knew that the standard skin shader in V-Ray would not be ideal. So the DHL has been exploring new shaders that would better suit the Light Stage data acquired from ICT. The alSurface shader should theoretically use the Light Stage data more natively, which is ideal for the goal of making this process more widely available.

Based on the renderings you can see at the Wikihuman site, there are several key improvements with the alSurface shader. The overall sub-surface shading is more balanced and does not have any of the extra glows around the nose or lips that were seen in earlier tests.

More coming:

We really encourage you to check out all the technical details in Chris’s great blog post on the Wikihuman site, and keep an ‘eye’ out for more great data sets that will be coming, including a whole separate implementation ‘focused’ on human eyes!

Right is a shot of Mike getting his eyes scanned in Zurich for one of the next stages of the Wikihuman project.

Click here for a link to the Wikihuman web page

- Note the Wikihuman site has many more examples and comparisons between the shaders.

Earlier data sets used by the Digital Human League for testing included Emily 2.1. The core reference source for the Wikihuman testing has always been data acquired from the Light Stage at ICT.

Earlier renders using the Emily data set:

As always, this work is done as part of an effort to contribute to the community and is not ‘owned’ by Chaos Group, but their generous support is greatly appreciated. Wikihuman is open to all under a Creative Commons license for non-commercial direct use.

Great, I’ve been waiting for this. Really looking forward to playing with Mike’s head!

I can’t wait for the eyes! Thank you so much for sharing this research!