DI4D has just launched PURE4D, a new efficient and scalable 4D facial capture solution. This new pipeline allows developers to use high-fidelity 4D facial performance capture to drive high volumes of in-game animation for realistic digital-double characters. PURE4D brings to large-scale video game projects the level of fidelity and realism previously only available for movie visual effects and pre-rendered cinematics. Using the company’s DI4D PRO system, a head-mounted camera, and new machine learning technology, PURE4D is able to deliver high-fidelity facial animation at scale.

The launch demo video above shows the capture and animation fidelity of the new PURE4D, which can be used in any engine or game.

DI4D’s 4D technology is already used by the world’s leading studios in video games and movies, including Activision, Digital Domain, MPC, Remedy Entertainment, Electronic Arts, and many more. As the company’s name suggests, its focus is on high-fidelity, accurate, time-based animation: the data that new engines and renderers demand to deliver nuanced performances. DI4D has a seated PRO capture system with nine 12-megapixel cameras that captures the highest fidelity 4D facial data of the performer. This system has proven itself in countless high-end productions. Today the PRO is mainly used for technical or machine learning data or as a source of facial rigging data, but it is “very rarely used for performance capture, just because of the nature of performances, and that you don’t get good quality natural performance, but it’s always been our desire to get that level of fidelity in the data we provide from a head-mounted system,” comments Colin Urquhart, co-founder and CEO of DI4D.

Advancements in game platforms such as PS5 and the increasing graphical sophistication of video game engines such as UE5 are blurring the line between pre-rendered cinematics and in-game characters rendered in real-time. The company was formed in 2003 in Glasgow, UK, and has since expanded to Los Angeles, CA. DI4D’s 4D performance capture services are used in VFX for movies, television, video games, and advanced research applications. Recent projects include the Warner Brothers movies Blade Runner 2049 and Fantastic Beasts and Where to Find Them, the Netflix animated anthology Love, Death & Robots, and the Microsoft Studios video game Quantum Break, with several more projects currently in production or awaiting a post-COVID release.

In-game digital humans have become more realistic with every generation of platforms, and this trend will be accelerated by the launch of the new generation of more powerful video game consoles and game engines. However, this creates a key challenge for video game developers: how to generate ever more detailed and realistic content while staying within budget?

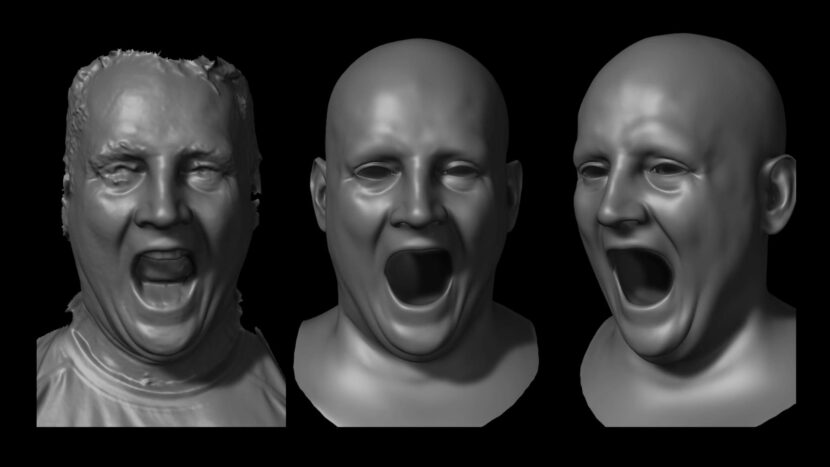

The PURE4D system uses DI4D’s proprietary mesh tracking solution to combine accurate facial performance data from DI4D HMC (head-mounted camera) systems with even higher fidelity expression data from DI4D PRO. The system then deploys advanced machine learning technology to scale to the large volume of character animation that is typical of a AAA game.

The DI4D HMC weighs about 1kg, which is on the heavier side, but it is well balanced and has camera data specifications similar to the Technoprops rig. The DI4D HMC has stereo 4-megapixel cameras (2K x 2K) recording at up to 60fps, although given the portrait nature of a human face the DI4D system records 2K x 1.5K. The system works with a belt or back-mounted CPU unit, and this was the unit used to deliver 4D data for DI4D’s most recent work. The company experimented with having a much larger array of cameras mounted on an HMC, but it “defeats the point of the exercise of using a helmet, as it just becomes too heavy to wear,” explains Urquhart. Without the ability to mount more cameras on the actor, the company turned to machine learning to provide the sort of high-quality 4D data it is known for from its seated multi-camera rigs.

The new PURE4D pipeline consists of:

- The DI4D PRO system, which is used to acquire high-fidelity 4D facial expression data of each actor.

- Head-mounted DI4D HMC systems, which are then used to capture accurate 4D facial performance data, often from multiple actors, simultaneously with full-body motion capture.

- Machine learning algorithms, which efficiently combine both sets of data to significantly increase the scalability of the solution.

The pipeline aims to capture nuanced and subtle expressions from the actor’s facial performance and faithfully transfer them to the in-game animation of digital-double characters. First, an actor performs a range of motion (ROM) and sample lines in the PRO system, which establishes the baseline solution space for that actor. “Then we capture the performance with the head-mounted (HMC) camera system. We create the base 4D data from the HMC cameras and then solve it to those shapes that we pulled from the PRO session,” explains Urquhart. “The idea is that you get the best of both worlds. You get the higher fidelity from the PRO system, but the natural performance from HMC.” At this stage, there would normally be a fair amount of manual cleanup, especially around blinks and the actor’s eyelids. Because manual cleanup limits scaling to the large volumes of work needed for games, the last stage is a new A.I. cleanup. “We’ve been working on this for several years,” he explains. “Now we can use new machine learning to more efficiently produce the 4D data without the more traditional manual tracking process, which is critical for large projects.”
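DI4D's solver is proprietary and its details are not described here, but the general idea of solving tracked head-mounted data to a set of actor-specific shapes can be illustrated with an ordinary least-squares fit. The sketch below is an assumption for illustration only: the function names, the synthetic mesh, and the use of plain least squares are all hypothetical and are not DI4D's method.

```python
import numpy as np

def solve_shape_weights(neutral, shapes, frame):
    """Find weights w so that neutral + sum_k w[k] * (shapes[k] - neutral) best fits frame.

    neutral: (V, 3) rest mesh, shapes: (K, V, 3) expression meshes,
    frame: (V, 3) tracked mesh for one captured frame.
    """
    deltas = (shapes - neutral).reshape(shapes.shape[0], -1).T  # (3V, K) basis matrix
    target = (frame - neutral).reshape(-1)                      # (3V,) offset from rest
    weights, *_ = np.linalg.lstsq(deltas, target, rcond=None)
    return weights

def reconstruct(neutral, shapes, weights):
    """Rebuild a mesh from the rest pose plus a weighted sum of shape offsets."""
    return neutral + np.tensordot(weights, shapes - neutral, axes=1)

# Synthetic stand-ins (hypothetical data, not real capture output).
rng = np.random.default_rng(0)
V, K = 200, 5
neutral = rng.normal(size=(V, 3))                          # stand-in for the actor's rest mesh
shapes = neutral + rng.normal(scale=0.1, size=(K, V, 3))   # stand-in for seated-session shapes
true_w = np.array([0.7, 0.0, 0.2, 0.5, 0.1])

# A noisy "head-mounted" frame that lies close to the span of the shapes.
hmc_frame = reconstruct(neutral, shapes, true_w) + rng.normal(scale=1e-4, size=(V, 3))

w = solve_shape_weights(neutral, shapes, hmc_frame)
solved = reconstruct(neutral, shapes, w)
```

In this toy setup the recovered weights land close to `true_w`, and `solved` is the frame re-expressed purely in terms of the higher-fidelity shapes. A production solver would likely add constraints, temporal smoothing, and the learned cleanup stage the article mentions.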

PURE4D is designed to meet the requirements of large-scale next-gen game projects that require thousands of seconds of high-quality facial animation. The company has just delivered its first project using this new technology, reducing the whole process of generating over an hour of 4D data to a couple of months, which is far more data than the company was previously able to produce at this quality level. DI4D is now able to handle projects with multiple hours of high-quality 4D data while maintaining high fidelity and accurate performance capture.

PURE4D does not require a traditional facial animation rig as part of the capture process. Until now, providing this level of detail and realism was only possible for big-budget film projects. PURE4D faithfully captures an actor’s facial performance without the need for artist corrections, and it can be delivered as realistic facial animation ready for in-engine use.

The DI4D machine learning code was all developed in-house by the company’s Glasgow-based R&D team. The LA office is mainly a client-facing service office.

Real-time

Urquhart says the process is not real-time, but now that the company has implemented strong machine learning, the technology does open the door to doing something in real-time in the future. “It is an aspiration that we’ll be able to do real-time processing of data at some point, but that’s not something that we’re quite at that stage of confidence to do just yet.” For now, the company is keen to bring the quality it has been providing for pre-rendered cinematics to the whole game experience, rendered in real-time, but it is not yet ready to process data in real-time during a live capture.

There are interesting uses of 4D data in real-time applications. “Pulling the facial shapes from the code, from the PRO data to create a simple shape rig is actually a super-efficient delivery mechanism for real-time,” Urquhart points out. In this application, one doesn’t necessarily get exactly ‘4D data’, where every single frame is a different shape. Instead, each frame is a combination of shapes, but because “that is what we call that an ‘unconstrained rig’, you can see that the shapes are still directly from the actor’s face when you solved it, and so you are still very faithful to the original performance,” he explains. “It is really exciting, and these are the new types of projects we seek to address, and not just the pure cinematics and VFX that we’ve done in the past.”
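One way to see why a shape rig can be an efficient delivery format is to compare how many numbers must be stored for per-frame meshes versus a fixed set of shapes plus per-frame weights. The sketch below is a back-of-the-envelope illustration only: the vertex count, shape count, and clip length are hypothetical values chosen for the arithmetic, not figures from DI4D.

```python
def per_frame_mesh_floats(num_frames, num_vertices):
    """Floats needed when every frame stores its own full mesh (x, y, z per vertex)."""
    return num_frames * num_vertices * 3

def shape_rig_floats(num_frames, num_vertices, num_shapes):
    """Floats needed for a rest mesh, a fixed set of shapes, and per-frame weights."""
    static_data = (num_shapes + 1) * num_vertices * 3  # shapes plus the rest mesh
    animation_data = num_frames * num_shapes            # one weight per shape per frame
    return static_data + animation_data

# Hypothetical numbers for illustration only.
frames = 60 * 60     # one minute of animation at 60 fps
vertices = 10_000    # assumed face mesh resolution
shapes = 200         # assumed number of actor-specific shapes

raw = per_frame_mesh_floats(frames, vertices)
rig = shape_rig_floats(frames, vertices, shapes)
ratio = raw / rig

print(f"per-frame meshes: {raw:,} floats")
print(f"shape rig:        {rig:,} floats")
print(f"reduction:        {ratio:.1f}x")
```

Under these assumed numbers the shape rig needs 16 times fewer values for a single minute, and the gap widens with longer clips because the shapes are stored once while only the small weight vectors grow with frame count.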