DigiPro once again delivered an exceptional set of production-focused talks to a richly talented and senior group of 400 TDs, production professionals, and VFX supervisors. fxguide has been covering DigiPro since the first-ever event, held at DreamWorks in 2012, and since then we have also been an official photographer of the event. Here is a selection of images from this year’s event.

Kelsey Hurley (Disney) and Valérie Bernard (Animal Logic), this year’s Symposium Co-Chairs, did a great job leading off the day and welcoming so many people back post-COVID.

Program Co-Chairs Per Christensen and Cyrus A. Wilson put together a brilliant day of talks.

Almost every talk was live and extremely well presented, providing a wealth of production information. Below are a few highlights from the day.

KEYNOTE:

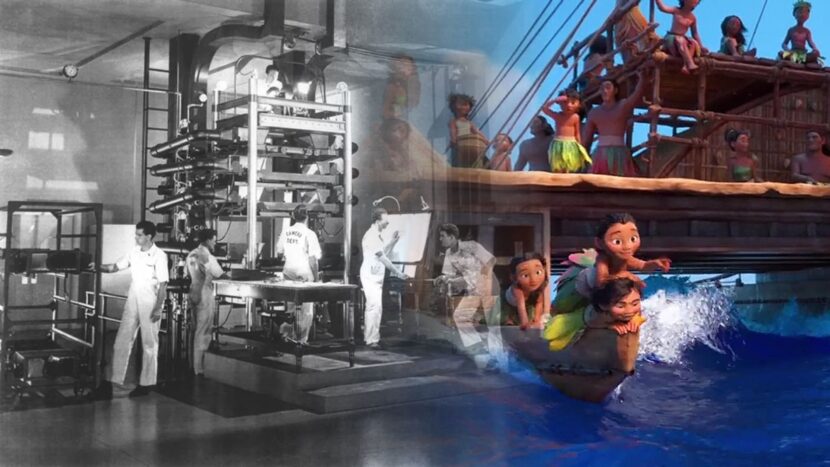

Walt Disney Animation and the 100 years union of Art and Tech

Marlon provided one of the true highlights of the day: an animator’s personal perspective on the role of technology as it moved into traditional animation at Disney. Marlon first joined Disney to work on the original Lion King, and he mapped the introduction of technology from then until today. Far from a dry technical lecture, his engaging and personal account of how various films addressed problems with more and more CGI was perhaps the most entertaining talk of the day. Speaking candidly and with genuine enthusiasm, Marlon reflected on the massive traditions of Disney Animation and respectfully illuminated the skills of both the animators and the technical teams that constantly innovate at Disney.

Joe Letteri:

Technology And Innovation In Avatar: The Way Of Water

This talk provided a comprehensive look at the innovative technology and workflows developed for Avatar: The Way of Water. Multiple Oscar winner Joe Letteri covered Wētā’s approach to R&D, actual shot production, and how the teams came together to develop a fundamentally new pipeline for the show. The talk included how Wētā integrated AI systems into their artists’ workflows without sacrificing full artist control. Also included was an overview of on-set innovations, including new capture systems and the neural-network-driven depth compositing workflow, as well as Wētā’s new facial system (APFS) and the integrated multi-physics solver Loki.

Joe Letteri is one of the industry’s most influential figures, yet he has a wonderfully inclusive way of talking to a professional audience. Rather than ‘showing off’ the incredible innovations of Avatar 2, his presentation was that of someone sharing knowledge with his community, without ever talking down to the audience.

In addition to a great talk on cloud computing at Wētā FX, the afternoon session included another Wētā presentation on Avatar 2: Pahi, Wētā FX’s unified water pipeline and toolset. This session focused on just a subset of the topics Joe had covered in the earlier session, but in much more detail. Pahi allows procedural blocking visualization for preproduction; simulation of various water phenomena, from large-scale splashes with airborne spray and mist, underwater bubbles, and foam to small-scale ripples, thin film, and drips; and a compositing system to combine different elements together for rendering. Rather than prescribing a one-size-fits-all solution, Pahi encompasses a number of state-of-the-art techniques, from reference engineering-grade solvers to highly art-directable tools.

Nicholas Illingworth, Effects Supervisor at Wētā FX, was one of the speakers in the second Avatar session. fxguide spoke to him after the event to get a brief overview of the workflow for underwater shots. Most of their talk at DigiPro focused on surface interactions with water, such as breaking the surface, spray, waves, and dynamic interaction.

Underwater Simulation

“For the FX team, unless we were entering or exiting the water we wouldn’t traditionally do a water solve for the water volume. What we could do is create a product we referred to as a ‘physEnv package’,” Nicholas Illingworth explained. This was largely derived from the procedural (FFT) ocean waves. It carries useful information such as wind speed and direction, and the orbital velocity motion of the given waves, with some falloff with depth. “This was very handy for our creatures team, for their muscle, skin, cloth, and hair solves.” The production shot extensive footage in the Bahamas, some of which included actors underwater being propelled at high speeds. “Jim (director James Cameron) absolutely loved the rippling in the skin/fascia, and this was a feature he requested in the fast-moving underwater shots.” The density of the water was largely a uniform 1000 kg/m³; the team didn’t adjust it for things such as salinity or temperature, for simplicity and to avoid unnecessary mathematical complexity. “For shots where we did do a water solve, at the surface, etc., this physEnv package would be used by motion to gauge eyelines for waves or splashes, and the creatures team would use this solve to drive their hair and costume solves, and in some cases, skin and fascia.” For the solved physEnv package, he adds, “this would be an SDF and velocity field for the Creatures team and a geometric representation for the animation teams.”
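To make the idea of wave orbital velocity with depth falloff concrete, here is a minimal sketch using textbook deep-water linear (Airy) wave theory. It is not Wētā’s physEnv implementation: the function names, the 2D setup, and the choice of deep-water theory are all assumptions made for illustration.

```python
import math

G = 9.81                # gravitational acceleration in m/s^2
WATER_DENSITY = 1000.0  # kg/m^3, the uniform value quoted above (unused here, for reference)


def orbital_velocity(amplitude, wavelength, x, z, t):
    """Velocity (u, w) in m/s under one deep-water linear wave.

    Hypothetical helper, not a Wētā API. x is the horizontal position,
    z is the height relative to the rest surface (z <= 0 is underwater),
    and t is time in seconds.
    """
    k = 2.0 * math.pi / wavelength   # wavenumber
    omega = math.sqrt(G * k)         # deep-water dispersion relation
    phase = k * x - omega * t
    decay = math.exp(k * z)          # motion fades exponentially with depth
    u = amplitude * omega * decay * math.cos(phase)
    w = amplitude * omega * decay * math.sin(phase)
    return u, w


def physenv_velocity(waves, x, z, t):
    """Sum the contributions of several (amplitude, wavelength) components."""
    u_total = 0.0
    w_total = 0.0
    for amplitude, wavelength in waves:
        u, w = orbital_velocity(amplitude, wavelength, x, z, t)
        u_total += u
        w_total += w
    return u_total, w_total
```

Sampling a function like this on a grid would give a velocity field that downstream hair, cloth, or skin solves could read without running a full water simulation. In this model, the motion at a depth of half a wavelength has already decayed by a factor of exp(-π), so short waves only disturb the top of the water column.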

Bubbles

The team had several different ways to do bubbles. There were tiny skin bubbles that would stick to micro details on the face, but these wouldn’t need to be ‘solved’ as they were largely instanced. “If we were simulating hero bubbles, exhalation, that sort of thing, we would solve the water around the bubbles for accurate drag/pressure etc.,” he explained. “Small diffuse bubbles, say one of our whale-like creatures or Tulkun broke the surface and entrained a bunch of air, we would solve quite a deep water volume so we could capture the small details, vortices, etc. that could later drive our diffuse bubble sims.” Nicholas notes that the team never actually referred to the creatures as whales “or Jim would get mad”.

Rendering

Wētā’s team would usually render a water volume with an IOR of 1.33; this could be changed from time to time, but not very often. There were controls for things like turbidity, so they could dial that depending on whether it was a deep open-ocean shot or something in a shallow reef. “The water volume itself was homogeneous, and we’d get textural breakup either in the lighting pass or with some comp tricks like deep noise to help get some spatial variation.” The character beauty renders were rendered with this water volume, along with the FFT/procedural ocean waves. This helped when compared to some of the dry-for-wet shoots, which could have a large specular component on skin and eye lights that one wouldn’t expect to see underwater. “Caustics were a huge help to sell direction of travel and motion, along with cloud gobos used in Lighting, to help have some spatial variation in pools of light and dark. Biomass and small fish would also help give a sense of scale and travel.”
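Two pieces of standard optics sit behind these rendering choices, and a short sketch can show both: Beer-Lambert attenuation through a homogeneous volume, and Fresnel reflectance at normal incidence. This is not Wētā’s renderer code. The skin IOR of 1.4 and the extinction coefficients are illustrative assumptions, and the function names are hypothetical.

```python
import math

WATER_IOR = 1.33  # value quoted in the article
AIR_IOR = 1.0
SKIN_IOR = 1.4    # assumed, a commonly used approximate value for skin


def transmittance(extinction_per_m, distance_m):
    """Beer-Lambert: fraction of light surviving a homogeneous medium."""
    return math.exp(-extinction_per_m * distance_m)


def fresnel_normal_incidence(n_outside, n_surface):
    """Reflectance for light hitting a surface head-on."""
    return ((n_outside - n_surface) / (n_outside + n_surface)) ** 2


# A turbidity control can be read as scaling the extinction coefficient.
# Both coefficients below are made-up numbers for illustration only.
clear_ocean = transmittance(0.05, 10.0)   # clearer, open-ocean style water
murky_reef = transmittance(0.40, 10.0)    # more turbid water

# Specular reflectance of skin when surrounded by air versus by water.
skin_in_air = fresnel_normal_incidence(AIR_IOR, SKIN_IOR)
skin_in_water = fresnel_normal_incidence(WATER_IOR, SKIN_IOR)
```

Under these assumptions, the reflectance of skin in water is more than an order of magnitude lower than in air, because the two indices are so close. That is consistent with the observation above that strong specular highlights from dry-for-wet shoots look wrong underwater.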

Water FX Reel 2023 from Alexey Stomakhin on Vimeo.

Magenta Green Screen:

Spectrally multiplexed Alpha Matting (with AI re-colorization)

Dmitriy Smirnov, Research Scientist, presented Netflix Magenta Green Screen, an innovative tool for creating ground truth green screen comps and mattes for possible training data applications.
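As a rough illustration of what “spectrally multiplexed” matting can mean, the sketch below assumes the foreground is lit only with red and blue (magenta) light in front of a pure green backdrop, so the camera’s green channel records only how much backdrop shows through each pixel. This is a simplified per-pixel model written for this article, not Netflix’s implementation. The function names are hypothetical, and the AI step that restores the foreground’s green channel is deliberately left out.

```python
def clamp01(value):
    """Clamp a number to the range [0, 1]."""
    return max(0.0, min(1.0, value))


def separate_pixel(rgb, backdrop_green):
    """Split one camera pixel into a matte and the lit foreground channels.

    Assumes the foreground contributes nothing to green and the backdrop
    contributes nothing to red or blue (an idealized setup).
    Returns (alpha, (premultiplied_red, premultiplied_blue)).
    """
    r, g, b = rgb
    # Green seen by the camera is the backdrop scaled by (1 - alpha).
    alpha = clamp01(1.0 - g / backdrop_green)
    return alpha, (r, b)


def composite_red_blue(alpha, fg_red_blue, new_background_rgb):
    """Place the premultiplied red and blue over a new background.

    The green channel is omitted because it would need recolorization.
    """
    fg_r, fg_b = fg_red_blue
    bg_r, _, bg_b = new_background_rgb
    return (fg_r + (1.0 - alpha) * bg_r, fg_b + (1.0 - alpha) * bg_b)
```

In this idealized model the matte comes directly from a single channel rather than from a keyer’s estimate, which is what would make the result usable as ground truth.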

See our upcoming fxguide ‘Synthetic Data’ story for more information on this unique new approach.

ILM StageCraft

LED volume virtual production brings its own unique set of challenges that differ from typical applications of real-time rendering. ILM StageCraft is a suite of virtual production tools that incorporates powerful off-the-shelf systems, such as Unreal, with proprietary solutions.

To meet the demanding requirements for The Mandalorian season 2 and beyond, Industrial Light & Magic (ILM) developed and augmented proprietary technology in StageCraft 2.0, including a new real-time rendering engine, Helios, and a collection of interactive tools.

Jeroen Schulte and Stephen Hill presented a very entertaining and informative overview of StageCraft, from the original version 1 in 2018, which used a modified UE 4.20, to the present day. They discussed Helios and Helios 2.0, including support for Vulkan GPU-accelerated ray tracing such as the team used on Obi-Wan Kenobi. This was the first show to use only Helios 2.0, along with StageCraft’s advanced CC tools, higher performance, and ray-traced shadows and reflections.

Helios’ under-the-hood geometry works with ILM’s hero-asset LOD system, making the loop from production to real-time assets and back again very tight.

The team also discussed how backgrounds are first-class in the ILM system, covering their Nuke-to-Helios pipeline in Zeno. Of real interest were the advances in haze shots, or participating media, based on the system developed for the virtual reality project CARNE y ARENA and more recently used on The Mandalorian season 2. StageCraft has worked hard to have on-screen haze match the haze in the volume, even working with 3D color correction volumes.

From Around the Day:

And …