fxguide visited scanning company Avatar Factory on location in Melbourne to get 3D scanned. The company operates a mobile scanning truck, which allows it to scan at remote locations. Typical projects involve both hero actor scanning and ‘catalog work’, in which every prop on a production is scanned for later reference. The team handles capture and initial model creation in Reality Capture, and supplies low-, medium- or high-resolution project files along with the source material.

The rig is about a year old, and its first job was God’s Favorite Idiot, shot in Byron Bay. More recently the team scanned for La Brea, shot in Victoria, and it is believed they will soon be returning for season 2.

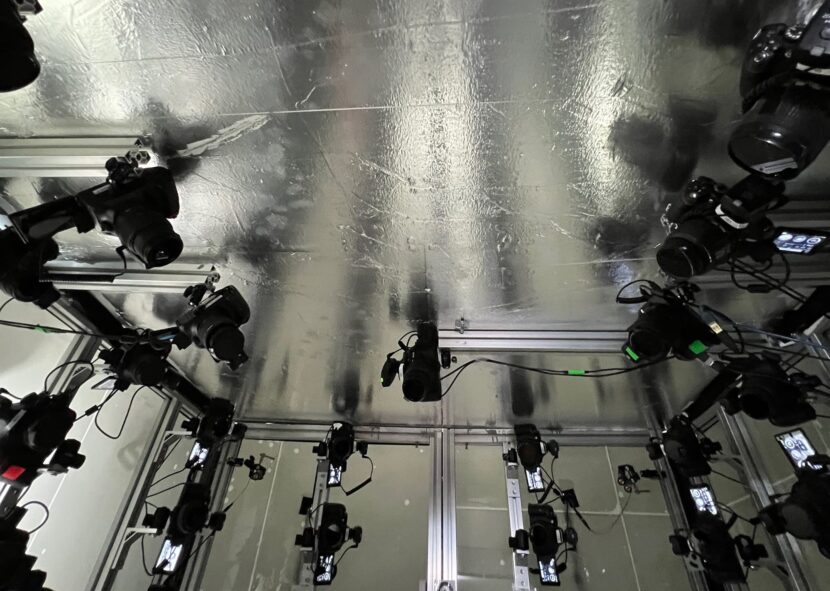

The Avatar Factory truck uses a photogrammetry approach with cross-polarized scanning. The lighting is provided by translucent walls, behind which are a set of strobe lights. The rig is built so no camera will experience lens flare, which is normally a major issue given the cameras are pointing in literally every direction.

These lights and the walls provide polarized light. All the cameras are cross-polarized to cancel this original light out. However, polarized light becomes unpolarized when it hits and bounces off the actor. Thus the subject is lit and the background of the truck appears dark. The result is an image of the actor’s albedo, without clear shadows or highlights. While this should work, there is still the issue of the floor and ceiling. Light bouncing off these surfaces would also become unpolarized and, as a result, spill general light in and around the scanning rig. Here Ruff admits that his earlier physics education became invaluable. He recalled that metallic surfaces do not depolarize the light, so he covered the floor and ceiling in silver film. This preserves the polarization of the bounce light and provides valuable fill for the actor, helped even more by raising the actors up on a small riser so they are a few inches off the floor.
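The cancellation described above follows from Malus’s law: an ideal linear polarizer passes a fraction cos²θ of already-polarized light, and half of unpolarized light. The short Python sketch below is only an idealized illustration of that principle, not a model of the actual rig; the function names are hypothetical and real filters and surfaces are far less perfect.

```python
import math

def transmitted_polarized(intensity, angle_deg):
    """Malus's law: fraction of linearly polarized light passed by an ideal
    linear polarizer set at angle_deg to the light's polarization axis."""
    return intensity * math.cos(math.radians(angle_deg)) ** 2

def transmitted_unpolarized(intensity):
    """An ideal linear polarizer passes half of unpolarized light."""
    return intensity / 2.0

def camera_signal(still_polarized, depolarized, filter_angle_deg=90.0):
    """Light reaching a sensor behind a crossed (90 degree) filter.
    still_polarized: light that kept the source polarization
                     (walls seen directly, specular highlights).
    depolarized:     light scattered diffusely by the subject."""
    return (transmitted_polarized(still_polarized, filter_angle_deg)
            + transmitted_unpolarized(depolarized))

# The lit wall seen directly is cancelled; the diffusely lit actor is not.
wall = camera_signal(still_polarized=1.0, depolarized=0.0)
actor = camera_signal(still_polarized=0.0, depolarized=1.0)
print(f"wall: {wall:.3f}, actor: {actor:.3f}")
```

In this idealized picture the same crossed filter also rejects any specular reflection that keeps the source polarization, which is why the captured texture shows so few highlights.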

Below is a ‘normal’ density model for review, produced at just 25M tris. A ‘high’ density model of someone of similar stature would typically be in the order of 90-110M tris, delivered along with 16-bit 8k EXR textures in either sRGB or ACES format.
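To give a rough sense of scale for those textures, the sketch below estimates the uncompressed size of a single map. It assumes that ‘8k’ means 8192 × 8192 pixels and that the file holds three 16-bit channels; neither detail is stated by Avatar Factory, and EXR compression would normally make the file on disk smaller.

```python
def uncompressed_texture_bytes(width=8192, height=8192,
                               channels=3, bits_per_channel=16):
    """Raw pixel payload of one texture, ignoring headers and compression.
    The defaults are assumptions: a square 8k map with three 16-bit channels."""
    return width * height * channels * bits_per_channel // 8

size = uncompressed_texture_bytes()
print(f"{size / 2**20:.0f} MiB per texture")  # 384 MiB under these assumptions
```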

The scanning environment inside Avatar Factory’s truck houses 162 Nikon DSLRs. Many are D5200 models (24MP), but the key top-end cameras are eight D850s, each with a 45.7MP full-frame CMOS sensor. “We’ve got three different models of cameras and three different lenses. So even though they’re pretty consistent, there’s still a little bit of color science that needs to be incorporated to create a calibrated raw file or sRGB file,” explains Ruff. “You need so many cameras so you can accurately model into the folds of costumes. For example, if someone is wearing a very large coat with very deep folds in it, then we need to see into the cavity behind the fold that may be occluded for many of the other cameras.”

An additional problem is that photogrammetry needs to find matching points in the images from each camera’s point of view. In our test, you may notice the large black unreflective sweater that Mike is wearing. This featureless cloth is at the edge of what can be resolved photogrammetrically. Ruff previously faced an even harder problem: a person in a completely black costume. “We had one experience on a shoot where we were provided with an actor who was in absolutely featureless black, head to toe, including black boots and a black motorcycle helmet,” recalls Ruff. Naturally, such a costume provides no clear patterns for the photogrammetric reconstruction to use. As a result, the team developed a method that uses lasers to provide an additional metadata set describing the actor’s topology. The laser signal is split, and thus weak, so the process requires a precisely timed pre-capture a split second before the main capture.

Avatar Factory uses CaptureGRID software for file management, which identifies each camera by its serial number. The cameras had previously been triggered via a complex wiring setup, but Ruff has now moved to a wireless signal to precisely trigger and synchronize them. The team primarily does full-body scans, but as a subset of 54 cameras is aimed at the actor’s face, the same rig can also provide dedicated FACS facial scans without re-rigging or any significant additional setup time.
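Keying everything to the camera serial number can be pictured as a simple lookup table. The Python sketch below is purely illustrative and is not CaptureGRID’s API: the serial numbers, position labels, group names and file-naming scheme are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CameraSlot:
    position: str  # hypothetical label for where the camera sits in the rig
    group: str     # hypothetical grouping: "face" or "body"

# Hypothetical serial numbers mapped to rig slots.
RIG = {
    "3001234": CameraSlot("A01", "face"),
    "3001235": CameraSlot("A02", "face"),
    "6005678": CameraSlot("F12", "body"),
}

def output_name(serial, take):
    """Build a file name that ties a raw frame to its take and camera."""
    slot = RIG[serial]
    return f"take{take:04d}_{slot.position}_{serial}.nef"

def face_subset(rig):
    """Serials of the cameras aimed at the face, e.g. for a facial-only solve."""
    return sorted(serial for serial, slot in rig.items() if slot.group == "face")

print(output_name("3001234", 7))  # take0007_A01_3001234.nef
print(face_subset(RIG))           # ['3001234', '3001235']
```

A table like this stays valid if a camera body is swapped or moved, since only one entry needs to change.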

The requirements of a visual effects team can vary, but once the data has moved to the workstations and been logged, the standard pipeline is to convert it to a model using Reality Capture Enterprise. The raw data and the output models are then uploaded for use by the commissioning VFX team.

As fxguide was leaving, the Avatar Factory truck was heading to its next shoot at Docklands Studios Melbourne, where the team was able to bump in, capture 60 scans and bump out all in the same day, complete with its own 3-phase power and 33 kVA generator, as a fully stand-alone unit.