UE5.2

At today’s State of Unreal keynote at GDC 2023, Epic Games revealed demos, details and plans for UE 5.2, MetaHuman Animator, UE for Fortnite and much more.

Unreal Engine is the backbone of the Epic ecosystem. Since the launch of UE5 last spring, 77% of users have moved over to UE5. Unreal Engine 5.2 offers further refinement and optimizations alongside several new features, including the new Substrate shading system, which gives artists the freedom to compose and layer different shading models to achieve levels of fidelity and quality not previously possible in real time.

Substrate ships in 5.2 as an ‘Experimental’ feature, ahead of its full release. The State of Unreal demo showcased the latest technology with a Rivian R1T all-electric truck. The vehicle demonstrated physics, with the truck’s digi-double exhibiting precise tire deformation and independent air suspension as it bounded over rocks and roots. It also splashed through mud and puddles with realistic fluid simulation and water rendering.

Additionally, the demo showed a large open-world environment that artists built with procedural tools, extending the small, hand-placed environment where the demo began. Also shipping as ‘Experimental’ in 5.2, new in-editor and run-time Procedural Content Generation (PCG) tools enable artists to define rules and parameters to quickly populate expansive, highly detailed spaces that are art-directable and work seamlessly with hand-placed content, adjusting around it and to it.

MetaHuman Animator: high-fidelity performance capture

One of the most significant demonstrations and announcements was MetaHuman Animator, a new feature set for the MetaHuman framework. The new animator module and its matching new LiveLink provide a fast and accurate way to produce artist-friendly facial animation.

MetaHuman Animator enables artists and TDs to reproduce facial performance as high-fidelity animation on MetaHuman characters.

The new system can use either a standard iPhone or stereo helmet-mounted cameras (HMC) to capture the individuality, realism, and fidelity of the performance, transferring its detail onto any MetaHuman.

This offers a faster, simpler workflow that anyone can use, regardless of animation or MoCap experience. MetaHuman Animator can produce the quality of facial animation required by AAA game developers and filmmakers, while at the same time being accessible to indie studios and even hobbyists.

MetaHuman Animator is designed to work with an iPhone or with any professional vertical stereo HMC capture solution, including those from Technoprops, with the latter delivering even higher-quality results. For studios with MoCap suits such as Xsens for body capture, MetaHuman Animator supports timecode, so the facial animation can be aligned with body motion capture and audio to deliver a full character performance.

If you’ve used Mesh to MetaHuman, you’ll know that part of that process creates a MetaHuman Identity from a 3D scan or sculpt; MetaHuman Animator builds on that technology to enable MetaHuman Identities (DNA) to be created from a small amount of captured footage. The process takes just a few minutes and only needs to be done once for each actor. It builds the base from just three images: a neutral expression looking straight ahead, then neutral turned to the right, and then to the left. In addition, an image showing the actor’s teeth can be used for teeth fitting.

The resulting MetaHuman Identity is used to interpret the performance by tracking and solving the positions for the MetaHuman facial rig. The result is that subtle expressions are accurately retargeted onto any MetaHuman target character, regardless of the differences between the actor and the ‘standard’ MetaHuman’s features. In UE5 you can visually confirm the performance and compare the animated MetaHuman Identity with the footage of the actor, frame by frame.

The animation data is clean and the control curves are semantically correct, so they can be adjusted, edited, or tweaked if required for artistic purposes.

Since the process uses the standard MetaHuman Identity, the data will remain valid and keep working as improved versions of the MetaHuman format are released in the future.

MetaHuman Animator’s processing capabilities will be part of the MetaHuman Plugin for Unreal Engine, which is free to download. MetaHuman Animator works with iPhone 11 or later. There is also a new free Live Link Face app for iOS, which has some additional capture modes to support this workflow.

To complete the animation, the system can use the recorded audio to produce convincing tongue animation using machine learning.

The new system has two primary strengths: the speed of delivering animator-friendly facial capture without significant cost, and the fidelity and accuracy of those results. The hope is that this new LiveLink and MetaHuman Animator may get faster over time and, while not discussed by Epic, lead to real-time applications. As with all MetaHuman setups, the underlying rig is universal and interchangeable. While the GDC demo used a bespoke rig from Ninja Theory for Melina Juergens’ demo, the animation will normally allow for extensive retargeting and reuse, especially in areas such as previz and background characters. The system also works equally well with non-realistic characters that adopt the MetaHuman rig.

Unreal Editor for Fortnite (now in Public Beta)

Since 2018, it’s been possible to create your own island in Fortnite thanks to the Fortnite Creative toolset. Today, there are more than one million of these islands and over 40% of player time in Fortnite is spent in them.

With Unreal Editor for Fortnite (UEFN), artists, creators, and developers now have far more powerful tools and greater creative flexibility inside Fortnite’s world ecosystem.

UEFN is a version of Unreal Editor, with many of UE5’s most powerful features, that can create and publish experiences directly to Fortnite. UEFN also provides the first opportunity to use a new programming language, Verse. Aimed at getting UEFN teams up and running with scripting alongside existing Fortnite Creative tools, Verse offers powerful customization capabilities, such as manipulating or chaining together devices, and the ability to create new game logic easily. With upcoming features, it will enable future scalability to vast open worlds. Verse has now launched in Fortnite and will come to all Unreal Engine users within a couple of years.

At the State of Unreal, Epic also showed a brand-new GDC demo showcasing a variety of key UEFN features, including Niagara, Verse, Sequencer, Control Rig, custom assets, existing Creative devices, and custom audio. The demo includes three key parts: an opening section highlighting how to enhance existing Fortnite Creative devices using Verse, a deeper dive into the editor, and an exciting boss battle to close out the segment. This was done as a live demo running on Fortnite servers, via a PC.

Creator Economy 2.0

UEFN is being launched alongside Creator Economy 2.0, and in particular, developer engagement payouts. This is a huge and yet simple new way for eligible Fortnite island creators to receive revenue based on the level of engagement that occurs with their published content.

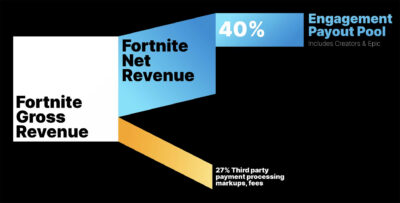

Engagement payouts proportionally distribute 40% of the net revenue from Fortnite’s Item Shop and most real-money Fortnite purchases to the creators of eligible islands and experiences, including both islands from independent creators and Epic’s own, such as Battle Royale. In other words, roughly speaking, 40% of Fortnite’s revenue will go into a pot that funds both Epic’s own Fortnite development and the developers who contribute to the Fortnite experience.
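
To make the arithmetic of a proportional split concrete, here is a minimal sketch in Python. Only the 40% figure comes from the announcement. The function name, the island names, and the single "engagement score" per island are hypothetical; Epic's actual engagement metric and eligibility rules are not described here, so treat this as an illustration of proportional distribution rather than Epic's formula.

```python
from typing import Dict

# From the article: 40% of net revenue goes into the engagement pool.
POOL_SHARE = 0.40


def engagement_payouts(net_revenue: float,
                       engagement: Dict[str, float]) -> Dict[str, float]:
    """Split the pool across islands in proportion to engagement.

    `engagement` maps an island name to a hypothetical, non-negative
    engagement score. How Epic really measures engagement is an
    assumption left out of this sketch.
    """
    pool = net_revenue * POOL_SHARE
    total = sum(engagement.values())
    if total <= 0:
        # No recorded engagement: nothing to distribute.
        return {island: 0.0 for island in engagement}
    return {island: pool * score / total
            for island, score in engagement.items()}


if __name__ == "__main__":
    # Hypothetical numbers: Epic's own islands compete in the same pool.
    shares = engagement_payouts(
        1_000_000.0,
        {"Battle Royale (Epic)": 60.0, "Indie island A": 30.0, "Indie island B": 10.0},
    )
    for island, amount in shares.items():
        print(f"{island}: {amount:,.2f}")
```

Under this reading, an island that draws twice the engagement of another receives twice the payout, and Epic's own experiences are paid from the same pool on the same basis.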