In July 2015 at FMX we wrote a piece about the DreamSpace project based in the EU. The project looked to redefine traditional workflows to more adequately reflect the non-linear/non-waterfall model of modern productions. This was one of the first times fxguide interviewed Ncam CEO Nic Hatch.

Four years ago, the main benefit of Ncam seemed to be its real time tracking. We confess that the Ncam guys showed us a lot about their depth compositing, but we chose to focus on Ncam’s real time spatial tracking instead. At the time, keying someone inside or outside a doorway did not seem a complex problem in need of a solution. Compositing a live action foreground person, keyed off a green screen, into a set seemed to be something solved daily in any VFX house. Why would a post house need depth compositing, using a special Ncam camera apparatus, just to place a video layer behind something? Luckily, Nic Hatch could see far more deeply into the imminent age of real time game engines in on-set production than we could!

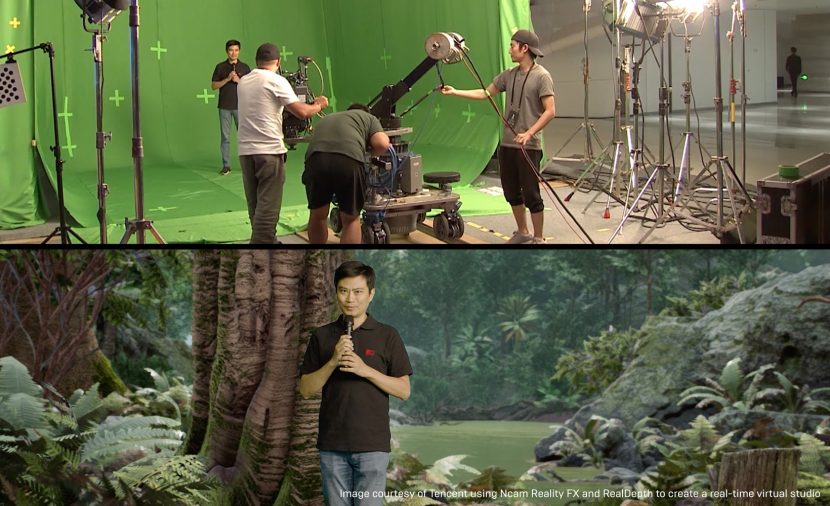

Combining live action in post was not the ‘killer application’. Doing it live on set, during production, very much is. Ncam allows hosts to walk around graphics or through virtual sets in real time with such precision and stability that the result can be used live to air or in a host of other real time applications. Ncam simply needed real time game engines, such as UE4, to provide the level of visual quality that would enable this new, dynamic form of virtual production.

Layering keyed live action into complex scenes requires detailed information about the exact camera position, the lens and a depth map of the scene, all the more so because Ncam allows this with a completely fluid camera. Unlike previous solutions, which would have required motion control, Ncam can do all of this from a hand held camera, even with partial occlusion.

Ncam offers a complete and customisable platform that enables virtual sets and graphics in real time. At its core is a unique camera tracking solution that delivers virtual and augmented graphics using a special camera hardware add-on and complex software. The system uses a lightweight sensor bar attached to a camera to track natural features in the environment, allowing the camera to move freely in all locations while generating a continuous stream of extremely precise positional, rotational and lens information. Ncam’s SDK allows this data to be fed directly into a real time engine such as UE4.
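To make that “continuous stream of positional, rotational and lens information” concrete, here is a minimal Python sketch of what one per-frame tracking sample might look like once it reaches an engine, and how its position and rotation turn a camera-space point into a world-space one. The `CameraSample` record, its field names and the rotation convention (yaw about Z, then pitch about Y, then roll about X, in degrees) are hypothetical assumptions for illustration; they are not Ncam’s SDK format or Unreal’s coordinate system.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class CameraSample:
    """Hypothetical per-frame tracking sample (NOT Ncam's actual SDK format)."""
    timecode: str                          # production camera timecode "HH:MM:SS:FF"
    position: Tuple[float, float, float]   # camera position in metres
    yaw: float                             # degrees about Z (assumed convention)
    pitch: float                           # degrees about Y
    roll: float                            # degrees about X
    focal_length_mm: float                 # from the lens encoders
    focus_distance_m: float

    def rotation(self):
        """3x3 rotation matrix R = Rz(yaw) @ Ry(pitch) @ Rx(roll)."""
        cy, sy = math.cos(math.radians(self.yaw)), math.sin(math.radians(self.yaw))
        cp, sp = math.cos(math.radians(self.pitch)), math.sin(math.radians(self.pitch))
        cr, sr = math.cos(math.radians(self.roll)), math.sin(math.radians(self.roll))
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def camera_to_world(self, point):
        """Map a camera-space point into world space: R @ p + t."""
        r = self.rotation()
        return tuple(
            sum(r[i][j] * point[j] for j in range(3)) + self.position[i]
            for i in range(3)
        )


# A camera at (1, 2, 3) m, panned 90 degrees: its local X axis now points along world Y.
sample = CameraSample("10:00:00:01", (1.0, 2.0, 3.0), 90.0, 0.0, 0.0, 32.0, 2.5)
print(sample.camera_to_world((1.0, 0.0, 0.0)))  # approximately (1.0, 3.0, 3.0)
```

A real engine integration would also carry the lens distortion and sensor data discussed below; this sketch only covers the six-degree-of-freedom pose.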

The system can be used on any type of production from indoor or outdoor use to mounted wire rigs or hand-held camera configurations. Ncam’s products are used worldwide and have been used in the production of: Solo: A Star Wars Story (Walt Disney Studios), Deadpool 2 (Marvel), UEFA Champions League (BT Sport), NFC Championship Game (Fox Sports), Game of Thrones Season 8 (HBO), Monday Night Football (ESPN), Super Bowl (Fox Sports), and Avengers: Age of Ultron (Marvel).

At its core, Ncam relies on a specialist piece of camera-mounted hardware. This small, lightweight sensor bar combines a suite of sensors. Most visible are the two tiny stereo computer vision cameras. Less obvious are the 12 additional sensors inside the Ncam hardware bar, such as accelerometers and gyroscopes, which together with the stereo camera pair let Ncam see the set in full spatial depth, as a real time virtual point cloud. The same hardware unit also interfaces with the various lens controls, such as a Preston follow-focus system, which means Ncam knows where the lens is, what it is looking at, what the focus and field of view are, and, importantly, where everything in front of the lens is located. The props, set, actors, camera and lensing are all mapped and understood in real time. This is key to virtual production, and the same data can feed into a more traditional pipeline for high end visual effects. At the moment the data is not embedded; it is provided as a secondary metadata stream.
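The stereo pair is what allows the bar to build a point cloud. Ncam’s own computer vision pipeline is not described here, but the textbook relationship for a rectified stereo pair is that depth equals focal length (in pixels) times the baseline between the two cameras, divided by the disparity between matched features: Z = f·B/d. The sketch below shows that generic technique; the 700 px focal length, 10 cm baseline and image centre are made-up values, not the specifications of the Ncam bar.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


def triangulate(u_left: float, v: float, u_right: float,
                f_px: float, baseline_m: float,
                cx: float, cy: float) -> Optional[Point]:
    """Back-project one feature matched in a rectified stereo pair.

    Returns (X, Y, Z) in metres in the left camera's frame, or None when
    the disparity is not positive (no reliable depth for this match).
    """
    disparity = u_left - u_right
    if disparity <= 0:
        return None
    z = f_px * baseline_m / disparity  # Z = f * B / d
    x = (u_left - cx) * z / f_px       # pinhole back-projection
    y = (v - cy) * z / f_px
    return (x, y, z)


def point_cloud(matches, f_px: float, baseline_m: float,
                cx: float, cy: float) -> List[Point]:
    """Triangulate every (u_left, v, u_right) match, dropping invalid ones."""
    points = []
    for u_l, v, u_r in matches:
        p = triangulate(u_l, v, u_r, f_px, baseline_m, cx, cy)
        if p is not None:
            points.append(p)
    return points


# Hypothetical sensor: 700 px focal length, 10 cm baseline, 640x480 images.
cloud = point_cloud([(355.0, 240.0, 320.0), (100.0, 50.0, 100.0)],
                    f_px=700.0, baseline_m=0.1, cx=320.0, cy=240.0)
print(cloud)  # one point at approximately (0.1, 0.0, 2.0); the second match has zero disparity
```

Because depth is inversely proportional to disparity, a short baseline like this gives good precision close to the camera and progressively coarser depth further away, which is one reason such a system would lean on its other sensors too.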

Predictive movement

From the outset, Ncam has relied on Simultaneous Localisation and Mapping (SLAM) technology to solve the problem of camera tracking. However, Ncam does not just gather data; it also interprets it. The software uses this data to predict movement and to provide robust redundancy: it knows where the camera was and where it thinks it is going. The software also handles any loss of useful signal from the cameras. If the actor blocks one of the stereo lenses, or even both, the system continues uninterrupted based on the remaining aggregate sensor data. The software integrates all this data into one useful input. For example, while the computer vision cameras can run at up to 120 fps, the other sensors run at 250 fps, so all the various data is retimed and normalised into one coherent, stable output that is clocked to the timecode of the primary production camera.
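Retiming sensors that run at different rates onto the production camera’s clock is, at its simplest, a resampling problem. The sketch below shows one generic approach, linear interpolation of a 250 Hz stream at each frame time derived from non-drop-frame timecode. It assumes the sensor timestamps and the timecode share a common clock; the function names and the 25 fps example are illustrative, not Ncam’s implementation.

```python
import bisect
from typing import List, Sequence


def timecode_to_seconds(tc: str, fps: int) -> float:
    """Convert non-drop-frame "HH:MM:SS:FF" timecode to seconds."""
    h, m, s, f = (int(part) for part in tc.split(":"))
    return h * 3600 + m * 60 + s + f / fps


def interpolate(times: Sequence[float], values: Sequence[float], t: float) -> float:
    """Linearly interpolate a sorted (times, values) series at t, clamping at the ends."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    i = bisect.bisect_right(times, t)  # times[i - 1] <= t < times[i]
    t0, t1 = times[i - 1], times[i]
    a = (t - t0) / (t1 - t0)
    return values[i - 1] + a * (values[i] - values[i - 1])


def resample_to_frames(times: Sequence[float], values: Sequence[float],
                       start_tc: str, fps: int, n_frames: int) -> List[float]:
    """Sample a sensor stream at the production camera's frame times.

    Assumes sensor timestamps are already expressed on the timecode clock.
    """
    start = timecode_to_seconds(start_tc, fps)
    return [interpolate(times, values, start + k / fps) for k in range(n_frames)]


# Hypothetical 250 Hz yaw readings (degrees) for a camera panning at 10 deg/s.
imu_times = [i / 250 for i in range(251)]
imu_yaw = [10.0 * t for t in imu_times]
per_frame = resample_to_frames(imu_times, imu_yaw, "00:00:00:00", fps=25, n_frames=25)
print(per_frame[:3])  # approximately [0.0, 0.4, 0.8]
```

Interpolating angles component by component only works away from the ±180° wrap-around; a production system would normally interpolate rotations as quaternions instead.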

Some sets have very challenging lighting, and Ncam has an option to run the cameras in infrared mode for scenes with strobing or flashing lights. The system is also designed for low latency, so a camera operator can watch the composited output of the live action and the UE4 graphics as a combined shot, allowing much more accurate framing and blocking. It is much easier to line up a shot of the knight and the dragon if you can see the whole scene and not just a guy in armour alone on a green sound stage.
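Knowing “where it thinks it is going” is also what lets a tracking system hide some of its own latency when feeding a live monitor. The simplest form of that idea is constant-velocity extrapolation, sketched below for camera position. This is a generic technique shown for illustration; the function name, the 25 fps sampling and the 20 ms latency figure are assumptions, not a description of Ncam’s predictive model.

```python
from typing import Sequence, Tuple


def predict_position(prev: Sequence[float], t_prev: float,
                     curr: Sequence[float], t_curr: float,
                     latency_s: float) -> Tuple[float, ...]:
    """Extrapolate a camera position latency_s seconds ahead at constant velocity."""
    dt = t_curr - t_prev
    if dt <= 0:
        return tuple(curr)  # no usable velocity estimate: hold the last position
    return tuple(c + (c - p) / dt * latency_s for p, c in zip(prev, curr))


# Camera dollying along X at 2.5 m/s, sampled at 25 fps, with 20 ms of display latency.
ahead = predict_position((0.0, 1.5, 0.0), 0.0, (0.1, 1.5, 0.0), 0.04, 0.02)
print(ahead)  # approximately (0.15, 1.5, 0.0)
```

Constant-velocity prediction overshoots whenever the operator starts or stops a move, so it is only reasonable over very short horizons like a few milliseconds of pipeline delay.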

Lens calibration

The camera tracking resolves six degrees of freedom: XYZ position plus three axes of rotation. To this is added the production camera’s lens data. In addition to zoom and focus, Ncam has to know the lens curvature, or lens distortion, across all possible zoom, focus and iris aperture settings for the UE4 graphics to fit perfectly with the live action. Any wide lens clearly bends the image, producing curved lines that would be straight in the real world. All the real time graphics have to match this frame by frame, so lens properties are mapped on a per-serial-number basis. Every lens is different, so while a production may start with a template for, say, a Cooke 32mm S4/i lens, Ncam provides charts and tools for calibrating each individual lens. Ncam is compatible with systems such as Arri’s Lens Data System (LDS), but those systems typically don’t provide image distortion over the entire optical range of the lens. At the start of any project, productions can calibrate their own lenses with Ncam’s proprietary system of charts and tools to map the distortion and pin cushioning of each lens, and then simply reference them by serial number.
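Ncam’s calibration model is proprietary, but the general idea of a per-serial-number lens table can be sketched with the widely used radial part of the Brown–Conrady distortion model, x_d = x(1 + k1·r² + k2·r⁴). Below, distortion coefficients measured at a few focus distances are interpolated for the current focus setting and applied to normalised image coordinates. The serial number, the coefficients and the choice to key only on focus distance are all hypothetical; a zoom lens would need a table over focal length as well.

```python
import bisect
from typing import Dict, List, Tuple

# Hypothetical calibration for one lens, keyed by serial number:
# sorted (focus distance in metres, k1, k2) samples measured with charts.
CALIBRATION: Dict[str, List[Tuple[float, float, float]]] = {
    "CK32-EXAMPLE-001": [
        (0.5, -0.060, 0.010),
        (2.0, -0.050, 0.008),
        (10.0, -0.045, 0.007),
    ],
}


def coefficients_for(serial: str, focus_m: float) -> Tuple[float, float]:
    """Interpolate (k1, k2) for the current focus distance, clamping at the ends."""
    samples = CALIBRATION[serial]
    focus = [s[0] for s in samples]
    if focus_m <= focus[0]:
        return samples[0][1], samples[0][2]
    if focus_m >= focus[-1]:
        return samples[-1][1], samples[-1][2]
    i = bisect.bisect_right(focus, focus_m)
    f0, a0, b0 = samples[i - 1]
    f1, a1, b1 = samples[i]
    t = (focus_m - f0) / (f1 - f0)
    return a0 + t * (a1 - a0), b0 + t * (b1 - b0)


def distort(x: float, y: float, k1: float, k2: float) -> Tuple[float, float]:
    """Apply radial distortion to normalised, undistorted image coordinates."""
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


k1, k2 = coefficients_for("CK32-EXAMPLE-001", 1.25)
print(k1, k2)                     # roughly -0.055 and 0.009
print(distort(0.5, 0.0, k1, k2))  # x is pulled inwards (barrel distortion)
```

In a renderer the same coefficients are typically used to warp the clean CG frame so that its straight lines bend exactly as the live action’s do.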

In the end, the system produces stable, smooth and accurate information that can perfectly align real time graphics with live action material. “We spend a lot of time working to fuse the various technologies of all those different sensors, I guess that’s sort of our secret sauce and why it works so well,” explains Ncam founder Nic Hatch.
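Ncam’s fusion method is, by its own account, its secret sauce, so the following is only a generic illustration of why fusing sensors helps. A complementary filter blends a gyroscope (fast, but its integrated angle drifts) with an absolute vision-based angle (drift-free, but noisier and liable to drop out when the lenses are blocked). The single-axis form, the 0.98 blend factor and the simulated gyro bias are assumptions chosen for clarity.

```python
from typing import Optional


def fuse_yaw(prev_yaw: float, gyro_rate: float, dt: float,
             vision_yaw: Optional[float], alpha: float = 0.98) -> float:
    """One step of a complementary filter on a single rotation axis (degrees).

    The gyro term follows fast motion but drifts; the vision term is absolute
    but can drop out (vision_yaw=None, e.g. when both lenses are occluded).
    """
    predicted = prev_yaw + gyro_rate * dt  # integrate the gyro rate
    if vision_yaw is None:
        return predicted                   # coast on inertial data alone
    return alpha * predicted + (1.0 - alpha) * vision_yaw


# Static camera, but the gyro reports a hypothetical +1 deg/s bias; 250 Hz updates.
fused = gyro_only = 0.0
for _ in range(1000):  # 4 seconds
    fused = fuse_yaw(fused, 1.0, 1 / 250, vision_yaw=0.0)
    gyro_only = fuse_yaw(gyro_only, 1.0, 1 / 250, vision_yaw=None)
print(fused, gyro_only)  # ~0.196 (bounded error) vs ~4.0 (drifting)
```

The fused estimate settles at a small bounded offset, while gyro-only integration drifts without limit; when vision returns after an occlusion, the filter pulls the estimate back towards the absolute measurement.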

Depth understanding

The other huge benefit of Ncam is depth understanding. When elements are combined in UE4, the engine knows where the live action is relative to the UE4 camera. This allows someone to be filmed, hand held if you like, walking in front of and behind UE4 graphics. Without the depth information, the video can only sit like a flat card in UE4, not unlike a projector screen. With Ncam, as the actor walks forward on set, they walk forward in UE4, passing objects at the correct distances. This adds enormous production value and integrates the live action and real time graphics in a dramatically more believable way. This one feature completely changes how Ncam can be used in motion graphics, explanatory news sequences and narrative sequences.
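The difference between a “flat card” and true depth compositing comes down to a per-pixel depth test. The sketch below is a generic illustration, not Ncam’s or Unreal’s compositor: each pixel of the keyed live action is laid over the CG only where the live action is nearer to the camera than the CG surface. It assumes a per-pixel depth is already available for the live action (for example from the tracked actor position or a depth map) and uses a hard depth test with straight, non-premultiplied alpha.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]


def composite_pixel(live: RGB, live_z: float, alpha: float,
                    cg: RGB, cg_z: float) -> RGB:
    """Depth-aware 'over': keyed live action only covers CG that lies behind it."""
    if live_z < cg_z:  # live action is nearer the camera than the CG surface
        return tuple(alpha * l + (1.0 - alpha) * c for l, c in zip(live, cg))
    return cg          # the CG surface occludes the live action here


def composite(live: List[RGB], live_z: List[float], alpha: List[float],
              cg: List[RGB], cg_z: List[float]) -> List[RGB]:
    """Composite whole images given as flat per-pixel lists."""
    return [composite_pixel(*px) for px in zip(live, live_z, alpha, cg, cg_z)]


actor, wall, pillar = (0.8, 0.6, 0.5), (0.2, 0.2, 0.2), (0.1, 0.1, 0.4)
out = composite(
    live=[actor, actor, actor],
    live_z=[2.0, 4.0, 2.0],   # metres from camera
    alpha=[1.0, 1.0, 0.0],    # key matte: third pixel is green screen
    cg=[wall, pillar, wall],
    cg_z=[5.0, 3.0, 5.0],
)
print(out)  # actor in front of the wall, pillar in front of the actor, wall
```

A flat-card approach would skip the `live_z < cg_z` test entirely, which is exactly why the presenter could never walk behind the pillar.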

“Game engine integration has always been very important to us. At a trade show in 2016 we showed I think the first prototype of this kind of live action integrated with the Unreal Engine… so we have a pretty close relationship,” Hatch adds. The company has doubled its staff in the last year to 30 people, and the largest proportion of Ncam’s staff work in R&D. A key part of their development effort is building APIs and links into software such as UE4 for the most efficient virtual production pipeline.

Real Light

While most of the focus has been on Ncam’s understanding of the space in front of the camera and what the camera is doing, the company also has an advanced project to understand the lighting of the scene. Their ‘Real Light’ project allows a live light probe to be placed in the scene to inform the UE4 engine of changing light levels and directions. Real Light is designed to solve the challenge of making virtual production assets look like they are part of the real-world scene. It captures real-world lighting in terms of direction, colour, intensity and HDR maps, allowing Unreal Engine to adapt to each and every lighting change. Importantly, it also understands the depth and position of the light sources in the scene, so the two worlds interact correctly. This means that the digital assets can both fit technically and look correctly lit, which is a major advance in integrating live action with game assets.
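Real Light recovers far more than this (light position, colour and full HDR maps), and its internals are not public. As a simple illustration of turning probe data into something an engine light can use, the sketch below estimates a single dominant light direction as the luminance-weighted mean of HDR probe samples. The sample format (unit direction plus linear RGB radiance) and the function names are assumptions; the luminance weights are the standard Rec.709 coefficients.

```python
import math
from typing import Iterable, Optional, Tuple

Vec3 = Tuple[float, float, float]


def luminance(rgb: Vec3) -> float:
    """Relative luminance of linear Rec.709 / sRGB primaries."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def dominant_light(samples: Iterable[Tuple[Vec3, Vec3]]) -> Optional[Tuple[Vec3, float]]:
    """Luminance-weighted mean direction of HDR light probe samples.

    samples: (unit direction, linear RGB radiance) pairs.
    Returns (unit direction, mean luminance), or None if the probe is empty,
    black, or the contributions cancel out.
    """
    sx = sy = sz = total = 0.0
    n = 0
    for (dx, dy, dz), rgb in samples:
        w = luminance(rgb)
        sx += w * dx
        sy += w * dy
        sz += w * dz
        total += w
        n += 1
    norm = math.sqrt(sx * sx + sy * sy + sz * sz)
    if n == 0 or norm == 0.0:
        return None
    return (sx / norm, sy / norm, sz / norm), total / n


# A bright key light overhead and a dim bounce from the side (hypothetical values).
probe = [((0.0, 0.0, 1.0), (10.0, 10.0, 10.0)), ((1.0, 0.0, 0.0), (0.1, 0.1, 0.1))]
direction, mean_lum = dominant_light(probe)
print(direction, mean_lum)  # direction close to (0, 0, 1), mean luminance about 5.05
```

Because the samples must be in linear light for the weighting to be meaningful, any camera-encoded probe image would first need its transfer function removed.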

As the Ncam team were born out of FilmLight, the high end grading company, Ncam has a deep understanding of colour science, which allows Real Light to feed the correct colour space information back into an increasingly physically plausible lighting rig in Unreal.

Ncam at IBC discussing Real Light.

Through versatile real time data gathering and integration into real time graphics, Ncam creates technological efficiencies that streamline the on-set production process, save notable post-production time and enable innovative real time solutions for high end virtual production pipelines.

“There is no single workflow which can be defined, whereby everyone can or will follow,” Hatch explains. “For example, a ‘simple’ green screen car shoot setup might be achievable with a good camera track, a decent key, good LED lighting based on pre-shot plates and some good colour correction tools, all at TV episodic quality… this should be achievable with relative ease. However, a full CG environment and exploding buildings would present a significantly more challenging proposition. And there is, of course, a lot of middle ground.” Today, he adds, the notions of previs and post-vis are less relevant. “Post-vis is becoming more important today… and previs continues throughout the production process in terms of feeding the virtual production assets.” Ncam takes the approach that the entire production is visualisation, and that the focus needs to be on immediate results, to improve the creative process and give the creative team direct interaction.

- This article is based on our guest blog post on the Epic Games website.