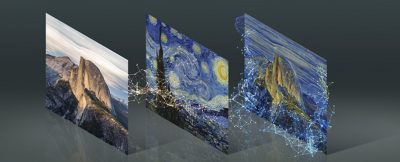

GANs, or generative adversarial networks, have had an enormous impact on facial simulation and style transfer, and they are increasingly being introduced into VFX pipelines around the world. GANs are an extremely powerful and relatively new form of machine learning, and NVIDIA has just made them easier and quicker to train.

NVIDIA Research’s latest AI model addresses the need for large training sets when working with GANs. The new technique, adaptive discriminator augmentation (ADA), uses a fraction of the training data needed by a typical GAN. NVIDIA demonstrated its power by emulating renowned portrait painters.

By applying ADA neural network training approaches to the popular NVIDIA StyleGAN2 model, the company’s researchers reimagined artwork based on fewer than 1,500 images from the Metropolitan Museum of Art. Using NVIDIA DGX systems to accelerate training, they generated new AI art inspired by the historical portraits.

The ADA approach reduces the number of training images needed by 10x to 20x while still producing highly plausible results. The same method could someday have a significant impact in VFX, producing character faces that could either help train other ML models or work in hybrid CGI applications.

“These results mean people can use GANs to tackle problems where vast quantities of data are too time-consuming or difficult to obtain,” said David Luebke, vice president of graphics research at NVIDIA. “I can’t wait to see what artists and researchers use it for.”

For VFX, this could mean a range of new facial inferences and simulations become possible, especially where only limited high-quality material is available. This could support new VFX tools for actors who are no longer alive, and also allow new applications for ordinary people without requiring them to provide large amounts of high-quality footage.

The Training Data Dilemma

GANs have long followed a basic principle: the more training data, the better the results. That’s because each GAN consists of two cooperating and competing networks: a generator, which creates synthetic images, and a discriminator, which learns what realistic images should look like based on training data. A GAN can be thought of as a ‘counterfeiter’ and a ‘cop’, with the counterfeiter steadily learning what will get past the cop and pass as believable, or ‘undetectable even when fake’.

The discriminator ‘cop’ coaches the generator ‘counterfeiter’, giving pixel-by-pixel feedback to help it improve the realism of its synthetic images. But with limited training data to learn from, a discriminator won’t be able to help the generator reach its full potential, like a rookie cop who has seen far fewer crimes than a seasoned detective. Unless the cop is adequately trained, they are too easily fooled.
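
The counterfeiter-and-cop relationship can be made concrete by looking at the loss each network tries to minimize. The sketch below uses the standard binary cross-entropy formulation with a non-saturating generator loss. It is an illustration on hand-picked scores, not necessarily the exact loss StyleGAN2 uses, and the function names and numbers are hypothetical.

```python
import math

def discriminator_loss(real_scores, fake_scores):
    """Binary cross-entropy: low when reals score high and fakes score low."""
    real_term = sum(-math.log(s) for s in real_scores) / len(real_scores)
    fake_term = sum(-math.log(1.0 - s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term

def generator_loss(fake_scores):
    """Non-saturating form: low when the fakes are scored as real."""
    return sum(-math.log(s) for s in fake_scores) / len(fake_scores)

# Each score is the discriminator's estimated probability that an image is real.
# These values are made up for illustration.
sharp_cop = {"real": [0.9, 0.95], "fake": [0.1, 0.05]}
fooled_cop = {"real": [0.9, 0.95], "fake": [0.8, 0.9]}

for name, scores in (("sharp cop", sharp_cop), ("fooled cop", fooled_cop)):
    d = discriminator_loss(scores["real"], scores["fake"])
    g = generator_loss(scores["fake"])
    print(f"{name}: discriminator loss {d:.3f}, generator loss {g:.3f}")
```

When the cop is sharp, the discriminator loss is low and the generator loss is high, so the counterfeiter has the most to learn. When the cop is fooled, the situation reverses.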

It typically takes 50,000 to 100,000 training images to train a high-quality GAN. In video terms, 100,000 frames is over an hour of footage at 24 frames per second. But in many cases, artists simply don’t have tens or hundreds of thousands of sample images at their disposal. With only a couple of thousand images for training, many GANs fail to produce realistic results. This problem, called overfitting, occurs when the discriminator simply memorizes the training images and fails to provide useful feedback to the generator. In other words, overfitting happens when a model learns the detail and noise in the training data to the extent that it hurts the model’s performance on new data or, in this case, a new face. The noise or random fluctuations in the training data are picked up and learned as concepts by the model. The GAN then does not react well to new data, which undermines the model’s ability to generalize.
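
A deliberately extreme toy shows what memorizing the training images looks like. Here a hypothetical "discriminator" accepts only exact copies of its training samples, with short random vectors standing in for images. Real discriminators overfit far less crudely, so treat this as a sketch of the failure mode rather than a model of StyleGAN2.

```python
import random

random.seed(0)

def sample_real():
    # Hypothetical stand-in for a real image: a 4-number feature vector.
    return tuple(round(random.gauss(0.0, 1.0), 3) for _ in range(4))

train_set = [sample_real() for _ in range(50)]    # the small training set
unseen_real = [sample_real() for _ in range(50)]  # real data never seen in training

memorized = set(train_set)

def overfit_discriminator(sample):
    """Calls a sample 'real' only if it is an exact copy of a training sample."""
    return sample in memorized

# Fraction of genuinely real samples that the discriminator accepts as real.
train_acc = sum(overfit_discriminator(x) for x in train_set) / len(train_set)
unseen_acc = sum(overfit_discriminator(x) for x in unseen_real) / len(unseen_real)

print(f"accepted as real, training set: {train_acc:.2f}")
print(f"accepted as real, unseen reals: {unseen_acc:.2f}")
```

The discriminator is perfect on images it has seen and close to useless on equally real images it has not, which is why its feedback stops helping the generator.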

In image classification tasks, researchers get around overfitting with data augmentation, a technique that expands smaller datasets using copies of existing images that are randomly distorted by processes like rotating, cropping, or flipping, forcing the model to generalize better. But previous attempts to apply augmentation to GAN training images resulted in a generator that learned to mimic those artificial distortions, rather than creating believable synthetic images or faces.
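
The kind of augmentation described above can be sketched in a few lines. This example treats an image as a small grid of numbers and applies random flips, quarter-turn rotations and crops. The helper names, the 4x4 test image and the crop size are all illustrative assumptions.

```python
import random

def flip_horizontal(img):
    """Mirror each row left to right."""
    return [row[::-1] for row in img]

def rotate_90(img):
    """Rotate clockwise: reverse the row order, then transpose."""
    return [list(col) for col in zip(*img[::-1])]

def random_crop(img, size, rng):
    """Cut a size x size window at a random position."""
    top = rng.randint(0, len(img) - size)
    left = rng.randint(0, len(img[0]) - size)
    return [row[left:left + size] for row in img[top:top + size]]

def augment(img, rng, crop_size=3):
    """Return a randomly distorted copy of img."""
    if rng.random() < 0.5:
        img = flip_horizontal(img)
    for _ in range(rng.randint(0, 3)):  # zero to three quarter turns
        img = rotate_90(img)
    return random_crop(img, crop_size, rng)

rng = random.Random(42)
image = [[r * 4 + c for c in range(4)] for r in range(4)]  # 4x4 test "image"
variants = [augment(image, rng) for _ in range(8)]

for row in variants[0]:
    print(row)
```

Every variant reuses pixels from the one original image, so the dataset looks larger without any new material being collected. It also shows the risk: a generator trained on such images can learn that rotated or cropped pictures are normal.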

NVIDIA Research’s ADA method applies data augmentations adaptively, meaning the amount of data augmentation is adjusted at different points in the training process to avoid overfitting. This enables models like StyleGAN2 to achieve results that are as good as if they had been run using an order of magnitude more training images.
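
One way to picture "adaptive" is as a feedback loop: measure how confident the discriminator has become on its training images, then raise or lower the chance that each image is augmented. The sketch below is a minimal illustration under stated assumptions. The overfitting signal, the 0.6 target and the 0.05 step are chosen for the example and are not taken from the article, and the batches of scores are invented.

```python
def overfitting_signal(real_scores):
    """Mean sign of the discriminator's raw outputs on training images.

    Near 0 means the discriminator is unsure; near 1 means it is confidently
    calling every training image real, a hint that it may be overfitting.
    """
    signs = [1.0 if s > 0 else (-1.0 if s < 0 else 0.0) for s in real_scores]
    return sum(signs) / len(signs)

def update_probability(p, signal, target=0.6, step=0.05):
    """Nudge the augmentation probability p toward keeping signal near target."""
    if signal > target:
        p += step   # too confident: augment more
    else:
        p -= step   # not overfitting: augment less
    return min(1.0, max(0.0, p))

# Invented discriminator outputs for five successive checks during training.
batches = [
    [0.2, -0.4, 0.1, -0.3],
    [0.5, 0.8, -0.1, 0.6],
    [1.2, 0.9, 1.5, 0.7],
    [1.4, 1.1, 1.8, 0.9],
    [0.3, -0.2, 0.6, 0.4],
]

p = 0.0
history = []
for scores in batches:
    p = update_probability(p, overfitting_signal(scores))
    history.append(round(p, 2))

print(history)
```

The augmentation probability stays at zero while the discriminator is unsure, climbs once it becomes overconfident, and falls back when the signal drops, so the distortion is only as strong as the training run needs.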

Researchers can now apply GANs to previously impractical applications where examples are too scarce, too hard to obtain or too time-consuming to gather into a large dataset. StyleGAN has been hugely successful and influential. Different editions of StyleGAN have been used by artists to create stunning exhibits and produce a new manga based on the style of legendary illustrator Osamu Tezuka. It’s even been adopted by Adobe to power Photoshop’s new AI tool, Neural Filters.

With less training data required to get started, StyleGAN2 with ADA can be applied to rare art, where there are simply not enough original works in one style to train on. As a test, NVIDIA applied it to work on African Kota masks by the Paris-based AI art collective Obvious.

StyleGAN2

By applying ADA to the popular NVIDIA StyleGAN2 model, the team produced synthetic training data that works as well as if they had somehow discovered a vault of previously undocumented art treasures.

The research paper behind this project is being presented this week at the annual Conference on Neural Information Processing Systems, known as NeurIPS. It’s one of the 28 NVIDIA Research papers accepted to the AI conference.

This new method is the latest in a legacy of GAN innovations by NVIDIA researchers, who developed the SIGGRAPH award-winning GAN-based models behind the AI painting app GauGAN, as well as the game engine mimicker GameGAN and the pet photo transformer GANimal. All these previous GAN solutions are available on the NVIDIA AI Playground.