Loom.ai has become a key provider of mobile 3D avatar solutions for virtual humans through its partnership with Samsung, which embeds Loom.ai’s SDK in the new Samsung Galaxy S9 and S9+ devices. The move was first announced at Mobile World Congress 2018. The new smartphones from Samsung will leverage Loom.ai’s technology to power their AR Emojis: fully customizable, personalized 3D avatars created from a single photograph.

We ran the image below of fxguide’s Mike Seymour through the new Loom.ai API; beside it are three frames from the animated avatar, which was generated in just a few seconds. The process uses a combination of machine learning techniques to build complex faces with much more detail than any single image provides.

“Our partnership with Samsung opens up an exciting era of business opportunities for content partners around the avatar ecosystem,” added Mahesh Ramasubramanian, co-founder and CEO of Loom.ai. “By combining our years of experience and experimentation in deep learning and VFX with Samsung’s impressive product and consumer base, we’re able to put the power of real-time, personalized expression directly in the hands of users.”

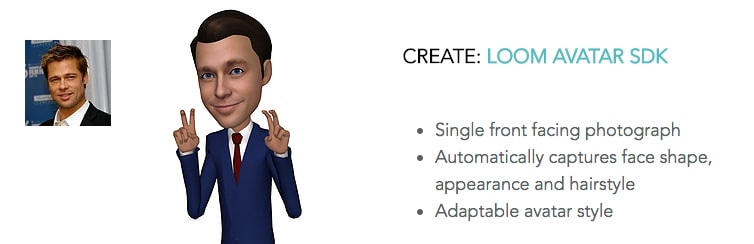

Loom.ai’s technology allows users to:

- Create – With a single photograph, Loom.ai’s platform creates a fully automatic, personalized, 3D avatar and facial rig in seconds. Deep learning recreates the user’s likeness in shape, texture and movement resulting in the most personalized avatar available.

- Animate – By using facial expressions and movement, users drive personalized avatars and favorite characters. Loom.ai’s technology is compatible with several third-party real-time facial feature tracking solutions.

- Share – Avatars can be instantly shared with friends regardless of device or platform. Image analysis captures the key characteristics of a user’s face and is optimized to run efficiently on mobile devices. If the receiving phone is not avatar-ready, a GIF is sent instead.

Loom.ai is focused on virtual communication through the creation, animation and sharing of personalized 3D avatars. The company is based in San Francisco and is an alumnus of the Y Combinator Fellowship. Loom.ai’s co-founders are CEO Mahesh Ramasubramanian and CTO Kiran Bhat, who together bring decades of hardcore visual effects and animation experience with digital faces. Ramasubramanian comes from DreamWorks Animation, where he was the visual effects supervisor on such movies as Madagascar 3 and Home, and he also worked on the Academy Award-winning Shrek. Bhat previously architected ILM’s facial performance capture system and was the R&D facial lead on The Avengers, Pirates of the Caribbean, and TMNT.

“The magic is in bringing the avatars to life and making an emotional connection,” added Ramasubramanian. “Using Loom.ai’s facial musculature rigs powered by robust image analysis software, our partners can create personalized 3D animated experiences with similar visual fidelity seen in feature films, all from a single image.”

In just a few seconds, the new Loom.ai system can take a single photograph and transform it into a representative 3D avatar. The face is animatable and expressive in real time, and these avatars can be used to power current and future applications in mobile messaging, entertainment, AR/VR, e-commerce, video conferencing, broadcasting and more.

Developer Issues and Questions

“Content developers can access the Loom.ai ‘mapper’ SDK on the S9/S9+ within Samsung’s ecosystem. Loom.ai and Samsung will be jointly putting out a facial rig specification in an open source format. Characters that are built with this specification will be compatible with the AR camera functionality on the Galaxy devices,” explained Kiran Bhat, co-founder.

For developers, this extends to being able to customize the clothes and accessories of the Loomies. The customization pipeline for AR Emojis will be exposed within Samsung’s content ecosystem and platform.

A critical issue with avatars is the lag in lip syncing a character. The Loom.ai mapper SDK itself is quite fast: “typically 200fps on modern mobile hardware. The overall lip sync rate is limited by camera frame rates and the speed of the real-time feature tracking systems that camera vendors use internally,” adds Bhat. Several third-party real-time facial feature tracking solutions on the market, such as ArcSoft and Ulsee, could be used for the 2D tracking.
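To make that bottleneck concrete, here is a rough back-of-the-envelope sketch in Python. Only the 200fps mapper figure comes from Bhat; the 30fps camera and 60fps tracker rates are illustrative assumptions, and the model (three stages run one after another, each at a fixed rate) is a simplification rather than a description of the actual Samsung pipeline.

```python
# Back-of-the-envelope model of a camera -> tracker -> mapper chain.
# Only the mapper rate (200 fps) is quoted in the article; the camera and
# tracker rates below are assumed values chosen purely for illustration.

def effective_fps(stage_fps):
    """The chain can update no faster than its slowest stage."""
    return min(stage_fps.values())

def stage_time_ms(fps):
    """Time one stage spends on a single frame, in milliseconds."""
    return 1000.0 / fps

def total_frame_time_ms(stage_fps):
    """Sum of per-stage times if the stages run strictly in sequence."""
    return sum(stage_time_ms(fps) for fps in stage_fps.values())

stages = {
    "camera": 30.0,    # assumed camera frame rate
    "tracker": 60.0,   # assumed 2D feature tracker rate
    "mapper": 200.0,   # "typically 200fps", per Bhat
}

print("effective rate (fps):", effective_fps(stages))
print("mapper time per frame (ms):", stage_time_ms(stages["mapper"]))
print("total time per frame (ms):", round(total_frame_time_ms(stages), 1))
```

Under these assumed numbers the mapper accounts for about 5 ms of each frame, so the camera and tracker, not the mapper, set the pace of the lip sync.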

Importantly for performance, all of the avatar computation is local to the device or smartphone. The system does not solve the avatar in the cloud and then stream the resulting video, which would likely be much slower and consume far more data bandwidth. The only way to access the personalized avatar is via the Loom.ai SDK suite. “So, neither consumers nor other 3rd party vendors have direct access to the consumer’s 3D likeness,” explains Bhat. All of the work of making and animating the avatar is local, but in terms of sending someone an avatar message, the current model is to send it as video or as a GIF.

Some facial animation systems have been shown to work by reducing the user’s voice to text and then animating the emoji from a text-to-lip-sync estimation. This is not how the Samsung system will work. The Loom Mapper SDK supports vision -> FACS -> animation, which means a user’s face drives the rig through markerless facial tracking.
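As a loose illustration of what a vision -> FACS -> animation chain involves, the Python sketch below turns a handful of 2D landmarks into two FACS action unit intensities and then into rig weights. It is not the Loom Mapper SDK: the `Landmarks` fields, the thresholds, and the blendshape names are all hypothetical, and a real tracker and rig would use many more points and controls. The action unit labels themselves (AU26 jaw drop, AU12 lip corner puller) are standard FACS codes.

```python
from dataclasses import dataclass

@dataclass
class Landmarks:
    """Hypothetical 2D tracker output, in pixels (y grows downward)."""
    upper_lip_y: float
    lower_lip_y: float
    mouth_left_x: float
    mouth_right_x: float
    face_height: float
    face_width: float

def clamp01(x):
    """Keep an intensity inside the 0..1 range."""
    return max(0.0, min(1.0, x))

def landmarks_to_facs(lm, max_open_ratio=0.25,
                      neutral_width_ratio=0.35, max_extra_width=0.15):
    """Vision -> FACS: estimate action unit intensities from landmarks.

    The three ratio parameters are made-up calibration values.
    """
    open_ratio = (lm.lower_lip_y - lm.upper_lip_y) / lm.face_height
    width_ratio = (lm.mouth_right_x - lm.mouth_left_x) / lm.face_width
    return {
        "AU26": clamp01(open_ratio / max_open_ratio),  # jaw drop
        "AU12": clamp01((width_ratio - neutral_width_ratio) / max_extra_width),  # lip corner puller
    }

# FACS -> animation: hypothetical mapping from action units to rig controls.
AU_TO_BLENDSHAPE = {"AU26": "jaw_open", "AU12": "mouth_smile"}

def facs_to_rig(action_units):
    """Translate action unit intensities into blendshape weights."""
    return {AU_TO_BLENDSHAPE[au]: weight
            for au, weight in action_units.items()
            if au in AU_TO_BLENDSHAPE}

frame = Landmarks(upper_lip_y=100, lower_lip_y=125,
                  mouth_left_x=60, mouth_right_x=130,
                  face_height=200, face_width=200)
print(facs_to_rig(landmarks_to_facs(frame)))
```

The point of the intermediate FACS layer is that the tracker and the character rig never need to know about each other: any rig that accepts action unit intensities can be driven by any tracker that produces them.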

Loom.ai was a part of our fxguide MEETMIKE project at SIGGRAPH; there is more on that here, and a behind-the-scenes video here at fxphd. The system that the MIKE project used at SIGGRAPH was not optimised for mobile phones or devices. “We optimized the Loom Avatar SDK and our deep learning tech for mobile devices. It only takes a few seconds to build a fully customized avatar from a photograph on mobile. We also support multiple client-specific styles, in addition to the default Loomie style. Plus, we have a new mapper SDK for facial animation,” explains Bhat.

The team’s website is http://www.loom.ai