Autodesk MotionBuilder is one of the industry standards in animation, mocap and virtual production software. But before it was MotionBuilder, it was FiLMBOX, a product developed by Kaydara. Now, the original developers of FiLMBOX are being recognized by the Academy of Motion Picture Arts and Sciences with a Scientific and Engineering Award at this Saturday’s Scientific and Technical Awards in Los Angeles.

fxguide will be there covering the event, but as a special preview we talk to Benoit Sevigny, one of the award recipients along with André Gauthier, Yves Boudreault and Robert Lanciault, about how FiLMBOX came to be.

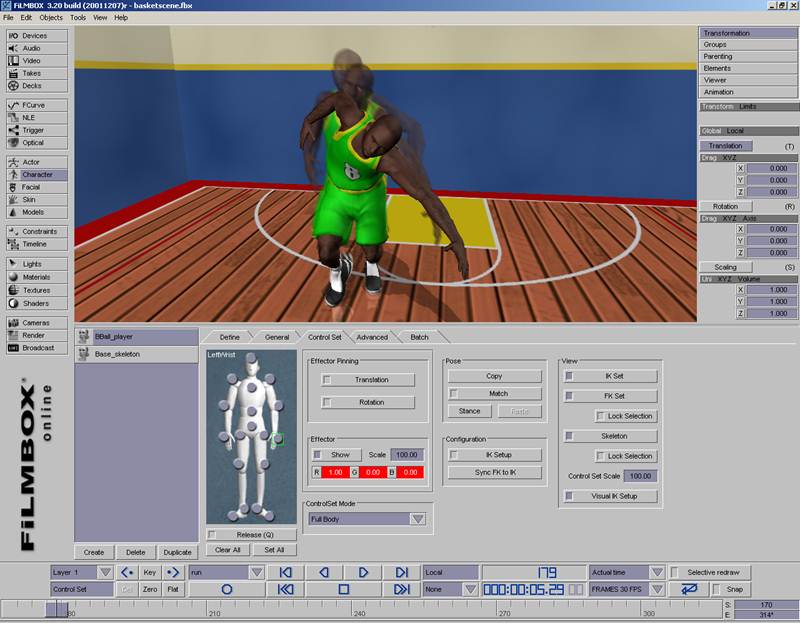

Development of FiLMBOX began in 1994, and Kaydara first released it to aid in motion control projects. Alias acquired Kaydara in 2004, and Autodesk in turn acquired Alias in 2006. FiLMBOX, and later MotionBuilder, ultimately found its feet in motion capture. Sevigny notes that “initially it was not really a totally mocap system – it was a plugin for Softimage that let you import animation curves. Later on we made it a full-fledged product. And we designed FBX (short for FiLMBOX), which became the standard for animation file exchange.”

Around 1999, just before NAB that year, Sevigny says he witnessed artists doing facial animation from audio tracks. “It was pretty painful,” he recalls. “So I got this idea out of the blue to create some simple tools that would be able to drive shapes, and at the same time I wanted it to be real-time. So you could do mocap and record a voice, and animate the mouth automatically instead of doing this later on.”

That became VoiceReality, MotionBuilder's real-time voice recognition tool for automatic facial animation. Sevigny also contributed much of the mathematical library code that handled vectors in the software, which was initially tested with the built-in collision detection system.

“Initially,” outlines Sevigny, “FiLMBOX was really designed as a realtime digital patchbay, and you could control anything from anything. The fact that the system is always live is unique among animation systems. You could freely mix live sources with pre-recorded data, and the viewer would keep on rendering everything, including live data, even when the transport controller was in stop state.”

“There was an SGI version,” says Sevigny of the development of FiLMBOX. “When Windows NT was released with OpenGL we ported it to the PC. Later on I wrote a port to the DEC Alpha – I don’t think we ever sold any licenses for that machine! We also ported it to the Mac.”

Over time, FiLMBOX/MotionBuilder would enable several approaches to animation. Sevigny says that one should “think of MotionBuilder as an animation system, built on top of a digital patchbay and powered by a realtime engine. The number of devices you could hook up to it was impressive.”

Sevigny lists these as:

– Mocap, gloves and facial tracking systems

– MIDI

– Moving lights

– Input devices and control surfaces

– Network

– Audio I/O

– Video I/O and VTRs

– Voice

– Timecode

“There was not only a graph editor that allowed you to connect anything together,” adds Sevigny, “but also an innovative spreadsheet that allowed you to write mathematical expressions pretty much like Excel, with the difference that cells were live and supported vector algebra. The use of lock-free data structures allowed users to completely bypass the operating system and improve response time. With this architecture, the more processors you threw at FiLMBOX, the happier it was.”

“At one point,” he says, “we provided a QuickTime plugin to play back FBX files in QuickTime. Also, a middleware version of HumanIK made its way into many game engines.”

The result is that MotionBuilder has found prominent use as a mocap tool in television, games and film, notably on Avatar and Tintin. Interestingly, a relatively early incarnation of FiLMBOX was used during the making of The Matrix to synchronize the SLR cameras to within milliseconds for the famous bullet time shots.

Although MotionBuilder has had a significant impact for nearly two decades, Sevigny believes that for a long while the software was ahead of its time. “Back then,” he says, “people were still debating whether or not mocap was viable because of the challenges involved in mapping raw captured data on digital characters. Ironically, MotionBuilder is also one of the best keyframing tools around as it allows you to freely layer mocap and keyframing one on top of the other.”

“We foresaw the convergence of game and film creation tools,” adds Sevigny, “but the market was not ready for this. But the current sophistication of games and the growing need to re-use film assets to create derivatives like games will soon make this a reality.”

After leaving Kaydara in 2004, shortly after the Alias acquisition, Sevigny headed to Apple, where he worked on, among other things, the image rendering engine shared by Final Cut Pro and Motion. “We were also designing a next-generation Shake,” he says. “Unfortunately Shake was abandoned by Apple, but at the same time the Motion guys were starting to get interested in what we were doing with our renderer. When they redesigned Final Cut Pro, they ended up sharing the rendering technology.”

Sevigny now works at Unity Technologies, the company behind the Unity game engine.

Sevigny’s memorable moments with FiLMBOX

Early usage: “One of our first clients was using FiLMBOX to reconstruct crime scenes and accidents for legal use, another one in Amsterdam was capturing chickens with it!”

Didgeridoo play: “At conferences we would hire puppeteers to do live performances. At one point there was a guy who came in with his didgeridoo – he said he would like to try this out with FiLMBOX. Our demo artists set up a simple scene with tubes and spheres and constraints between them, and this guy started playing didgeridoo – he would animate the tubes with the sound of his instrument – it was pretty funny.”

Virtual sets: Sevigny wrote a simple image processing pipeline with a keyer and a color corrector. “It was for the very first programmable GPU. I had to write the thing using register combiners – I think I wrote the first keyer on a GPU!”

Live shows: one of these featured the animated cartoon character Johnny Bravo, who was lip-synced live using the VoiceReality tool. “People would call in during the show and he would answer the telephone and talk to them live.”

Space: The Hayden Planetarium at the Rose Center for Earth and Space features a large dome onto which an entire map of the universe can be projected. At one point, the FiLMBOX team ran their software there on a single 28-processor InfiniteReality2 system with seven graphics pipes, until it crashed. “So we flew over there to fix the problem. While I was on the plane I realized why it was crashing. It was because we created a lot of threads to handle all the IO. I realized the default stack size multiplied by the number of threads multiplied by 28 would exceed the 32-bit address space. So when I got there it took me one minute – I just reduced the stack size for the threads and then – bang – we saw everything on the dome – it was amazing.”

Realtime performances: these included Ray Kurzweil’s Virtual Ramona, who performed and sang in real time at the New York Music and Internet Expo at Madison Square Garden.

Mortal Kombat: “During a visit to a client who was working on Mortal Kombat,” says Sevigny, “André and I ended up capturing the movements of the hydra’s head on flexible rods with sensors. We jokingly recognized each other while watching the film. It was my first and only captured performance in a movie!”