SIGGRAPH this year returns to Disneyland and the Anaheim Convention Center. The main conference does not start for a day or two, but just prior to SIGGRAPH there are several co-located events, run under the umbrella of SIGGRAPH but actually separate from it.

One that returns this year, for only its second time, is DigiPro. The conference aims to provide a home for production-proven papers that are still peer reviewed but lean much more towards practical implementation than SIGGRAPH’s main papers, which can be more theoretical. It also aims to get the right people together in a focused group to discuss the really complex technical issues of R&D in a production environment. By SIGGRAPH standards it is small, and yet it succeeds in gathering researchers from some of the best companies in the world. There were CTOs and researchers from DNeg, ILM, DreamWorks, Image Engine, Weta Digital and others, as well as many from top facilities in the Far East and Europe, along with the head researchers and scientists from companies like Side Effects Software and other application manufacturers. If truth be told, the luncheon is on par with any of the technical papers. From renderfarm memory limits to open source issues and detailed production discussions, the quality of the luncheon discussion would be worth the cost of the conference alone. But of course, the papers are the real focus.

Led by keynote speaker Tony DeRose, Research Group Lead at Pixar, the talks this year were on the whole very good. Some could have used more studio approved final footage, but some, like DeRose’s, were simply covering research that has yet to be used in a blockbuster.

The group headed by DeRose was established in 2004 by Ed Catmull, President of Disney and Pixar Studios. To paraphrase: “If everything we have done gets into production we have failed. Part of our charter is to fail sometimes,” explained DeRose. Catmull felt that studios had generally become risk averse, so he established this group inside Pixar Studios to do the work that no director or film would directly require but could perhaps benefit from. It does real research, but focused on the issues of the animation industry. As such, about 50% of the time the work the team does is only vaguely related to common production issues, and again about half the time the work may not even be directly used. To illustrate the point, DeRose, in a brilliantly relaxed and yet technical talk, showed a range of real research projects the team had done, from hair research to multi-touch layout control for digital environments, but two in particular seemed to represent the work of the group.

If Pixar is a studio that is director driven, then this group is researcher driven. DeRose’s team is chosen for their passion and their knack for picking problems. It is not driven from the top down, so the topics of research can be diverse. The first example is about producing a fast interactive renderer for the lighting department.

Real time lighting

DeRose showed a scene from Toy Story 3 and the vast complexity of lights used in a typical shot at the kindergarten. He then explained the new approach of physically based lighting and shading with ray tracing used on Monsters University (see our story). In the context of this move, the team set out to produce a new real time lighting tool that would show a ray traced image quickly enough to light with. Under the new approach a scene may have, say, only half a dozen lights but still be subtle and complex. The system is fast enough to isolate any one light and almost instantly show the shot illuminated by just that light, while still having all the correct BRDFs, soft shadows and so on of a hero shot.

This project was a success, although it is not yet being used in production. With the new system, lighters have been able to light shots in literally half the time.

The system uses the OptiX SDK from Nvidia. In terms of performance, the team were obtaining about 100 million rays per second. What their research found was that there is a key threshold at about 30 million rays per second. If artists can see their work being rendered at over 30 million rays per second, they can see ‘through the noise’ and use the system very effectively as ‘real time’. The system will be shown at the Nvidia booth this week during SIGGRAPH. In contrast, we spoke to one leading researcher at a major company who had only reached 3-5 million rays per second.
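To put those figures in perspective, here is a rough back-of-the-envelope sketch of what each rate means per pixel. The 1920x1080 frame size is our own assumption for illustration (the talk did not state a resolution), and the helper name is hypothetical; it also treats every ray as a primary sample, which a real path tracer would not.

```python
# Rough estimate only: the frame size is an assumed 1920x1080, not a figure
# from the talk, and every ray is counted as one sample for one pixel.

def rays_per_pixel_per_second(rays_per_second, width=1920, height=1080):
    """Average number of rays each pixel receives per second."""
    return rays_per_second / (width * height)

rates = [
    ("Pixar system (about 100M rays/s)", 100e6),
    ("usability threshold (about 30M rays/s)", 30e6),
    ("other researcher (3-5M rays/s, midpoint)", 4e6),
]

for label, rate in rates:
    per_pixel = rays_per_pixel_per_second(rate)
    print(f"{label}: {per_pixel:.1f} rays per pixel per second")
```

Under these assumptions the threshold works out to roughly 14 rays per pixel each second, against about 48 for the Pixar system and about 2 for the slower case, which gives some feel for why the image resolves ‘through the noise’ so much sooner.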

Non-realistic rendering

The second project is creatively and technically successful, but since being completed it has sat on the shelf waiting for a director to need it. This second example seems perfect for a Pixar short, and it is a real innovation built on previously published SIGGRAPH papers such as the Hertzmann et al. (2001) paper on non-realistic rendering using ‘train by example’. In the earlier work, a pair of still images is combined so that the result has the form and content of the first, normal image but the artistic style of the second.

Read the paper and watch the video (Stylizing Animation By Example – Pierre Benard, Forrester Cole, Michael Kass, Igor Mordatch, James Hegarty, Martin Sebastian Senn, Kurt Fleischer, Davide Pesare, Katherine Breeden, April 2013).

What Pixar has succeeded in doing is building a working animation version, and the results are stunning. The example was an ice skater, animated in the normal 3D character way: a stylized but fully 3D shaded ice skating shot, with spins and fast movement. This would typically be rendered out of, say, RenderMan and looks fully finished. An artist then overpaints a frame every 10 to 14 frames using traditional digital paint, but made to look like a pencil or oil rendering, so that each of these keyframes looks like concept art. In this case it could have come right out of an Art of Pixar concept art book. The computer then matches and fills in everything in between. The result looks like an entire animated shot in the style of concept art, rough and expressive, but without any popping or digitally filtered look. Previous attempts either only worked on a still or looked like, say, a Sapphire plugin, with a real ‘image processing’ look. This looked like hand drawn animation, but better, in that no real ink and paint inbetweening artist could avoid bubbling of the textures or flickering of the paint strokes between frames. As with all such great work, it looks correct and then you stop and ask yourself: hang on, how did they do that?

The innovation part is easy, DeRose said, but transferring the ideas and technology can be hard, so the team always partners early with production people and tries to let them “learn the craft as we go”, and then be a viral agent for the research inside Pixar. DeRose stated strongly that they had found first person transfer, meaning one on one contact and discussion, to be by far the best.

Other examples were also shown – each thought provoking and clearly of great interest to the audience, most of whom must have seen this as a dream job!

Future work is in providing tools in the areas of:

- Complexity management – a film is a very hard thing to co-ordinate, and all of its elements have to be managed

- Everything being in real time

- Fast simulation of cloth, hair, muscles and skin (too often animators have to animate virtually naked characters, without seeing the physics of those characters’ hair and cloth on their desktops. DeRose’s team aims to remove the need for farm rendering to see all this complexity)

Generally

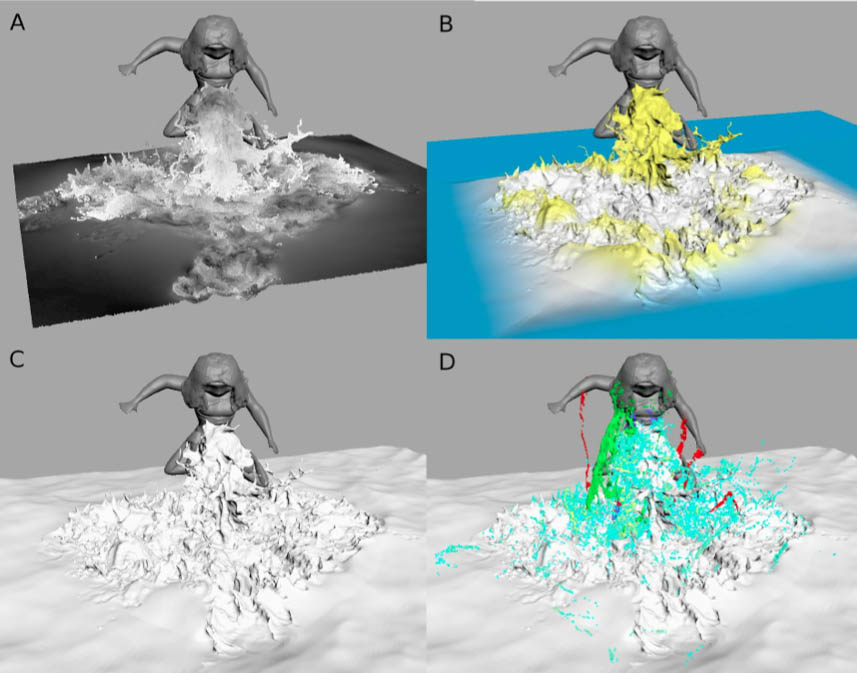

Other good talks included a great update on OpenSubdiv and its appearance now in Maya (for more on that see the Open Source panel today, Sunday 2pm at SIGGRAPH), and a great talk on the water in the animated film The Croods (Liquids in The Croods: Jeff Budsberg, Michael Losure, Ken Museth, Matt Baer, DreamWorks Animation), which was both dense and entertaining to see.

There was also a spectacular final talk on Weta’s Iron Man 3 work, specifically the tool they developed to get Tony Stark in and out of a range of Iron Man suits in the end action sequence.

This last talk had brilliant studio footage and making-of material. Earlier talks had shown some production footage, for example a few key unseen before/afters from Neill Blomkamp’s upcoming Elysium in the Image Engine talk (Developing a Unified Pipeline with Character), but the Weta talk benefited from Iron Man 3 having been out for a while. Weta showed extensive animation reels, rigging and test shots to explain how the team handled the 300 shots in just a few months. Weta’s approach of applying serious R&D to even short run major projects really paid off: the new rigging/modeling approach allowed animators to solve modeling problems and get Tony Stark in and out of a dozen Iron Man suits while retaining the production quality of the earlier work of ILM and DNeg, but with considerably less production time. Marvel should be thanked for allowing Weta to show such detailed behind the scenes work.

(Talk: Guide Rig: The Magic Behind Suit-Connect: Weta Digital on Iron Man 3. Guy Williams, Weta Digital VFX supervisor).

DigiPro is a small but very well run conference. As this is only its second year, we can only hope it continues to grow. The major sponsor this year was Walt Disney Animation Studios, who facilitated a great event.