Papers Fast Forward

One of the highlights of any SIGGRAPH is the Technical Papers Fast Forward event. This is when every one of the technical papers presenters gets a short 30 seconds to show the essence of their paper as a preview. Each speaker can also play a short video – but everything is tightly controlled since with so many speakers the event could run literally all night without a harsh time limit.

Over the years the presenters have introduced a tradition of not taking their work too seriously so the night is full of bad jokes, puns and truly crappy amateur acting in semi funny videos. Not all the presenters ham it up, but there are always some who are so off the wall it is impossible to know what their paper is even about.

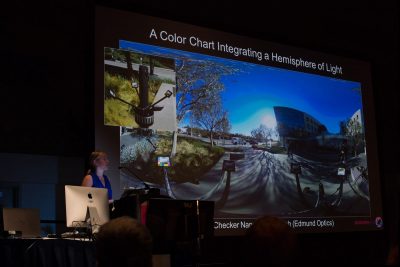

Left is the USC ICT team presenting their paper (without silly humor) Practical Multispectral Lighting Reproduction as part of the Papers Fast Forward on Sunday night and below is Chloe LeGendre presenting that same paper in full, Monday morning in the Computational Photography Session. For more on this paper, see our Story.

The room is generally supportive and it is a good chance to stumble on a paper you may not have heard of, so the vast hall is packed with SIGGRAPH attendees. No doubt many people wish they attended more of the hard core technical papers sessions, but given the maturity of our industry it is also true that many papers are extremely complex and not very accessible. Still, the technical excellence of the SIGGRAPH papers is at the core of what makes this conference so important and well respected.

Life is Shorts

On Sunday afternoon Pixar and Disney presented work on two of their shorts, Piper and Inner Workings. The latter was shown for the first time in North America in this session.

Piper

We have covered this film in our SIGGRAPH preview and podcast, but the session at SIGGRAPH covered a range of technical issues beyond our podcast. In addition to the discussion on effects and animation, the DOP of the short film gave a particularly compelling discussion on the framing, composition and lighting of Piper.

Erik Smitt, DOP on the film, pointed out that they sort of make the birds (and Piper in particular) subject not object in the film. The director had always wanted to be down with the birds and so this translated to a very real photographic look. While nature photography would normally be on a very long lens such as a 400mm, the DOP decided to shoot more on an 80mm so as to not flatten the shapes too much due to the optical characteristics of a typical lens.

Most of the photography is very low to the ground and shot with a very shallow depth of field. This produces a long view into the distance with a typical shot seeing from cm (foreground) to kilometres (in the distance) – all in the same shot.

The aim was to simplify the frame to make it readable. DOF helped with that but so did adding marine haze and adjusting the quality of light.

The birds were simplified and made more ‘cartoon’ but the lighting was maintained as a very real physically plausible lighting approach. The lighting design was complex and advanced but the birds and their textures were simplified. The approach that was decided upon in pre-production was unusual. “Little golden books were the rule of thumb. We asked ‘what if we shot a movie inside a Little Golden book – put camera and lights in there?’,” explained Smitt.

The short film had a full day in terms of passage of time and lighting, so the tones of the scenes had to reflect what was happening in the story. So the lighting broke down to three scenes or times of day. These were labeled by the nature of the Waves in the film.

Three passages connected to the waves:

- Wave one… soft colour, soft shading, soft pastels

- Wave two… harsh lighting – uncomfortable (but still adorable)

- Wave three…yellows and glorious sunsets

The team did a colour script expressing the changes between morning and afternoon, and then looked at what they could do with the composition to match that. For example, in Wave two, when the wave is most threatening, the midday light provided sharp shadows to match the sharp forms. Visually this meant that Piper’s nest is surrounded by pointy shadows from the grass blades, which appear almost as teeth on the sand surrounding Piper.

In contrast, the same sand is lit with softer light at the beginning of the film using a real-sky IBL with added giant ‘flags’ in the sky in the form of a cloud diffuser. The team built real image-based lighting (IBL) from HDRs actually taken at the beach near Pixar’s offices.

Smitt pointed out that IBLs are normally difficult as you can’t tip one in Y without moving the horizon. So they split out the light and cloud so they could move them for eye lights. They also added two coloured soft box style area lights to help warm up the next location. These were motivated by the sun, with the yellow and blue soft boxes adding fill and back light.

In terms of lighting the effects, the added marine layer or haze also helped light spill over the background hills and into their shadows. This created atmosphere and also reduced contrast in the deep parts of the frame, making the characters pop out on screen.

Erik Smitt explains that DOP virtual cinematography is such a great example of reinforcing story and it adds tremendous production value, while producing some of the most attractive images seen from the Pixar team in short or feature film production.

Disney Inner Workings Premiere

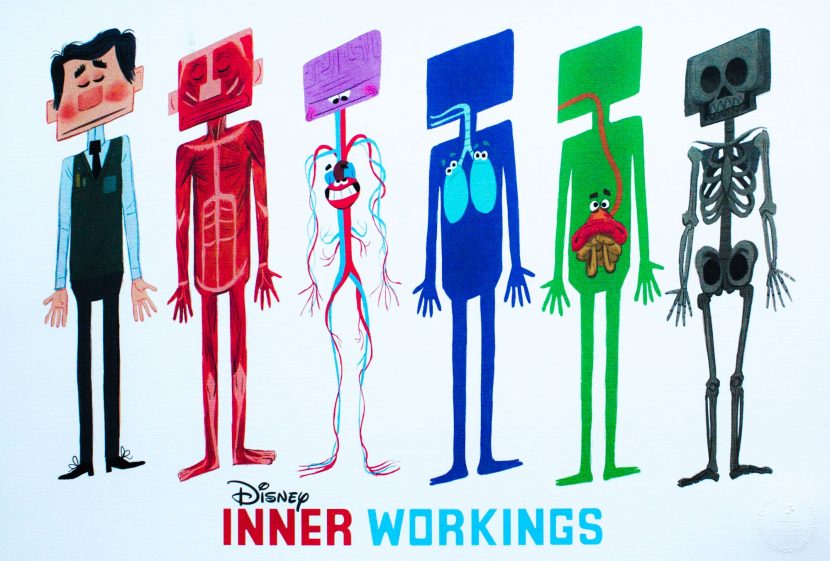

Leo Matsuda (Director), Sean Lurie (Producer), and Josh Staub (VFX Supervisor) premiered Inner Workings. The short is about the inner workings of the body controlling Paul, an employee at Boring Boring and Glum. In much the same vein as Inside Out, the film explores a character’s motivation, in this case from his brain, lungs, gut and heart. Unlike Inside Out, the film is completely without dialogue.

The director Leo Matsuda was born “before the internet. I am not that old but I had to read books and my favourite was the Encyclopedia Britannica.” He explained he would love to find the acetate clear plastic multiple pages showing the inner workings of the body. Matsuda is from a Japanese-Brazilian background, and the film can really be viewed as his personal tug of war between Japanese and Brazilian culture.

There is a pitch program at Disney much like the famous one at Pixar, and Matsuda presented a funny and quirky set of drawings that won him the right to make the film inside Disney.

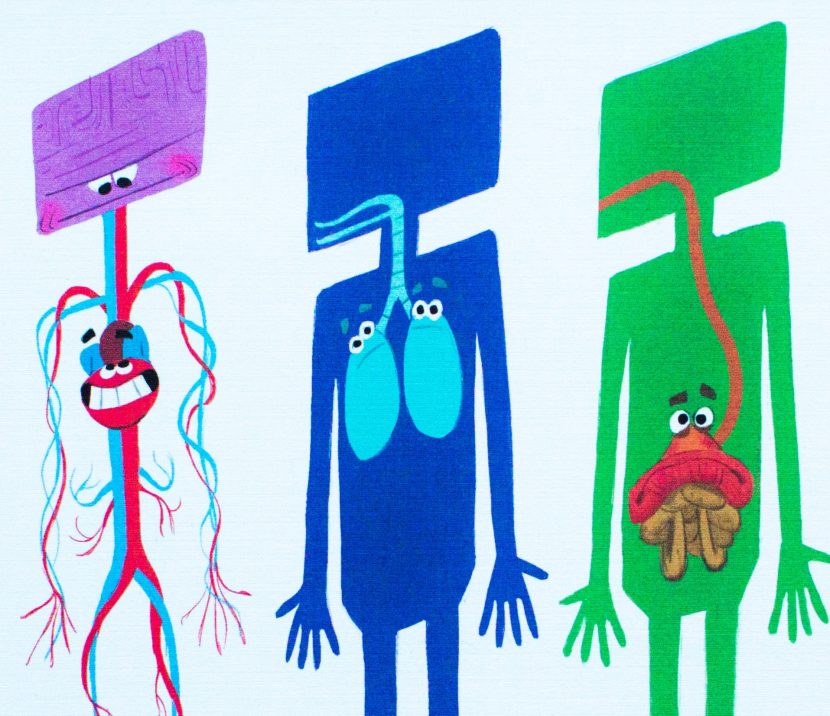

The original pitch drawings showed a hapless guy accidentally smelling the perfume of a woman in a lift, and we see internally his skeleton collapse and thus he collapses. The film’s hero, Paul, was going to be a generic white guy, but Matsuda’s team suggested that the character should look like the director himself: very graphical, cartoonish and Asian. This became known as Paul version 1. The character was fun but somewhat traditional, until one night Matsuda sent Josh Staub a painting of a much squarer character, and that became Paul version 2. This new version of Paul unlocked the rest of the look of the film. It allowed the brain of Paul to be also square, and of course in contrast the heart is round.

This ‘square vs round’ aesthetic then dominated the production. For example, when the head is in control, Paul’s movements are rigid and straight, and when his heart is in control his animation is very round and loose.

While this approach to square and round design was a rich aesthetic to explore, it was not without its problems. For example, Paul’s square face and head translated to Paul smiling across the front of his face in front facing shots, but in the side view you would not see any of his mouth, so for the side shots the mouth cuts back to the neck. To solve this the team took a page from stop frame animation and actually came up with two heads, and the animation swaps dynamically between the two heads as his head turns.

This round vs square is seen in everything in the art department. The heart wants Paul to be all round, such as in the beach scene, and the world of the mind is very square in every respect, such as in the office of BB&G.

Since this was just a short the team soon realised that the crowd scenes would be hard to pull off as they just did not have the modelling resources of a feature film. Thus for each crowd they made one male and one female and then dressed them differently. Surprisingly this works extremely effectively.

As the director explained, “the real story was inside Paul, he is just the vessel.” So the team simplified his bodily organs into 3D puppet style muppets.

The team then had to solve the look of the internal organs. The aim was to design the gut, lungs and heart as real organs crossed with muppets, but the task was not easy. “Where do you put the eyes on say the gut?” explained Matsuda. Furthermore, the gut is only seen in a few shots. To help, the team had 2D animators at Disney explore some animation as pencil cel tests. They found this very productive since it all happened before the team had done the modelling or rigging, so they could be much more efficient.

The film was fast cutting with 150 shots in 6 minutes. By comparison, Disney’s Feast, which is about the same duration, has only 65 shots.

The silhouette posing had to be very strong for when we see Paul as a black silhouette with his interior organs visible, which is half the running time of the film.

The film broke down into 5 types of shots:

- Normal exterior no interior

- Mainly normal with Paul in Exterior via silhouette

- Mainly interior but some exterior around the edges

- Mainly brain thinking and imagining – ‘Brain visions’

- Inside fully inside style 100% interior(but lit so it could be turned into an C

The brain’s thinking about dying was 2D animation, which meant it had to be finished first so the 3D brain could react to it.

Inner Workings will premiere with Moana (Walt Disney Animation Studios) November 23, 2016 – it has been made for stereo viewing as well as mono projection.

Dark Hides the Light

This session had five different presentations ranging from producing higher quality shadows in real time games to the challenges of creating content for a 400ft horseshoe shaped screen for a theme park ride.

HFTS: Hybrid Frustum-Traced Shadows in The Division

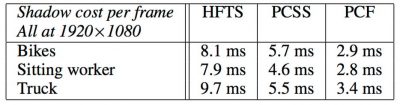

First up was Jon Story from NVIDIA Corporation with a paper titled “HFTS: Hybrid Frustum-Traced Shadows in The Division”.

Story showed a hybrid shadow technique that combines hard-contact shadows of irregular z-buffering with the soft shadows of percentage-closer soft shadows. The result is high-quality shadows from occluders both near and far. The technique is demonstrated in Tom Clancy’s The Division.

He began by showing the classic bicycle example where shadows are too blurred and the shadows of the spokes are almost gone. He then showed a result using the new technique and the improved, far more realistic shadows.

The problem with using an irregular z buffer approach is that it can be slow due to long lists and requiring CPU readback. The solution chosen was to produce a heat map and re-project areas where long lists exist. This improves light space mapping, producing more realistic shadows and eliminating CPU readback.

They landed on a hybrid approach using Lerp Factor (linear interpolation function) and Percentage‐Closer Soft Shadows (PCSS), for generating perceptually accurate soft shadows. One concern is always time:

http://developer.download.nvidia.com/shaderlibrary/docs/shadow_PCSS.pdf
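As a rough illustration of the hybrid idea, the sketch below blends a hard shadow term with a soft, PCSS-style term using a lerp factor. It is not the shipped implementation: driving the factor from occluder distance, and the `blend_range` parameter, are assumptions made here for the example.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def hybrid_shadow(hard_visibility, soft_visibility, blocker_distance, blend_range=1.0):
    """Blend a hard shadow term with a soft (PCSS-style) term.

    hard_visibility, soft_visibility: 0.0 (fully shadowed) to 1.0 (fully lit).
    blocker_distance: distance from the shaded point to its occluder.
    blend_range: hypothetical distance at which the result is fully soft.
    """
    # Assumption: near the occluder we trust the hard contact shadow,
    # far from it we trust the soft shadow.
    t = min(max(blocker_distance / blend_range, 0.0), 1.0)
    return lerp(hard_visibility, soft_visibility, t)
```

With this weighting a point touching its occluder gets the crisp result, and the penumbra takes over as the occluder moves away.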

The Paper: HFTS: hybrid frustum-traced shadows in “the division”

Sparse Shadow Tree

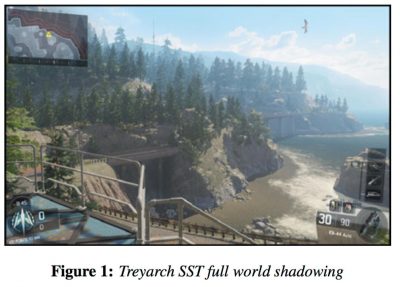

The second presentation was “Sparse Shadow Tree” by Kevin Myers from Activision Blizzard, Inc.

This is a shadow-compression technique for global outdoor shadowing that encodes geometry in a GPU-readable, sparse tree, eliminating the need to render static occluders at runtime. Sun shadows present special challenges due to their scale (often feet across in foreground and miles in the background). Also their integrity is important as we know well what this should look like.

The current gold standard method is splits: parallel-split shadow maps (PSSM).

http://http.developer.nvidia.com/GPUGems3/gpugems3_ch10.html
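For readers unfamiliar with PSSM, the split positions are commonly chosen with the "practical split scheme" described in the GPU Gems 3 chapter linked above, which blends a logarithmic and a uniform distribution. The sketch below is a minimal version of that formula; the function name and the default `blend` weight of 0.5 are assumptions for illustration, not values from the talk.

```python
def pssm_splits(near, far, num_splits, blend=0.5):
    """Return num_splits + 1 split distances between near and far.

    blend = 1.0 gives a purely logarithmic distribution,
    blend = 0.0 gives a purely uniform one.
    """
    splits = []
    for i in range(num_splits + 1):
        f = i / num_splits
        log_split = near * (far / near) ** f        # denser near the camera
        uniform_split = near + (far - near) * f     # evenly spaced
        splits.append(blend * log_split + (1.0 - blend) * uniform_split)
    return splits


if __name__ == "__main__":
    for d in pssm_splits(1.0, 1000.0, 4):
        print(round(d, 2))
```

The logarithmic term concentrates resolution in the foreground, which is why sun shadows spanning feet to miles are usually handled with several splits rather than one map.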

The team tried adding a static shadow map to PSSM, but there was too much data in the static map. This led to work on compressed depth maps, point clouds and tiles.

The Paper: Sparse shadow tree

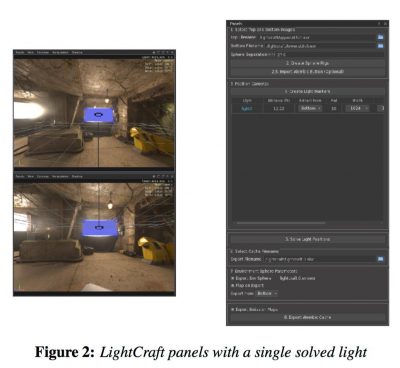

Extract Light Position and Information From On-Set Photography

LightCraft is a new Industrial Light & Magic production tool used on “Warcraft”, “Jurassic World”, and “Star Wars: The Force Awakens” that semi-automatically extracts 3D light position, size, and light composition from on-set photography. The work was credited to Alex Suter (R&D), Victor Schutz, Dan Lobl and Brian Gee, all from Lucasfilm Ltd.

For the Warcraft film they were faced with 1400 shots, 1000 requiring CG creatures. While they had existing in-house tools, they wanted a new, faster, friendlier tool for integrating CG and live action. They needed to use on-set photography to build 3D lighting rigs. They shot typical spherical HDRIs, two sets for stereo.
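One way to see why two sets of spherical HDRIs help: the same light seen from two capture positions gives two rays, and their near-intersection gives a 3D position. The sketch below is a generic two-ray triangulation, not ILM's algorithm; the function name and inputs are hypothetical, and it assumes the direction to the light has already been picked out in each HDRI.

```python
def triangulate_light(origin_a, dir_a, origin_b, dir_b):
    """Return the midpoint of the shortest segment between two rays.

    Each ray starts at an HDRI capture position (origin) and points
    toward the same light as seen in that HDRI (dir). All are 3-tuples.
    """
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w = tuple(p - q for p, q in zip(origin_a, origin_b))
    a, b, c = dot(dir_a, dir_a), dot(dir_a, dir_b), dot(dir_b, dir_b)
    d, e = dot(dir_a, w), dot(dir_b, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the light cannot be triangulated")

    s = (b * e - c * d) / denom   # parameter along ray A
    t = (a * e - b * d) / denom   # parameter along ray B
    point_a = tuple(o + s * k for o, k in zip(origin_a, dir_a))
    point_b = tuple(o + t * k for o, k in zip(origin_b, dir_b))
    return tuple((p + q) / 2.0 for p, q in zip(point_a, point_b))
```

For example, capture points at (-1, 0, 0) and (1, 0, 0) that both see a light along (1, 2, 0) and (-1, 2, 0) respectively place it at (0, 2, 0).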

The main motivation for the new UI was to simplify for speed, to be able to handle the high number of shots. Also important was artist adoption. The tool is semi automatic, not automatic and it exports to Alembic.

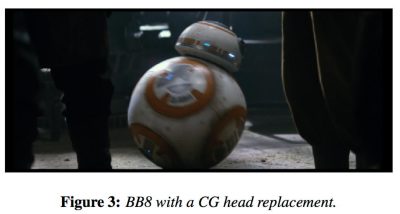

Dan Lobl showed a scene from Force Awakens where the director had a last minute request to change the screen direction of a shot of BB8. Flipping the frame was not an option as fans would tear them apart noticing this.

They also had less than 24 hours to complete it. He credited LightCraft with making this possible. LightCraft was also used on Jurassic World. The goal was to make a tool that was automated, fast and fun to use. They feel they accomplished the goal.

The Paper: LightCraft: extract light position and information from on-set photography

Rendering Fast & Furious: Supercharged in Stereo for a 400-Foot Curved Screen

For Fast & Furious: Supercharged, MPC needed to produce footage for projection in stereo on an enormous U-shaped screen. This raised three main challenges: anticipating the projection warping, recomposing the perspective, and ensuring a consistent stereoscopic effect. The MPC team developed a custom camera model and an “audience experience” validation process. Presenting was Rob Pieke, Technicolor, Moving Picture Company London.

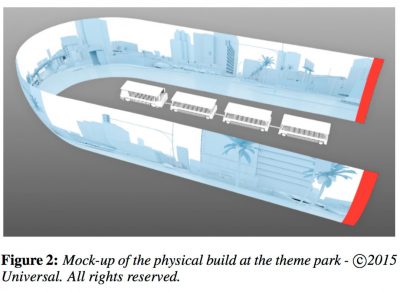

For a new ride experience at Universal Studios Hollywood, the tram ride vehicle enters a hangar containing a 400 ft U-shaped screen. The good news was that it was one shot, but it would be projected in 4K by 18 projectors, in stereo, and was minutes long.

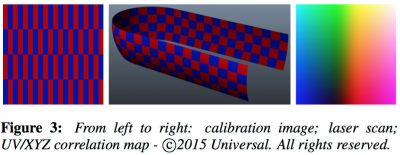

It was designed to be the world’s largest screen. MPC sent grid test images that were projected and scanned to give them very accurate data as to how things would look.

This proved to be very important as four trams enter the horseshoe at a time, so unlike a theatre there is no clear constant sweet spot (he advised if you ride it, sit in the middle). Riders turn their heads and can look anywhere at any time.

There is a huge angle difference from front car to back car. One trick is to pull the camera back and then scale objects back, but this makes animation far more difficult.

Convergence issues due to length of cars, seating and the horseshoe made the grid tests invaluable as they were able to render tests from various positions on ride to get a good idea what the rider would experience and adjust as needed.

From the paper:

Using this map, we extended RenderMan and mental ray with a custom camera we referred to as “warpCam”. The standard perspective projection was bypassed and, instead, the direction for each pixel’s camera ray was driven from our measured data. This resulted in images which appeared heavily warped when viewed directly on a computer monitor, but which appeared straight and proper when projected on the final screen.

Note: a final frame is 27K, rendered as a single 27544 x 2160 image.
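The core idea in that quote, replacing the perspective projection with a per-pixel table of ray directions, can be sketched in a few lines. This is not MPC's warpCam: the measured screen data is replaced here by a synthetic cylindrical map, and the function names and field-of-view values are assumptions for illustration.

```python
import math


def build_direction_map(width, height, h_fov_deg=180.0, v_fov_deg=30.0):
    """Synthetic stand-in for measured data: one unit ray direction per pixel."""
    h_fov = math.radians(h_fov_deg)
    v_fov = math.radians(v_fov_deg)
    rows = []
    for y in range(height):
        pitch = ((y + 0.5) / height - 0.5) * v_fov
        row = []
        for x in range(width):
            yaw = ((x + 0.5) / width - 0.5) * h_fov
            # Sweep around the viewer instead of projecting onto a flat plane.
            row.append((math.sin(yaw) * math.cos(pitch),
                        -math.sin(pitch),
                        math.cos(yaw) * math.cos(pitch)))
        rows.append(row)
    return rows


def camera_ray(direction_map, x, y, origin=(0.0, 0.0, 0.0)):
    """Look up the ray for pixel (x, y) rather than computing a perspective ray."""
    return origin, direction_map[y][x]
```

A renderer driven this way produces an image that looks warped on a flat monitor, because neighbouring pixels no longer share a single projection plane.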

The Paper: Rendering fast & furious: supercharged in stereo for a 400ft curved screen

Luma HDRv: An Open-Source High-Dynamic-Range Video Codec Optimized by Large-Scale Testing

Last up was Jonas Unger, Linköpings universitet, with Luma HDRv. The Luma HDRv codec uses a perceptually motivated method to store high dynamic range (HDR) video with a limited number of bits. The method, as originally described in the HDR Extension for MPEG-4, ensures that the quantized video stream will be visually indistinguishable from the input HDR. The stream is then compressed using Google’s VP9 video codec, which provides a 12 bit encoding depth. As compared to the legacy code of the MPEG-4 HDR video codec (proprietary), Luma offers a substantial improvement in compression efficiency.

Others on the paper: Gabriel Eilertsen, Linköpings universitet, and Rafał K. Mantiuk, University of Cambridge.

From the paper:

In the design of the encoder we perform a large-scale test, using 33 HDR video sequences in order to compare 2 video codecs, 4 luminance encoding techniques (transfer functions) and 3 color encoding methods. This serves both as an evaluation of existing techniques for encoding of HDR luminances and colors, as well as to optimize the performance of Luma HDRv.

There is no open source HDR video encoding system, and this work aims to solve that.

The pipeline they came up with is:

- transform color space

- transform luminance

- encode

- package

Luma HDRv uses VP9 for encoding, with PQ-Barten for luminance quantization at 11 bits, and encoding in the Lu′v′ colorspace.
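The first two pipeline steps can be sketched as follows. This is not the Luma HDRv source: it uses the SMPTE ST 2084 PQ curve as an assumed stand-in for what the paper calls PQ-Barten, the standard CIE 1976 u′v′ formulas for the colour transform, and hypothetical function names. Chroma quantization, VP9 encoding and packaging are left out.

```python
def xyz_to_luv_prime(X, Y, Z):
    """Convert CIE XYZ to (luminance, u', v') using the CIE 1976 UCS formulas."""
    denom = X + 15.0 * Y + 3.0 * Z
    if denom == 0.0:
        return 0.0, 0.0, 0.0
    return Y, 4.0 * X / denom, 9.0 * Y / denom


def pq_encode(luminance, peak=10000.0):
    """Map absolute luminance (cd/m^2) to [0, 1] with the ST 2084 PQ curve.

    Assumption: this curve approximates the article's "PQ-Barten".
    """
    m1 = 2610.0 / 16384.0
    m2 = 2523.0 / 4096.0 * 128.0
    c1 = 3424.0 / 4096.0
    c2 = 2413.0 / 4096.0 * 32.0
    c3 = 2392.0 / 4096.0 * 32.0
    y = min(max(luminance / peak, 0.0), 1.0) ** m1
    return ((c1 + c2 * y) / (1.0 + c3 * y)) ** m2


def quantize(value, bits=11):
    """Quantize a [0, 1] value to an integer code of the given bit depth."""
    return round(value * ((1 << bits) - 1))


if __name__ == "__main__":
    lum, u, v = xyz_to_luv_prime(95.047, 100.0, 108.883)  # D65 white at 100 cd/m^2
    print(quantize(pq_encode(lum)), round(u, 4), round(v, 4))
```

At 11 bits the luminance channel has 2048 code values, which fits inside the 12 bit depth that VP9 provides.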

lumahdrv.org

Siggraph 2016 Paper: Luma HDRv: an open source high dynamic range video codec optimized by large-scale testing