One of the issues with transmitting and collaborating with 3D is the time to prepare the data and then download it. There are many formats for encoding a 3D scene but almost none that can encode, transmit and reconstruct 3D in real time, with only a tiny lag for processing.

A research group at Purdue University in the US has presented a way to do just that and to do it using standard video streaming pipelines. Their research represents a new method for efficiently and precisely encoding 3-D spatial data and color texture into a regular 2-D video format.

This new platform could enable high-quality 3D video communication on mobile devices such as smartphones and tablets using existing standard wireless networks. “To our knowledge, this system is the first of its kind that can deliver dense and accurate 3-D video content in real time across standard wireless networks to remote mobile devices such as smartphones and tablets,” said Song Zhang, an associate professor in Purdue University’s School of Mechanical Engineering.

The platform is called Holostream and it drastically reduces the data size of 3-D video without substantially sacrificing data quality, allowing transmission within the bandwidths provided by existing wireless networks. The system allows people to feel or appear as if they were present in a remote location, without having to model and render them. It is effectively 3D video over a normal MPEG delivery pipe.

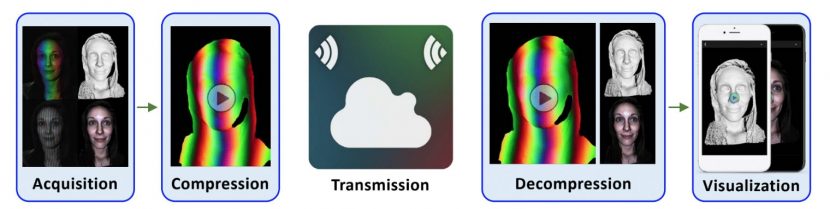

The team have developed a compression method that can drastically reduce 3-D video data size without substantially sacrificing data quality to the point of no longer being useful. In fact, it has varying quality setting depending on bandwidth. “The framework was tested using standard medium-bandwidth networks to simultaneously deliver high-quality 3-D videos to multiple mobile devices. Holostream is made possible through a new pipeline for 3-D video recording, compression, transmission, decompression and visualization” comments Zhang.

The team have developed a compression method that can drastically reduce 3-D video data size without substantially sacrificing data quality to the point of no longer being useful. In fact, it has varying quality setting depending on bandwidth. “The framework was tested using standard medium-bandwidth networks to simultaneously deliver high-quality 3-D videos to multiple mobile devices. Holostream is made possible through a new pipeline for 3-D video recording, compression, transmission, decompression and visualization” comments Zhang.

The work is built around the PhD thesis of Purdue doctoral student Tyler Bell. To test the system, the team developed both the hardware and software for the pipeline including a new 3-D video capture camera system. Their prototype uses their own RGB-D 3-D camera to capture the images. It uses an LED light to project structured patterns of stripes onto the object being scanned to read the depth. The depth and colour information is then encoded into a data stream which could be just a standard MPEG stream. This is then sent as if normal video over any network. At the other end the special MPEG data is decoded and displayed.

“Overall, I think that 3D sensing and 3D communications have the great potential to impact a wide variety of industries, as well as society as a whole,” commented Bell. “There are many areas today where a platform such as Holostream could be directly beneficial (e.g., teleconferencing, telemedicine). Additionally, as we enter further into the era of AR and VR, the demand to quickly provide realistic 3D data or 3D video content to users will only increase. In these scenarios, the potential applications for 3D communication systems such as Holostream may be limitless.”

The final images reconstructed dont look like ‘3D’ simply because it can be thought of as a 3D model with a perfectly aligned video camera projection. They are not rendered with shaders, they are live 3D forms.

An encoding and reconstructing method with robust transmission for 3D model topological data over wireless networks has been a research effort for some years. The great thing about this work is the use of generalised encoding and transmission technology.

Roughly speaking if one was to look at the 3D data stream when compressed, about 40% of the data is 3D data and 60% would be colour information, but it is not that simple. For example, a modern CMOS sensor works from a raw black and white pixel image. But it is an image where each pixel is assumed to have been recorded through a Bayer pattern. This grid of filters invented Bayer, in 1976, is still used in nearly every digital camera today. Normally the camera debayers via a matrix to produce an image, but inherently before the debayering or matrixing operation the image is already 1/3 its final size, simply as it is still the raw B/W file. Key aspects such as encoding and transmitting the pre-matrixed colour information allows the Purdue University team data compression without quality loss, but this only works for lossless compression Holostream option. The Holostream program does not just work on one varying scale of settings, it actually uses different algorithms for the different cases of lossless and lossy transmission.

On the lossy side of the equation, the algorithms for MPEG or JPEG compression do a good job on smooth or gently gradated data, in other words, data without high frequencies. As any 3D artist knows, a simple smooth 3D model with a high frequency texture map looks much more complex than the underlying geometry. The Holostream therefore can achieve high compression if the 3D model is not noisy and is biased to the important low frequency shapes. One does not have to choose this option, but if you want high 3D model data compression, – it does work remarkably well.

Lossy frame by frame storage can achieve a ratio of 518:1 without substantial quality reduction. When paired with the H.264 video codec, their method achieved a compression ratio of 1,602:1, with a bitrate of only 4.8 megabits per second (Mbps), while maintaining a quality 3D video representations (as seen here below).

The test camera runs at 480x 640 pixels right now. A standard 480×640 image of a face can be stored with lossless PNG, and the file size of the mesh is reduced from 32 MB to 288 KB, achieving a compression ratio of approximately 112:1 versus storing the same information within the OBJ format. Of course, for transmission it is more than sensible to use a lossy compression such as MPEG and here the savings are even more vast.

While the camera is currently a special custom camera, the team are working on the logical next step of using an ‘off the shelf’ RGBD camera. There is no reason why such a solution will not work, and this opens up the technology for a host of new applications. Camera tech is Prof. Song Zhang specialisation area, but the team knows how important it is to generalise to a range of cameras. Some depth camera rigs work more favorably than others, for example the XBox Kinect has a different resolution for its depth map than its video image. this means two streams are required as there is no 1:1 match between colour and depth maps.

The decoding can be done by any decompression decoder. “We do the middle part the compression/ decompress for transmission, but the actual way it is decoded at the other end doesn’t matter, it is how we visualise that decoded data that provides the 3D” explained Zhang. Any H264 decoder would work as the process encodes into standard H264, it is just how that final image is interpreted that matters.

One way to understand the technology is to think of each frame as being a separate ‘point cloud’ per frame. While the model looks like it has correspondence between frames, since the image sequence is stable, in reality each frame is conceptually separate, in the way each video frame is separate in a traditional video camera.

Latency is vital for any video conferencing style conversation. Zhang admits that “right now there is a little bit of latency, it is a small issue, but we are working on reducing this. Tyler recently got it down to under a second but we are working on reducing it further”.

The third author of the research is Prof. Jan Allebach and he provided much of the colour science insight. Allebach has published over 90 papers in refereed journals and over 232 conference publications. He is inventor on 15 issued patents. The results of his research on image rendering algorithms have been licensed to major vendors of imaging products, and can be found in tens of millions of units that have been sold worldwide.

The team is now expanding the camera input and looking to commercialise the process.