Hoon Kim is the founder of Beeble, which has just released SwitchLight. Based in South Korea, the company has developed a powerful machine-learning tool for relighting images of people. At the moment the VFX program only works on still images, but the team is about to release a video version, and we have early access to that new technology (see below).

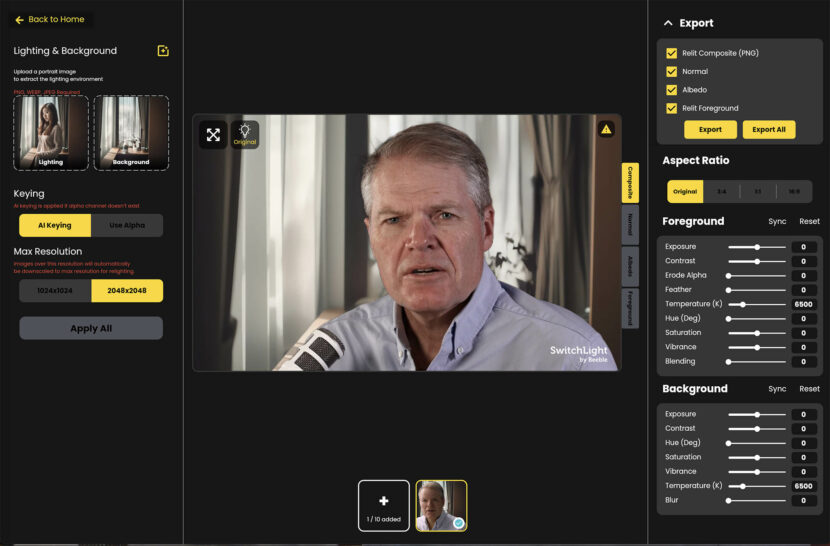

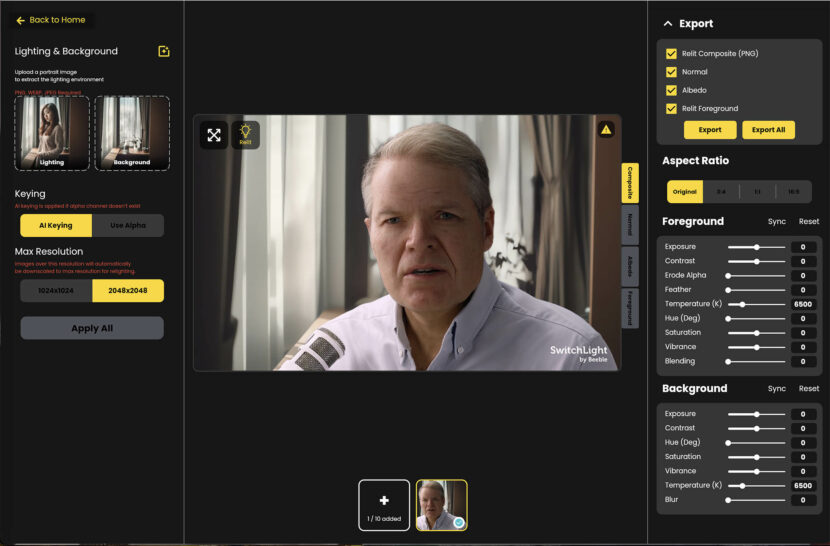

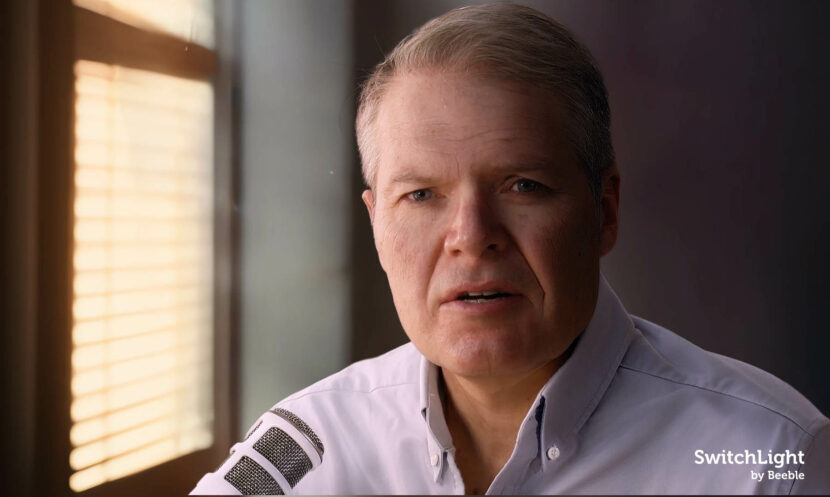

In SwitchLight, the user loads an image and then selects a background. The program uses machine learning to remove the actor from their original background and composite them into the new one, while simultaneously relighting them to match their new environment.
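The keying-and-compositing step described above ultimately rests on the standard "over" equation, C = αF + (1 − α)B, where α is the matte produced by the keyer (AI or otherwise), F the foreground colour and B the background colour. The minimal sketch below shows that step for straight (unpremultiplied) alpha on flattened pixel lists. It illustrates the textbook operation only; it is not Beeble's code, and the function names are hypothetical.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]


def composite_over(fg: RGB, alpha: float, bg: RGB) -> RGB:
    """Place a straight-alpha foreground pixel over a background pixel.

    Implements C = alpha * F + (1 - alpha) * B, channel by channel.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    r, g, b = (alpha * f + (1.0 - alpha) * k for f, k in zip(fg, bg))
    return (r, g, b)


def composite_image(fg: List[RGB], matte: List[float], bg: List[RGB]) -> List[RGB]:
    """Apply composite_over to every pixel of equally sized, flattened images."""
    if not (len(fg) == len(matte) == len(bg)):
        raise ValueError("foreground, matte and background must be the same size")
    return [composite_over(f, a, b) for f, a, b in zip(fg, matte, bg)]


# Usage: one fully keyed actor pixel and one soft-edge pixel (e.g. hair).
actor = [(0.8, 0.6, 0.5), (1.0, 0.5, 0.0)]
matte = [1.0, 0.25]
plate = [(0.1, 0.2, 0.3), (0.0, 0.0, 1.0)]
print(composite_image(actor, matte, plate))  # → [(0.8, 0.6, 0.5), (0.25, 0.125, 0.75)]
```

The soft-edge pixel shows why matte quality matters: wherever α is fractional, background colour bleeds into the actor, so a relit foreground still inherits some of the plate's colour at its edges.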

We have seen similar tools appear with the new ReLight node in DaVinci Resolve 18.5, but in Resolve you relight with additional lights that you add and control manually. Those lights allow you to eye-match to a new lighting setup. SwitchLight, by comparison, does almost everything automatically, based on an image-based lighting analysis of the new background. Also unlike DaVinci, it uses AI to isolate the actor, so any tweaks affect only the actor and not the rest of the image. Resolve's major advantage at the moment is that it does not flicker.
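SwitchLight's internals are not public, but the general idea of lighting a subject from an image of its surroundings can be illustrated with the textbook diffuse (Lambertian) image-based lighting model: each pixel's surface normal is dotted against light directions taken from the environment, and the cosine-weighted sum of incoming light scales the subject's albedo. The sketch below assumes the environment has already been reduced to a short list of directional samples, each with an RGB radiance and a solid angle. That representation, and all function names, are hypothetical choices made for brevity, not how Beeble or Resolve necessarily work.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
# Hypothetical environment sample: (direction toward the light, RGB radiance, solid angle in sr)
EnvSample = Tuple[Vec3, Vec3, float]


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return (v[0] / length, v[1] / length, v[2] / length)


def diffuse_irradiance(normal: Vec3, env: List[EnvSample]) -> Vec3:
    """Cosine-weighted sum of incoming radiance over the environment samples."""
    n = normalize(normal)
    total = [0.0, 0.0, 0.0]
    for direction, radiance, solid_angle in env:
        d = normalize(direction)
        # Light arriving from behind the surface contributes nothing.
        cos_theta = max(0.0, n[0] * d[0] + n[1] * d[1] + n[2] * d[2])
        for i in range(3):
            total[i] += radiance[i] * cos_theta * solid_angle
    return (total[0], total[1], total[2])


def relight_lambertian(albedo: Vec3, normal: Vec3, env: List[EnvSample]) -> Vec3:
    """Diffuse outgoing radiance for one pixel: albedo / pi * irradiance."""
    e = diffuse_irradiance(normal, env)
    return (
        albedo[0] * e[0] / math.pi,
        albedo[1] * e[1] / math.pi,
        albedo[2] * e[2] / math.pi,
    )


# Usage: a single overhead light; one pixel faces it, one faces away.
env = [((0.0, 0.0, 1.0), (math.pi, math.pi, math.pi), 1.0)]
print(relight_lambertian((0.5, 0.5, 0.5), (0.0, 0.0, 1.0), env))
print(relight_lambertian((0.5, 0.5, 0.5), (0.0, 0.0, -1.0), env))
```

Because the shading depends only on albedo, normals and the environment, the same subject can be relit for any background once those per-pixel quantities have been estimated, which is why the albedo and normal-map passes shown below matter.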

Source image

The original ungraded subject, AI-keyed over a new background

Subject relit with AI

Normal map

Both the background and the foreground can be moved and scaled.

In many ways, this new tool builds conceptually on work Google Research presented at SIGGRAPH 2021. That paper set out a technique covering foreground estimation via alpha matting, relighting, and compositing, using only a single RGB portrait image and a new target HDR lighting environment. Interestingly, Beeble built its own Light Stage to help generate training data. Dr Paul Debevec, who co-authored the Google SIGGRAPH paper, originated and developed the Light Stage, famously winning multiple Sci-Tech awards for it and for his broader work in inventing image-based lighting. You can read the Google paper, “Learning to Relight Portraits for Background Replacement”, here.

SwitchLight

We spoke to Hoon Kim about the product and the company’s plans.

FXGUIDE: Your stated aim had previously been to build a mobile virtual production platform, yet SwitchLight is not currently mobile-focused?

Hoon Kim: We originally aimed to build a mobile-focused virtual production platform. However, based on user feedback, our current focus is primarily the professional VFX industry, which is where SwitchLight's most active users are. We are, of course, open to developing a mobile app if there’s demand for it.

FXGUIDE: SwitchLight allows anyone to do realistic compositing with accurate lighting and photorealistic backgrounds. Why did you decide to do this? What was your motivation?

Hoon Kim: The motivation to create SwitchLight was born out of our expertise in AI, and the desire to make this technology useful for the creative community. We noticed a gap in the market around lighting solutions and decided to focus on that, while other areas like speech AI and text-to-image were well catered for. We envision a future where anyone can create Hollywood-grade content, and we want to contribute to that.

FXGUIDE: In rough terms how do you generate the albedo that allows you to then relight?

Hoon Kim: We collect high-quality proprietary datasets for training AI.

FXGUIDE: Do you form a depth map that can then be used for relighting?

Hoon Kim: As of now, we do not offer any features that support depth map generation. However, this is an addition we plan to implement in the coming months.

FXGUIDE: You have indicated that you are going to implement full video relighting soon, how temporally stable is your approach?

Hoon Kim: It depends on the source footage and the target lighting, but flickering is the main issue breaking temporal consistency. Simple deflickering tools can mitigate this, but at the cost of introducing artifacts such as ghosting. The flicker happens because the AI does not understand the relationships between nearby frames, and fixing this is one of our top priorities. However, we are actually launching the video feature within a few days (with flickering) so that we can iterate with users and learn from them as fast as possible. We have found this is the best way to improve our product. Below is a sample of that early video implementation.
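Why simple deflickering trades flicker for ghosting can be seen with the simplest temporal filter, an exponential moving average over frames. The sketch below applies it to a list of per-frame values; in practice a filter like this would run per pixel, so on moving content each pixel keeps blending in what previous frames showed there, leaving a fading trail. It is a generic illustration with a hypothetical function name, not the filter Beeble or any particular tool uses.

```python
from typing import List, Optional


def deflicker_ema(frames: List[float], smoothing: float) -> List[float]:
    """Exponential moving average over a sequence of per-frame values.

    smoothing in [0, 1): 0 disables smoothing; higher values damp flicker
    more, but each output lags further behind genuine changes, which is
    what shows up as ghosting when applied per pixel to moving content.
    """
    if not 0.0 <= smoothing < 1.0:
        raise ValueError("smoothing must be in [0, 1)")
    out: List[float] = []
    state: Optional[float] = None
    for value in frames:
        # First frame passes through; later frames blend with the running state.
        state = value if state is None else smoothing * state + (1.0 - smoothing) * value
        out.append(state)
    return out


# A genuine step change (e.g. an actor's arm moving into a pixel) gets smeared over frames.
print(deflicker_ema([0.0, 0.0, 1.0, 1.0], smoothing=0.5))  # → [0.0, 0.0, 0.5, 0.75]
```

The filter has no way to tell frame-to-frame noise from real change, which is consistent with Kim's point that the underlying fix is a model that understands the relationships between nearby frames rather than heavier post-filtering.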

FXGUIDE: What colourspaces are you working in?

Hoon Kim: Right now sRGB. But we are working to support the linear color domain.
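The distinction matters for relighting because light adds linearly in scene-referred (linear) values, not in display-encoded sRGB values. A minimal sketch of the standard sRGB transfer functions (as defined in IEC 61966-2-1) follows, with a blend showing how the two domains disagree. The function names are hypothetical, and inputs are assumed to be single channels in the 0 to 1 range.

```python
def srgb_to_linear(c: float) -> float:
    """Decode one sRGB-encoded channel in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """Encode one linear-light channel in [0, 1] back to sRGB."""
    if c <= 0.0031308:
        return c * 12.92
    return 1.055 * c ** (1.0 / 2.4) - 0.055


# Averaging black and white: directly on sRGB values vs. in linear light.
naive = (0.0 + 1.0) / 2
linear_mix = linear_to_srgb((srgb_to_linear(0.0) + srgb_to_linear(1.0)) / 2)
print(naive, round(linear_mix, 3))  # prints 0.5 0.735
```

Mixing or adding light directly on sRGB values therefore produces results that are too dark, which is one reason working in a linear colour domain is the usual choice for compositing and relighting.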

FXGUIDE: What ML approaches do you use for the AI keying of the subject actor? Is it totally your own or based on some pre-published segmentation approach?

Hoon Kim: Currently based on a pre-published approach, but a complete update using our own model is being prepared.

FXGUIDE: What are the company’s medium-term plans – is there a roadmap of what you will be addressing first?

Hoon Kim: In the medium term, we aim to develop a product that professional VFX artists find useful. This includes video support and a local app. We are considering a standalone desktop app and a plugin for Nuke due to high user demand. However, since a web app is the best way to iterate with the users, we will be continuously updating the web app version of SwitchLight.

FXGUIDE: Does it work just as well with people of different ages, such as babies and kids? I assume this is influenced by your training data for faces?

Hoon Kim: Performance varies, but our model tends to be more affected by skin color than age. Our training dataset does have an influence. We are actively improving SwitchLight’s performance on darker skin tones and expect to see better results soon.

FXGUIDE: Thanks so much and we can’t wait to see where you take this in the future.