The Heretic / SIGGRAPH Exclusive

Unity’s award-winning Demo team, the creators of Adam and Book of the Dead, presented the short film The Heretic at SIGGRAPH 2019 as part of Real-Time Live. The real-time cinematic runs at 30 fps at 1440p on a consumer-class desktop PC. The Heretic uses advanced techniques based on HDRP (High Definition Render Pipeline) to generate realistic real-time images of people and environments.

Fxguide spoke to the Unity Team:

Krasimir Nechevski: Animation Director

Lasse Jon Fuglsang Pedersen: Sr. Programmer

Adrian Lazar: Technical Artist

Silvia Rasheva: Producer

Robert Cupisz: Tech Lead

Veselin Efremov: Creative Director

FXG: The facial rig for the character, how many blend shapes were you using? Who made the facial rig?

Krasimir Nechevski: Animation Director: Since this was our first attempt at making a realistic-looking digital human in Unity, we wanted to approach the process carefully and keep the amount of content to a minimum, while at the same time working to achieve the highest quality we can.

There are two traditionally used methods for creating realistic digital humans: rig-driven and 4D. Each one has pros and cons, and we weren’t particularly happy with the results of either. So we decided to try a new approach which mixes elements of both, allowing us to take the quality a step up from what we usually see.

4D scanning is a process that produces a sequence of blend shapes of the actor’s whole face, one for each frame of his performance (in our case, 7 seconds). It gives us the highest possible realism of movement, but that comes at the cost of lower resolution. We combined it with high-resolution texture sets, including wrinkle maps, which we made with the help of a fully functioning facial rig created for us by Snappers. The rig they produced had 318 blend shapes and 16 sets of 4K albedo, normal and cavity maps. Additionally, there were 48 activation masks.

Lasse Jon Fuglsang Pedersen: Sr. Programmer: Given the fine pose-driven texture details of the facial rig, we were able to apply these details to the 4D sequence by programmatically establishing a correlation between the 4D frames and the blend shapes of the facial rig.

Practically speaking, we used a least squares approach to find a reasonably good combination of blend shapes to approximate each given 4D frame. This essentially gave us a set of “fitted weights” per 4D frame, and these fitted weights allowed us to drive the activation masks of the facial rig during the 4D playback.
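To make the fitting step concrete, here is a minimal sketch of a per-frame least squares fit. It is not the team's implementation: the flattened vertex layout, the small ridge term, the function names, and the way fitted weights are mapped to activation masks (`shape_to_mask`) are all assumptions made for illustration.

```python
def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_weights(neutral, shape_deltas, frame, ridge=1e-9):
    """Find weights w minimising |neutral + sum_i w[i] * delta_i - frame|^2.

    neutral, frame: flattened vertex positions [x0, y0, z0, x1, ...].
    shape_deltas:   one flattened offset-from-neutral list per blend shape.
    ridge:          tiny regulariser (an assumption) that keeps the system
                    solvable when shapes are nearly redundant.
    """
    target = [f - n for f, n in zip(frame, neutral)]
    k = len(shape_deltas)
    # Normal equations: (D^T D + ridge * I) w = D^T target
    dtd = [[sum(p * q for p, q in zip(shape_deltas[i], shape_deltas[j]))
            + (ridge if i == j else 0.0)
            for j in range(k)] for i in range(k)]
    dtt = [sum(p * t for p, t in zip(shape_deltas[i], target))
           for i in range(k)]
    return solve_linear(dtd, dtt)


def mask_activations(weights, shape_to_mask, mask_count):
    """Hypothetical mapping: each mask takes the largest clamped weight
    among the blend shapes assigned to it."""
    activations = [0.0] * mask_count
    for w, mask_index in zip(weights, shape_to_mask):
        clamped = min(max(w, 0.0), 1.0)
        activations[mask_index] = max(activations[mask_index], clamped)
    return activations


# Toy example: two vertices, two blend shapes, one captured frame.
neutral = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
shape_deltas = [[1.0, 0.0, 0.0, 0.0, 0.0, 0.0],
                [0.0, 0.0, 0.0, 0.0, 1.0, 0.0]]
frame = [0.3, 0.0, 0.0, 0.0, 0.8, 0.0]
fitted = fit_weights(neutral, shape_deltas, frame)
print(fitted, mask_activations(fitted, [0, 1], 2))
```

In a setup like this, the fit would run once per 4D frame as an offline step, so that playback only needs to look up the stored weights. A production solver would likely also constrain the weights to a valid range during the fit rather than clamping afterwards.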

FXG: Can you discuss the process of driving the facial animation, was it driven by a real person with head-mounted cameras or by an animator?

Krasimir Nechevski: Animation Director: The facial performance in the film was captured in 4D at UK-based studio Infinite Realities, which has many years of experience in capturing digital doubles. The performance is delivered in a dome-style setup with multiple cameras arranged on a sphere, with the actor sitting in the middle. As a result, most of the work done after the capture was cleanup and reconstruction of the data, rather than the animation of a rig. This processing was done with the help of machine learning algorithms developed by Russia-based company Russian 3D Scanner, who are constantly evolving their popular software Wrap3D.

The 4D capture approach also means that the facial performance was decoupled from the body performance as it was shot separately. This presented a certain level of complexity to make a believable head-body performance, but it also guaranteed that the facial movement is as real as it can be and not altered by any process related to the effort of recreating the movement through a rig.

FXG: Can you discuss the skin shaders, please?

Lasse Jon Fuglsang Pedersen: Sr. Programmer: For shading the skin, we knew early on that we needed to have great looking subsurface scattering, transmission, and possibly a secondary specular lobe to break up the lighting a bit.

Since the project uses Unity’s High Definition Render Pipeline (HDRP), we were able to rely on these features being present out-of-the-box, and we were able to test them relatively early.

We made some customizations to achieve more elaborate skin shading. We wanted to prevent the skin from looking too sweaty, especially under strong lighting, so we added specular occlusion and a bit of roughness in small cavities, based on cavity maps that define the pores in the skin.
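As a rough illustration of the cavity-based adjustment described above, the sketch below shows one way a cavity map sample could darken specular highlights and raise roughness inside pores. It is written in Python for readability rather than as shader code, and the cavity convention (1.0 is flat skin, 0.0 is a deep pore), the parameter names, and the default strengths are assumptions, not values from the production shader.

```python
def apply_cavity(spec_occlusion, roughness, cavity,
                 occlusion_strength=1.0, roughness_boost=0.2):
    """Return (specular occlusion, roughness) adjusted by a cavity sample.

    cavity: assumed convention, 1.0 = flat skin, 0.0 = deep pore.
    occlusion_strength, roughness_boost: hypothetical artist controls.
    """
    depth = 1.0 - min(max(cavity, 0.0), 1.0)  # 0 on flat skin, 1 in pores
    # Pores receive less specular light, so highlights do not fill them in.
    occluded = spec_occlusion * (1.0 - occlusion_strength * depth)
    # Pores are also slightly rougher, which spreads what highlight remains.
    rougher = min(1.0, roughness + roughness_boost * depth)
    return occluded, rougher


print(apply_cavity(1.0, 0.4, 1.0))  # flat skin: inputs pass through
print(apply_cavity(1.0, 0.4, 0.5))  # mid-depth pore: dimmer and rougher
```

The point of combining both adjustments is that strong lights no longer produce a uniform wet-looking sheen: the highlight breaks up along the pore structure instead.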

In addition, the skin shader also contains custom code for mixing in the fine pose-driven texture details from the facial rig, and this is an integral piece to adding the fine details during the 4D playback.

FXG: The ‘journey’ during the clip was reinforced with strong framing and blocking. Could you discuss that aspect, please?

Silvia Rasheva: Producer: On an abstract conceptual level, there are two elements of the journey that Director Veselin Efremov wanted to convey through this sequence – one is the idea of transition, where spatial transition reflects transitions through developmental or evolutionary stages, and the other one is the idea of departure from reality which is gradually revealed. The journey is completed in the second part of the film, which is currently in production.

In the first part, the character is in a confined space. We have used tight framing and relied on close-up shots to reinforce a sense of confinement as well as draw attention to details and elements of his props and accessories, which reveal some hints into the underlying backstory and world.

The themes of human-machine interaction and the future of humanity as enabled by, and increasingly entangled with, technology are recurrent topics for us. The dynamic between the human and his robotic companion in “The Heretic” is conveyed through a sequence of shots that communicate different aspects of their relationship, and we’ve used some dynamic alignment for a waft of symbolism.

FXG: Can you discuss the nature of the ‘barriers’ in the blocking and how it played with the lensing and how it opened up during the piece?

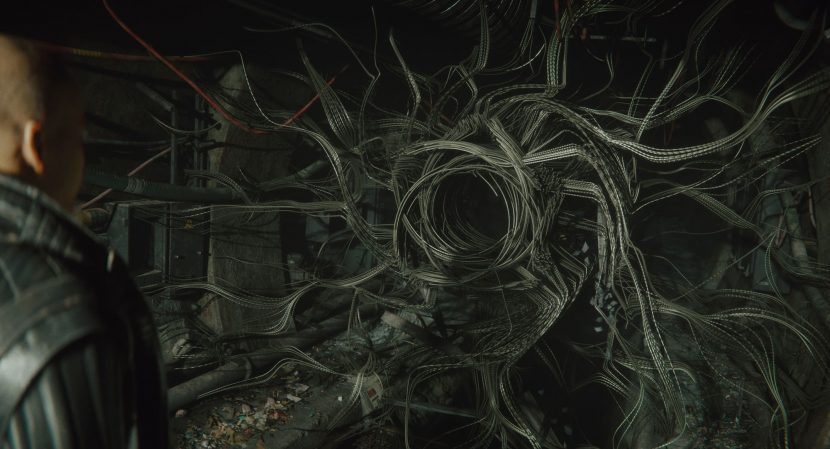

Silvia Rasheva: Producer: In this part of the film, there are three consecutive barriers that the character goes through. Starting with kicking down the door and entering from a regular urban world (of which we only show a vague glimpse and mostly build it through soundscape) into a secluded, abandoned space, which carries an idea of a recent past. We then introduce the futuristic element represented by the robotic companion, Boston, which forcefully tears down the second door for the human to pass through. And the third one is the transition from the physical world into a different dimension which happens through a portal that transforms the entire reality around the character.

While the first two doors are smashed through standard soft-body simulation, the world transformation is a centerpiece of the film and called for a more creative approach. We worked with Technical Artist Cyril Jover of Unity’s Paris-based GFX team, and leaned on his creativity and technical prowess to design and implement a visual effect based on a non-trivial technical solution. His approach was to develop functionality in Unity which is akin to the process of 3D scanning, but happens in-engine: at the point of transition, Unity “scans” the 3D environment as seen from the point of view of the character, and reprojects it onto surfaces which can then be manipulated — sliced into pieces, twisted around, shaded and lit differently — to achieve the desired artistic result. The technology behind this “warp effect” has many potential areas of application in the future.

It has been a very exciting process to witness how, because of the versatility and extensibility of the Unity engine, programmers are able to express their creativity to unlock unique, otherwise unimaginable artistic solutions. This intersection of skills was essential throughout production, including the creation of our VFX-based characters, Boston and Morgan.

FXG: How were the environments made, in terms of asset creation and formation?

Silvia Rasheva: Producer: When the goal is to create realistic environments, it is standard practice to use scanned assets. We have made extensive use of Quixel’s incredibly rich online library Megascans, which has seamless compatibility with Unity’s shading model, so the process is as simple as drag and drop.

For the authoring of all custom assets in the environment, we collaborated with Hungary-based company Treehouse Ninjas, which specializes in the creation of environments for both game and film projects. They did some scans of caves, rock faces and other items, and combined them with handmade assets in the process of building up the environment.

The environment was blocked from a very early stage in the production, and we took a lot of freedom to move things around in keeping with the evolution of the story and direction. The shot dressing was done continuously and saw many iterations – this is easy enough to do in real-time, and, from a production perspective, is practically free. We always allow ourselves a lot of freedom in adjusting the various elements of the film in accordance with each other. This is possible because of the real-time nature of our filmmaking process.

The biggest challenge when working on the environment was the expectation that there will be specific shots to execute. When we create our short films in Unity, we can take more creative freedoms and apply a game-informed approach to environment building, which is closer to the process used for live-action films than to CG, in that you can build the “location” first and then look for interesting cameras later; you don’t have to build for the shot. This way of working gives the director and art team more freedom to collaborate and exchange ideas throughout the production.

FXG: Can you discuss the lighting approach in terms of what sort of lights were used?

Veselin Efremov: Creative Director: All the lighting is real-time, using the HDRP analytical lights, both punctual and area. That gave us perfect artistic control over the look of every shot, where carefully placed large rectangle lights can act as reflectors, and small local accents guide the attention of the viewer. Using light layers, we control what lights affect which surfaces, so it’s literally painting with light. Shadow quality has increased immensely through the years, and using screen-space contact shadows that catch even the smallest details, or controlling the softness of the penumbra, we can achieve a highly realistic representation of the behaviour of light.

FXG: Could you discuss the character’s asset creation in terms of cloth and body (rigging, texturing and modeling)?

Krasimir Nechevski: Animation Director: When we were capturing the actor’s performance, we also did a couple of full-body scans. Later we only had to replace the hands with higher resolution ones, which were precisely fit to the original ones in the scan. The meshes were then cleaned and used as collision meshes in the process of creating the garments.

The software we used to create and animate the clothes was Marvelous Designer. Most of the meshes were then simply skinned to the bones of the rig, with one exception: the jacket.

It was the biggest challenge in terms of clothing. The jacket was also simulated in Marvelous Designer, and then a simplified version was exported as an Alembic stream of meshes per frame. The design of the jacket and the stitching patterns underwent numerous iterations until everything fell into place, but the end result was worth it.

Real-Time Live! Presentation

The Heretic features the character Boston, which is made up of steel wires that navigate the environment and conform to the shape of a bird-like creature. Animating thousands of wires in a traditional way was unthinkable. Boston was implemented as a set of tools, scripts, and shaders within the Unity engine. The character Morgan doesn’t have a clearly defined physical manifestation, morphs between its female and male forms, and constantly varies its size. Morgan was implemented using the Unity Visual Effect Graph, extended with additional tools and features.

FXG: Can you discuss Adrian Lazar’s presentation at Real-Time Live?

Silvia Rasheva: Producer: We did a live demonstration of our process for creating and working with procedural VFX characters that react in real-time to being manipulated in the engine. Our Technical Artist Adrian Lazar also broke down the robotic bird character “Boston,” showing what the work process looks like internally – the speed of iteration and the freedom of artistic decisions that are enabled by real-time technology. He also gave the first sneak peek into a new character, “Morgan,” who appears in the second part of the short film, which is currently in production.

FXG: What are the challenges of making a VFX-based character, in general? How does creating them in real-time help with the creation process?

Robert Cupisz: Tech Lead: Probably the hardest part is bridging the initial open character concepts and the emergent qualities we discover along the way and decide to keep – all within somewhat unpredictable technical limitations. We start out with ideas about the character’s function in the story, its affordances, and a mood board to inspire the look. Ideally, the next step would be concept art to flesh out the design. We did even try it this time, and Georgi Simeonov did an admirable job predicting how this dynamic system could or should behave. But the key was to start at the opposite end and start experimenting with technology, to try to find interesting emergent behaviour, which would meet all the criteria and still be directable. As we converge on the solution from the tech art side, so does the concept art and the writing.

On the flip side, going through this difficult, iterative process is made easier by the fact that we’re working with real-time effects. In some cases, we can explore the space of possibilities rather quickly, sometimes directly during a conversation. If we weren’t able to iterate quickly to explore this uncharted territory, I imagine we would’ve been forced to settle on a much simpler and, crucially, more predictable design.

FXG: What were the unique challenges you faced when creating the robotic bird Boston? How did those challenges differ from creating Morgan and how were they similar?

Robert Cupisz: Tech Lead: Boston’s distinguishing characteristic is that it’s made with bundles of wires. This makes it a rather different beast than VFX characters composed as, say, clouds of particles. The wires need to be long, continuous, and behave plausibly. The emergent quality of the technology behind them needs to give rise to lots of interesting shapes without the need to manually model the details. All of this would be for nothing, of course, if the character wasn’t directable and exploded into chaotic behavior – an important consideration we had in mind when designing all of the tools controlling Boston.

Adrian Lazar: Technical Artist: Morgan’s unique feature – shape-shifting – combined with the fact that they need to scale many times over and at times be one with the environment made it very tricky to create precise concept art that shows all the different stages and their transitions.

This meant that we had to build the look and the technology in parallel, one driving the other: sometimes the creative side would dictate the technical solution, other times a technical limitation would influence the look.

FXG: What technologies were used to create these characters and what was your experience like using them?

Robert Cupisz: Tech Lead: We started work on the character Boston before Unity’s Visual Effect Graph was production-ready, so it is made entirely as a set of runtime/editor scripts and compute shaders. Unity’s ability to instantly compile and hot-reload compute shaders was invaluable. Additionally, the Unity editor’s extensibility meant that we could easily create editor tools, gizmos and inspectors for manipulating Boston and exploring the space of possibilities.

Adrian Lazar: Technical Artist: Because of the character’s constantly shifting physical representation, we decided to build Morgan out of GPU particles using Unity’s new VFX Graph.

Having real-time particles means that we can transform the shape more organically and with faster iterations, something that wouldn’t be possible if we simulated the effect offline or if we used a complex rig to drive the different body parts.

This solution also opens up the possibility of having live sessions, where we can develop and prototype the look together with the Director and Art Director, directly in the final shot, with the final lighting and post-processing.
