How do you plan for shooting life-threateningly dangerous scenes, set in space, without being in space or putting your actors in harm’s way? This was the question posed by Director, George Clooney, and Director of Photography, Martin Ruhe, to the visual effects team on The Midnight Sky.

“Planning the spacewalk and Maya’s subsequent death was the largest part of pre-production work for visual effects”, says Visual Effects Supervisor, Matt Kasmir. “From the start, it was incredibly important that they were grounded in reality, using the same filmic principles in space that we use on earth”.

Kasmir and Greg Baxter, Executive Producer, enlisted NVIZ to help. Led by Head of Visualisation, Janek Lender, the team at NVIZ initially helped to visualise Maya’s death, which takes place in the confined space of the Aether spaceship airlock. Working with Production Designer, Jim Bissell, the team used Art Department plans to create a digital airlock environment. Once this was approved, the animation blocking for Maya’s death could begin.

“From the start, both George and Martin were keen to work with new technologies to develop these sequences”, said Kasmir. “We all agreed that the route of traditional previs, animating individual shots, was not the right path to take. The focus was on the story, on our characters and so we chose to visualise their movements in the airlock as a complete performance”.

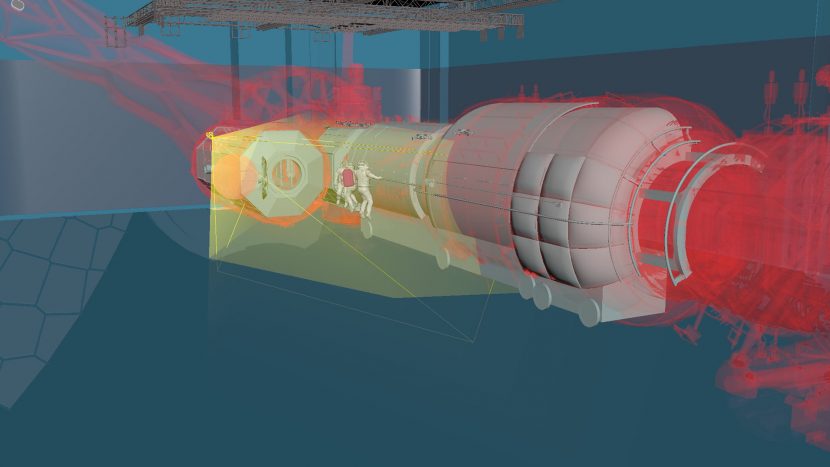

Ruhe and Lender created a ‘master animation’ for Maya’s death, blocking out the continuous action of the characters in the airlock, all the time viewing this from an objective camera, an angle that would not be seen in the film as the camera would have been floating outside the Aether to achieve it.

“This kept us focused on the performance and allowed us to block out the travel of the characters from when the crew entered the airlock to their final positions at the end of the scene”, said Lender. Once Ruhe was happy with the blocking, the animation was transferred to Epic’s Unreal Engine, and the controls handed over directly to Clooney and Ruhe.

“George and Martin were intrigued by not only ‘shooting’ the previs through a virtual camera system, but also using this method to develop the sequences during both pre-production and principal photography,” says Kasmir.

NVIZ’s virtual camera system, ARENA, was set up in the Orangery in Shepperton Studios for the first virtual cinematography session.

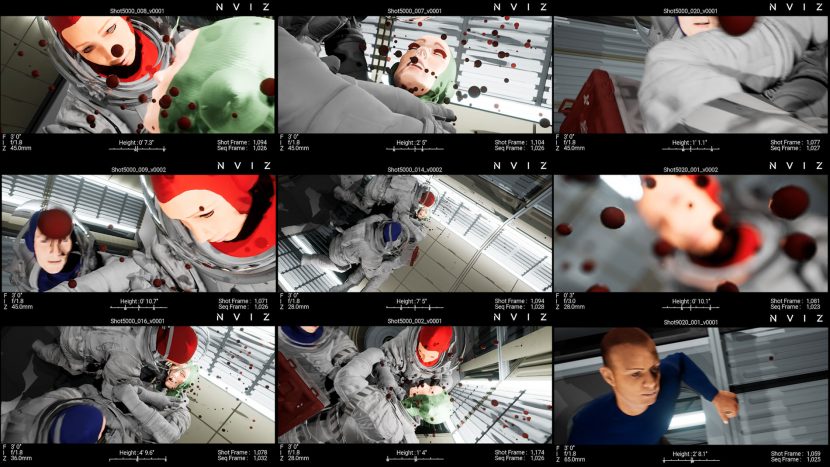

“ARENA works like a ‘director’s viewfinder’ allowing directors and DoPs to lens their shots ahead of principal photography”, says Lender. “The full set-up is very light and consists of a PC and a tablet. Martin (Ruhe) would move around the physical space of the Orangery viewing the animation we had created through the ARENA tablet. We integrated Martin’s preferred set of lenses and both shooting camera bodies into the system so that the sessions were as close to a technical rehearsal as possible.”

Over approximately 10 sessions, Ruhe planned Maya’s death with the output of ARENA being recorded, and the ‘digital rushes’ being supplied directly to the editorial team.

“In one ARENA session, Martin was attempting to shoot up from a low angle and realised that the camera could not get low enough to the floor (as it was too big). Through discussion with the Art Department, the set design was altered slightly to include a strategically placed trough which was large enough to accommodate the camera”, said Lender.

https://vimeo.com/519140643

The team at NVIZ followed a similar process for the spacewalk, in which a number of Aether crew members go outside to perform repairs, during which time the ship encounters a second debris field.

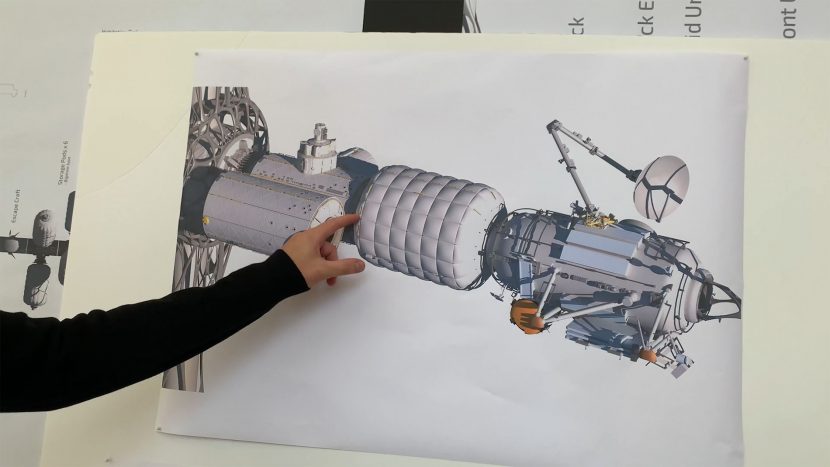

Working again with Bissell and VFX Art Director, Jonathan Opgenhaffen, a fully 3D model of the Aether spaceship was incorporated into the master animation scene for this sequence, allowing Ruhe and Lender to accurately block the path of the crew as they moved across the ship.

“Knowing the topography of the ship and the areas of damage that the crew was to work on was invaluable for blocking out the action, the characters’ movements and the impacting debris field”, said Lender, “and, on occasion, the blocking also fed back into the Aether design, determining, for example, where a hand-hold would need to be or an attachment point for the crew’s tethers”.

The Orangery was not big enough to mark out the full length of the spaceship and so the team temporarily moved to W Stage. The set pieces were lined up to plan and Ruhe walked the length of the spaceship, viewing the debris hitting the ship in real time through ARENA. This process allowed the filmmakers to further understand the journey of the crew, and helped establish the timings of the sequences.

“During the first ARENA session for this sequence, Clooney took the system and immediately started walking around the space and describing, in amazing detail, the beats of the sequence, shot-by-shot”, said Kasmir. “It was an organic, evolving process which helped us to get further, faster. It informed design decisions early on in the process, which fed down throughout the entire production process”.

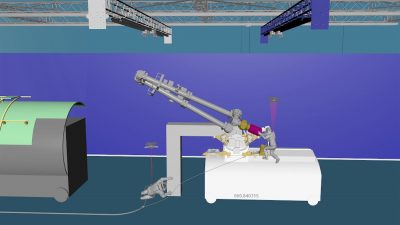

The main ARENA sessions for the spacewalk took place over 2½ weeks, in and around the principal photography in Iceland. These virtual cinematography sessions became a place where all production departments discussed the logistical and technical demands of the live-action shoot. A digital model of the 3D gantry rig (wire rig), on which the actors were to perform, was incorporated into the digital environment and, working with Stunt Coordinator, Paul Linney, Lender and his team at NVIZ could show where any piece of set-build would block the trajectory of the rig moving the actors.

https://vimeo.com/519141213

Part of filmmaking is planning, and as our imagination takes us to new places, new technologies help us to visualise not only the final process, but also the steps we need to take to get there.