A little while ago fxphd.com, as part of our Background Fundamentals classes, covered Adobe’s Character Animator application. The software is free (or included) with Adobe’s After Effects CC. Last Sunday night Fox’s The Simpsons used the technology to allow Homer to answer questions live for both the East Coast and West Coast feeds.

Scroll down to the end of this story for a free copy of our entire fxphd class.

The software first appeared, without much fanfare, as a free bonus application called “Adobe Character Animator (Preview)”. Since its first showing it has advanced to the point where it is now a serious tool.

Dan Castellaneta, who voices Homer, and The Simpsons team (along with some Adobe specialists) implemented a Simpsons Character Animator script pipeline that uses a normal RGB camera to track Castellaneta’s face and animate the character in real time. Critically, the software does this fast enough that Homer’s ‘lip sync’ appears perfectly aligned, with the animation rendering adding only a half-second delay.

The Simpsons team had explored this some years ago, when the Fox show famously did a 3D ending with PDI. At that time the live animation would have had to be a 2D rendering of a 3D model using traditional motion capture, and no such system could render ‘Simpsons quality’ in real time. Run the clock forward to today and one can do this on a laptop with its built-in webcam. (Watch our class below to learn how you can do this.) The Adobe Character Animator software is not a 3D package or a modified traditional motion capture rig, but rather a clever use of advances in markerless facial tracking combined with a layered animation solution.

What made The Simpsons’ live Sunday night clip so effective was the inherent nature of Homer, who is often still (if not slow) in his demeanor and animation style. This worked in combination with a complex ‘background’ that contained canned traditional animation. The somewhat limited animation range of Homer during the live event felt more natural as various other characters moved in and out of shot. Because the software can track eyes, blinks, head sway and mouth movement, Homer could answer questions. Of course, unlike the normal show, the material had to be ad-libbed, so it missed some of the blistering humour that the writers’ room has famously contributed and that made the show such a cornerstone of Fox’s programming. Luckily, Castellaneta is a gifted stand-up comedian in his own right.

As we demonstrated in our fxphd class below, the animator makes a series of mouth shapes tagged to various sounds, or visemes. While spoken words are made up of phonemes, visemes are their visual counterparts. This is key, as one viseme usually has many phonemes (or sounds) associated with it. In a sense, you build a character tree of layers and nested groups in Photoshop. Once you have mapped the various mouth movements and other facial elements in Photoshop, you export this into Adobe’s Character Animator. It is Character Animator that then reads the actor’s face and selects the right layer in the character tree to display. The system is quite intelligent in that it will still work even when not every shape has been mapped.
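The many-to-one relationship between phonemes and visemes can be sketched in a few lines of Python. This is only an illustration of the idea, not how Character Animator is implemented: the table contents, the layer names and the functions `pick_mouth_layer` and `mouth_track` are all hypothetical, and the fallback to a neutral mouth is an assumption standing in for whatever the real software does when a shape has not been drawn.

```python
# Hypothetical many-to-one table: several phonemes (sounds) share one
# viseme (mouth shape). Names are illustrative, not Adobe's layer names.
PHONEME_TO_VISEME = {
    "p": "M", "b": "M", "m": "M",      # lips closed
    "f": "F", "v": "F",                # teeth on lower lip
    "aa": "Aa", "ae": "Aa",            # open mouth
    "ow": "Oh", "ao": "Oh",            # rounded mouth
}

# Assumed fallback shape when a viseme layer was never drawn.
FALLBACK = "Neutral"


def pick_mouth_layer(phoneme, available_layers):
    """Return the mouth layer to show for one phoneme."""
    viseme = PHONEME_TO_VISEME.get(phoneme.lower(), FALLBACK)
    # Not every shape has to exist in the character tree.
    return viseme if viseme in available_layers else FALLBACK


def mouth_track(phonemes, available_layers):
    """Map a sequence of phonemes to a sequence of mouth layers."""
    return [pick_mouth_layer(p, available_layers) for p in phonemes]


# A character for which only some mouth shapes were drawn (no "F" layer).
layers = {"Neutral", "M", "Aa", "Oh"}
print(mouth_track(["m", "aa", "f", "ow"], layers))
# -> ['M', 'Aa', 'Neutral', 'Oh']
```

In this sketch "p", "b" and "m" all resolve to the same closed-lips layer, which is why a character needs far fewer mouth drawings than there are sounds in the dialogue.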

In addition to the software ‘reading’ the actor’s facial movements and mouth shapes via the camera, someone can trigger other events with the keyboard. The actual live Homer character is a combination of motion ‘captured’ dialogue action and keyboard performance. In this case, The Simpsons producer and director David Silverman drove the keyboard.

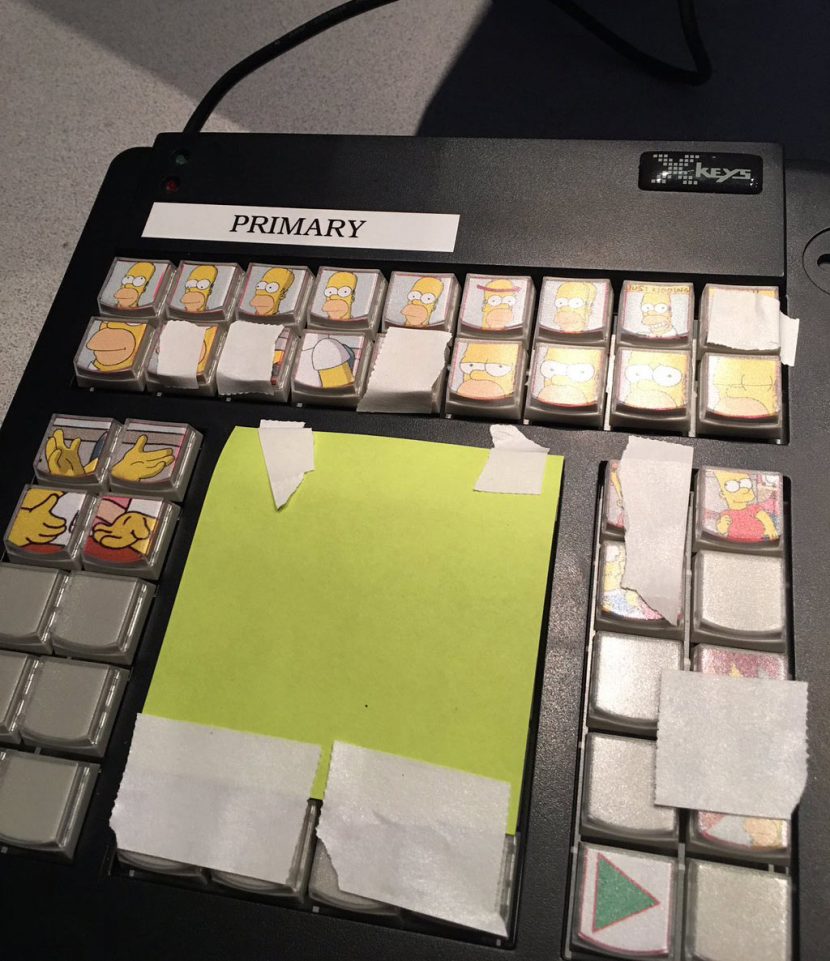

Instead of a traditional keyboard, Silverman used an X-keys keyboard with various pre-determined Homer elements (that were not part of the lip sync), each linked to a single button press. The team used a version of the X-keys XK-60 USB keyboard, which Fox Sports uses for its live work. The X-keys can connect to Windows, Mac or Linux. A system with 60 programmable keys can be bought for less than $200, but it is not required and is not normally used with Adobe Character Animator.
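The split between camera-tracked dialogue and keyed actions can be pictured as two inputs merged into each frame. The following Python sketch is purely illustrative and assumes nothing about the production setup: the key names, the action names in `KEY_TRIGGERS`, the `Frame` type and `compose_frame` are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical bindings: one button press per pre-built action.
# These key and action names are invented for illustration.
KEY_TRIGGERS = {
    "F1": "arm_raise",
    "F2": "eye_roll",
    "F3": "head_scratch",
}


@dataclass
class Frame:
    mouth: str                                     # chosen by face tracking
    triggered: list = field(default_factory=list)  # chosen by the operator


def compose_frame(tracked_mouth, pressed_keys):
    """Merge the tracked mouth shape with any keyed actions for one frame."""
    # Keys with no binding are simply ignored.
    actions = [KEY_TRIGGERS[k] for k in pressed_keys if k in KEY_TRIGGERS]
    return Frame(mouth=tracked_mouth, triggered=actions)


# The actor supplies the mouth shape; the operator presses F2 and an unbound key.
frame = compose_frame("Oh", ["F2", "F9"])
print(frame.mouth, frame.triggered)
```

The design point is that the two performers never compete for the same control: the actor drives the mouth, and the operator layers discrete actions on top.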

The Adobe software is currently on Preview 4 and it has advanced significantly since being introduced over a year ago. What is perhaps not self-evident is that the same system can drive animation that is not just a character talking. There is no reason why a performer cannot be mapped to things that are not a direct 1:1 character match. The system also allows dependencies, such as swaying props hanging off the characters, which The Simpsons did not use.

In the end, the Homer animation was extremely effective, but it was not primarily driven by the actor’s whole facial performance. Homer’s mouth, and of course his motivation and dialogue, were all Castellaneta, but a large part of the rest of the performance was ‘puppeted’ by Silverman. This approach produces the most professional results right now, but a single artist animating everything themselves can also produce excellent results, especially when the character is highly stylised.

Background Fundamentals: Adobe Character Animator and Go-Animate. (Adobe CA starts at 12:37)

Mike Seymour demos Character Animator in this free fxphd class. Note that he uses the previous version of the software, and more can now be done in the application itself.

Note that the “Homer Live – East Coast Feed” YouTube link has many dropped frames, which makes it hard to evaluate the lip sync. For higher quality, use this link:

Homer Live: East Coast: https://www.youtube.com/watch?v=Bz0ShKbYOuw

Also of interest:

International version: https://www.youtube.com/watch?v=4Kn-eEfjuyQ

Homer’s Apology To Latin America: https://www.youtube.com/watch?v=wVFbTun49Qc

Homer’s UK Apology: https://www.youtube.com/watch?v=e8Juc6AwPt0