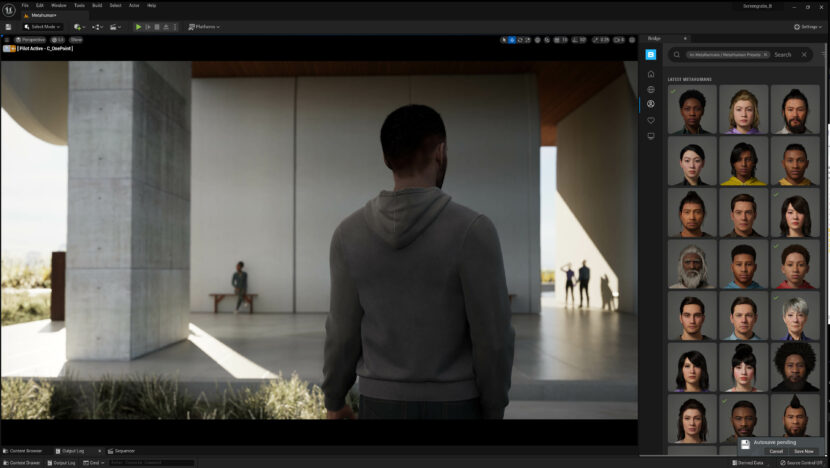

The release version of Unreal Engine 5 (UE5) is now available to download. UE5 has a redesigned Unreal Editor, better performance, new artist-friendly animation tools, an extended mesh creation and editing toolset, improved path tracing, and an improved MetaHuman interface.

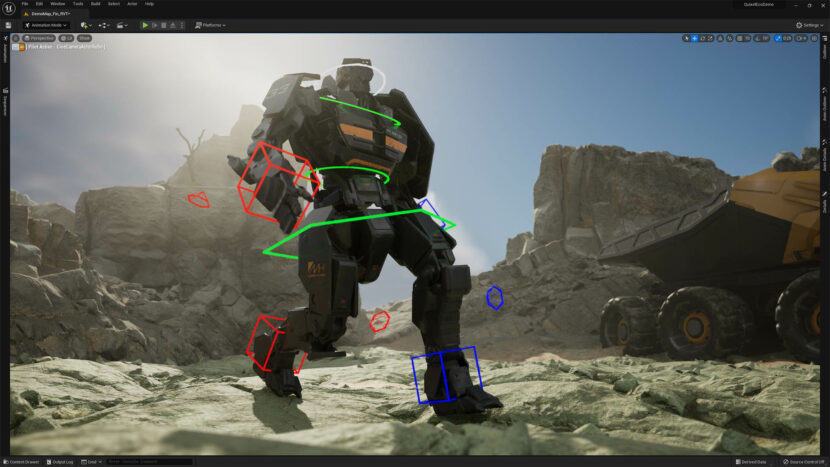

UE5 introduces new animation authoring, with a suite of artist-friendly tools that enable users to work directly in the Unreal Editor. Artists can quickly and easily create rigs and share them across multiple characters with an enhanced, production-ready Control Rig, which can then be animated in Sequencer. In Sequencer, users can save and apply poses with a new Pose Browser, and apply blended keys with undershoot or overshoot using the Tween tool.

In UE5, there is now an entirely new retargeting toolset that enables artists to quickly and easily reuse and augment existing animations. The new IK Rig system provides a method of interactively creating IK solvers (including the new Full-Body IK), and then defining target goals for them. The resulting IK Rig Asset can then be embedded into an Animation Blueprint where the goals can be controlled at runtime. This new method of retargeting replaces the Retarget Manager from Unreal Engine 4. The IK Retargeter allows users to transfer animations between characters with different skeletons and proportions. For example, the retargeting tools even allow retargeting from human animation to quadrupeds. There are new UE5 Mannequins, Manny and Quinn, that are built on the same Control Rig and animation system as MetaHumans.

UE5 also has a Path Tracer, which was introduced in Unreal Engine 4.27. It is a DXR-accelerated, physically accurate progressive rendering mode that requires no additional setup, and it allows users to produce offline-renderer-quality imagery from UE5. In this version, the Path Tracer gains improvements in stability and performance, along with features such as support for hair primitives and an eye shader model, plus improvements in sampling, BRDF models, light transport, supported geometries, and more.


A new Lyra Starter Game is also available. It is a sample gameplay project built with UE5 in mind that serves both as a starting point for creating new games and as a hands-on learning resource. Lyra includes the new UE5 Mannequins. Additionally, users can download another free sample project, City Sample, which reveals how the city scene from The Matrix Awakens: An Unreal Engine 5 Experience was made (see our story here).

Virtual Production

nDisplay’s support for the UE5 Path Tracer opens up the lighting workflow, allowing for an accurate, live preview of changes made on stage. In-camera-VFX productions can leverage UE 5.0’s new rendering features to maximize output quality for filmmakers, and Unreal Engine 5.0 also improves virtual production workflows. The stability and maturity of UE 4.27 have been brought forward into the release version of UE 5.0.

In-camera-VFX tools support all the key workflows of 4.27, with new features added in UE 5.0 to further support stage operations and content production. For example, Nanite is supported as a Beta feature in both the Movie Render Queue and nDisplay rendering pipelines for virtual production. If used with GPU Lightmass (GPULM) or the Path Tracer, the Nanite Fallback Meshes are used instead of the full-detail surface. USD support has broadened from just Levels to Sequencer, allowing production workflows such as layout to use UE5 in a USD pipeline. However, in this release, while Lumen may seem to work with nDisplay, Epic has stated that it is not yet ready for in-camera-VFX production workflows using VR and/or nDisplay rendering pipelines.

RealityScan Beta Preview

Epic also introduced a new limited-access Beta of RealityScan, a free 3D scanning app, developed in collaboration with Quixel, that turns smartphone photos into high-fidelity 3D models.

With RealityScan, creating digital 3D objects starts with scanning with one’s mobile phone. RealityScan then walks users through the scanning experience with real-time feedback and AR guidance. RealityScan enables users to check the quality of their data without the need for long wait times.

Once the scanning is done, the finished assets can be uploaded from RealityScan to Sketchfab for publishing, sharing, and selling 3D, VR, and AR content.