Epic Games has published a guide for virtual production (VP): The Virtual Production Field Guide. It is a downloadable PDF intended as a foundational information document for anyone either interested in VP or already leveraging these techniques in production.

Virtual production is a broad term for a wide range of computer-aided filmmaking methods that are intended to enhance the creative process and increase efficiency. Epic Games are at SIGGRAPH next week and, along with a set of major partners, they will be showing a range of technology and approaches to VP, from Real-Time Live to specific talks.

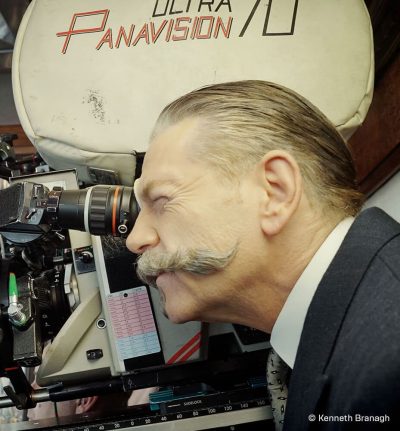

The use of these real-time tools can turn the traditional linear pipeline into a parallel process where the lines between pre-production, production, and post-production are blurred, making the entire pipeline more fluid and collaborative. The field guide both explains terminology and includes interviews with key players in this space, including Ben Grossmann (Magnopus: Lion King), Director Wes Ball, Director Sir Kenneth Branagh, Glenn Derry (Fox VFX Lab), Producer Connie Kennedy, DOP Bill Pope (The Matrix trilogy, The Jungle Book, and Alita: Battle Angel), DOP Haris Zambarloukos (Thor, Murder on the Orient Express) and The Third Floor’s Virtual Production Supervisor, Kaya Jabar.

Below is an extract from the guide.

Virtual Production Supervisor Interview: Kaya Jabar

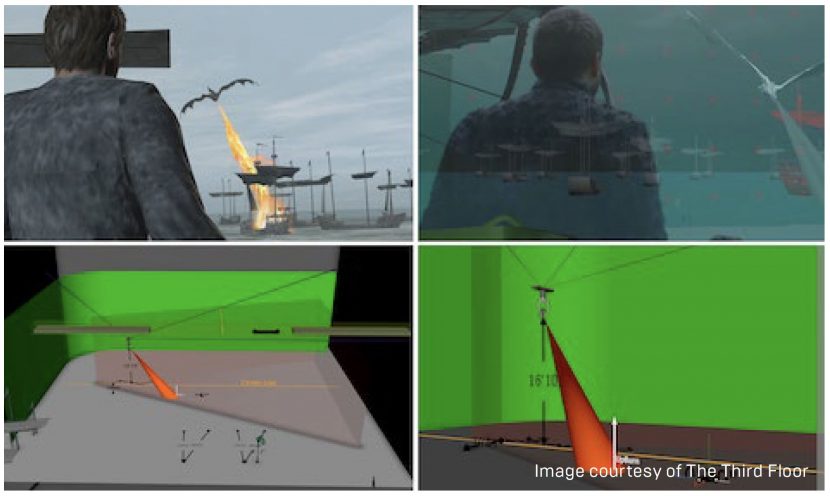

Kaya Jabar has worked at The Third Floor for nearly three years. She worked as a virtual production supervisor on projects such as Game of Thrones, Dumbo, and Allied. Jabar loves being able to flesh out ideas and carry them over to realistic shooting scenarios, evaluating available equipment, and coming up with new configurations to achieve impossible shots.

Can you describe your work as a virtual production supervisor?

I started in games, then joined The Third Floor as a visualization artist, and later transitioned into virtual production three years ago. My first show was Beauty and the Beast where part of The Third Floor’s work was doing programmed motion control and Simulcam using visualized files. We used MotionBuilder for rendering, and the live composite was mostly foreground characters. Next, I did Allied with Robert Zemeckis and Kevin Baillie.

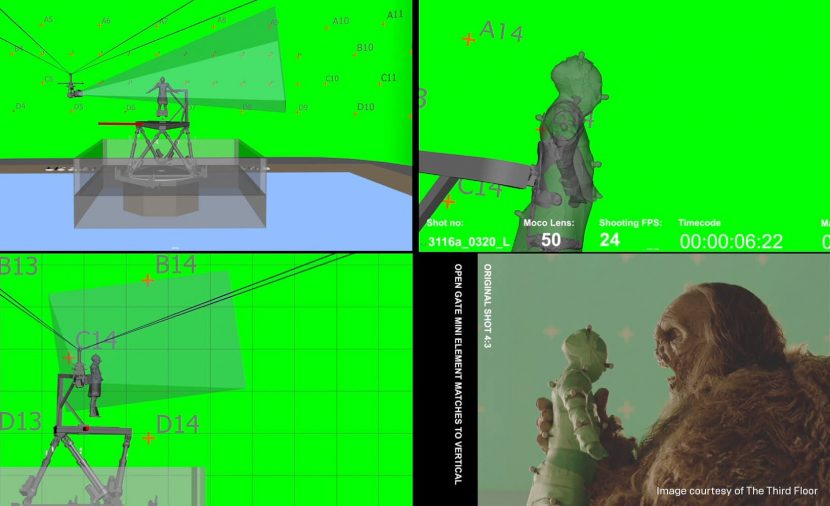

In the last few years, I worked on The Third Floor’s team, embedded with the production crew on Game of Thrones. I did previs in season seven. Then in season eight, I supervised motion control and virtual production, primarily running the main stages and prototyping production tools: anything to do with mounting flamethrowers on robot arms, driving motion control rigs, virtual scouting, custom LED eyelines, and fun stuff like that. I also used a bit of NCAM real-time camera tracking and composites on that show.

Now I’m back in the office focusing on our real-time pipeline and looking at how virtual production can be further leveraged. At The Third Floor, we’re generally on productions quite early, building and animating previs assets to storyboard the action quickly. It’s been pivotal on a few shows where you can have visualized content speedily and use it to make decisions faster to achieve more impressive things on camera.

Which tools do you use?

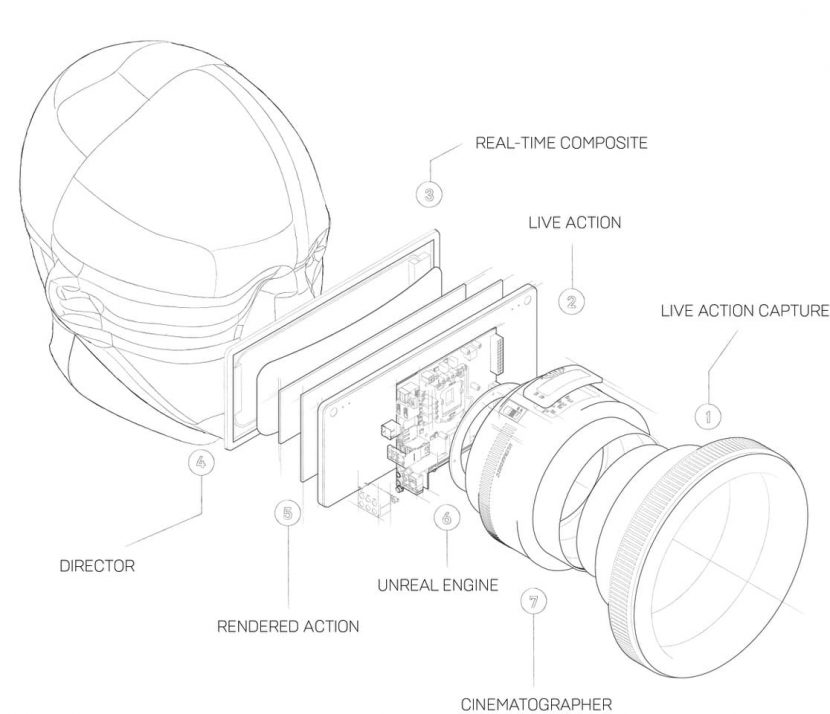

We use Unreal in our current pipeline for real-time rendering, so we’re leveraging that and building tools like our virtual scouting and virtual camera tools around it as we evolve the workflow. By leveraging Unreal, our goal is to be able to work with clients, directors, and cinematographers in ways that get them closer to a final pixel image, so they can make more informed decisions and be more involved in the process.

How does your work overlap with the visual effects pipeline?

It tends to flow both ways. For example, on Game of Thrones, we pre-animated a lot. I was responsible for all the dragon-riding virtual production, which was incredibly complex. It involved three pieces of motion control equipment, all in sync and slaved to the final comp.

We would previs everything, get that approved quickly, and then send our scenes to the finals house. And then they’d send us exports back, so I could use those exports to do any NCAM work or motion control work I needed. It empowered us to pull quite a bit of animation work forward in production.

Do you think virtual production will increasingly deliver final pixel imagery?

At least for OTT [over the top, streaming media] such as TV and lower budget productions, we’re heading that way. For the final season of Game of Thrones, we had six months’ turnover between finishing filming and delivering six hours of content. For those kinds of shows, where the production house isn’t also the VFX house, I think you can push previs assets further, because you can cross-pollinate with the final VFX houses, who can give you Alembic caches for all your work and so on.

Much attention is being drawn to virtual production lately. I’ve been working on it for over four years, and other people have since Avatar, but it was never conceivable that you would get final pixel in-camera. It was always a prototyping tool. With improving GPUs, especially the NVIDIA RTX cards and global illumination, I can see it being brought more on set.

How do you keep the virtual production department as efficient as possible?

By understanding the brief and having the virtual production supervisor involved early. On my most successful shows, I’ve been involved from the beginning and had somebody there who was auditioning the technology. You need to be able to look at it from the standpoint of: is this something that will take seven days to set up for one shot, or can we use it iteratively without a team of 15 people?

I worked on Dumbo as well, and it was essential to look at all the different hardware and software providers and run them through their paces. A lot of it is making sure data flows into the effects pipeline. There’s a lot of duplication of work which can be frustrating, where you’ve made all these decisions in one software package, and they don’t flow over to the other software packages or the other supervisors, etc.

How do you bring new crew members up to speed with virtual production?

We need to understand the problems the crew is trying to solve and make sure that the tech addresses those rather than pushing a technical idea and way of working that doesn’t answer the original brief. If somebody is looking for a quick way to storyboard in VR, then we should focus on that. Let’s not put a virtual camera on them, or any of those other inputs, overwhelming them with tech.

I’m a big believer in flexibility and in being able to tailor the experience and UI design to different approaches, directing styles, preferences, and so on. It’s about understanding what they’re trying to do, so we can do it more quickly without involving final animation.

What is The Third Floor doing to stay on the leading edge?

All our supervisors get deployed on the shows as boots on the ground. We talk not only to the creatives but also to the technicians. I speak to every single motion control operator and every person who is prototyping solutions and approaches to understand the questions they’re being asked. On Thrones, that collaboration and continuous innovation on everyone’s ideas and input led to some very successful results. For example, for the dragon-riding shots we used a Spidercam motion base and a Libra camera in sync, which had never really been done before.

We are often active across many other parts of a show, not just virtual production. A lot of our tools are bespoke per show, so we will create a specific rig that we know they are using or other components custom to the project. But we then also build up experience and knowledge with each project and find ways to generalize some of the tools and processes that can overall streamline the work.

Did virtual production help inspire the significant leap in visual effects scope for the final few seasons on Thrones?

Game of Thrones was this unique ecosystem of people who had worked together for so long that they’d gained each other’s trust. Season six had about seven major dragon shots. Joining on season seven, my biggest challenge was doing 80 major dragon shots with entirely new equipment. What worked there was the relationship The Third Floor had with the entire production: the executive producers, DPs, the directors, and all the key departments. The VFX producer trusted us to come up with new solutions.

They said, “This is impossibly ambitious, so let’s all sit in a room and talk to all the heads of departments. Let’s figure out what VFX can do, what virtual production can do, and what the final VFX vendors can provide for them to accomplish that.” That type of relationship and collaboration happens, but for TV it’s unique because you need to work with the same people again year after year. If you don’t get along, usually you don’t get asked back.

What do you think about the future of virtual production?

I’m quite excited about final pixel in-camera, at least for environments and set extensions. More departments are working in real-time engines. I think it would be fantastic to walk onto a set and have every camera see the virtual sets as well as the built sets. We’re showing the camera crew and the director what they’re going to see, but we need to find a way to engage the actors more as well.

Has virtual production, including the work you’ve done, raised the scope on the small screen?

I genuinely hope so, because I think there is a lot of virtue in planning. What we achieved on Game of Thrones with the modest team that we had was purely because everybody was willing to pre-plan, make decisions, and stick to them. If you use visualization the way it’s meant to be used, you can achieve absolute greatness on a small scale.

There are so many ideas in the creative world, and I would like to be able to help directors and others who don’t have a $100 million budget. I’m looking forward to smaller movies getting the tools they need to achieve the vision which was previously reserved just for Hollywood blockbusters. It’s exciting to democratize the process.