It has been almost exactly four years since Dr. Paul Debevec joined Google with several members of his University of Southern California Institute for Creative Technologies (USC ICT) team. While Debevec is still an adjunct professor at USC and supervising Ph.D. research at his old lab, his focus has been on advanced AR and VR technology at Google. This work has been so successful that, starting in 2020, Google has decided to open up Paul’s team to allow them to collaborate with industry on commercial projects, in much the same way as USC became a hub for industry engagement. While it is too early to reveal the details of any of these first new film production projects, we can get a glimpse of what the Google team might be involved in by looking at their incredible research output. Debevec’s team is not reproducing what is already at USC, since Light Stage’s Polarized Spherical Gradient Facial Scanning is still very much in demand and working, but they have built a new Light Stage X4 (nicknamed Solaris) to allow for new research beyond what any previous Light Stage has been able to do. The team has also benefited greatly by joining forces with another Google research team, headed by Dr. Shahram Izadi, a Director at Google within the AR/VR division. Prior to Google, Izadi was CTO and co-founder of perceptiveIO, a Bay-Area startup specializing in real-time computer vision and machine learning techniques for AR/VR. His company was acquired by Alphabet/Google in 2017. Izadi’s team are experts in volumetric capture and have done groundbreaking research at both perceptiveIO and Microsoft prior to that. Additionally, both teams have enjoyed the massive, if not almost incomprehensible, cloud computing power of Google’s internal resources.

As we first reported in February of last year, Light Stage X4 is nicknamed Solaris as it accurately simulates natural lighting environments, including the Sun. Since then the true innovations in this new Light Stage have been revealed. “We tried to advance it in every way that we could think of,” remarked Debevec. The Light Stage was not specifically built with the purpose of offering a service, but now that it is built, the team sees industry collaboration as a way to engage on some of the most complex problems in computer graphics, simulation, and image sampling.

Light Stage X4: Solaris

A good way to grasp what the Google team can now offer to the production community is to examine the differences and advances in Light Stage X4 over the previous iterations.

a) The fourth dimension: Time

For many people, the Light Stage produces the finest and most detailed scans of human faces, but it seemed that these scans were primarily super high-resolution snapshots. In other words, the Light Stage could produce pore-level detail, but primarily of still (FACS) facial poses. This is partially true. Before Debevec and the team left USC full time, they were working on some time-based approaches, but in an effort to get the greatest detail the stages relied on still cameras, and thus on the highly detailed output of the system’s Polarized Spherical Gradient Facial Scanning. (For more about the USC ICT process, check out our fxguide story). “What’s been great about going to Google is that we designed our new Light Stage to be big enough to scan a whole body, yet small enough that we could still zoom in on a face and do a good facial scan – but all of it was designed to be for time-based captures.”

The new Solaris provides 4D reconstruction and the most impressive technical output so far has been the recent Relightables project. First shown at SIGGRAPH Asia last year to a packed audience, Relightables allows volumetric performance capture of humans with realistic relighting. In many ways, this is a return to one of the original functions of earlier Light Stages as a device that could relight an actor to the lighting of any HDR sampled location. By comparison, the Relightables project goes vastly further in that it allows full-body, moving volumetric capture that can be blended into traditional CG pipelines. It uses recent advances in deep learning to achieve high-quality models that can be relighted in arbitrary environments.

“We are able to get, through a combination of time multiplex lighting and some re-engineered active depth sensors, scans of human bodies that are actually pretty competitive with what you can get from a standard photogrammetry system because we get that surface normal information and displacement maps that give you good per-pixel detail,” explains Debevec. But a series of traditional photogrammetry reconstructions would produce an unrelated set of models with no correlation between points over time. In other words, they would lack frame to frame correlation and would flicker, since there would be a lack of consistency. The Relightables project solves this.

The subjects are recorded inside the augmented Light Stage X4, which is outfitted with 331 new custom-built LED lights, an array of high-resolution cameras, and a set of custom high-resolution depth sensors. Using special colour gradient lighting, the system effectively films at 60 fps and outputs detailed, consistent 3D relightable models at 30 fps that are temporally stable. But to make the system actor friendly, the actual lighting solution is slightly more clever. “We do a trick where the lighting actually changes between the two gradients at 180Hz, so fast you don’t notice,” explains Debevec. “The cameras run at 60Hz with short shutters capturing every third frame. Thus, they still see the two patterns alternating, and actors see a stable flat lighting condition with no flicker.”
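The timing trick Debevec describes can be checked with a few lines of arithmetic. The sketch below is only an illustration, not the stage's control software. It assumes the lights strictly alternate between gradient A and gradient B on every 180 Hz tick and that each camera exposes on every third tick. All function and constant names are hypothetical.

```python
LIGHT_HZ = 180   # lighting switch rate quoted by Debevec
CAMERA_HZ = 60   # camera frame rate quoted by Debevec


def pattern_at_tick(tick):
    """Assumed strict alternation: even ticks show gradient A, odd ticks gradient B."""
    return "A" if tick % 2 == 0 else "B"


def camera_patterns(num_camera_frames):
    """Patterns seen by a camera that exposes on every third lighting tick."""
    step = LIGHT_HZ // CAMERA_HZ  # 3 lighting ticks per camera frame
    return [pattern_at_tick(i * step) for i in range(num_camera_frames)]


def output_fps():
    """Each relightable output frame consumes one A/B pair of camera frames."""
    return CAMERA_HZ // 2


print(camera_patterns(6))  # ['A', 'B', 'A', 'B', 'A', 'B']
print(output_fps())        # 30
```

Because the step of three ticks is odd, consecutive camera frames land on opposite gradients. With an even step, the camera would see the same pattern on every frame.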

The system is not only remarkable for the density and thus the detail of these models, but the software also has innovative algorithms that provide sensible and stable geometry, even when the actor is juggling or doing complex hand movements. Many volumetric systems can capture whole convex shapes but struggle to separate a ball from a hand as the juggler transitions from ball-in-hand to ball-just-off-the-hand. These visual tears and errors are normally very noticeable around hand movements and where clothes lift slightly from the body, say around the neck. The Relightables project not only solves these examples but also handles hair and other notoriously difficult facial aspects. Debevec does not claim that hair scanning is ‘solved’ in the Solaris, but it works well for regular haircuts and most non-flowing shorter hair.

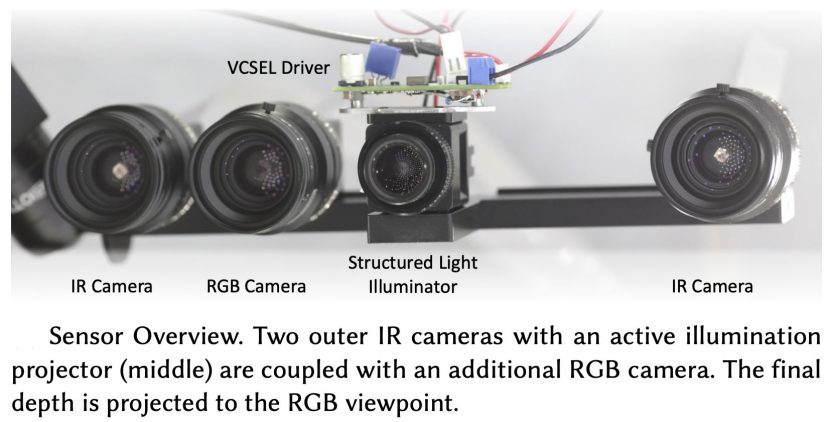

Izadi’s team’s advances in volumetric processing and Debevec’s team’s new Light Stage technology generate a lot of data. The Light Stage X4 has multiple custom depth sensors that can capture high-resolution (4112 × 3008) depth maps at 60 Hz. The Solaris has 90 high-resolution 12.4 MP reconstruction cameras. The capture cameras are a combination of 32 infrared (IR) cameras, which use active IR structured light illumination, and 58 RGB cameras. The IR sensors provide accurate and reliable 3D measurements, while the RGB cameras capture high-quality geometry normal maps and textures. The cameras record raw video under two different interleaved visible lighting conditions of spherical gradient illumination. A clip of 600 frames, or 10 seconds, generates roughly 650 GB of data, which represents a whopping 80 gigapixels per second (!). This being Google, the data is then uploaded to the cloud, allowing a capture session in the morning to be processed and ready for use in the afternoon if needed. Amazingly, Google needed to upgrade its own internet connection to its Playa Vista offices to handle the vast data streams.
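To get a feel for these data volumes, the camera figures can be multiplied out. This is a back-of-the-envelope sketch, not the team's accounting. It counts only the 90 reconstruction cameras at the stated resolution and frame rate, and it assumes roughly one byte of raw data per pixel, which the article does not state.

```python
WIDTH, HEIGHT = 4112, 3008  # sensor resolution quoted in the article
NUM_CAMERAS = 90            # 58 RGB + 32 IR reconstruction cameras
FRAME_RATE_HZ = 60
CLIP_SECONDS = 10
BYTES_PER_PIXEL = 1.0       # assumption: about 8 bits of raw data per pixel


def pixels_per_second():
    """Total pixel throughput of all reconstruction cameras combined."""
    return WIDTH * HEIGHT * NUM_CAMERAS * FRAME_RATE_HZ


def clip_gigabytes():
    """Approximate raw size of one clip under the bytes-per-pixel assumption."""
    return pixels_per_second() * CLIP_SECONDS * BYTES_PER_PIXEL / 1e9


print(f"{pixels_per_second() / 1e9:.1f} gigapixels per second")
print(f"{clip_gigabytes():.0f} GB for a {CLIP_SECONDS}-second clip")
```

Under these assumptions the clip size lands in the same range as the roughly 650 GB quoted, while the pixel rate comes out somewhat below 80 gigapixels per second. The quoted figure may include data this sketch does not model.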

Given the complexity of the setup, the process does not rely on a green screen to perform the matte generation to isolate the actor and to guide the reconstruction, but rather they employ a deep learning based segmentation to retrieve precise edges, which then become the bounding polygons of the model.

b) Facial scanning vs full-body capture.

The Relightables project captured an entire full-body scan. While this is more than the typical Light Stage facial scan, Light Stage X4 is not the first or largest Light Stage to attempt this. Light Stage 6 was a vastly bigger stage and the first Light Stage to achieve full-body scanning.

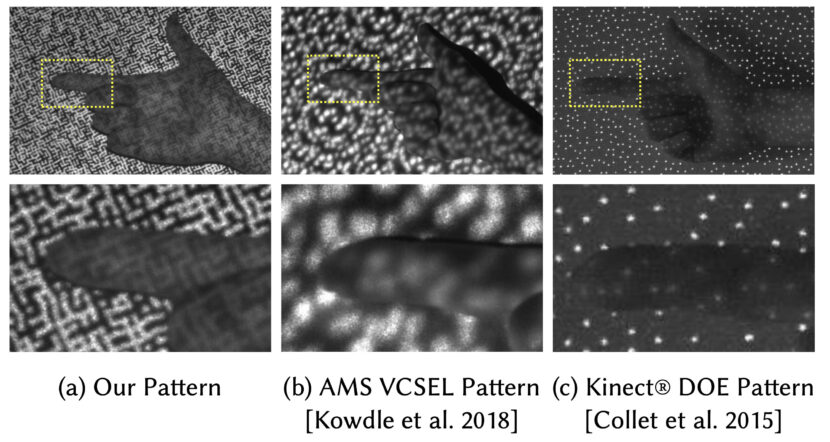

One of the ways the team achieved the fidelity and accuracy they did on the Relightables project is down to the pattern that is projected during part of a capture. It is more dense and detailed than previous solutions, the most obvious prior technology being the pattern from the popular Microsoft Kinect Sensor.

As can be seen above, the pattern is dense and complex. “It’s basically kind of what the laser projection that a Microsoft Kinect would put out, but 10 times brighter, 10 times higher resolution and more unique in pattern”, Debevec explains.

c) Light Stage X4 spectral sensing and lighting.

Near the end of their time at USC ICT, the team was exploring full spectral capture to feed the new wave of spectral renderers. The Solaris has seven LED colours that can be used to produce controlled spectral illumination. In addition to Red, Green and Blue, there is a Royal Blue (often used with Blue), Lime, Amber, and White.

The 90 cameras in the rig are 12.4 MP resolution cameras. Both the RGB and IR cameras are Ximea scientific cameras (XimeaMX124) that use the Sony IMX253 sensor, which is a 4112 × 3008 resolution CMOS chip but importantly, built with a global shutter. For the surface reconstruction pipeline process, the 58 colour cameras and 32 IR cameras produce 90 depth maps from multi-view (active stereo). The multi-view algorithm has semantic segmentation which produces a tight point cloud that allows a Poisson geometric reconstruction.

One might wonder, ‘why do the cameras run at 60 Hz when modern digital cameras can shoot at much higher frame rates?’ To capture the photometric surface normal orientations, two colour gradient maps are needed. The two gradients are reversed in all three dimensions and sum to normal, even white light. The combined white-light frame provides the RGB texture maps, and the difference between the two paired gradient images provides the surface normal. Thus, for each output frame, two images are needed. USC ICT did experiments with much faster high-speed cameras, but the faster one shoots, the noisier the images can become, as less and less light reaches the sensor during each frame. A 1/500th of a second shutter allows a lot less time for light to get to the sensor than a 1/60th of a second shutter, so either depth of field is compromised or one must massively increase the amount of light falling on the subject, which can be extremely uncomfortable for the actor. But that is only part of the answer.
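The sum-and-difference idea can be illustrated for a single pixel. The sketch below is not the Relightables pipeline. It assumes an ideal diffuse surface, assumes the red, green and blue channels encode the x, y and z gradient directions, and uses a hypothetical forward model (`simulate_gradient_pair`) purely to generate test input. The scale factor `k` is also an assumption, and it is removed when the result is normalised.

```python
import math


def simulate_gradient_pair(normal, albedo, k=2.0 / 3.0):
    """Hypothetical forward model for an ideal diffuse surface.

    Channel i of gradient A brightens along axis i; gradient B is its complement.
    """
    grad_a = tuple(a * (1.0 + k * n) / 2.0 for a, n in zip(albedo, normal))
    grad_b = tuple(a * (1.0 - k * n) / 2.0 for a, n in zip(albedo, normal))
    return grad_a, grad_b


def texture_and_normal(grad_a, grad_b):
    """Sum of the pair gives the evenly lit texture; the normalised
    difference-over-sum ratio gives the surface normal direction."""
    texture = tuple(a + b for a, b in zip(grad_a, grad_b))
    ratio = [(a - b) / t if t > 0 else 0.0
             for a, b, t in zip(grad_a, grad_b, texture)]
    length = math.sqrt(sum(r * r for r in ratio))
    if length == 0:
        return texture, (0.0, 0.0, 0.0)
    return texture, tuple(r / length for r in ratio)


grad_a, grad_b = simulate_gradient_pair(normal=(0.6, 0.0, 0.8),
                                        albedo=(0.5, 0.4, 0.3))
texture, normal = texture_and_normal(grad_a, grad_b)
print(texture)  # approximately the albedo
print(normal)   # approximately the input normal
```

Dividing the difference by the sum cancels the surface colour, which is why a single pair of images can yield both a texture and a normal for every pixel.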

While the team could use moderately higher speed cameras, “it is just more practical to use cameras that do 60 frames per second and get the information you need to relight from that rather than use high-speed cameras,” Debevec explains, pointing out that vast amounts of data are already being generated. “The problem isn’t not having enough data, even at Google, – I mean there’s almost too much freaking data! We already have a lot of data. We definitely don’t need 10 times more data.” Instead of more data in the area of facial scanning, the team relied on innovative Machine Learning (ML). They started by building a training data set of faces under every direction that light comes from, using their OLAT (One Light At a Time) data sets (previously known as 4D reflectance fields). They then added to the training data, for each one of those faces, what that person looks like under the standard two color gradient lighting conditions. This is the most standard lighting pattern the Light Stage uses: it lights the actor first with one color gradient, then with the second color gradient, and then it spins the lights down from the top to the bottom in a spiral. Debevec explains that they then trained the neural network with “what the two gradients looked like and what the person’s whole reflectance field looks like, – with every single light direction, just soloed one at a time”. He goes on to explain that having completed the training stage, they can “then send it a completely new face with just the color gradients and ask it ‘what would that person’s OLAT look like?’ As it’s a neural network, it makes ‘something up’ and that output is actually pretty good.” This allows the team to do image-based lighting from an HDR on all the individual lighting conditions and the results are extremely plausible. “It actually nicely matches ground truth to that person’s actual OLAT if you test it on data it hasn’t seen,” Debevec comments.

With this additional ML, the team needs only the two lighting conditions and, with some optical flow adjustments, the process provides a highly accurate 30 frames-per-second relighting output.
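Image-based lighting from OLAT data rests on the linearity of light: a pixel lit by a whole environment is the sum of that pixel under each individual light, scaled by how bright the environment is in that light's direction. The sketch below shows that weighted sum for one pixel. It is a minimal illustration with hypothetical names, and it assumes the HDR environment has already been sampled into one RGB intensity per light, a step not shown here.

```python
def relight_pixel(olat_pixels, light_colors):
    """Relight one pixel as a weighted sum of its OLAT samples.

    olat_pixels[i]  : (r, g, b) of the pixel lit only by light i
    light_colors[i] : (r, g, b) intensity the target environment assigns to light i
    """
    if len(olat_pixels) != len(light_colors):
        raise ValueError("need exactly one environment colour per OLAT sample")
    out = [0.0, 0.0, 0.0]
    for pixel, color in zip(olat_pixels, light_colors):
        for c in range(3):
            out[c] += pixel[c] * color[c]
    return tuple(out)


# Three made-up lights: full white, half-strength white, and a strong blue.
olat = [(0.2, 0.1, 0.1), (0.4, 0.3, 0.2), (0.1, 0.1, 0.3)]
env = [(1.0, 1.0, 1.0), (0.5, 0.5, 0.5), (0.0, 0.0, 2.0)]
print(relight_pixel(olat, env))
```

In the workflow described above, the OLAT samples for a new face would come from the neural network's prediction rather than from a real one-light-at-a-time capture.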

Graham Fyffe, lead Senior Software Engineer at Google on much of the full-body relightability features and storage infrastructure, explains that “surprisingly when we extended the 2009 color gradient work to multiple views, the math got even simpler because the view-dependent effects kind of average out.” Fyffe and the team had also been using ML in their Deep Reflectance Fields research, which specifically looked at faces and introduced the first system that could deal with dynamic performances with 4D reflectance fields, enabling photo-realistic relighting of moving faces. The core of this 2019 SIGGRAPH paper consisted of a Deep Neural Network that could predict full 4D reflectance fields from two images captured under spherical gradient illumination. Commenting on this related work, Fyffe said, “it was nice to use machine learning to expand upon the 2009 color gradient work, which previously only estimated a local shading model. With ML we are able to leverage spatial context to infer much more.”

Virtual Production

The new Light Stage has interesting applications in virtual production, allowing an actor to be tested giving a performance in any location, under any suggested lighting test. Their wardrobe and costume can be evaluated as the cloth is scanned accurately and reproduced faithfully both in form and colour.

When reviewing the Google team’s research outputs, one must factor in that these are automatically generated composites and do not include any standard compositing techniques such as edge blending, light wrap, or light halation. They also include no participating media to add fog or depth cueing. While the SIGGRAPH test shots are impressive, they are unenhanced and unadorned, to faithfully present honest academic research. Of course, the film industry projects currently underway with the Google team are free and encouraged to use all these additional VFX techniques to ‘sell’ their shots. The Google test images are directly generated from the data and produced as straight V-Ray renders of the volumetrically captured individual in a traditional 3D generated environment.

As we have documented with The Mandalorian, LED lighting of actors is a hot topic in Hollywood right now, with major advances on many fronts (see our story here). That is only intensifying interest as Google opens up to work more collaboratively and commercially with teams, notwithstanding the current global crisis, although Debevec jokes that if the COVID-19 lockdown continues he might need to build a Light Stage in his kitchen. In reality, the Google Playa Vista Solaris Light Stage is fully able to run without anyone present: the team left a dummy in the hot seat when they moved to working from their homes, and they can remotely turn on the Solaris Light Stage, take test shots, and shut it down again.

Future Directions

There are certainly more opportunities to use ML with this research. Debevec speculates that a similar technique could work for whole bodies when sampled in the Light Stage X4. “I would think that basic technique would probably apply to whole bodies pretty well,” he comments. There are other pieces of engineering that the Google team are keen to explore, and there is a really big use case for the Relightables approach being produced as relatively lightweight assets that could then be played back on mobile hardware. “I think there is interesting work to figure out how you can create a fully relightable neurally-related version of someone that you could then use in practice?” As ML is often slow to learn but fast when actually used, such an approach would be widely applicable to the use cases that Google is interested in. “There’s a bit more work to be done there. I don’t think it’s impossible, but that’s kind of where the boundary case would be.”

Rendering synthetic objects into real scenes is a ‘coming home’ intellectually for Debevec, whose original 1998 paper, written when he was still at UC Berkeley, sought to bridge traditional and image-based graphics with global illumination and high dynamic range photography. In the 22 years that have followed, Debevec has seen this work move from simple still objects to fully free-form volumetric capture of human movement. This is combined with the team’s Deep Light (2019) research into estimating plausible high dynamic range (HDR), omnidirectional illumination from an unconstrained, normal low dynamic range (LDR) picture from a mobile phone camera, culminating in these incredibly complex captured performances being played back in realtime as an AR experience on an Android phone.

Google has shown a strong commitment to the team’s work, both in the Light Stage and in their related work in Light Fields (see our story here). As a result, the team has been slowly growing and building more internal structure.

The Relightables project is full circle for Debevec. At the very start of his career, he was deeply interested in the notion of controlled relighting. The only reason that Paul Debevec started down the entire path of Light Stage scanning captures was a lack of money to buy lights. “At Berkeley, I couldn’t afford that many lights,” he amusingly recalls. Unable to build a stage full of lights, he came up with the idea of swinging a light rig around a person and combining the images together later. “I’d run out of funding at Berkeley, but I really wanted to do this. So I paid for the first ‘light stage’ myself but I could only afford to buy one light to go around people – and then I had to calculate the real lighting as a post-production process.” Only when he had more funding was Debevec able to build the classic Light Stage sphere with lights fully surrounding an actor that would be at the core of his research for the next 20 years. “I think the whole fact that the Light Stage became known best as a facial scanning system is an accident on top of an accident, and yet that is what has ended up getting used directly in the most number of feature films”.