In February, we ran a story on real-time face-swapping in which we recounted how Pinscreen, at the World Economic Forum (WEF) in Davos, Switzerland, had shown a system that would immediately perform a face swap on anyone who walked up to a computer screen. For example, the demo allowed anyone, male or female, to instantly look like actor Will Smith. Last month, Pinscreen revealed for the first time that a new, more advanced version of this real-time technology is moving into the UE4 game engine. The new AI approach makes a fully CG digital character look dramatically more realistic, which has significant ramifications for both games and virtual production.

The technology at the core of the original Davos demo was paGAN RT. That real-time version effectively dispensed with the need for training data of the source face and simply produced the target output face. While it ran in real time, it did not fully match the quality of paGAN HD, Pinscreen’s advanced non-real-time version. The real-time demonstration attracted a lot of attention because it needed no time to gather training data.

The new paGAN 2 hybrid process is different. It runs in real time, but it composites a newly inferred synthetic face into a CG scene, replacing not a face seen through a webcam but the face of a digital character in the game engine. This is important on many levels. Firstly, unlike in the Davos demo, the face is known and can be used for training. This directly raises the output quality from that of paGAN RT to a level very close to the full paGAN HD, while still running in real time.

The second enormous difference is that, unlike a system that renders on top of a video stream, paGAN 2 does its AI face-swapping ‘in-engine’, so it is aware of the 3D space. It knows exactly where the face is and, more importantly, it no longer gets confused by hands or other objects occluding the face. In conventional AI or ‘deepfake’-style face swapping, facial occlusion causes huge problems. Imagine a singer with a microphone. In the video version, the microphone might cover the singer’s mouth, upsetting the face-swapping technology. In this UE4 version, the mic is a 3D object, and the face swap happens to the face behind it. Because the mic and the singer’s hands are all actual 3D objects, paGAN is aware of the spatial position of the face relative to them.
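To make the occlusion point concrete, here is a minimal sketch of an ordinary per-pixel depth test, the mechanism a rasterizing engine uses to decide which surface is visible. It is not Pinscreen’s code: the helper name `composite_face`, the tiny one-row “images”, the convention that smaller depth means closer to the camera, and the use of `None` for pixels the face does not cover are all illustrative assumptions. A 2D video face swapper has no depth value for the microphone, which is why an occluder confuses it; an engine does.

```python
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]


def composite_face(
    scene_rgb: List[List[RGB]],
    scene_depth: List[List[float]],
    face_rgb: List[List[Optional[RGB]]],
    face_depth: List[List[Optional[float]]],
) -> List[List[RGB]]:
    """Hypothetical helper: overlay a synthesized face using a per-pixel depth test.

    A face pixel is written only where the face covers that pixel AND lies
    nearer to the camera than the geometry already in the depth buffer
    (e.g. a 3D microphone or a hand). Assumed convention: smaller depth = closer.
    """
    out: List[List[RGB]] = []
    for y, row in enumerate(scene_rgb):
        out_row: List[RGB] = []
        for x, scene_pixel in enumerate(row):
            fc = face_rgb[y][x]
            fd = face_depth[y][x]
            if fc is not None and fd is not None and fd < scene_depth[y][x]:
                out_row.append(fc)  # face is in front: it is visible here
            else:
                out_row.append(scene_pixel)  # occluder or background stays
        out.append(out_row)
    return out


if __name__ == "__main__":
    SKIN = (224, 172, 150)
    MIC = (40, 40, 40)
    WALL = (200, 200, 200)
    # One row of three pixels: wall, microphone in front of the mouth, wall.
    scene_rgb = [[WALL, MIC, WALL]]
    scene_depth = [[10.0, 1.0, 10.0]]  # mic 1 unit from camera, wall 10 units
    # The synthesized face sits 2 units away and covers all three pixels.
    face_rgb = [[SKIN, SKIN, SKIN]]
    face_depth = [[2.0, 2.0, 2.0]]
    print(composite_face(scene_rgb, scene_depth, face_rgb, face_depth))
    # -> [[(224, 172, 150), (40, 40, 40), (224, 172, 150)]]
```

The microphone pixel survives because it is nearer than the face, exactly the case that a face swap applied on top of a flat video frame cannot resolve.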

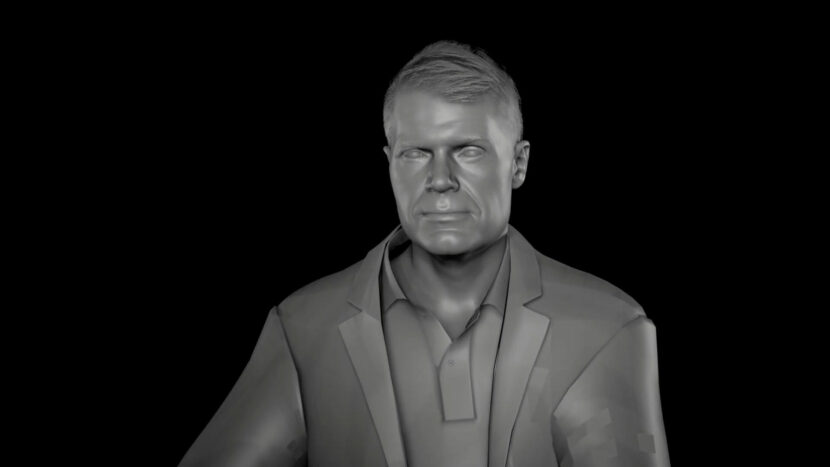

In the demo above with fxguide’s Mike Seymour, the digital character in UE4 is driven by a Cubic Motion Persona head rig and an Xsens suit that Mike wears in his lab at Sydney Uni. This feeds 3Lateral’s Rig Logic inside UE 4.24. Pinscreen’s software then combines this data with its paGAN 2 processes to produce the final result. The microphone in the foreground is a UE4 asset (by Genoris2), and the room is a still JPEG loaded onto a card inside UE4. With the paGAN 2 process, the character runs in real time with the correct blending, and the training is done systematically rather than as in traditional deepfake software.

It is currently possible to run non-real-time face-swapping software such as DeepFaceLab or Faceswap_GAN after a CG character has been rendered; in this respect, the CG character is no different from a pre-recorded video of a real person. The new paGAN 2 system, by contrast, allows one to talk and interact with the realistic digital character, lets the camera move or be controlled by the user, and brings a host of other benefits from working ‘in-engine’. As a company, Pinscreen is focused on virtual agents, digital assistants, and increasingly popular digital influencers. With this new paGAN 2 innovation, these characters can look real and talk directly with their audiences.

The paGAN 2 process does not rely solely on Generative Adversarial Networks (GANs), as the name might imply. In fact, most public open-source face-swapping approaches, such as DeepFakes (Faceswap) and DeepFaceLab, rely very heavily on autoencoders and often do not require a GAN at all. To make a more generalized, more robust, and temporally stable solution, Pinscreen performs a number of additional steps, which include the intelligent use of GANs at multiple stages in the process. This increases the quality of the results and makes the system able to handle a wider range of lighting conditions, expressions, subjects, and circumstances. Pinscreen lead artist Anda Deng comments: ‘While the neural face rendering does an amazing job in ensuring a realistic face generation, my job is to make sure that the rig and shaders of the entire avatar match the realism of real Mike Seymour. This requires careful integration of artistic and scanned assets with the Unreal Engine.’
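For readers unfamiliar with the autoencoder approach mentioned above, the sketch below shows only the structural trick commonly described for the original open-source deepfake code and tools derived from it: one encoder shared by both identities, one decoder per identity, and a swap performed by decoding person A’s latent code with person B’s decoder. The “networks” here are toy stand-in functions on two-number “faces” (an expression value and an identity value), and every name is hypothetical. It illustrates the data flow, not training, and it is not how paGAN 2 works.

```python
from dataclasses import dataclass
from typing import Callable, List

Vector = List[float]


@dataclass
class SharedEncoderSwapper:
    """Toy model of the shared-encoder / two-decoder face-swap layout."""

    encoder: Callable[[Vector], Vector]    # shared by both identities
    decoder_a: Callable[[Vector], Vector]  # would be trained only on person A
    decoder_b: Callable[[Vector], Vector]  # would be trained only on person B

    def reconstruct_a(self, face_a: Vector) -> Vector:
        # Training-style path for A: encode, then decode with A's own decoder.
        return self.decoder_a(self.encoder(face_a))

    def swap_a_to_b(self, face_a: Vector) -> Vector:
        # Swap: A's latent code (pose/expression) rendered by B's decoder.
        return self.decoder_b(self.encoder(face_a))


# Hypothetical stand-ins for trained networks. A toy "face" is
# [expression value, identity value].
def toy_encoder(face: Vector) -> Vector:
    return [face[0]]  # keep the expression, discard the identity


def toy_decoder_a(code: Vector) -> Vector:
    return [code[0], 1.0]  # person A's identity value


def toy_decoder_b(code: Vector) -> Vector:
    return [code[0], 9.0]  # person B's identity value


if __name__ == "__main__":
    model = SharedEncoderSwapper(toy_encoder, toy_decoder_a, toy_decoder_b)
    smile_a = [0.8, 1.0]
    print(model.reconstruct_a(smile_a))  # -> [0.8, 1.0]
    print(model.swap_a_to_b(smile_a))    # -> [0.8, 9.0]
```

Because the encoder is shared, it is pushed to capture what both people have in common (pose and expression), while each decoder supplies an identity; no adversarial network is involved anywhere in this layout.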

One of the key innovations of the Davos demo was training an ML system to provide a ‘many to one’ facial solution. This generalization allowed the system to map anyone who walked up to the demo ‘one to one’ onto the machine’s already-trained internal model, which had been trained to work with a variety of different celebrities. By dropping this generalization, i.e. the need to work instantly on whoever walks up, the paGAN 2 system improves performance dramatically. Other approaches also have to spend valuable time solving for head position and orientation, but a game engine already knows all of this information, far more accurately than a video-processing approach can estimate it.

paGAN 2 can process real-time ray-traced virtual scenes, so the company is very confident that as Epic Games migrates from UE4 to an increasingly ray-traced UE5, the paGAN technology will migrate with it and the same approaches will work. As the face is applied not as a post-effect but during the process of producing the final scene, any other UE4 post-processes, such as lens flares or camera aberrations, would also apply to the paGAN 2 face. The process runs at whatever frame rate the GPU or system allows. Nothing stops the team from developing an additional offline version for a renderer such as V-Ray for UE4, but the company’s main focus is on real-time performance because of the large number of use cases it enables. The core technology is render-engine agnostic; for example, Pinscreen’s internal test rig runs on Linux.

The process currently works in real time, but with the load split over two applications. Once it is fully integrated into a single application, it will allow for more dynamic applications, such as in virtual production. The process benefits from a high-end card such as an NVIDIA RTX 2080 or Titan RTX, and it has not been designed or implemented for lower-cost or mobile devices. It runs in CUDA on NVIDIA cards, which rules out implementing it on an Apple macOS computer for the foreseeable future. This is why, for the digital human agents it is also working on, Pinscreen has decided to focus on cloud rendering of its virtual assistants, which will allow them to be deployed on mobile devices and in web browsers.

Full body real-time Pinscreen Intelligent Agent Prototype in Unreal Engine

To make sure that the system respects all nationalities and ethnic backgrounds, Pinscreen has invested heavily in data sets covering as wide an array of facial types as possible. Data-driven solutions can be biased against certain ethnic groups purely because of biases in their training data. Pinscreen has an active worldwide client base and is profitable, in part due to its international focus and the team’s strong commitment to balancing gender and ethnic backgrounds and supporting minorities in its training data.

While the team is very focused on real time, a non-real-time version could be built. It is also possible to consider implementing an export function from, say, UE4 for offline re-rendering in a virtual production studio pipeline, but this would take the form of a data export plus an export of the deep learning solution, not a single .fbx or USD-style export. This is because the paGAN 2 solution does not bake into the UE4 textures but rather builds on them. While the team is currently working only on the face, similar solutions are being explored for hair, and the company roadmap includes a similar solution for full bodies. Bodies pose extra problems, as artists would want not only the human form but also an inferred synthesis of the clothes. Pinscreen has a team working on clothes and fashion, but the paGAN 2-style approach used so effectively on faces would not translate directly to full, clothed bodies in motion.

Full Disclosure:

The author, Dr. Mike Seymour, is currently doing joint unpaid academic research with Pinscreen.