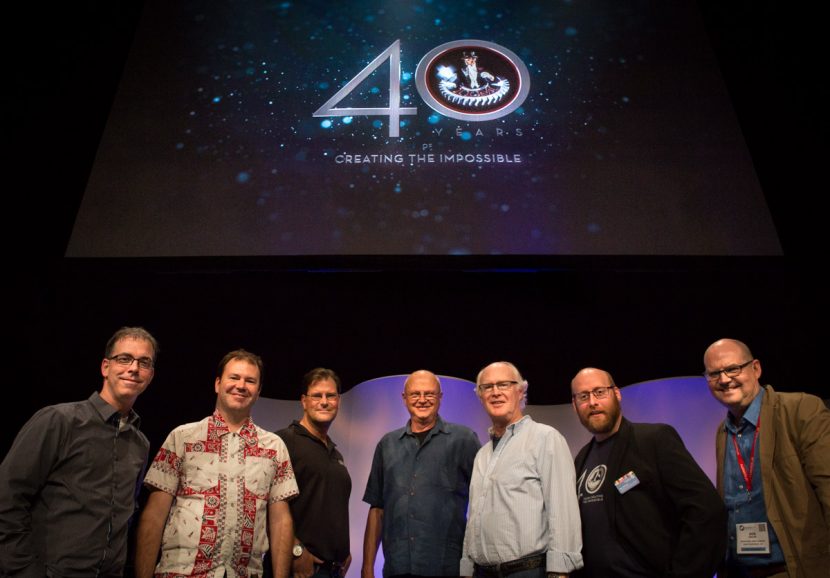

It was another packed day at SIGGRAPH: ILM gave an incredible 40th anniversary presentation, MPC and DNeg discussed some feature film tools, and several facial capture and animation talks and papers were presented. Here’s our rundown of the day.

ILM’s 40th anniversary

- Scott Farrar

- Dennis Muren

- Glen McIntosh

- Tim Alexander

- Rob Bredow

Looking back on the start of ILM, the first Star Wars film and what it meant to the industry as a whole, Dennis Muren gave credit to John Dykstra for helping to change the whole nature of the industry. “There was not really permanent work in LA before that,” he recalled. (Note: Star Wars was made in LA before there was an actual ILM company in Northern California.)

Scott Farrar spoke of “the bolt of lightning” that was motion control, which allowed multiple passes, while Muren chimed in that “there was this crazy equipment that I knew nothing about, but that is why I wanted to work there!” as the panel discussed computer controlled motors and stepper motor motion control. “It gave Star Wars a weird look,” says Muren. “I would not say it is real but it was confident.”

Muren discussed joining ILM as it was being re-formed “for two more years to do the film Empire – and I am still there,” he said. Interestingly, he went on to say: “On Empire there was an idea of doing robotic walkers and I thought it was impossible – based on how long it took to get the motion control cameras working – we would have still been doing it! Empire was the hardest show ever for me.”

In one surprising reveal, the panel said there were actually some 30-odd 70mm prints with 17 or 18 temp shots in them. “The studio promised to pull those prints and replace them,” noted Muren. “I don’t know if they did,” he added, joking that it was one of the few times ILM did not, technically, make its delivery deadline.

Muren, of course, also worked on Raiders of the Lost Ark, which used cloud tanks for the final sequence of the film, when the Ark is opened and out fly angelic ghosts that soon turn demonic. “They had every trick in the book, the ghost (pointing to an iconic image from the film) – that is ‘Gretel the ghost’ – that’s one of the receptionists at ILM.”

Farrar joined in 1981 for Star Trek 2. According to Farrar, the industry was changing hugely at that time, since very few people understood visual effects and they were not something that naturally appeared in a film. For ILM to start to grow and for an industry to form, they needed scripts. “If you didn’t have George Lucas or some of his friends like Steven Spielberg to understand what could be done, and then for the writers to write the scripts,” he explained, “then we couldn’t do our work.”

In the pre-digital era an important milestone came on the film Dragonslayer, where Phil Tippett enhanced the animation of the creatures with go-motion, using motors to move the rod puppets while the shutter was open and thus introducing motion blur. Muren commented that even he and others were not bothered by seeing traditional stop frame animation, but audiences did not like it. Once Tippett introduced go-motion, several of the panelists commented on just how much more believable the effects were.

When Return of the Jedi came around, Muren was tasked with making, amongst other sequences, the speeder bike chase: 103 shots in three minutes. Ultimately that was made possible with Steadicam plates shot by Garrett Brown, which led into a discussion of the importance of real footage whenever possible. “I really believe in – if you [can shoot] it for real you should,” said Muren. He explained how key it was to film for real when one could, and Ben Snow remarked that even today a supervisor will email around inside ILM asking other sups if anyone has examples of a shot filmed, say, on green screen outside vs. in a studio, to show a director or producer just how much better natural light is than studio lighting. The whole panel agreed that ILM always pushes for real, even though this makes it harder to revise later and perhaps add extra shots.

For Back to the Future II, Farrar says “whatever idea we had he (director Robert Zemeckis) would come up with a way to make it more difficult.” Farrar also gave credit to the paint and roto team at ILM: making traveling mattes for the optical printer was key to the multiple Michael J. Fox characters, especially in the second film. There was also a fun discussion about the hoverboard, with Ben Snow joking about the internet’s obsession with it. The hoverboard shots relied on a huge range of solutions. For example, Fox would jump on the hoverboard on wires and he had actual magnets in his shoes to stay connected: “it would only be good for 48 frames but it sold the shot.” In other cases, the vast SIGGRAPH audience was shown funny shots in which Fox’s shoes were drilled and screwed onto the hoverboard and Fox was then carried onto set by a grip.

On Roger Rabbit, Farrar told the story of doing a test of Roger going under a light and bumping into a trash can. “That was the whole idea of Roger Rabbit, he would cast shadows and be lit by the light.” The test has never been seen publicly, but this one shot sold the film and showed it could be done. It was the first time a cartoon feature had had such complex shading and lighting, and everyone agreed how remarkable an achievement it was, as this was still the pre-digital era.

Star Wars had some vector graphics. “We did not know much about it,” said Muren, “except George was setting it up – and he got Ed Catmull – and a bunch of his friends,” referring to the birth of Pixar. “I got especially quite impatient to see if I could use any of this technology.”

Farrar discussed an early Lucasfilm Computer Division shot for the Genesis sequence in Wrath of Khan. While the sequence was remarkable and fully digital, “to film it – it had to be filmed off a monitor on a VistaVision camera. There was no film recorder at the time.”

Muren’s breakthrough in computer graphics was for the stained glass man in Young Sherlock Holmes. “I thought, let’s see if this will ever pay off, ’cause George had put a lot of money into it, and it worked out great. They did 8 shots [and it] took about 6 months.”

Other films that included CG work were Howard the Duck, Willow, and then The Abyss. The Willow morph, for example, was based on a SIGGRAPH paper on a single frame transition from one still to another, and “then all we did was do it at 24 frames a sec,” he joked. ILM did 17 shots on The Abyss, with John Knoll working heavily on it. Says Muren: “The pod was meant to turn into a hand at one stage and reach for a bomb or something and I think we never worked out how to do that in the time we had.” Interestingly, many of the panelists only recently found out that ILM had actually bid against Pixar for the job, Pixar by this stage having become a separate company, and one that even did commercials and made hardware.

After The Abyss, Muren took time off, got a copy of Foley and van Dam (a key computer graphics book), sat in a coffee shop and tried to work it out. “I learned that everything had to be specified – and I thought I can do that – It is not magic – everything has to be specified and I know I can do that.” This gave him the confidence to embrace digital, even if he never actually finished reading the book.

And so followed Terminator 2 which had only 50 shots. “For me,” says Muren, “T2 was the breakthrough film because that is where we got digital compositing working. That is the main thing I did in my year off – work on digital compositing.”

This 40th celebration panel discussed many other moments from ILM’s history. Snow, for example, recalled receiving a fax from Spielberg about Twister which said, “Well, I am really impressed you can track the twister into the plate, but it is like a man who dyes his hair – there is something not quite right there!” Laughing, Snow remarked, “I still have the fax!”

Another was the huge task required for The Phantom Menace: 2,200 shots in two years. “George always wanted effects to be a white collar job – but it’s important to get dirty,” said Muren, as the panel discussed how Lucas liked stage work and green screen because it gave him flexibility in his film making. But Episode One, while huge, was not the only film at ILM, Snow pointed out: the studio was working on Pearl Harbor at the same time.

Now, of course, ILM has another batch of Star Wars films on its slate, plus new ventures such as ILMxLAB, its immersive entertainment outlet. Here the studio wants to “experiment to see what storytelling would look like if you had full resolution rendering – running on an iPad – rendered in the cloud – in theory wherever you have Netflix you can have this,” commented Bredow as he showed a scene from a (possible) Star Wars story being played on an iPad but rendered in the cloud. The iPad realtime rendering is remarkable and we will cover this more in our Day 3 coverage and the Game/Film Panel. Bredow discussed how ILM has made some experiences and then ported the work to VR, showing a VR clip set in the same world.

“How do we keep this going for the next 40 years?” asked Ben Snow. “Well, I am looking for people, so go to [email protected],” joked Bredow, who said he was immediately looking for 10 more software engineers…

The panel was very popular, helped by an outstanding set of rare clips and behind-the-scenes footage that a great team had assembled and that Greg Grusby from ILM VJ’d while the supervisors talked. Hours and hours of footage from across the 40 years of ILM delighted the crowd and reminded us all of how pivotal the company has been in the last 40 years of film making.

Capturing the World talks

Panocam on Jupiter Ascending

Panocam consisted of six RED cameras that could be flown around a city to produce a vast background aerial plate. Double Negative (DNeg) created a dynamic, action-packed and photorealistic sequence, combining green-screen stage footage of the ‘rollerblading aerial hero’ with a multi-camera RED array background and complex CG animation.

DNeg’s Stig, the tool normally used for stitching on-set reference material, was adapted for the Panocam RED rig. The output was six views, one from each camera, plus a master camera that can be used in Nuke to window onto the part of the world the composite requires. Without the vast background DNeg would have had to do a single track shot and merge a green screen with a rough match moved background, which does not scale well or respond to.

DNeg had already done something similar on Man of Steel, with a postvis pipeline that would attempt to stitch various plates together and prep them for roto and matchmove. The full Panocam version used on Jupiter Ascending, aligned with LIDAR of the city, requires more work up front but is vastly more flexible in both Maya and Nuke. Combined with the body geo tracks of the green screen actors, it makes sure the actors seem to fly correctly and do not skid or drift during the dramatic action. The foreground was shot on green screen on ALEXA, which meant DNeg had to put some effort into matching the color space of the ALEXA to the RED. This rig has been used on a few shows at DNeg since Jupiter Ascending.

Roundshot Pipeline at MPC for Godzilla

For Godzilla and many upcoming films at MPC, hundreds of panoramic images (Roundshots) were acquired, and a proper pipeline was needed to supply departments with quick previews and automated stitching. Kirk Chantraine, Software Lead at Moving Picture Company (MPC), Vancouver, discussed the tool used on Godzilla. The team is currently working on Batman vs. Superman, Jungle Book, Suicide Squad and many others.

74 images, or more if it is an HDR, make up the spherical environment captured on set. For any film the team on location would shoot 100 to 500 such panos, so a film may have 60,000 images coming back to the studio. These are used by editorial and by vfx for environments, lighting and compositing. MPC looked at the Spheron, but its resolution was not high enough, so they moved to the Roundshot VR Drive. For more info on the gear there is a web site at www.roundshot.com. There is no SDK access, which MPC would like, but with the XML generated by the system the team can produce contact sheets and automate processes after the shoot.
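As a rough sanity check on those numbers, the arithmetic can be sketched in a few lines of Python. The 74 images per pano and the range of 100 to 500 panos per film come from the talk; the `exposures_per_position` parameter is an assumption added here to stand in for HDR bracketing, since the actual bracket count was not given.

```python
# Figure quoted in the talk for one unbracketed spherical pano.
IMAGES_PER_PANO = 74


def total_images(pano_count, exposures_per_position=1):
    """Estimate how many source images a show brings back to the studio.

    exposures_per_position is a hypothetical knob for HDR bracketing:
    1 means unbracketed, 3 would mean three exposures per camera position.
    """
    return pano_count * IMAGES_PER_PANO * exposures_per_position


if __name__ == "__main__":
    for panos in (100, 500):
        print(panos, "unbracketed panos:", total_images(panos), "images")
```

At 500 unbracketed panos this gives 37,000 images, so the 60,000 figure presumably reflects a share of bracketed HDR panos in the mix.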

There are a number of different ways to capture on set. A 50mm lens is currently used, and the team will be moving to a 100mm lens to allow the panos to be used on an 8K film. A typical pano may take 4 mins, but an unbracketed night shot might be 8 mins, and a nighttime HDR can be 20 mins. It is possible to capture just subsets, but a full spherical set is what is normally wanted, in RAW format without baked-in gamma.

Bracketing is not always done, but often is. The XML output allows contact sheets (via Nuke) and confidence data to be generated, and it feeds into a dailies pipeline. Slates are shot at the start with images from a mobile phone, and a Macbeth chart is shot at the end. This is all run by the Envirocam ingest, which converts the final to HDR and OpenEXR in the new ACEScg colorspace pipeline. The result can also be reviewed in RV before the shot moves forward into the asset pipeline. The team would like to extend this to PTGui and some other pano tools in the future.

Blendshapes from commodity RGB-D sensor

This talk demonstrates a near-automatic technique for generating a set of photorealistic blendshapes from facial scans using commodity RGB-D sensors, such as the Microsoft Kinect or Intel RealSense. The method has the potential to expand the number of photoreal, individualized faces that are available for simulation.

The first stage is to detect the face and isolate it from the body. The team uses computer vision image segmentation to isolate an area not dissimilar to a hockey mask. For most of the rest of the process the team focuses on just this hockey mask region, only recombining the face with the head and body at the end. If this region is piped straight into a face reconstruction, the middle of the face is not too bad, but the glancing sides of the head are too noisy and need to be adjusted. The adjusted data is used for mesh generation, which includes some smoothing and noise reduction. Next the mesh needs to be textured. On a single frame the texture data would be somewhat incomplete, with holes and artifacts. One solution would be to average a number of frames, but this produces a face that is soft and loses a lot of the detail needed, especially in the eyes. Instead, ICT takes one frame either side of the key frame plus texture frames from the two ends of an extreme left and right head turn. These 5 frames are then stitched using an algorithm from Hauang Ming et al, Computer Graphics Forum 2009.
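The five-frame selection described above can be sketched as follows. This is only an illustration of the selection rule, not ICT’s code: the function name, the per-frame yaw input, and the convention that negative yaw means a left turn are all assumptions made for the example, and the stitching step itself is not shown.

```python
def pick_texture_frames(yaw_by_frame, key_index):
    """Return the five frame indices used for texturing.

    yaw_by_frame: hypothetical per-frame head yaw in degrees
                  (assumed: negative = turned left, positive = turned right).
    key_index:    index of the key (frontal) frame.
    """
    n = len(yaw_by_frame)
    if not 0 < key_index < n - 1:
        raise ValueError("key frame needs a neighbour on each side")

    # Frames at the two ends of the extreme head turn.
    far_left = min(range(n), key=lambda i: yaw_by_frame[i])
    far_right = max(range(n), key=lambda i: yaw_by_frame[i])

    # One frame either side of the key frame, plus the key frame itself.
    return [key_index - 1, key_index, key_index + 1, far_left, far_right]


if __name__ == "__main__":
    yaws = [0, -5, -40, -10, 0, 2, 1, 20, 45, 10]
    print(pick_texture_frames(yaws, key_index=5))
```

Using a handful of sharp frames rather than an average of many is what keeps detail such as the eyes from being blurred away.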

Graham Fyffe from ICT also presented FlashMob: Near-Instant Capture of High-Resolution Facial Geometry and Reflectance, a near-instant method for acquiring facial geometry, diffuse color, specular intensity, specular exponent, and surface orientation. Six flashes are fired in rapid succession with subsets of 24 DSLR cameras, which are arranged to produce an even distribution of specular highlights. Total capture time is below the 67ms blink reflex. The system is simpler than, say, the ICT Lightstage. As Fyffe joked, this is the ‘faster, cheaper, easier’ solution, and it uses just SLRs and flashes.
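To get a feel for the timing budget, here is a small back-of-the-envelope sketch. The six flashes and the 67ms blink reflex are from the talk; the even spacing between flashes is an assumption for illustration only, as the actual firing schedule was not described, and flash duration is ignored.

```python
BLINK_REFLEX_MS = 67.0  # blink reflex figure quoted in the talk
FLASH_COUNT = 6         # flashes fired in rapid succession


def max_flash_interval_ms(flash_count=FLASH_COUNT, budget_ms=BLINK_REFLEX_MS):
    """Largest even spacing between flash starts (assumed evenly spaced)
    such that the first-to-last span stays within the budget."""
    return budget_ms / (flash_count - 1)


def flash_times_ms(interval_ms, flash_count=FLASH_COUNT):
    """Start time of each flash for a given even spacing."""
    return [i * interval_ms for i in range(flash_count)]


if __name__ == "__main__":
    interval = max_flash_interval_ms()
    print("upper bound on spacing (ms):", interval)
    print("flash start times (ms):", flash_times_ms(10.0))
```

Under these assumptions the flashes have to fire no more than roughly 13ms apart, which shows why the capture has to be triggered electronically rather than by hand.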

Detailed Spatio-Temporal Reconstruction of Eyelids

The first method for detailed spatio-temporal reconstruction of eyelids faithfully tracks the lid where visible and creates a plausible geometry where hidden. It is the first method to capture the complex folding behavior in this important region of the face. This session was from Amit Bermano, Markus Gross, Thabo Beeler, Yeara Kozlov and Derek Bradley from Disney Research Zürich and ETH Zürich, and Bernd Bickel from the Institute of Science and Technology Austria and Disney Research Zürich.

The team’s eyelid reconstruction is driven by a set of constraints derived from a set of meshes. The base sampling comes from a set of cameras arranged in an arc around the face.

Dynamic 3D Avatar Creation from Hand-Held Video Input

This paper presents a complete pipeline for creating fully rigged and detailed 3D facial avatars from hand-held video. Using a minimalistic acquisition process, the system facilitates a range of new applications in computer animation and consumer-level online communication based on personalized avatars. Alexandru Ichim from École Polytechnique Fédérale de Lausanne presented the team’s paper.

This facial reconstruction, from, say, waving around an iPhone, is so remarkable it borders on magic. It takes a great step towards bringing high quality avatars to the general public.

It has good geometry and good textures, and is clearly recognizable as the source person. The output is lightweight and easy to make. This system does not compete with high end systems like, say, a Lightstage scan, but given that it only uses an iPhone, it is remarkable. There has been some great avatar work with RGBD cameras or sensors, but those approaches have relied very heavily on the depth info.

First, with the actor static, the system needs about 100 images from an 8-megapixel camera. Then a video is recorded with the actor making a range of expressions.

The static model is then fed into a dynamic modeling system that uses the video, and the result is used to animate the face.

Agisoft is used for the first MVS reconstruction, then PCA + thin shell deformation +

Driving High-Resolution Facial Scans With Video Performance Capture

This work animates facial geometry and reflectance using video performances, borrowing geometry and reflectance detail from high-quality static expression scans. Combining multiple optical-flow constraints weighted by confidence maps eliminates drift.
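The idea of weighting several flow estimates by confidence can be illustrated with a very small sketch. This is not the paper’s solver, which presumably handles the constraints jointly over the whole mesh; it is just a per-point confidence-weighted average, with hypothetical names, to show why a low-confidence estimate contributes little to the result.

```python
def blend_flows(flows, confidences, eps=1e-8):
    """Confidence-weighted average of several 2D flow estimates for one point.

    flows:       list of (dx, dy) displacement estimates, e.g. one per
                 reference frame or static scan (illustrative assumption).
    confidences: list of non-negative weights, one per estimate.
    """
    total = sum(confidences)
    if total < eps:
        # No estimate is trusted; fall back to zero motion.
        return (0.0, 0.0)
    dx = sum(c * f[0] for f, c in zip(flows, confidences)) / total
    dy = sum(c * f[1] for f, c in zip(flows, confidences)) / total
    return (dx, dy)


if __name__ == "__main__":
    # The second estimate is less trusted, so the blend sits nearer the first.
    print(blend_flows([(1.0, 0.0), (3.0, 0.0)], [3.0, 1.0]))
```

Anchoring each frame to trusted references in this way, rather than chaining frame-to-frame estimates, is what stops small tracking errors from accumulating into drift.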

The system, while coming from the world of ICT’s Lightstage, seeks to offer a simpler setup that will facilitate wider use by a broader audience.