Each year, just prior to SIGGRAPH, we pick one of the hard-core technical papers to highlight. Last year we focused on Beam Rendering. This year we are focusing on a paper from USC ICT, one that follows on from our story on Faceshift and Hao Li’s work on VR facial capture.

ICT is remarkable for the depth and breadth of its research. It is one of a few key places in the world, along with Disney Zurich, Pixar and others, that year in, year out present powerful research papers as part of the core of SIGGRAPH: the technical papers. Each year many more papers are submitted than accepted. Competition is tough, and the papers are all peer reviewed to the highest academic standards.

While the technical papers are often the most opaque to everyday artists, often containing equations and quantitative proofs, they remain the very cornerstone of the conference and the place where key ideas are often first made public. There is a very easy path to trace from a technical paper to a SIGGRAPH talk the following year, to a panel the year after, perhaps a technical book on Amazon or a mention in an article here on fxguide, and finally to a button in your app at your desk.

Skin Microstructure Deformation with Displacement Map Convolution

Koki Nagano, Graham Fyffe, Oleg Alexander, Jernej Barbic, Hao Li, Abhijeet Ghosh and Paul Debevec.

The paper is described as:

“Synthesizing the effects of skin microstructure deformation by anisotropically filtering a high-resolution displacement map to match normal distribution changes in measured skin samples. Facial animation made with the technique shows more realistic and expressive skin under deformation and strain”.

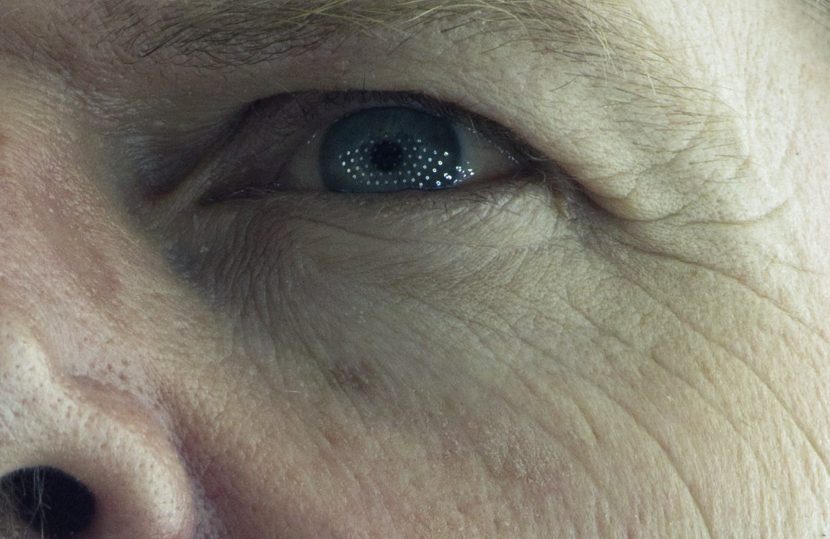

In lay terms, this paper shows a path for replicating the way skin compresses and expands, much as an accordion would, all around your face. Why is this important? Well, it turns out that while we can’t see super fine skin detail in a render directly, we do see its effect on specular highlights. A lot has been written about subsurface scattering and the diffuse side of faces, but the specular highlights now need some attention, and this paper shows how to make those spec highlights more realistic as one moves around the face.

It also turns out that, for all the science we have, most of our face is made up of the same sort of skin (give or take). The end of your nose doesn’t need to do much, so the skin there is fairly flat and without a lot of micro wrinkles, but around your eyes and mouth we require our faces to do a lot of stretching and compressing. The skin there therefore tends to be folded and wrinkled in a particular direction: the direction in which you are most going to use it. This directional compression and expansion results in an anisotropic specular highlight that is broken up, and thus your face does not look ‘plastic’. While SSS produces the wax-like quality of skin, this very particular specular response is what stops the skin from looking fake and plastic.

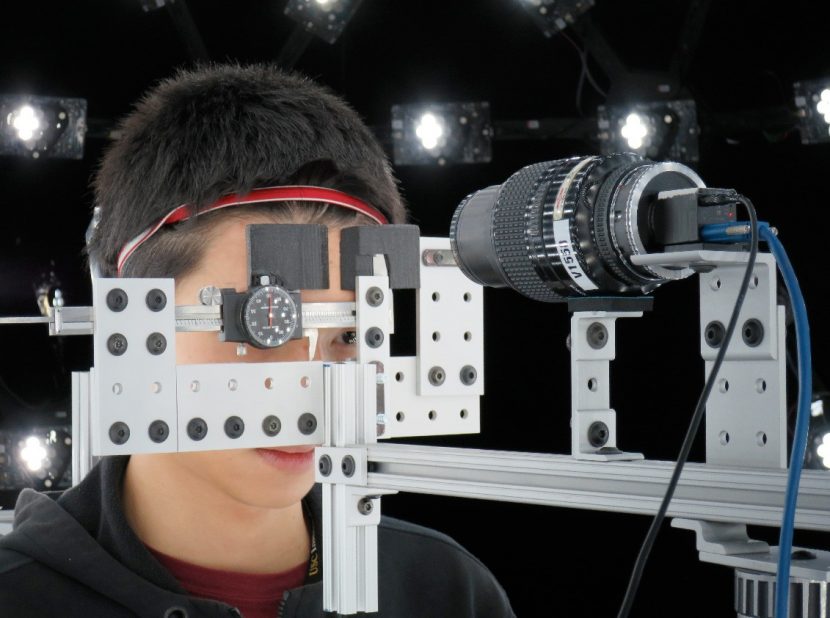

How does this get done? Previously the team had shown how to up-res a face to super high resolution, essentially by using skin samples and cleverly interpolating up from a 6K map to a 16K map of the face. Once you have this super or uber high resolution skin, you want to dynamically change the spec response as the skin gets rougher or smoother from compression or stretching.

If you are fully animating a face, say with blendshapes, you can create a ‘stress field’ (this could be a 2D stress field). The approach then sharpens or blurs the displacement map (that is, it applies a convolution filter) with the directionality indicated by the stress field. This adjustment to the ‘uber fine’ detail that drives the spec produces a highlight that looks more believable without sending render times through the roof. (Of course, if you were doing flesh sims or something more complicated, you could get more detailed or accurate stress fields from the sim.)
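
To make the sharpen-or-blur idea concrete, here is a minimal sketch in plain Python. It is not the paper's implementation: the function names, the 3-tap kernel, the `gain` parameter and the single signed `strain` value per row are all assumptions made for illustration. It filters one row of a displacement map along an assumed strain direction, blurring under stretch and sharpening under compression, and uses the mean squared slope of the result as a crude stand-in for roughness.

```python
def filter_along_u(heights, strain, gain=0.25):
    """Filter one row of a displacement map along the strain direction.

    strain > 0 (stretch) blurs the row; strain < 0 (compression) sharpens it.
    The 3-tap kernel [a, 1 - 2a, a] sums to 1, so flat regions are unchanged.
    All names and the kernel shape are illustrative assumptions.
    """
    s = max(-1.0, min(1.0, strain))  # clamp the hypothetical strain value
    a = gain * s
    n = len(heights)
    out = []
    for i in range(n):
        left = heights[max(i - 1, 0)]       # clamp at the borders
        right = heights[min(i + 1, n - 1)]
        out.append(a * left + (1.0 - 2.0 * a) * heights[i] + a * right)
    return out


def mean_squared_slope(heights):
    """Crude roughness proxy: average squared difference between neighbours."""
    diffs = [hi - lo for lo, hi in zip(heights, heights[1:])]
    return sum(d * d for d in diffs) / len(diffs)


bumps = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
stretched = filter_along_u(bumps, strain=1.0)
compressed = filter_along_u(bumps, strain=-1.0)
print(mean_squared_slope(stretched),
      mean_squared_slope(bumps),
      mean_squared_slope(compressed))
```

Because the filter only runs along one axis, detail across the strain direction is left alone, which is where the anisotropy comes from. A production version would work on a 2D map with a per-texel strain direction and magnitude.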

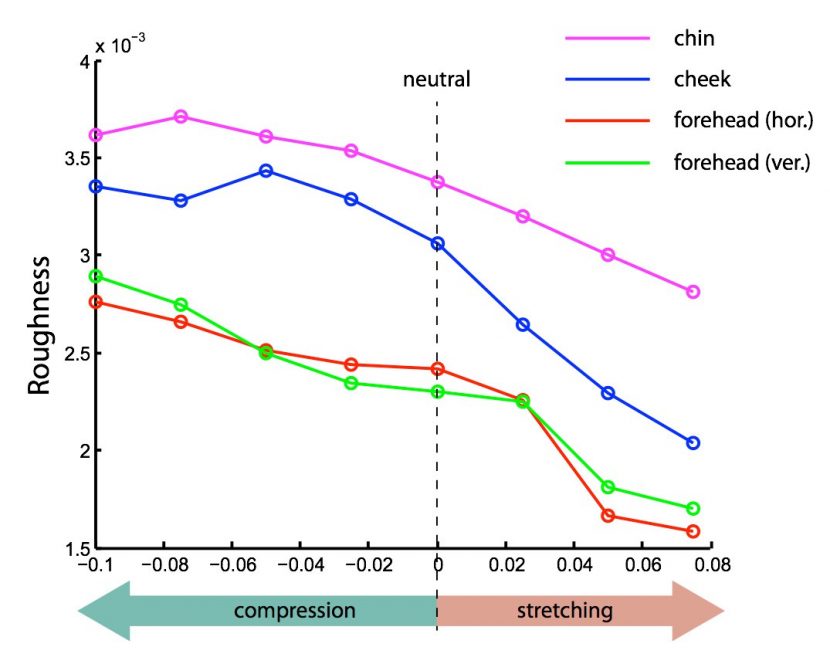

This new paper builds on the earlier ICT skin microstructure paper by Graham et al. 2013, but that approach was about adding skin detail to a still, neutral face, not about the dynamic appearance of the skin microstructure as you change expressions. As the skin deforms, generally speaking, it becomes rougher when it is compressed and smoother when it is stretched.

The paper outlines not just what happens but an effective and achievable way to implement the effect in a CG face, based on a high resolution scan. Note that the USC ICT Lightstage, combined with the microstructure up-res'ing, is producing the highest res facial scans commercially accessible in the world.

Background on skin “roughness”

Here is some background from our friends at the research group Digital Human League (which some ICT and fxguide people are a part of):

Skin roughness/topology serves as a reserve of the tissue, providing the skin surface layer with elasticity through a network of very fine grooves and ridges which allow the skin to deform. These fine-scale structures vary in size and patterns throughout the body. The statistical variation of such structures, or roughness, is an important component to skin that affects it at many scales.

When light hits the skin, it is generally split into different components. Part of the light enters the skin, scatters, and comes out (better known as subsurface scattering). A small percentage of light scatters but does not penetrate (better known as diffuse). Finally, some of the light reflects back off the skin surface (better known as specular). This specular is the very first surface layer reflection and is greatly affected by the surface roughness.

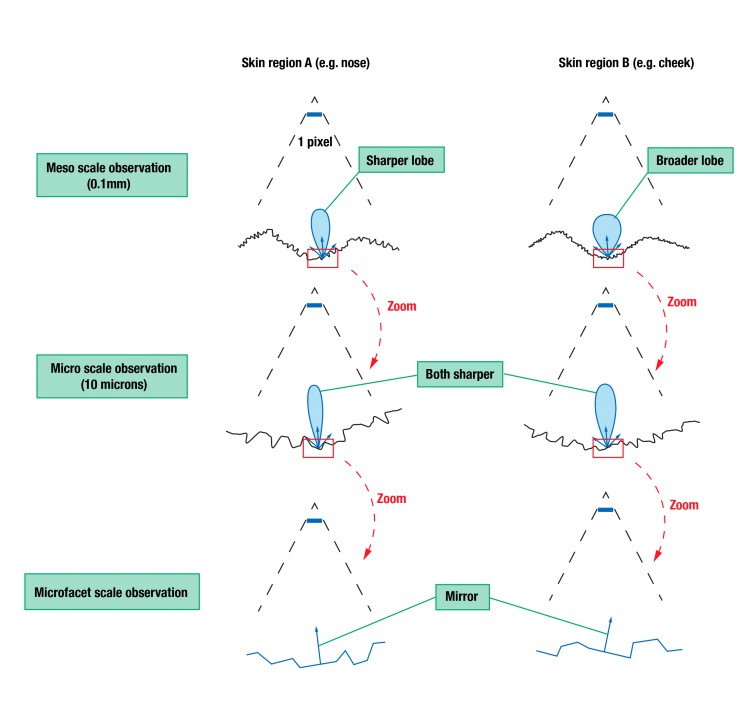

When doing a scan of a face at ICT, the face and textures are captured at 0.1mm of detail, which comes out as 6k maps (including displacement). Therefore, the geometry is able to get detail down to the pores and fine wrinkles. We call this the “meso” structure.

However, there is still a great deal more detail in the skin between pores, which breaks up the surface highlights even more. Traditionally, one way to mimic this “micro” geometry breakup was to use a BRDF such as Cook-Torrance or GGX. Since certain areas of the skin are shinier than others, a roughness map is still needed for these to be used correctly.
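
As a rough illustration of what a roughness value does inside such a BRDF, the sketch below evaluates the standard GGX (Trowbridge-Reitz) normal distribution term, D = α² / (π ((n·h)²(α² − 1) + 1)²). The function name and the choice to pass α directly are assumptions. Renderers differ in how they remap a painted roughness value to α, so treat the numbers as indicative only.

```python
import math


def ggx_ndf(n_dot_h, alpha):
    """GGX / Trowbridge-Reitz normal distribution term.

    n_dot_h: cosine of the angle between surface normal and half vector.
    alpha:   roughness parameter (assumed to be used directly, no remapping).
    """
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


# Compare a shinier and a rougher patch of skin (hypothetical alpha values)
# at the centre of the highlight and a little way off it.
for alpha in (0.2, 0.4):
    print(alpha, ggx_ndf(1.0, alpha), ggx_ndf(0.9, alpha))
```

A lower α gives a taller, tighter peak at the centre of the highlight, while a higher α spreads the same energy more widely. A per-texel roughness map is how that value gets varied from one region of the face to another.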

The paper is not based on theory – the team used special rigs to sample the skin and thus they have excellent comparisons between fully CG skin with this anisotropic spec detail and real skin.

The paper is being presented in the technical papers: Appearance Capture

Wednesday, 12 August 2:00 PM – 3:30 PM, Room 152.