Epic Games has released the second volume of its Virtual Production Field Guide, a free in-depth resource for creators at any stage of the virtual production process in film and television. The new volume covers workflow evolutions including remote multi-user collaboration, recently released features, what’s coming this year in Unreal Engine 5, and two dozen new interviews with industry leaders about their hands-on experiences with virtual production.

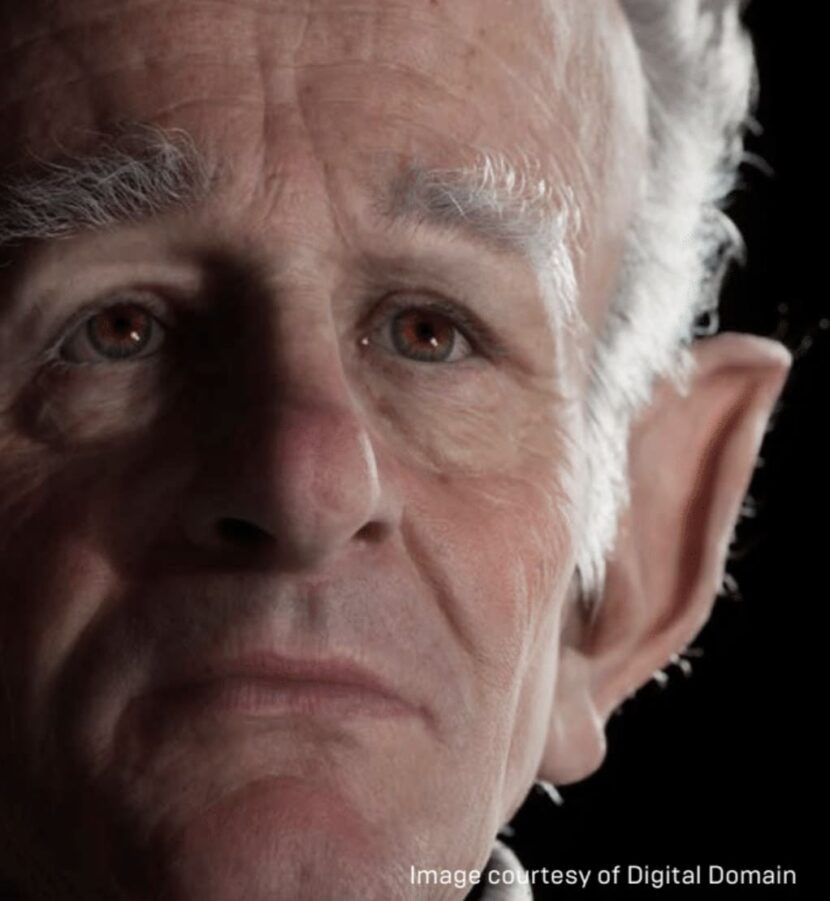

One such contributor is Darren Hendler at Digital Domain.

Darren Hendler

Hendler is the Director of Digital Domain’s Digital Humans Group. His job includes researching and spearheading new technologies for the creation of photoreal characters. Hendler’s credits include Pirates of the Caribbean, FF7, Maleficent, Beauty and the Beast, and Avengers: Infinity War.

Can you talk about your role at Digital Domain?

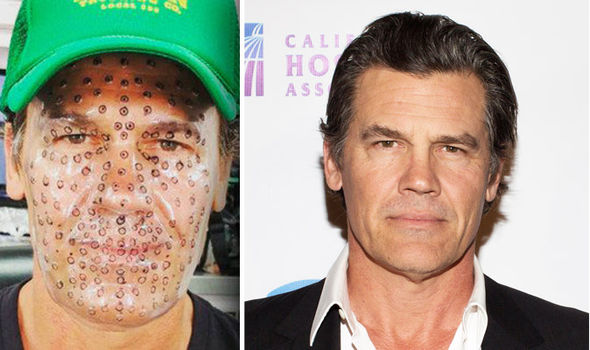

Hendler: My background is in visual effects for feature films. I’ve done an enormous amount of virtual production, especially in turning actors into digital characters. On Avengers: Infinity War I was primarily responsible for our work turning Josh Brolin into Thanos. I’m still very much involved in the feature film side, which I love, and also now the real-time side of things.

Digital humans are one of the key components in the holy grail of virtual production. We’re trying to accurately get the actor’s performance to drive their creature or character. There’s a whole series of steps of scanning the actor’s face in super-high-resolution, down to their pore-level details and their fine wrinkles. We’re even scanning their blood flow in their face to get this representation of what their skin looks like as they’re busy talking and moving.

The trick to virtual production is how you get your actor’s performance naturally. The primary technique is helmet cameras with markers on their face and mocap markers on their body, or an accelerometer suit to capture their body motion. That setup allows your actors to live on set with the other actors, interacting, performing, and getting everything live, and that’s the key to the performance.

The biggest problem has been the quality of the data coming out, not necessarily the body motion but the facial motion. That’s where the expressive performance is coming from. Seated capture systems get much higher-quality data. Unfortunately, that’s the most unnatural position, and their face doesn’t match their body movement. So, that’s where things are really starting to change recently on the virtual production side.

Where does Unreal Engine enter the pipeline?

Hendler: Up until this moment, everything has been offline with some sort of real-time form for body motion. About two or three years ago, we were looking at what Unreal Engine was able to do. It was getting pretty close to the quality we see on a feature film, so we wondered how far we could push it with a different mindset.

We didn’t need to build a game, but we just wanted a few of these things to look amazing. So, we started putting some of our existing digital humans into the engine and experimenting with the look, quality, and lighting to see what kind of feedback we could get in real-time. It has been an eye-opening experience, especially when running some of the stats on the characters.

At the moment, a single frame generated in Unreal Engine doesn’t produce the same visual results as a five-hour render. But it’s a million times faster, and the results are getting pretty close. We’ve been showing versions of this to a lot of different studios. The look is good enough to use real-time virtual production performances and go straight into editorial with them as a proxy.

The facial performance is not 100 percent of what we can get from our offline system. But now we see a route where our filmmakers and actors on set can look at these versions and say, “Okay, I can see how this performance came through. I can see how this would work or not work on this character.”

How challenging is it to map the human face to non-human characters, where there’s not always a one-to-one correlation between features?

Hendler: We’ve had a fantastic amount of success with that. First, we get an articulate capture from the actor and map out their anatomy and structures. We map out the structures on the other character, and then we have techniques to map the data from one to the other. We always run our actors through a range of motions, different expressions, and various emotions. Then we see how it looks on the character and make adjustments. Finally, the system learns from our changes and tells the network to adjust the character to a specific look and feel whenever it gets facial input close to a specific expression.

At some point, the actors aren’t even going to need to wear motion capture suits. We’ll be able to translate the live main unit camera to get their body and facial motion and swap them out to the digital character. From there, we’ll get a live representation of what that emotive performance on the character will look like. It’s accelerating to the point where it’s going to change a lot about how we do things because we’ll get these much better previews.

How do you create realistic eye movement?

Hendler: We start with an actor tech day and capture all these different scans, including capturing an eye scan and eye range of motion. We take a 4K or 8K camera and frame it right on their eyes. Then we have them do a range of motions and look-around tests. We try to impart as much of the anatomy of the eye as possible in a similar form to the digital character.

Thanos is an excellent example of that. We want to get a lot of the curvature and the shape of the eyes and those details correct. The more you do that, the quicker the eye performance falls into place.

We’re also starting to see results from new capture techniques. For the longest time, helmet-mounted capture systems were just throwing away the eye data. Now we can capture subtle shifts and micro eye darts at 60 frames a second, sometimes higher. We’ve got that rich data set combined with newer deep learning techniques and even deep fake techniques in the future.

Another thing that we’ve been working on is the shape of the body and the clothing. We’ve started to generate real-time versions of anatomy and clothing. We run sample capture data through a series of high-powered machines to simulate the anatomy and the clothing. Then, with deep learning, we can play 90 percent of the simulation in real-time. With all of that running in Unreal Engine, we’re starting to complete the final look in real-time.

What advice would you give someone interested in a career in digital humans?

Hendler: I like websites like ArtStation, where you’ve got students and other artists just creating the most amazing work and talking about how they did it. There are so many classes, like Gnomon and others, out there too. There are also so many resources online for people just to pick up a copy of ZBrush and Maya and start building their digital human or their digital self-portrait.

You can also bring those characters into Unreal Engine. Even for us, as we were jumping into the engine, it was super helpful because it comes primed with digital human assets that you can already use. So you can immediately go from sculpting into the real-time version of that character.

The tricky part is some of the motion, but even there you can hook up your iPhone with ARKit to Unreal Engine. So much of this has been a democratization of the process, where somebody at home can now put up a realistically rendered talking head. Even five years ago, that would’ve taken us a long time to get to.

Where do you see digital humans evolving next?

Hendler: You’re going to see an explosion of virtual YouTube and Instagram celebrities. We see them already in a single frame, and soon, they will start to move and perform. You’ll have a live actor transforming into an artificial human, creature, or character delivering blogs. That’s the distillation of virtual production in finding this whole new avenue—content delivery.

We’re also starting to see a lot more discussion related to COVID-19 over how we capture people virtually. We’re already doing projects and can actually get a huge amount of the performance from a Zoom call. We’re also building autonomous human agents for more realistic meetings and all that kind of stuff.

What makes this work well is us working together with the actors and the actors understanding this. We’re building a tool for you to deliver your performance. When we do all these things right, and you’re able to perform as a digital character, that’s when it’s incredible.

Digital Domain

Matthias Wittman, VFX Supervisor at Digital Domain, will also be part of the upcoming Real-Time Conference Digital Human talks (April 26/27), co-hosted by fxguide’s Mike Seymour. He will be presenting “Talking to Douglas, Creating an Autonomous Digital Human”. Also presenting will be Marc Petit, General Manager of Unreal Engine at Epic Games.

Digital Domain was also recently honored at the Advanced Imaging Society’s 11th annual awards for technical achievements. Masquerade 2.0, the company’s facial capture system, was recognized for its distinguished technical achievement. Masquerade generates high-quality moving 3D meshes of an actor’s facial performance from a helmet capture system (HMC). This data can then be transferred onto a digital character’s face, the actor’s digital double, or a completely different digital person. With Masquerade, the actor is free to move around on set, interacting live with other actors to create a more natural performance. The images from the HMC worn by the actors are processed using machine learning into a high-quality, per-frame moving mesh that contains the actor’s nuanced performance, complete with wrinkle detail, skin sliding, and subtle eye motion. We posted an in-depth story on Masquerade 2.0 in 2020.

Field Guide Vol II

The first volume of the Virtual Production Field Guide was released in July 2019, designed as a foundational roadmap for the industry as the adoption of virtual production techniques was poised to explode. Since then, a number of additional high-profile virtual productions have been completed, with new methodologies developed and tangible lessons ready to share with the industry. The second volume expands upon the first with over 100 pages of all-new content, covering a variety of virtual production workflows including remote collaboration, visualization, in-camera VFX, and animation.

This new volume of the Virtual Production Field Guide was put together by Noah Kadner, who wrote the first volume in 2019. It features interviews with directors Jon Favreau and Rick Famuyiwa, Netflix’s Girish Balakrishnan and Christina Lee Storm, VFX supervisor Rob Legato, cinematographer Greig Fraser, Digital Domain’s Darren Hendler, DNEG’s George Murphy, Sony Pictures Imageworks’ Jerome Chen, ILM’s Andrew Jones, Richard Bluff, and Charmaine Chan, and many more.

As the guide comments, what really altered filmmaking and its relationship with virtual production was the worldwide pandemic. “Although the pandemic brought an undeniable level of loss to the world, it has also caused massive changes in how we interact and work together. Many of these changes will be felt in filmmaking forever.” Remote collaboration and using tools from the evolving virtual production toolbox went from a nice-to-have to a must-have for almost all filmmakers. The Guide examines a variety of workflow scenarios, the impact of COVID-19 on production, and the growing ecosystem of virtual production service providers.

Click here to download the Virtual Production Field Guide as a PDF, or visit Epic’s Virtual Production Hub to learn more about virtual production and the craft of filmmaking.