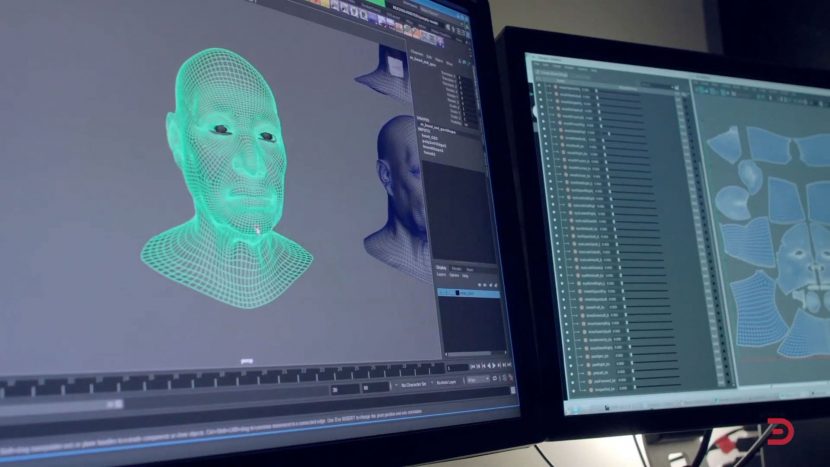

Masquerade 2.0, is the latest iteration of Digital Domain’s in-house facial capture system. Version 2.0 has been rebuilt from the ground up to bring feature film-quality aspects to new and often real-time applications such as next-gen games, episodic TV, virtual production, and commercials. At its core, Masquerade is the same tech Digital Domain used to create Thanos in Avengers: Infinity War but now enhanced to use machine learning and process faces in much faster production environments.

Masquerade v1 came out of Digital Domain’s work for Avengers films and trying to come up with a way to get the helmet cameras to be able to get an accurate representation of the actor’s facial performance. After Avengers, the team looked back and tried to figure out what was next. Before Thanos, Digital Domain had already spent 3 or 4 months doing extensive testing and development, and Thanos was hailed as one of the strongest digital characters yet, but the major Digital Domain film pipeline was labour intensive and time-consuming. See our fxguide story here.

Digital Domain has locations in Los Angeles, Vancouver, Montreal, Beijing, Shanghai, Shenzhen, Hong Kong, Taipei, and Hyderabad. Over the last quarter of a century, Digital Domain has been a leader in visual effects and expanded globally into digital humans, virtual production, previsualization, and virtual reality, and producing imagery for commercials, game cinematics, and an impressive roster of film projects.

We spoke to Darren Hendler, Director of Digital Humans Group at Digital Domain, and Dr. Doug Roble, Senior Director of Software R&D. Both are isolated by COVID but both in California. Digital Domain has already begun applying this new technology to several next-gen game projects, turning out over 50 hours of game footage 10x faster than their previous methods, and they are not done yet.

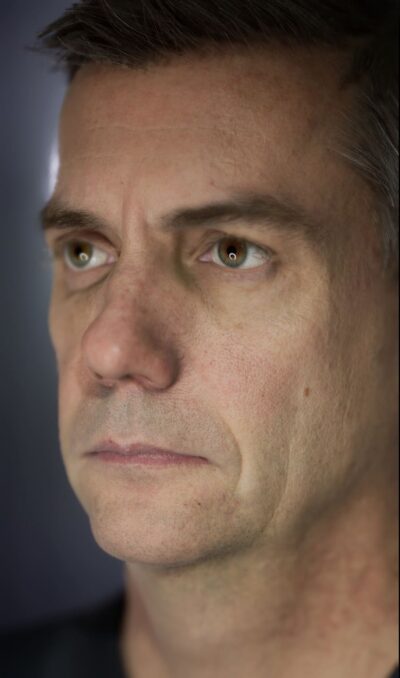

Unlike methods that take over a year to complete, Masquerade 2.0 can deliver a photorealistic 3D character and dozens of hours of performance in a few months. Using machine learning, Masquerade has been trained to accurately capture expressive details down to the wrinkles on an actor’s face, without limiting their on-set movement. This freedom has helped Digital Domain bring emotive characters to a range of high-end projects including a recent Time Magazine cover recreation of Dr. Martin Luther King Jr. we reported here.

Unlike methods that take over a year to complete, Masquerade 2.0 can deliver a photorealistic 3D character and dozens of hours of performance in a few months. Using machine learning, Masquerade has been trained to accurately capture expressive details down to the wrinkles on an actor’s face, without limiting their on-set movement. This freedom has helped Digital Domain bring emotive characters to a range of high-end projects including a recent Time Magazine cover recreation of Dr. Martin Luther King Jr. we reported here.

Masquerade 2.0 drives one of the highest-quality facial capture systems in the world. The system was developed over the course of two years by Digital Domain’s Software Lead, Lucio Moser with help from Software Engineer Dimitry Kachkovski. Masquerade 2.0 now targets minute facial motions, including more accurate lip shapes and subtle movements around the eyes than version 1.0. With this new data, Digital Domain can deliver more emotive performances to their projects, bringing the intricacies of human facial dynamics to their digital characters.

“Masquerade 2.0 is powerful enough for a feature film, but accessible enough for games and other digital character applications, blasting past the constraints that used to hold us back,” explains Hendler. “Through new optimizations, Masquerade can deliver a one-to-one version of an actor’s performance almost out of the gate, allowing us to keep many details we used to have to throw away. This has completely revolutionized our delivery process, allowing us to turn around assets faster than anybody else. In the past, 50 hours of footage meant 600,000 hours of artist time. Today, Masquerade cuts that by 95%.”

In addition to time, the new Masquerade system is still a marker-based system, but it is a much more accurate system. The other major issue is how the team tracks the markers. “In that first Avengers movie, we were using Masquerade with marker trackers, but all the facial markers were being tracked manually or semi-manually,” Hendler points out. “So, there’s a huge amount of work involved in that. With the new Masquerade 2.0, we really looked at how we could get a much more accurate representation of the actor’s face, and how could we almost fully automate the entire process.”Masquerade 2.0, was really born out of the next generation of projects at Digital Domain. They are currently doing a lot of specific game production. One project is doing somewhere between 30 and 50 hours of articulate facial capture. “That’s simply undreamt of before with our previous system, and that is all pretty much data that nobody is touching,” Hendler adds. Under the old system, this would be too much performance data to manage and stay on schedule, as it happens their process is almost fully automated, with just somewhere between 2% and 5% of shots coming back for some moderate, or small amounts of user lead modification.

How it works (the simple version)

The process starts off with the actor in a seated rig providing a set of high-resolution 4D data along with very high resolution fixed scans. This provides a training data set of how the actors face moves.

The facial modeling team, under the leadership of Ron Miller, creates a detailed high-resolution face model from these high-resolution scans which forms the basis for all the facial data. Later, the actor puts on a vertical stereo helmet camera rig (HMC) with markers on their face. Digital Domain has a clever AI or GAN (Generative Adversarial Network) machine learning system that works out what is or isn’t a marker. GANs are helpful in knowing what is a marker and what is the iris of the eye, for example. Then, with some other clever AI tools using the training data from earlier, the system labels those markers and tracks those markers. It is very fast and very robust now at doing this. “On top of that, our computer vision and other systems go through and make sure that these predicted markers move exactly as they should, through a very accurate camera model,” says Hendler. “We also have mechanisms to figure out if a particular marker really is where it should be, or if something funny is going on? Furthermore, what would happen if I removed this, what would I fill this data in with?”. In short, there is a whole series of different modules in Masquerade 2.0 that are all based around identifying, and accurately predicting, and tracking all of the different markers across the active space.

The facial modeling team, under the leadership of Ron Miller, creates a detailed high-resolution face model from these high-resolution scans which forms the basis for all the facial data. Later, the actor puts on a vertical stereo helmet camera rig (HMC) with markers on their face. Digital Domain has a clever AI or GAN (Generative Adversarial Network) machine learning system that works out what is or isn’t a marker. GANs are helpful in knowing what is a marker and what is the iris of the eye, for example. Then, with some other clever AI tools using the training data from earlier, the system labels those markers and tracks those markers. It is very fast and very robust now at doing this. “On top of that, our computer vision and other systems go through and make sure that these predicted markers move exactly as they should, through a very accurate camera model,” says Hendler. “We also have mechanisms to figure out if a particular marker really is where it should be, or if something funny is going on? Furthermore, what would happen if I removed this, what would I fill this data in with?”. In short, there is a whole series of different modules in Masquerade 2.0 that are all based around identifying, and accurately predicting, and tracking all of the different markers across the active space.

The next goal of Masquerade 2.0 is to be able to create a moving mesh that exactly matches the imagery of the actor from both HMC views as accurately as possible. After Avengers, the team reconstructed their pipeline and questioned all the data and their results. “We were sitting there scrutinizing the way the lip curls come through and when it wasn’t coming through correctly, we investigated why it wasn’t working. And so there’s this entire process to generate a moving mesh exactly matching the actor,” Hendler. “Once we compare the moving mesh to the actors face, if there are missing details or features that are not coming through sufficiently our team can adjust select areas of select poses to exactly match what the actor was doing”. These corrections are then fed back into Masquerade as updated training data.

The output from the Masquerade 2.0 process is a moving mesh of the face with fully animated eyes, which the team wasn’t capturing in the original Masquerade version. That mesh is also solved onto some form of facial rig as part of the process. On top of that, the team retains any differences that may exist between the source and the 3d moving mesh. This set of deltas or differences are then used to more accurately determine what the actor’s performance is doing. Masquerade is intertwined with a series of facial rigs and facial rig modules designed by facial rigging lead Rickey Cloudsdale and Lead Rigger David Mclean, and it is this combination that allows the animation team to easily adjust the final facial animations.

Digital Domain serves a number of different customers who may all require different outputs. Some clients want just the raw 3D moving 4D meshes, but others are being solved to the client’s rigs. Still others, Hendler explains, “we’re generating and handing to them a full facial rig that we’ll hit that the performance quality pretty closely, but is also optimized to run in a real-time engine”. That rig is a proprietary Digital Domain UE4 facial rig. This can sometimes work better as a client’s rig may not have all the right shapes in their rig. In other words, the Masquerade system is producing very accurate results but the client’s rig can’t reproduce those facial expressions due to combinatorial networks and other limitations.

For their own work Masquerade can feed the high-resolution film pipeline or the real-time UE4 pipeline seen with the Digital Doug project at SIGGRAPH 2019. The two key differences between the Digital Doug project and the earlier MEET MIKE UE4 project is that Masquerade uses 4D training data and after it has advanced animation stages using complex machine learning.

Machine Learning Background.

In Machine Learning generally, there are two types of artificial neural networks, those used for pattern recognition typically are Convolution Neural Networks (CNN) and those used for things that are more temporal (time-based) are Recurrent Neural Networks (RNN). For example, reading an image and identifying there is a cat in the shot is a CNN problem. Understanding voice commands to your Alexa or Siri, is typically an RNN problem. A key difference is the CNN has no memory of the last picture it looked at. Specifically, an LSTM (Long short-term Memory) RNN builds on what it learnt in the last image in the sequence it looked at, which might make one think that Digital Domain is solving their Masquerade pipeline using RNN deep learning, but they are not. Both are AI tools, and both are advanced machine learning approaches, which means that they take training data to build the solutions they deploy, but as Doug Roble points out, “Training recurrent neural networks is no easy task,” adding jokingly. ”

RNN are different from traditional ‘feed-forward’ neural networks. Feedforward neural networks were the first and simplest type of artificial neural network devised, and as the name implies, they don’t cycle back, inside the network the information only moves forward. This is not to be confused with what they do internally which is learn by passing back tweaks internally (inside the network) to reduce error in a technique known as back-propagation. This approach of carefully finding a path to less and less error which is called gradient descent. In general, the problem of teaching a network to perform better and better is a quite subtle problem that requires additional techniques. “We’re taking advantage of a lot of temporal information combined with the statistical model to help more straightforward feed-forward networks …because it works better if we know what happened in the previous frame,” Roble explains.

One can do much of what can be normally achieved with an RNN by using CNNs combined with an additional memory aspect. “We are not doing quite that, but that’s kind of similar to what we’re doing and that we have this sort of notion of time associated with this (deep learning) stuff”. Roble leaves the details for his team to explain in the future as they are keen to publish formal SIGGRAPH papers on their specific implementations.

Marker tracking mechanism

The marker tracking mechanism is a good example of this. The new automated tracking mechanism within Masquerade is not working using old-style ‘Harris Corner’ pattern matching or just single frame CNNs. It is using some time-based data but not as an RNN. “We have a lot of statistical data for the face, how the face is moving, the gradients and velocities of all the different things on the face. All of that is used in our prediction of markers and specifically in the prediction of when markers are not correct as well. We need to ensure that those markers are always statistically correct – motion wise,” explains Hendler. With actor tracking, it is not just a mathematical error the tracking mechanism needs to allow for. Actors can put their hand to their face and partially block the camera. In these situations, the system needs to maintain a plausible idea of where all the trackers are, even if the audience won’t specifically see that part of the actor’s face. But the system still needs to statistically fill in the missing data and be able to predict the hidden part of the face so that the rest of the face is correct and so it can pick up the markers again as the hand recedes.

Tracking markers

Digital Domain typically uses 150 markers on the face. The number is to provide enough data when some markers might be occluded. Digital Domain doesn’t have to do this whole approach with markers necessarily says Hendler. “It’s just that every time we look at markerless approaches the level of attention to detail in the framing, the focus, the ability to have any kind of tolerance to helmet shifts, – really makes it very hard to have a robust system, which is why we keep on going back to the marker detail on the face approach”. The markers don’t need to be exact day to day on set. The system adapts to daily changes in applying the markers to the actor’s face.

Digital Domain does a lot of facial capture work in the IR space. The IR cameras have a shallow depth of field, and the dots being very high contrast, provide accurate information that just skin pore detail would not reliably do. The cameras are running at 2K resolution. The Real-Time Live Digital Doug at SIGGRAPH used the Masquerade live system which streamed the RGB camera data. For the normal Masquerade system, the data is recorded onto the onboard computer with a WiFi live stream for on-set supervision.

Delta corrections

The raw Masquerade output is compared not just to the training data that may have come from a seated Medusa session or DI4D training data session but also from some much higher-level ICT Light stage scans or some other very accurate photogrammetry session. For example, an actor, in addition to recording training data, may have 12 Light stage expression scans done. These are static but extremely accurate. Feeding this into the process and using Digital Domain’s machine learning approaches, the Masquerade process fills in missing data not there in either the Disney Studio’s Research Medusa or USC-ICT Light stage static scans when looking at a recorded actor’s new performance.

“We have a process by which we’re regenerating some form of shapes for the actor, for our actor rig,” Hendler explains. “But we also use that Delta data to modify and adjust those shapes. And so those shapes get closer and closer to the Actor’s likeness.” Understandably there will always be some form of Delta between the two, some difference mathematically, and Masquerade has different ways in which to retain that delta or error and use it correctively later in the process.

A giant self-correcting feedback correction system

Masquerade works much as it did before, but with more automation, much greater speed, and with each individual step improved, while maintaining the fundamentally same approach. Masquerade is a very modular system, “The cool thing about Masquerade is each one of those modules learns to be better,” explains Doug Roble.” There’s this module that tracks the markers and it uses what it knows about the shapes to predict where the markers should be. And it learns based on what it’s seeing and the correctives in the process. It then gets smarter. It basically figures out where those markers should be, which then makes the tracking better, which then feeds back into the shape prediction.”

This makes Masquerade better and somewhat of a giant feedback loop in a machine learning kind of way. “And the artists can plug these modules in together and say, okay, let’s spend a little bit more time making this one thing better, – and it then ripples the effects of that through the whole pipeline,” he adds. All of which makes the entire Masquerade process sound like it works in a similar fashion to a Neural Network. This is also what makes Digital Domain’s work so difficult and impressive. Just as with actual Neural Networks, the theory of self-improvement is one thing, but inside an AI when a system is doing a gradient descent, to reduce errors in a back-propagation, it can veer off to infinity or crash to zero. Massive pipelines that pass along data to reduce error and thus become smarter are incredibly hard to make and even harder to reliably get to work without human intervention and constant supervision. “One of the cool things about Masquerade is that when we’re trying to make this stuff ‘better’, we have a lot of different ways of determining loss. For example, the system also uses optical flow. Optical flow sort of has error built-in. If we use that in conjunction with actual OpenCV tracking of markers, and then we layer on optical flow, we can help the OpenCV tracking of the markers,” Roble explains. “And then we have multiple head-mounted cameras so we can start doing 3D reconstructions – so we can get 3D loss there as well.” And so Roble explains the layering of all these different loss functions on each one of these different things, “makes it all easier and simpler to do the back-propagation because we can scoot in these loss functions that help things along the way. And that was one of the key genius things that the authors of this stuff he came up with. It is using as much information from the computer vision and not just trying to do pure machine learning to solve the problem”.

FACS

One of the other innovations that Digital Domain is working on is how it works with FACS, the Facial Action Coding System. Their system is called Shape Propagation, and in the system, one can provide a 3D version of an actor’s head. Digital Domain can then simulate a full set of FACS shapes, 1500 different shapes that are based on average FACS shapes. “They’re all different expressions, but they’re not that person’s expressions. It has a smile, but it’s not that person’s smile,” says Hendler. Thus Digital Domain is building, by transfer, a set of shapes for the new face without the client having to provide a FACS session of scans. Digital Domain can then solve that to the 4D data coming out of the Masquerade system when the actor is wearing the HMC, – but it wouldn’t be that actor’s unique expression – it would not be their exact smile. What the team is rolling out as part of their new Shape Propagation is automatically rebuilding all of those FACS shapes to ensure that the Shape Propagation system gets as close as it can to the actual actor’s performance. It’s actually updating and modifying all of Masquerade FACS shapes without doing or providing one. It is doing this without requiring a custom modification or hand tweaking of those shapes to build up a normal rig. “It all gets back to this modular system that can refine each part of the module to adapt to what it has,” says Roble. “So if we’ve got you in Australia without a special 4D system or anything similar, we could have you put on the HMC – put some dots on your face, and we could create a plausible version of you just from what we captured.” Furthermore, as the team captured more information about the location of the vertices and that person’s shapes “we would be able to tighten it up. We’ll figure out what your face is doing from just watching what your face is doing”.

Neural rendering

As fxguide has covered extensively, facial reconstruction and simulation is an area of keen research using neural rendering. Digital Domain is cagey to discuss the topic, “so we are definitely involved in neural rendering and hybrid situations and the possible application in feature films. I just don’t think we are ready to talk about it just yet,” joked Hendler. Digital Domain is already working with their clients on new approaches, modifying filming procedures, special custom actor acquisitions, and more, all of which, is because “the hybrid approach is already here and being used in production” states Hendler on the record, but he would not be drawn further on any details.

“Digital Domain innovations are responsible for some of the most memorable visuals of the past 27 years,” said Daniel Seah, executive director, and chief executive officer of Digital Domain. The VFX team at Digital Domain has brought artistry and technology to films including Titanic, The Curious Case of Benjamin Button and blockbusters Ready Player One, Avengers: Infinity War, and Avengers: Endgame. “With Masquerade 2.0, digital humans will not only grow more accessible to our clients but more realistic and appealing to audiences, raising the prestige and credibility of our industry around the world,” he adds.

“Our innovation strategy at Digital Domain is all about improving our internal pipelines and serving our clients. Delivering believable human-driven performance is not just vital for our features clients, but for our game, episodic and streaming, experiential and advertising clients as well,” said John Fragomeni, global VFX president. “As audiences become more and more expectant of near-flawless imagery for all their content, we are constantly pushing to create solutions that are superior in quality, faster, and adaptable to any form of entertainment.”

SIGGRAPH ASIA

Both Darren Hendler and Doug Roble will be talking at SIGGRAPH Asia as part of the pane: Digital humans are back! Creating and using believable avatars in the age of covid – Part 2

Date and time (SG Time, GMT +8): 11th of December, 11am – 12 :30

Date and time (SG Time, GMT +8): 11th of December, 11am – 12 :30

They will be joining Christophe Hery (Facebook), Hao Li (Pinscreen) and fxguide’s own Mike Seymour. This is a virtual event. For more information see sa2020.siggraph.org