Chris Nichols is a long-time friend of fxguide and an inspiration to the VFX community. As Director of Chaos Labs and host of the CG Garage & Martini Giant podcasts, he provides a brilliant link between the cutting-edge developments in rendering at Chaos and the VFX user community.

V-Ray has long been one of the industry’s most respected rendering engines. In this podcast, we talk to Chris about Chaos’ V-Ray-compatible real-time ray tracer, Vantage. Chris is speaking next week at the Real-Time Conference (see below) about Vantage and a proof-of-concept film, Killtopia, made with animation start-up Voltaku. Voltaku is a new studio merging real-time virtual production and gaming pipelines to create projects and stories that transcend traditional platforms, media, and devices.

Chris helped pioneer the bridge between V-Ray, Unreal and interactive Vantage renders, which both produce ultra-fast results and dovetail into a more traditional AOV comp pipeline. The work Chaos has done in virtual production rendering is spectacular and builds on years of production workflow experimentation.

In this week’s podcast, you will hear Chris and Mike refer to Kevin Margo’s much earlier CONSTRUCT project. Read our fxguide story on that project here. The renewed interest in real-time ray tracing for virtual production came from Charles Borland, the CEO of Voltaku, who approached Chris and wanted to build on the CONSTRUCT pipeline.

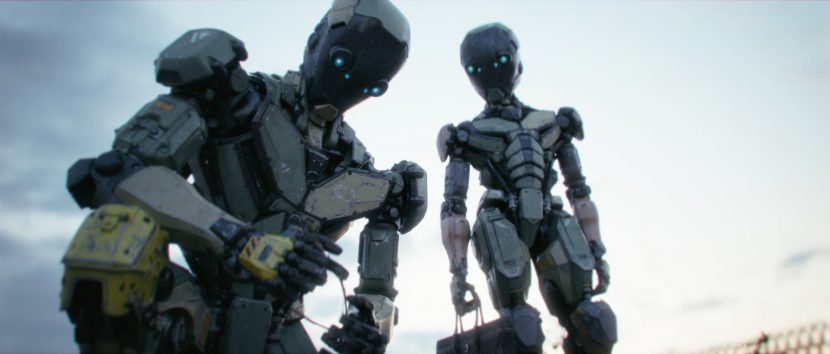

For the proof of concept, the team highlights one of Killtopia’s most vivid characters, Stiletto, as she has a cheeky back-and-forth with a robot cameraman. Voltaku also partnered with David Levy at Pitch Dev Studios for all the concept art and asset development; his team also created a general location layout. As Chris explains in this episode, moving from the hard-surface robot characters of CONSTRUCT to a humanoid character required a major jump in the tech and infrastructure of the virtual production pipeline.

You can see the results of their collaboration as part of Chris’ Real-Time Conference talk and the subsequent panel discussion on Monday, Nov 7th at 4:30 PST.

Real-Time Conference


After “Building the Metaverse” (April 2021) and “Populating the Metaverse” (December 2021), this November 2022 RTC continues to explore the way real-time technologies enable the creation of the Metaverse, through three different perspectives: Creativity, Technology, and Economics.

The RTC November 7-9, 2022 topics include:

- Creativity, Technology, Economics: The Open Metaverse Paradigm

- Virtual Production | Accelerating Content Creation with Machine Learning

- Digital Twins, Computer-Aided Engineering (CAE) & Simulation

- The Open Metaverse Paradigm – Explore the Business Metaverse

- Virtual Production | The Risks and Benefits for Small Studios Going Real-Time

- Real-Time at SIGGRAPH ’22: A Retrospective + Real-Time at SIGGRAPH Asia ’22: An Early Look

- The Open Metaverse Paradigm – Tools to Develop the Future

- Architecture, Engineering, Construction & Operations (AECO)

- Virtual Production | Decentralized! How to enable distributed workforces

- Interactive Storytelling | Controlling the Interactive Narrative

- Virtual Production | Accelerating Animation Production with Real-Time Workflows

- On Set Lighting Technologies

- Interoperability | Meeting the Challenge of Interoperability Across Real-Time Industries

- Immersion in Broadcast – Delivering Next-Generation Viewer Experiences

- Virtual Production | The Future of Virtual Production Technology

- Retail & 3D Commerce

- Digital Fashion

You can register for free online here.

Above: Pixomondo and William F. White are sponsoring RTC with the use of their LED stage in Toronto.