Not everything is huge enough for a major story, and in a sometimes depressing media landscape, it’s always good to highlight brilliant things people are doing. Here, in no particular order, are five unrelated things that are just excellent, and made us happy here in the fxguide tech compound.

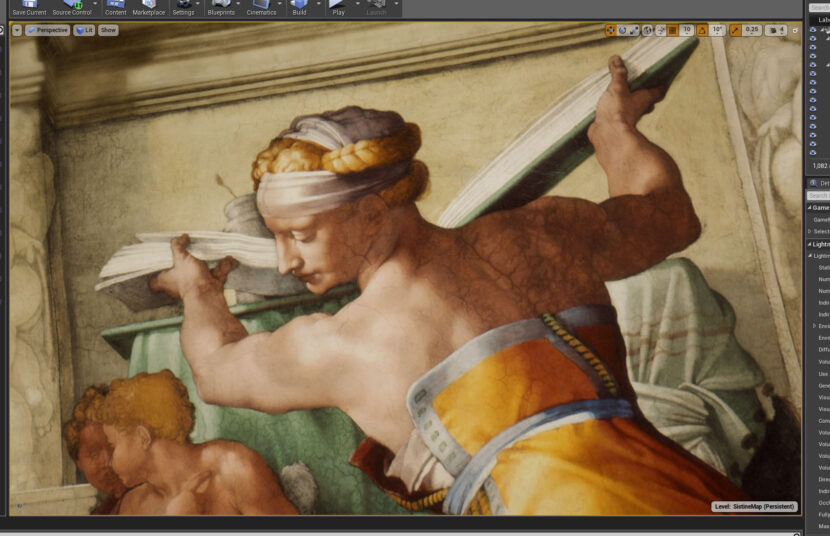

1. The ceiling of the Sistine Chapel

Well, actually it is viewing Michelangelo’s Sistine Ceiling on a new Valve Index headset in UE4. Earlier this year Chris Evans, SIGGRAPH 2019 Games Co-Chair, showed his Il Divino: Michelangelo’s Sistine Ceiling in VR. More than 400 people got to experience this in LA, but as it is now on Steam, over 8,000 people have already seen this amazing work.

While it works on a variety of VR headsets, it is especially amazing on the Valve Index. The resolution and fidelity of the optics are a huge jump in just one generation of technology. The field of view feels about 25% wider than the HTC Vive, and it runs at high refresh rates, up to 120 Hz in some cases. This is the perfect VR experience to savour the high-fidelity visual re-creation without eye strain, even from 70 feet below the ceiling.

Chris Evans has a longtime passion for Michelangelo’s work; we previously reported on his VR work with Michelangelo’s David (SIGGRAPH 2017).

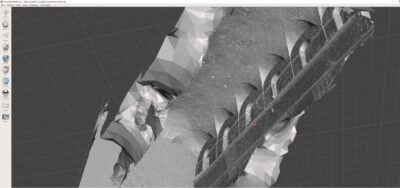

What is remarkable about Evans’s work is that he was never allowed to film in the actual Sistine Chapel. The entire piece is built from examining detailed public historical records and scraping public domain images that people have taken (semi-illegally). The Vatican does not allow photography, yet in this age of camera phones, many people do take images and post them. This makes the Sistine Chapel one of the most photographed works of art in the world, and there are many books that recreate parts of the frescoes down to 1:1 scale images. These thousands of public images were used to produce a photometric reconstruction, which was combined with 19th-century architectural drawings to model the chapel. But the texturing was more complex. As all these images were taken at different times with different colour temperatures and filters, Evans had a huge task to faithfully normalise the colours of the fresco and tile the images while avoiding blurring from image processing.
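To give a feel for what normalising colour across mismatched photos involves, here is a minimal sketch of one textbook approach, gray-world white balancing. This is an illustration only and not Evans’s actual pipeline, which is not described here; the function name `gray_world_normalise` and the sample pixel values are hypothetical.

```python
def gray_world_normalise(pixels):
    """Rescale each RGB channel so its mean matches the overall mean.

    Assumption: the 'gray-world' heuristic (the average colour of a scene
    is neutral gray). This is a stand-in technique for illustration, not
    the method used on the project.
    """
    n = len(pixels)
    # Mean of each channel (R, G, B) across the whole image.
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    target = sum(means) / 3
    # Per-channel gain that pulls each channel mean onto the target.
    gains = [target / m if m > 0 else 1.0 for m in means]
    return [
        tuple(min(255, round(p[c] * gains[c])) for c in range(3))
        for p in pixels
    ]


# Hypothetical two-pixel "photo" with a warm (orange) colour cast.
warm_photo = [(200, 150, 100), (100, 75, 50)]
balanced = gray_world_normalise(warm_photo)
print(balanced)
```

A real fresco has its own dominant hues, so a production workflow would more likely match each photo to a trusted reference rather than assume the scene averages to gray.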

Paul Huston, a matte painting and texturing colleague of Evans, and formerly head of the Digital Matte dept at ILM, also worked with him on the project. Evans joked with Huston “that from the windows down, I only really need it to be plausible. There are some Popes under the windows I actually call the ‘plausible Popes,’ because no one takes photos of them!” He added that “my focus has always been on the ceiling, sometimes at the detriment of everything else, so it’s been great to have Paul helping me out. I’ve actually known Paul longer than I’ve known Michelangelo, and he’s been a great mentor over the years”.

Michelangelo painted over 6,000 square feet of fresco with more than 300 individual figures packed into every inch. While many people have visited the Vatican, it is now possible to examine the work in VR to the degree where you can see individual brush strokes and cracks in the plaster thanks to 90-odd 4K maps, all running live in Unreal Engine.

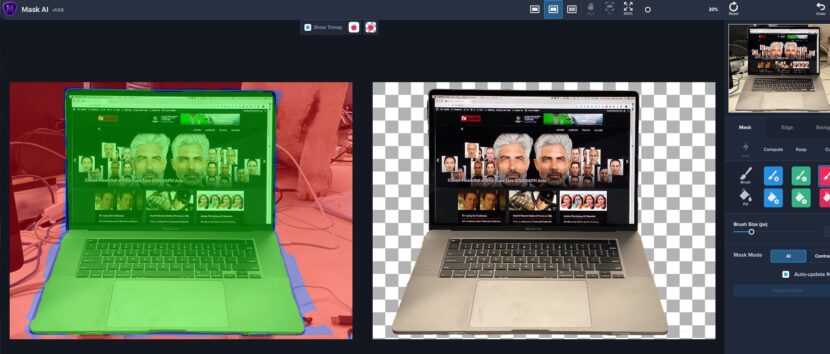

2. The new Apple 16″ MacBook Pro Laptop.

This should not be such a joy. After all, it is similar to the previous MacBook Pro. The key word is ‘similar’. The differences are wonderful. Firstly, the new keyboard keys: the arrows are better, and you just don’t know how much you miss an escape key until it is not a normal key but a software-only option. And, surprisingly, the really improved speakers make one smile. It’s not that these minor things alone make the MacBook Pro great. In a sense, they are tiny points, but this ongoing refinement and improvement just continues to make this laptop great. I am aware I could be called a fanboy, and I accept that happily. I am. I love well-made Apple products and I love this 8-core i9 MacBook Pro.

Years of constant improvement and attention to detail matter. For example, the consistency of colour from the laptop to the upcoming Apple monitor to even my iPad Pro is something one can almost take for granted. The new 16-inch, 500-nit display is the largest-ever Retina display on a Mac notebook, but the consistent, rich colors, with no need to recalibrate with probes, are just one item on a long list of small but important improvements that make one’s day that bit easier.

https://www.youtube.com/watch?v=ysRigNyavF4

I use my laptop constantly, and when traveling it’s both my workstation and my entertainment system, so I was hoping the new 6-speaker sound system would provide a noticeable improvement. It does, and it works much better than I even hoped. I still use a Rode mic for podcasts, where audio quality on interview calls is key, but the new three-mic system means I no longer travel with a mic for Skype calls and interviews. In short, this is just a great pro production tool if you are constantly on the move and work from wherever you happen to be.

3. Topaz AI Gigapixel

Topaz has been knocking it out of the park with their applications of Machine Learning and AI to common image processing problems. My favorite is their AI Gigapixel, which scales up images. This program simply works. It works better than Photoshop, better than it used to work, and better than it should. If you have ever found old smallish images from earlier in your career, or been sent images that are only medium resolution, and you want to blow them up, then Topaz AI Gigapixel is for you. The program is $99 and there is a free trial version. I don’t know the team behind this program or much about how they developed it, but I use it a lot. It’s brilliant.

The company now makes a range of similar AI tools, such as Mask AI, which I am only just starting to use and which seems a tad less successful or automatic than Gigapixel. One cannot help but feel that while much of this technology is aimed at still images, this type of powerful AI processing has to find its way into serious compositing packages for processing clips soon.

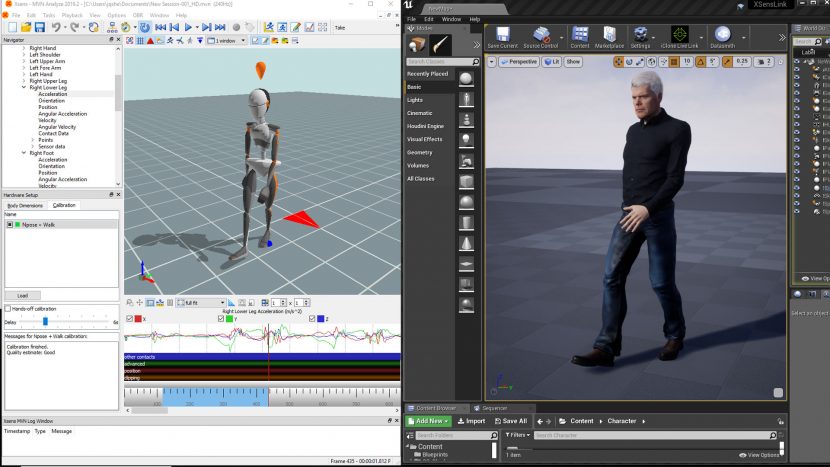

4. Xsens suit

The Xsens suit is not new, but as we have been using it lately, it is worth commenting on just how well-made and well-engineered the Xsens MoCap suit is. It provides clean data and an HD mode. While the suit works reliably live, with the loss of Ikinema from general use (due to their acquisition), being able to have a capture cleaned up and refined with the near-real-time secondary processing is a great advantage. I am no MoCap expert, but I know a lot of them, and when I have done site visits a vast majority use Xsens suits when doing this style of work, away from full capture volumes. The Xsens MoCap suit is not the cheapest, but increasingly I find myself balancing price with ease of working, reliability, and not dying young of stress (the last of which I am a big fan of).

5. ‘Baby Yoda’

What can one say about the brilliance of The Mandalorian and the iconic character of 2019: ‘Baby Yoda’? This show is outstanding. I want to buy a t-shirt emblazoned with “In Favreau we trust“. Jon Favreau has created a TV show that stands alone, and while it is in the Star Wars universe, it feels like a poor cousin neither technically nor narratively. The shows are entertaining and the technology involved is groundbreaking. Plus, Favreau stopped any pre-release of Baby Yoda in the marketing or in the line of merchandise, which alone was a stroke of genius.

This show has everything:

- StageCraft from ILM powered by UE4, resulting in innovative new visual effects in-camera.

- Great stories, drawing on classic films and mythology.

- Star Wars references without being just a collection of greatest hits from the previous films.

- Character arcs played out for both action and humor.

- Oh, and did I mention Baby Yoda: created old school as a puppet!

While I like Disney+ and many of its other great offerings, we would pay for it for The Mandalorian alone.

Baby Yoda is an animatronic creation operated by a team of puppeteers. It’s tactile and alive, charming and familiar. But what I love is that the physical approach of a puppet happily co-exists with the latest in cutting-edge digital VFX. This debate of ‘real vs CG’ is boring and pointless. What Favreau has shown is that both approaches are valid; the audience wants the emotional connection, not a validation of different technologies. The Mandalorian simply uses what is best for what is being shot. In the case of Baby Yoda, it is old school (most of the time, as there are some ILM 3D Baby Yoda shots), but the same team also uses the most innovative on-set real-time UE4 graphics to shoot final pixels in-camera from inside the ultra-high-resolution real-time StageCraft video cube set. The Mandalorian StageCraft sound stage has LED video walls on all sides and above, which are used to provide in-camera effects.

Often the digital locations seen in The Mandalorian are filmed live on the LED walls. These displays offer the correct dynamic parallax as the camera moves, since the LED views are generated in real-time. If green screen is needed, then just a dynamic patch can appear behind the actor, while the rest of the box or cube-like set still produces the correct contact lighting. In LA in June, Favreau commented that “we got a tremendous percentage of shots actually in-camera, just with the real-time renders from the [UE4] engine, that I didn’t think Epic was going to be capable of. For certain types of shots, depending on the focal length and shooting with anamorphic lensing, we could see in camera, the lighting, the interactive light, the layout, the background, the horizon. We didn’t have to mash things together later (in post).”
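The parallax idea can be shown with some simple geometry. The sketch below is a hypothetical illustration and not ILM’s or Epic’s code: it assumes a single flat wall at a fixed depth and finds where a virtual point "behind" the wall must be drawn so that it lines up correctly from the tracked camera’s position. The function name `wall_position` and all coordinates are made up for the example.

```python
def wall_position(camera, point, wall_z):
    """Return the (x, y) spot on a flat wall at depth wall_z where a virtual
    point should be drawn so it appears correct from the camera position.

    Assumptions for illustration: the wall is the plane z = wall_z, and
    camera and point are (x, y, z) tuples in metres.
    """
    cx, cy, cz = camera
    px, py, pz = point
    if pz == cz:
        raise ValueError("point must not share the camera's depth")
    # Fraction of the way along the camera-to-point ray at which it hits the wall.
    t = (wall_z - cz) / (pz - cz)
    return (cx + t * (px - cx), cy + t * (py - cy))


# A virtual rock 10 m away, seen through a wall 5 m from the camera.
rock = (0.0, 2.0, 10.0)
before = wall_position((0.0, 2.0, 0.0), rock, wall_z=5.0)
after = wall_position((2.0, 2.0, 0.0), rock, wall_z=5.0)
print(before, after)
```

When the camera slides 2 m sideways, the rock’s image on the wall moves by only 1 m, and points further away shift by a different amount. That difference between depths is the parallax a static backdrop cannot provide.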
