Here at fxguide we have written about the coming power of NeRFs, and NVIDIA is a leader in this area. Artists often need to sample environments and props to produce digital twins, copies, or digital duplicates. Photogrammetry poses real issues in terms of the number of images required. NVIDIA has just published its work on using video from a phone to produce 3D models using NeRF technology.

Neural surface reconstruction has already been shown to be a powerful way to recover dense 3D surfaces using image-based neural rendering, but many current methods struggle to produce models with sufficiently detailed surface structure.

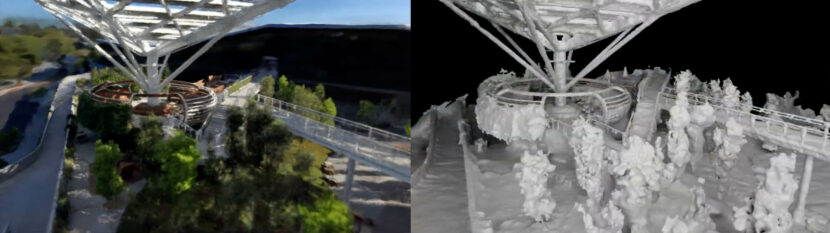

To address this issue, NVIDIA has released Neuralangelo, which combines the power of multi-resolution 3D hash grids with neural surface rendering. Two key ingredients enable this approach. The first is the use of numerical gradients for computing higher-order derivatives as a smoothing operation. The second is a coarse-to-fine optimization on the hash grids, which controls different levels of detail. Even without extra camera inputs such as depth maps, Neuralangelo can effectively produce dense 3D surface structures from a clip with fidelity significantly better than most previous methods. This enables detailed large-scale scene reconstruction from video captures, such as drone footage and handheld phone videos.

As Neuralangelo generates 3D structures with intricate details and textures, VFX professionals can import these 3D objects into their favorite 3D and design applications, and edit them further for production use.

Why not just use Photogrammetry?

Conventional image-based photogrammetry techniques use a ‘volumetric occupancy grid’ to represent the scene being captured. Each voxel is visited and marked as ‘occupied’ if there is tight color constancy between the corresponding projected image pixels from the various original camera views. This photometric consistency assumption typically fails when you are using autoexposure or filming reflective (non-Lambertian) materials, which are of course extremely common in the real world. NeRF 3D reconstruction no longer requires this color constancy constraint across multiple views. By comparison, NeRFs achieve photorealistic results with view-dependent effects, i.e. unlike photogrammetry, they capture the way a surface’s appearance changes with the viewing angle.
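To make the color constancy test concrete, here is a minimal sketch of how a single voxel might be checked. The function name `is_photo_consistent`, the use of per-channel standard deviation, and the threshold of 10 are all illustrative assumptions, not taken from any particular photogrammetry package.

```python
from statistics import pstdev

def is_photo_consistent(samples, threshold=10.0):
    """Toy occupancy test for one voxel (hypothetical helper).

    samples: one (r, g, b) tuple per camera view that sees the voxel,
             i.e. the pixel the voxel projects to in that view.
    Returns True when the colors agree closely across views.
    """
    if len(samples) < 2:
        return False  # one view alone cannot confirm consistency
    # Spread of each color channel across the views.
    spreads = [pstdev(channel) for channel in zip(*samples)]
    return max(spreads) <= threshold

# A matte red surface looks nearly the same from every camera.
matte = [(200, 50, 50), (198, 52, 49), (203, 48, 51)]
# A shiny surface picks up a highlight in one view and a shadow in another.
shiny = [(200, 50, 50), (255, 240, 235), (120, 30, 30)]

print(is_photo_consistent(matte))  # True
print(is_photo_consistent(shiny))  # False
```

The shiny sample is a real surface point, yet the test rejects it, which is the kind of failure on non-Lambertian materials described above.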

How does it work?

One could build a point cloud using multi-view stereo techniques, but this often leads to missing or noisy surfaces, and these techniques still struggle with non-Lambertian materials. NeRFs achieve photorealistic images with view-dependent effects because they use coordinate-based multi-layer perceptrons (MLPs) to represent the scene as an implicit function: an MLP maps 3D spatial locations to color and volume density. Leveraging the inherent continuity of MLPs with neural volume rendering allows optimized surfaces to interpolate between spatial locations, resulting in smooth and complete surface representations. The problem with these MLP neural renderers has been that they don’t scale well. A recent paper, ‘Instant neural graphics primitives with a multiresolution hash encoding’, addressed this. The new scalable representation is referred to as Instant NGP (Neural Graphics Primitives).
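As a rough illustration of the volume rendering step, the sketch below composites color and density samples along one camera ray using the standard NeRF-style quadrature. In a real system the densities and colors would come from the MLP; here they are hand-written lists, and the function name `render_ray` is an assumption made for this example.

```python
import math

def render_ray(densities, colors, step):
    """Composite samples along one ray, front to back.

    densities: volume density at each sample (would come from the MLP)
    colors:    (r, g, b) at each sample (would come from the MLP)
    step:      distance between neighboring samples
    Returns the pixel color and the light that passes through unblocked.
    """
    transmittance = 1.0          # fraction of light not yet absorbed
    pixel = [0.0, 0.0, 0.0]
    for sigma, color in zip(densities, colors):
        alpha = 1.0 - math.exp(-sigma * step)   # opacity of this segment
        weight = transmittance * alpha          # contribution to the pixel
        pixel = [p + weight * c for p, c in zip(pixel, color)]
        transmittance *= 1.0 - alpha
    return pixel, transmittance

# Empty space, then a dense red surface, then green hidden behind it.
pixel, leftover = render_ray(
    densities=[0.0, 100.0, 100.0],
    colors=[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    step=1.0,
)
print(pixel, leftover)
```

Because every operation here is smooth in the densities and colors, the whole render can be optimized against the input images, which is what lets the surface settle between sample locations.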

Instant NGP introduces a hybrid 3D grid structure with a multi-resolution hash encoding and a lightweight MLP that scales. The hybrid representation greatly increases the power of neural fields and has achieved great success at representing very fine-grained details for objects. In its new work, NVIDIA offers Neuralangelo as a high-fidelity surface reconstruction method built on this technology. Neuralangelo adopts Instant NGP as a neural rendering representation of the 3D scene, optimized to work from multiple different views via neural surface rendering.
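The multi-resolution hash encoding can be sketched in a few lines. The version below shows only the lookup side: how grid resolutions grow geometrically from coarse to fine, and how an integer grid corner is hashed into a fixed-size table. The hashing primes are the ones given in the Instant NGP paper; the function names, the choice of 4 levels, and the table size are assumptions for illustration, and the sketch assumes points in the unit cube.

```python
import math

# Hashing primes from the Instant NGP paper (the first is 1 by design).
PRIMES = (1, 2654435761, 805459861)

def level_resolutions(n_min, n_max, num_levels):
    """Grid resolution per level, growing geometrically from n_min to n_max."""
    growth = math.exp((math.log(n_max) - math.log(n_min)) / (num_levels - 1))
    return [math.floor(n_min * growth ** level) for level in range(num_levels)]

def hash_index(ix, iy, iz, table_size):
    """Map non-negative integer grid coordinates to a slot in the table."""
    h = (ix * PRIMES[0]) ^ (iy * PRIMES[1]) ^ (iz * PRIMES[2])
    return h % table_size

def corner_index(point, resolution, table_size):
    """Slot of the lower grid corner of the cell containing `point`."""
    ix, iy, iz = (math.floor(c * resolution) for c in point)
    return hash_index(ix, iy, iz, table_size)

resolutions = level_resolutions(n_min=16, n_max=128, num_levels=4)
table_size = 2 ** 14  # illustrative; each level gets its own table
point = (0.3, 0.6, 0.9)
for res in resolutions:
    print(res, corner_index(point, res, table_size))
```

In the full method each slot stores a small learned feature vector, the features of the eight surrounding corners are interpolated, and the results from all levels are concatenated and fed to the lightweight MLP. Coarse levels capture overall shape while fine levels, where hash collisions are more frequent, capture detail.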

Neuralangelo reconstructs the scene from multi-view images. It samples 3D locations along the camera view directions for frames of a video clip and uses a multi-resolution hash encoding to encode the positions. The process is simple yet effective: by using numerical gradients for higher-order derivatives together with a coarse-to-fine optimization strategy, Neuralangelo unlocks the power of multi-resolution hash encoding for neural surface reconstruction.
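To show why a numerical gradient acts as a smoothing operation, here is a toy sketch using central differences on a hand-made scalar field: a flat ramp with a small high-frequency ripple on top. The field, the function names, and the two step sizes are assumptions chosen for illustration; this is not Neuralangelo's code, only the general finite-difference idea.

```python
import math

def rippled_field(p):
    """Toy scalar field: a unit-slope ramp along x plus a fine ripple."""
    x = p[0]
    return x + 0.01 * math.sin(200.0 * x)

def numerical_gradient(field, p, eps):
    """Central-difference gradient of `field` at point p with step eps."""
    grad = []
    for axis in range(3):
        plus = list(p)
        minus = list(p)
        plus[axis] += eps
        minus[axis] -= eps
        grad.append((field(plus) - field(minus)) / (2.0 * eps))
    return grad

origin = (0.0, 0.0, 0.0)
coarse = numerical_gradient(rippled_field, origin, eps=0.1)    # large step
fine = numerical_gradient(rippled_field, origin, eps=0.0001)   # small step
print(coarse)
print(fine)
```

With the large step, the estimate along x stays close to the underlying slope of 1 because the ripple averages out across the wider neighborhood. With the small step, it approaches the local slope of about 3 that the ripple produces at the origin. Starting with a large step and shrinking it over training is one way to picture a coarse-to-fine schedule: the overall shape is settled first, then fine detail is allowed in.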

Neuralangelo effectively recovers dense scene information of both object-centric captures and large-scale indoor/outdoor scenes with extremely high detail, enabling detailed large-scale scene reconstruction from a normal video.

Neuralangelo can translate objects with complex real-world textures and complex materials, such as roof shingles, panes of glass, and smooth shiny marble. The method’s high-fidelity output makes its 3D reconstructions much more useful. “The 3D reconstruction capabilities Neuralangelo offers will be a huge benefit to creators, helping them recreate the real world in the digital world,” said Ming-Yu Liu, senior director of research and co-author of the paper. “This tool will eventually enable developers to import detailed objects ranging from small statues to massive buildings for use in virtual environments, set reference reconstruction and games or digital twins.”