We were recently asked (several times, actually) where AI and Machine Learning (ML) will have an impact next. It is a reasonable question, since ChatGPT and Stable Diffusion have had such a dramatic impact. It would be possible to focus just on how these two technologies alone will change our workflows and jobs, but there is another revolution happening with machine learning in volumetric capture, or rather in capturing experiences for anyone to view dynamically on standard mobile devices. Welcome to the new world of professional ‘experiencials’ and NeRFs.

AI background

AI, or rather machine learning, has opened the door to remarkable new possibilities. NeRFs, at any other time, would dominate discussions about ML, but they are being partly overlooked due to the attention given to conversational AI and inferred imagery. A neural radiance field, or NeRF, is a fully-connected neural network for generating new views of complex 3D scenes, based on just a partial set of 2D images (see our earlier GDC story). NeRF takes input images representing a scene and interpolates between them to render a complete scene. As one expert commented to us, “NeRFs have really switched from how we used to think about modeling the world, let’s say a decade ago or even four years ago, and how we think about it in the world of radiance fields.”

We asked Google’s Bard AI to explain NeRFs:

“NeRF, or Neural Radiance Field, is a type of 3D representation that can be created from a collection of images. NeRFs are able to capture the geometry and appearance of a scene in a way that is more detailed and realistic than traditional 3D models. NeRFs are created using a neural network that is trained on a set of images of a scene. The neural network learns to associate each pixel in an image with a point in 3D space. This information is then used to create a 3D representation of the scene.

NeRFs have a number of advantages over traditional 3D models.

• First, they are able to capture more detail. This is because NeRFs are able to use information from multiple images to create a 3D model.

• Second, NeRFs are able to capture the appearance of a scene more realistically. This is because NeRFs are able to use information about the color and texture of objects in a scene to create a more realistic 3D model.”

Bard does a good job of summarizing. NeRFs are not photogrammetry; in the right context, they are far more powerful. NeRF is not the only major innovation in ML-based volumetric capture, but it is the stellar example. It produces a 5D function (a 3D position plus a 2D viewing direction) representing the radiance emitted in all directions. Because a NeRF output can show you the image with plausible light properties from a new viewing angle, it can ‘sort-of’ be considered a fast ML approximation of a light field. A light field can be a more accurate vector function that describes the amount of light flowing in every direction at every point in space. The space of all possible light rays is given by the five-dimensional plenoptic function, and the magnitude of each ray is given by its radiance. This allows light fields to be used to render realistic lighting as one moves through a volume, but they are anything but easy or quick to work with.

By contrast to both photogrammetry and plenoptic light field functions, NeRF can be used to render the scene from any viewpoint, even if the viewpoint is not covered by any of the training images. NeRFs are getting vastly faster and more accurate at an unbelievable rate. Volume rendering enables users to create a 2D image of a sampled scene or discretely sampled 3D dataset. For a given camera position, volume rendering algorithms provide the RGBα (Red, Green, Blue, and Alpha channels) for every voxel in the space through which rays from the camera are cast. With modern GPUs, this is now happening with incredible speed.
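To make the volume rendering step a little more concrete, here is a minimal sketch in plain Python of how samples along a single camera ray can be composited into one RGBα pixel. The `toy_radiance_field` function is a hypothetical stand-in for a trained NeRF (a solid sphere whose brightness depends on viewing direction), and the names `render_ray`, `near`, `far`, and `n_samples` are our own illustrative assumptions, not part of any particular NeRF library.

```python
import math


def toy_radiance_field(x, y, z, theta, phi):
    """Hypothetical stand-in for a trained NeRF.

    Takes a 5D input (3D position plus 2D viewing direction) and returns
    (r, g, b, sigma), where sigma is the volume density at that point.
    """
    inside = x * x + y * y + z * z <= 1.0   # a solid unit sphere at the origin
    sigma = 5.0 if inside else 0.0          # empty space has zero density
    shade = 0.5 + 0.5 * math.cos(theta)     # colour varies with view direction
    return (shade, 0.2, 0.2, sigma)


def render_ray(origin, direction, near=0.0, far=4.0, n_samples=64):
    """Composite evenly spaced samples along one ray into an (r, g, b, alpha) pixel."""
    dx, dy, dz = direction
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    dx, dy, dz = dx / norm, dy / norm, dz / norm

    # Viewing direction expressed as two angles (the "2D" part of the 5D input).
    theta = math.acos(max(-1.0, min(1.0, dz)))
    phi = math.atan2(dy, dx)

    delta = (far - near) / n_samples   # distance between neighbouring samples
    transmittance = 1.0                # fraction of light not yet absorbed
    rgb = [0.0, 0.0, 0.0]

    for i in range(n_samples):
        t = near + (i + 0.5) * delta   # sample at the midpoint of each interval
        px = origin[0] + t * dx
        py = origin[1] + t * dy
        pz = origin[2] + t * dz
        r, g, b, sigma = toy_radiance_field(px, py, pz, theta, phi)

        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this short segment
        weight = transmittance * alpha           # contribution to the pixel
        rgb[0] += weight * r
        rgb[1] += weight * g
        rgb[2] += weight * b
        transmittance *= 1.0 - alpha             # light remaining behind it

    return (rgb[0], rgb[1], rgb[2], 1.0 - transmittance)


hit = render_ray(origin=(0.0, 0.0, -2.0), direction=(0.0, 0.0, 1.0))
miss = render_ray(origin=(3.0, 0.0, -2.0), direction=(0.0, 0.0, 1.0))
```

A ray that passes through the sphere comes back almost fully opaque, while one that misses it returns a fully transparent pixel. In a real NeRF the toy function would be replaced by the trained network, and this loop would run in parallel on the GPU for every pixel in the image.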

What matters is not only the ML or the maths involved in volumetric rendering but how this will find its way into our professional working lives. With many new technologies, their demonstration at conception can seem incredible, but one can’t immediately see how they will structurally change our professional workflow.

Personal Capture: Luma AI

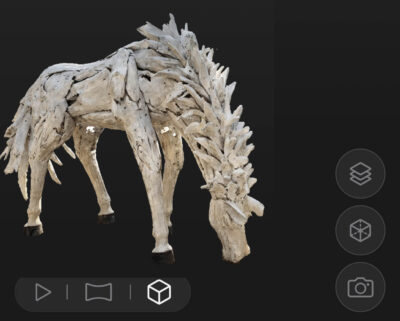

Programs such as Luma AI already let you create your own NeRF files and then export them to a variety of formats, simply and effectively. fxguide created this NeRF of a horse statue in Korea by walking around it with an iPhone using a Beta version of the software. The video below is not a live-action filmed camera move, it is a NeRF camera move around the captured volume. The Luma App processes in the cloud and produces visualizations, video, and data exports such as an object mesh (GLTF or OBJ), a point cloud (PLY), or scene mesh (PLY).

Luma AI works brilliantly, but only on static scenes; it is not designed to capture motion. Future NeRF solutions and applications need not only to capture a scene but also to capture motion and, ultimately, to allow re-animation or the driving of new expressive narratives. One company at the forefront of that research is Synthesia. “The big trick is going beyond some kind of static appearance and static light interaction to the dynamics of the scene,” explained CTO Jonathan Starck.

Professional capture

Synthesia’s aim is to make video easy for anyone. “You should be able to go directly from an idea to a beautiful video using text as a universal input, making it as easy as writing an email,” commented Jonathan. “This would make video a part of everyone’s toolbox.” To that end, Synthesia has a large, engaged 40-person research team in London, focused on two central pillars: “unlocking richer content by replacing physical cameras and enabling creation from idea to video.”

Representing human performance in high fidelity is essential to VFX, gaming, and even advanced videoconferencing. This week the research team at Synthesia released HumanRF: High-Fidelity Neural Radiance Fields for Humans in Motion.

HumanRF is a 4D dynamic neural scene representation that captures full-body appearance in motion from multiple video inputs and enables playback from any unseen viewpoint. Synthesia already has a major SaaS business in producing inferred photoreal digital humans from a viewpoint-specific deferred neural renderer. Their digital humans face the camera and can deliver any dialogue you provide based on just text or an audio clip. With HumanRF, the team now moves towards digital humans that can be viewed delivering performances from any angle.

It is a major undertaking to produce “photo-real physically plausible dynamics for the whole body, not just the face,” comments Jonathan. “It’s the hair, clothing, hands, etc. and it all has to look real, it all has to be ‘production quality’.” Their target is high-fidelity playback with an implicit representation. The team does not want to be bound by modeling of explicit surfaces for humans: “explicit surfaces are always going to be an approximation that breaks down,” he adds. “We are building representations using NeRFs. It’s the task of the network to ‘learn’ what is important to reproduce high-quality input images, rather than requiring an explicit representation of the scene modeled by a person.” The HumanRF method doesn’t use traditional 3D; it is template-free. It doesn’t use a prior representation such as SMPL (a body-under-clothing model). “We need to be free to represent diverse clothing/hair/dynamics for people.” HumanRF is essentially a very high-quality 3D ‘video’ codec. They do this by having the NeRF effectively ‘model’ the human in motion using a neural network. “We encode temporal segments in a neat way,” he explains. “We expand tensor decomposition of neural volumes to temporal window of frames. It’s a 4D decomposition with continuity across temporal blocks.”

The team behind it includes the brilliant Matthias Nießner, whose work focuses on 3D reconstruction. He is well known for his work on facial reenactment. Along with colleagues, he developed Face2Face, which was the first work to manipulate facial expressions from consumer cameras in real-time and then became the foundation of Synthesia (see our 2018 story). Also an author of the new paper is Jonathan Starck, whom fxguide readers may know from his time as CTO of The Foundry in the UK. The research is led by Mustafa Işık, a Research Engineer at Synthesia Lab who was previously at Adobe Research, with Martin Rünz, Synthesia Research Scientist, along with Markos Georgopoulos, Taras Khakhulin, and Lourdes Agapito completing the research team.

Their new research acts as a dynamic video encoder that captures fine details at high compression rates. They do this through a series of innovative steps that factorize space-time into a temporal matrix-vector decomposition. This allows them to obtain smooth (temporally coherent) reconstructions of actors for long sequences while representing high-resolution details even with incredibly challenging complex motion.
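To give a feel for why factorizing space-time helps with compression, here is a deliberately simplified sketch in plain Python. It is not HumanRF’s actual architecture, which the paper describes in detail; it only illustrates the general idea of replacing one dense 4D grid with a handful of spatial grids, each paired with a short temporal vector. All function names, the grid sizes, and the `rank` parameter are our own illustrative assumptions.

```python
def dense_param_count(frames, res, channels):
    """Values needed to store a separate feature grid for every frame."""
    return frames * res ** 3 * channels


def factorized_param_count(frames, res, channels, rank):
    """Values needed for `rank` spatial grids, each paired with one temporal vector."""
    return rank * (res ** 3 * channels + frames)


def reconstruct(spatial, temporal, voxel, frame):
    """Rebuild one space-time feature as a sum of (spatial value * temporal weight).

    spatial[r][voxel] is component r's value at a (flattened) voxel index,
    temporal[r][frame] is that component's weight at a given frame.
    """
    return sum(s[voxel] * t[frame] for s, t in zip(spatial, temporal))


# Storage comparison for a hypothetical 100-frame segment on a 64^3 grid
# with 8 feature channels per voxel, using 4 components.
dense = dense_param_count(frames=100, res=64, channels=8)
compact = factorized_param_count(frames=100, res=64, channels=8, rank=4)

# Tiny worked example: 2 components, 2 voxels, 3 frames.
spatial = [[1.0, 2.0], [0.5, 0.0]]
temporal = [[1.0, 0.0, 2.0], [0.0, 4.0, 1.0]]
feature = reconstruct(spatial, temporal, voxel=0, frame=2)  # 1.0*2.0 + 0.5*1.0
```

With these made-up sizes the dense grid needs roughly 25 times more stored values than the factorized form. Because each temporal vector is shared across the whole spatial grid, changes over time are tied together across the volume rather than stored independently per frame.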

To produce the HumanRF work, the Synthesia team worked in collaboration with Lee Perry-Smith and the team at InfiniteRealities, who have themselves been designing and building photometric and motion scanning systems since 2009. Together they captured 16 actor sequences as 12MP footage using 160 cameras and 400+ multi-flash LEDs, with high-fidelity, per-frame mesh reconstructions.

This week the team released not only the HumanRF paper but also ActorsHQ, a data set of 16 sequences of 8 people captured at 12 megapixels. The data set is open-sourced to the academic community. “This is a one-of-a-kind dataset that we hope will bootstrap research into high-quality representations that can one day be used in production,” Jonathan explained. “We are now building our own multi-camera studio with Esper and IOI to capture high-fidelity neural actors.” The new studio at Synthesia will up the resolution with new 24-megapixel cameras. It will be used not only to capture more performances but also to generate the high-quality ML training data that will allow Synthesia to move to the ultimate goal: being able to direct and drive HumanRF-level digital humans while viewing them from any angle.

For a single person in a studio, one can record a lot of data to get to a plausible single performer that can do a range of actions and dynamics. But as Jonathan explains, “if you want to generalize that, for example, so we can create your 3D avatar just from an image or a short video sequence or monocular data, then that needs large data sets, because that needs a full generalized model.”

It is worth pointing out that most prior approaches and data sets have been at resolutions of 4MP or lower, and often with quite restricted clothing or ranges of motion. The ActorsHQ set represents diversity in not only the actors but also their range of clothing types, hairstyles, and body/muscle activations. “We are releasing the dataset and running a workshop at CVPR this year, to officially open the data set to the academic community,” Jonathan comments, with the objective of promoting “high-quality view synthesis and high-quality novel pose synthesis”.

INSTA: Instant Volumetric Head Avatars

One might be of the opinion that dynamic NeRFs are only for high-end applications involving 120 cameras, and thus a million miles away from what most individuals can do. However, also released recently is INSTA, by a separate team of respected colleagues at the Max Planck Institute for Intelligent Systems in Germany. INSTA lets you almost instantly create or reconstruct an animatable NeRF of your head as a personal avatar, in just a few minutes.

This work is led by Wojciech Zielonka, with Timo Bolkart and Justus Thies as co-authors. We have been reporting on Justus Thies’s remarkable research work since 2015, when he was a second-year PhD student. He is now a Max Planck Research Group Leader focusing on AI-based motion capture and synthesis of digital humans. The new work seems to mimic the motions and facial expressions of people who might be in a video conference meeting.

To make these avatars, instead of using complex rigs and hundreds of cameras, INSTA reconstructs the subject’s avatar within a few minutes (~10 min), and the avatar can then be used in real-time (interactive frame rates). This technology uses only commodity hardware to capture and train the avatar: just a single RGB camera to record the input video. Other state-of-the-art methods that use similar input data to reconstruct a human avatar can take a relatively long time to train, ranging from around one day to almost a week. The system can make an avatar with a DSLR or even from a YouTube video.

Again, the system can allow you to see around the side of the face if the head turns, even if the source actor is filmed from the front, but most importantly the resulting face can be driven in real-time.

So where is it going?

Above is all fact from peer-reviewed professional or academic sources, but to answer our original question about where this is going, we need to speculate. In other words, what follows is not based on insider knowledge, NDA violations, or leaks: it is just speculation.

Why the Metaverse sucks as a model & what APPLE could do?

What could a customer experience company like APPLE do with NeRFs or some version of it?

Apple was recently rumored to have delayed its much-rumored Apple glasses. VR headsets, while improving, are hardly mainstream media and entertainment (M&E) devices. Outside some gaming and some corporate applications, VR headsets are just not popular. Nor, we would argue, is the popular characterization of a ‘Metaverse’. The hype has been loud and, frankly, the idea that one would put on a VR headset and play poker with a bunch of mates regularly is laughable. Perhaps once as a gimmick, but not as a regular broad entertainment template.

People often extrapolate technology and assume a new version of some current practice. So the Internet was going to be great for companies to publish their digital brochures, when in reality the amazing success of Facebook and YouTube came from providing a platform for us to post our own things. People predicted home computers would make our lives paperless, but instead they led to an explosion of laser printers. The history of tech is piled high with such poor extrapolations, while the reality is that it is new uses that drive killer tech, not old applications done a little bit better. The iPhone exploded in popularity not because it provided an amazing new phone call experience; it provided mobile internet access and the ability to make specialist mobile computing apps. You could even argue it was the addition of a computational photography camera for ‘non-phone’ uses that led to much of the iPhone’s success.

All of which is a long way of saying that volumetric ML solutions may be useful for some existing processes, but they will explode when used in new ways. VR headsets are expensive, inconvenient, and isolating. VR or XR may use this new tech, but that is not going to change much. People aren’t going to live in the VR worlds depicted in Ready Player One (as much as we love the book & the film). While Apple is rumored to be stopping the R&D on display glasses, it is not rumored to be halting work on a capture headset. In fact, it allegedly has multiple versions of a capture rig and years of R&D into productizing it into Apple’s ecosystem.

Apple Multi-capture Capture Thingy

What would make sense for Apple is to produce a powerful multi-sensor head rig for capturing reality and allowing users to edit this volumetrically, not for a VR or XR set of glasses but for everyday iPhone and iPad users. The latest FCP video software works great on an iPad. The latest MacBook Pro laptops can handle 10 streams of ProRes 422 video at 8192×4320 (8K) resolution and 30 frames per second (!). And that is only on an Apple laptop, which raises the question: what could we possibly need to do on the next generation of a desktop Mac Pro that would justify its purchase? Answer: volumetric editing.

Experiencials

With the democratization of video production, there is virtually no longer such a thing as a corporate video market, but there could be a huge new Pro volumetric market for ‘experiencials’. This would exist to provide experiences that are dynamic and not linear video. The end user would not hide in their own VR rig, but just view the narrative on their mobile devices. If someone is standing in the way of your view of the speaker, either drag to a new position or just move your actual iPhone and the virtual camera will pan to one side. This makes perfect sense for an explosion of new content. People already have iPhones and iPads, so making new content for them is a much better model than trying to sell VR goggles or headgear.

So many people use a second screen while watching something on their giant flat-screen TVs. Imagine you are watching the final of your favorite sport, but the focus is on the other end of the pitch: just swing around and you can have a look at the other end of the field yourself. Imagine your favorite concert: why not watch it from any angle? But most importantly, imagine being able to produce an experiencial of how to make or fix something, a YouTube NeRF experiencial. Now the end user can zoom in or view from any angle to see what you are explaining. While any possible Apple head capture rig could not initially solve these particular problems, just in retail alone, volumetric customer experiences, even static ones, would be a huge market.

The key is not selling loads of new headgear for the wider population. The general population want convenience, and they already have a mobile device. The key is building rich data-gathering and volumetric editing tools that allow professionals and semi-professionals to invest in gear and processes that allow them to make these new experiencials for anyone to consume.

Whether it turns out that Apple will launch something like this at WWDC next month or not, the concept, or something like it, is a compelling ‘killer app’. If it is launched, then the key is to see past this as a way of just making a movie but with some new features and instead understand that it will most likely be a dramatic new application altogether that drives this next wave of technology.