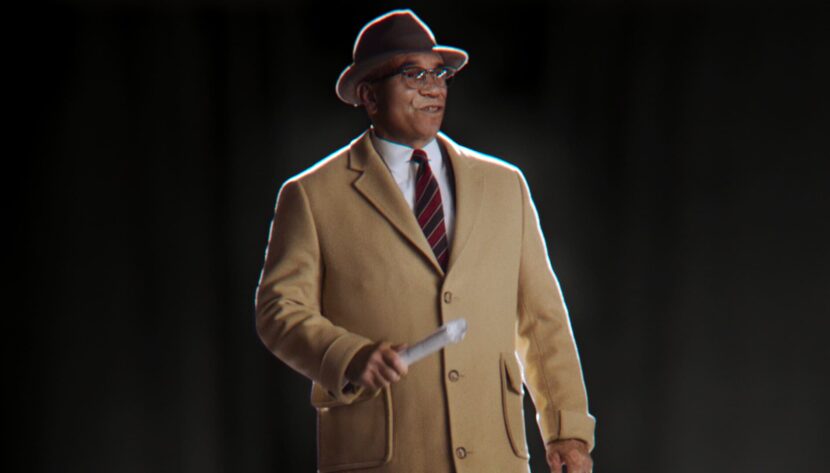

Before the start of this year’s Super Bowl LV, over 100 million fans around the world were treated to a memorable surprise: the digital return of NFL legend Vince Lombardi, with a message of hope and unity. To bring the digital human double to life, the NFL and global creative agency 72andSunny turned to Oscar-winning VFX and digital human pioneer, Digital Domain.

Dan Bartolucci was the visual effects supervisor and Kevin Lau is Digital Domain’s Executive creative director of Advertising, Games, and New Media. The special spot was engineered using Digital Domain’s Charlatan Machine Learning process and completed on Autodesk’s Flame by the skilled DD artists.

Background

The project began with an idea from the NFL and 72andSunny to feature a unifying figure from the past who could potentially remind us that we are stronger together than apart. With the blessing of Lombardi’s estate, the NFL worked alongside Digital Domain to build an adaptive, high-fidelity digital Lombardi that could be used in a broadcast spot, and in the stadium as a life-size hologram. They utilized hours of historical and practical footage directed by Max Malkin of Prettybird, as well as audio provided by NFL Films, to resurrect Coach Lombardi.

“Coach Lombardi has inspired generations for years, making him a perfect embodiment of what happens when we come together,” said Zach Hilder, Group Creative Director at 72andSunny Los Angeles. “Digital Domain’s team immediately got that, and had the technical prowess to pull it off, turning it from an idea to a true representation of Coach Lombardi that not only looks like him, but honors his legacy as well.”

The Charlatan Process

The process involved innovation and artistry, and while it used advanced ML, it was far from a fully automated process. There was no traditional 3D modeling or facial computer graphics, only inferred machine learning and skilled compositing. Great skill was required in preparing the source training data, given that the NFL coach died in 1970. As was curating the Charlatan process and then using and compositing its output together with new body double footage in Flame.

The training clips and film were less than ideal as they were low resolution, grainy, and often had temporal flickering. The nature of machine learning is that one needs not only the right angles on the face but the right emotional expressions to inform the ML inferences. The team not only raided the vast NFL library of footage but material from an HBO documentary on Lombardi (2010) and even the rushes and outtakes from those sources.

One key area of this new approach to digital human doubles is the casting of the base actor. This is a complex process as, ironically, it is not a matter of finding an actor who notionally looks like the late Lombardi. Digital Domain was centrally involved in the process. Lau explained that Dan Bartolucci was very focused on proportions as opposed to the overall look of the actors during casting. “There were people that came in that looked like him when they walked into the casting, but the ‘boardwalk version’ of him,” he explained. But in reality, DD needed not a caricature of Lombardi but someone with the same facial proportions. “If we would’ve used the actors that reminded you of Lombardi, it would have been a disaster, but Dan was focused on the overall proportions, the neckline, the jawline, where his hat sat, and stuff like that,” says Lau. Bartolucci ended up recommending somebody that didn’t immediately look like a Vince Lombardi but ended up being the best foundation for the inferred face replacement.

After receiving live-action plates of an actor cast for his physical proportions of the coach, Digital Domain turned to the difficult task of recreating a legendary face that not only looked correct but would articulate believable high fidelity facial subtly and emotions.

First, a range of curated Lombardi reference clips were pre-processed to address some of the grain, instability, and temporal flicker artifacts. Charlatan was then trained on this footage and also the target face which was providing the underlying final performance. The output of Charlatan was then handed to matte painter and key DD artist Rob Olsson. It was his job to use the Charlatan inferred output as a base and then add additional skin detail that was perhaps not captured by the original old film footage. Olsson then output his frames and poses to guide the compositors as they merged the Charlatan output, Olsson’s fine detail overpaints, and the source footage all into one seamless and consistent clip.

Working with decades-old 2D images and video reference material while working remotely and under a five-week deadline, Digital Domain delivered a high-definition version of Lombardi. Adding detail, from wrinkles to eyebrow hair, the team of flame artists utilized DD’s proprietary face-swap technology Charlatan, but it was still very much an exercise in visual artistry and high-level flame compositing. Patches of skin texture were tracked, facial folds and wrinkles sculpted, all informed but not completed by the advanced machine learning (ML).

Charlatan was created as an internal R&D project, which is part of a much large digital human research undertaking at DD. fxguide has documented their prior Digital Doug work previously. The ML tool helps to blend detailed facial behavior and movement with new live-action footage, leveraging machine learning to seamlessly merge the two. The first fully commercial project that Charlatan was used on was a David Beckham’s aging spot titled Malaria Must Die – So Millions Can Live short, produced by RSA Films Amsterdam. In that transformation, DD used performance clips of both Beckham and an older stand-in to produce the old aged Beckham who delivers the PSA speech.

For this project, Charlatan adapted the digital Lombardi’s face with the physical performance, blending the assets to help the CG face match the actor’s. The result was a realistic and immediately identifiable recreation of Lombardi, delivering an inspirational message for modern audiences.

Interestingly one of the problems digital artists can suffer from when recreating digital humans of real people is a subconscious normalization of the digital face. It is not uncommon in the normal world for family and friends of someone who has had either a facial injury or facelift, to re-normalize the new face and in effect fail to now ‘see’ the facial change. It is not that it is invisible, but just that any such aspect becomes so accepted that it moves into the background of awareness. For digital artists, this means that a face that is technically ‘wrong’ starts to seem normal or correct after hours of examining it. This is not the failing of the artist but rather just how humans process faces. Bartolucci describes this phenomenon as ‘Artist’s Stockholm Syndrome’. This accounts for how some digital faces get trapped in an ‘uncanny ditch’ that the immediate artists cannot articulate. The use of Charlatan provided a non-biased or unaffected data-driven statistical reference of how Lombardi should look at any particular angle and lighting condition, “While we were compositing, we basically always had a reference of what Charlatan produced. It provided it’s a check for us, …we could look at the render and see ‘nope we still have more work to do,” recalls Bartolucci.

For the final frames, some parts of the underlying actor were directly used. “From the beginning, I always knew that we needed to use our actor’s eyes and our actor’s teeth,” commented Bartolucci. “With all the movement that we were going to be doing and how expressive it was going to be, especially with the eye lines – I knew that there would be too many intricacies and we just wouldn’t be able to handle them all in the time we had”. The actor’s eyes were used directly but the actor wore contact lens and the comp team additionally darkened them. The actor also “had prosthetic teeth made to match Vince’s so that all of his dialogue worked correctly,” added Bartolucci.

Peppers Ghost

The Vince Lombardi Trophy is given to the winning team at the end of every Super Bowl and was named in honor of the Green Bay Packers coach. In the actual Raymond James Stadium in Tampa, Florida for the Super Bowl, a Pepper’s Ghost version of Lombardi was displayed, giving the illusion of a life-sized Lombardi standing in the corner of the stadium. With a message of unity, the spot finished with this ‘live’ delivery on the COVID-19 pandemic, America’s struggle against racism, and the other challenges the USA faced in 2020. The actual clip was pre-rendered but seen live in the stadium before the game.

The Pepper’s Ghost portion of the spot had a traditional Jumbotron video backup, but it was always the aim of 72andSunny creative team that the digital Lombardi could ‘enter the stadium’. On the live feed of the Super Bowl coverage, this can be seen at the end of the piece. The Pepper’s Ghost footage was live and the cutaways to the various reactions of fans and invited first responders were controlled by the Super Bowl’s primary live broadcast Director.

“The intent was always to try and create a very heartfelt, warm, and emotional, traditional component, and then have the live Pepper’s Ghost, to bring that honor to him in the stadium,” commented Lau.

Vince Lombardi had one more pregame speech left in him. #SuperBowl https://t.co/wD98u9sUFA

— Patrick Schmidt (@PatrickASchmidt) February 7, 2021

“Charlatan can do things with digital humans with incredible accuracy and fidelity that wasn’t possible before, which is exciting, but also carries a responsibility,” said John Fragomeni, Global VFX president of Digital Domain. “There’s a real cultural investment in Coach Lombardi and everything he stood for. We wanted to do justice to this, so when the audience looked at his face, they felt like he was still with us, leading us on. That moment was our goal.”

Respeecher

To re-create Lombardi’s voice, the creative team turned to digital voice cloning experts Respeecher, to create a realistic and adaptive version of Lombardi’s voice. Respeecher went through hours of existing audio footage, isolating Lombardi’s speech patterns and vocal tenor, creating a digital database. The old recordings were then combined with a voiceover from a speaker with a similar accent and cadence to Lombardi, resulting in a natural performance that feels like it came from the coach.

https://www.youtube.com/watch?v=v_yow_EGbLo&feature=youtu.be

Respeecher most recently did the de-aging of Luke Skywalker’s voice in the final episode of The Mandalorian. We spoke to the Respeecher team about the unique challenges of reproducing the voice of Vince Lombardi.

FXG : When did Respeecher get involved?

We received the first batch of recordings at the very beginning of January. Time pressure was significant, as with the lack of good recordings we knew we will have to apply multiple techniques including those that are in the R&D to be able to provide the result in time. Our models typically need three weeks to train from scratch.

FXG : How much training material did you need?

We needed 60 minutes of clean and sufficiently emotional training material. Unfortunately, we ended up being quite short on data for Vince, so we had to compromise at many places.

FXG : Clearly any training material was recorded years ago.. was there post-or pre-processing needed to the material prior to your normal ML approaches?

One of the main pre-processing steps that we took was hand-picking the cleanest and the most emotional phrases from the data available.

FXG : When someone is listening to the spot… what is real and what is inferred?

We don’t really know that because the final mixing was done on the client-side. However, we do know that there are definitely varying degrees of mixing of the source and the target voices at different times. The cadence is 100% taken from the source actor though (which is what we usually do). Trying to modify the cadence of the source means giving up some control over the output speech, so usually, we try to avoid that.

FXG: How long did the final voice take to train, and how quick was it to generate the actual final audio?

We trained multiple variations of models throughout the project to try to address the shortage of data, so it’s hard to say exactly how long the overall training took. If the data is good though (in which case we train only one model), the training takes about 2-3 weeks. The generation is quite quick – it takes a couple of hours usually, depending on the number of different takes that need to be rendered.

FXG : What is your companies main focus?

Our main focus is on projects in film, animation, and video games, where the technology could provide a unique creative experience and/or cut costs and delivery time. We are currently working on a few documentaries (resurrection projects), v-tubers, film restoration, and censoring, video-games projects where we help with storyboards, voice-over, and cutting time-to-market for dubbing new releases, with dubbing studios, including major ones. Also, we are launching a Voice Marketplace, that enables smaller creators and sound professionals to speak in multiple generic voices.

FXG : What advice would you have for producers wanting to use this technology?

We would say, put as much effort as possible into collecting good target speech material. We don’t require much, just 60 minutes, and sometimes we have to work with less data. But having good clean speech recordings is a keystone for a successful project. Also, Respeecher requires permission and consent from an owner of the voice we recreate.